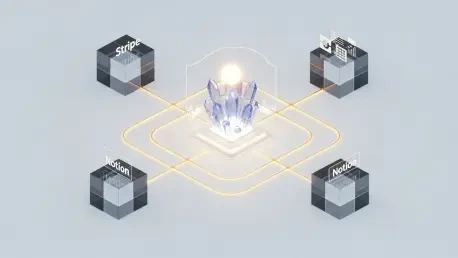

The digital gold rush of the current decade has shifted the primary focus of cybercriminals from individual databases to the very plumbing that connects modern artificial intelligence to corporate data. When Braintrust, a prominent AI observability platform, disclosed a breach of its cloud environment, the ripple effect touched industry giants like Stripe and Notion. This single event signaled a shift in the threat landscape, in which the infrastructure managing API keys has become one of the most lucrative targets for sophisticated actors. This analysis explores how the centralization of these “keys to the kingdom” creates systemic risks across the global AI supply chain.

The Shift Toward AI Infrastructure Targeting

The Growing Statistical Risk: AI Supply Chains

Recent cybersecurity data reveals a troubling surge in unauthorized API usage, with credential leaks within cloud-based AI workflows rising at an alarming rate. Rather than attacking the front door of a single enterprise, attackers increasingly target SaaS platforms that aggregate thousands of API keys. This “middleman” strategy allows a single successful breach to yield credentials from many high-value targets at once.

Furthermore, the proliferation of “shadow AI,” in which employees use unsanctioned tools, leaves organizations with large blind spots in their secret management. Without that visibility, it is nearly impossible to track where organization-level secrets are stored or how they are being accessed. Consequently, the likelihood of a credential-based breach has become a primary concern for chief information security officers globally.

Real-World Implications: The Braintrust Incident and Beyond

The intrusion into Braintrust’s AWS accounts forced immediate action across its client roster, highlighting the fragile nature of shared security models. Beyond the initial risk of data exposure, stolen keys are frequently repurposed for “computational resource hijacking.” In these scenarios, malicious actors use pilfered credentials to run massive, unauthorized model training or inference jobs that are billed to the victim, leaving the company with an astronomical cloud bill and degraded service performance.

In several documented cases, businesses observed unusual spikes in AI activity within minutes of a credential exposure. These incidents are not merely theoretical risks; they represent immediate financial and operational crises. When a central provider is compromised, the speed at which bad actors can exploit those keys often outpaces the manual rotation efforts of the affected engineering teams.
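Because exploitation can begin within minutes, detection is usually automated rather than manual. The following is a minimal sketch of one possible approach, not a description of any vendor's system: it compares each per-minute request count for a key against a rolling baseline. The class name `UsageSpikeDetector`, the window size, and the threshold are all illustrative assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class UsageSpikeDetector:
    """Flags a per-minute request count that far exceeds the recent baseline.

    Hypothetical helper for illustration; the defaults are assumptions,
    not recommended production values.
    """

    def __init__(self, window: int = 30, threshold: float = 4.0, min_samples: int = 5):
        self.history = deque(maxlen=window)  # recent "normal" counts only
        self.threshold = threshold           # allowed deviations above baseline
        self.min_samples = min_samples       # samples needed before judging

    def observe(self, count: int) -> bool:
        """Record one per-minute count and return True if it looks like a spike."""
        is_spike = False
        if len(self.history) >= self.min_samples:
            baseline = mean(self.history)
            # Fall back to 1.0 when traffic has been perfectly flat.
            spread = pstdev(self.history) or 1.0
            is_spike = (count - baseline) / spread > self.threshold
        if not is_spike:
            # Keep spikes out of the baseline so they do not mask later ones.
            self.history.append(count)
        return is_spike


if __name__ == "__main__":
    detector = UsageSpikeDetector()
    for requests_per_minute in [100, 102, 98, 101, 99, 100, 5000]:
        if detector.observe(requests_per_minute):
            print("possible key abuse:", requests_per_minute)
```

In practice a flag like this would more likely trigger automatic key revocation or rotation than a human review, since the point of the paragraph above is that manual response is too slow.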

Industry Perspectives on Centralized Credential Vulnerabilities

Security researchers argue that the centralization of sensitive API keys within evaluation and observability tools creates “high-value” targets that are too lucrative for hackers to ignore. These platforms require deep access to function, yet this very access creates a paradox where the tools meant to improve AI performance become the weakest link in the security chain. The reliance on organization-level secrets, rather than scoped or ephemeral credentials, significantly amplifies the potential blast radius of any single leak.

The Future of AI Infrastructure Security: Projections and Challenges

To combat these evolving threats, the industry is pivoting toward a Zero-Trust Architecture specifically designed for AI development environments. This approach emphasizes that no service, regardless of its position in the stack, should be inherently trusted with long-lived credentials. Instead, the move toward automated, short-lived credentialing and real-time anomaly detection is becoming the new standard for preventing the exploitation of exposed keys.
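As a rough illustration of what short-lived, scoped credentialing means in practice, the sketch below issues a signed token that carries a single scope and an expiry time, and rejects it once either check fails. It uses only the Python standard library; the function names, the token format, and the five-minute lifetime are assumptions made for this example, and a real deployment would more likely rely on a cloud provider's token service or an established standard.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def issue_token(signing_key: bytes, scope: str, ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Return a signed token limited to one scope and a short lifetime."""
    issued = time.time() if now is None else now
    claims = {"scope": scope, "exp": issued + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def verify_token(signing_key: bytes, token: str, required_scope: str,
                 now: Optional[float] = None) -> bool:
    """Accept the token only if it is authentic, in scope, and unexpired."""
    current = time.time() if now is None else now
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return False  # not in "payload.signature" form
    expected = hmac.new(signing_key, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how much of the signature matched.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    try:
        claims = json.loads(base64.urlsafe_b64decode(payload.encode()))
    except ValueError:
        return False
    return claims.get("scope") == required_scope and current < claims.get("exp", 0)


if __name__ == "__main__":
    key = b"demo-signing-key"  # placeholder; never hard-code real keys
    token = issue_token(key, "logs:read")
    print(verify_token(key, token, "logs:read"))      # valid scope, not expired
    print(verify_token(key, token, "models:invoke"))  # wrong scope is rejected
```

The design point is the one made above: if a platform holding such a token is breached, the attacker gains one narrow permission for a few minutes rather than an organization-level secret that stays valid until someone rotates it.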

Regulatory pressure is also mounting, as governments demand higher transparency from AI infrastructure providers regarding their security postures. Balancing the need for seamless integration with the necessity of rigorous, proactive credential rotation remains a significant challenge. However, the organizations that successfully implement these automated safeguards will be the ones capable of scaling their AI initiatives without falling victim to the inherent risks of the modern supply chain.

By recognizing the critical shift toward infrastructure-based threats, enterprises are beginning to demand higher security transparency from their AI partners. This transition away from static secret management toward dynamic, resilient systems provides the foundation for protecting the proprietary workflows driving innovation. Ultimately, securing the AI revolution requires more than just better models; it demands a fundamental redesign of how the world handles digital trust.