Software now ships at machine speed, and the uncomfortable truth is that AI-generated code and autonomous agents do not simply accelerate delivery—they amplify hidden risks, replicate insecure patterns at scale, and dissolve the familiar checkpoints that once slowed dangerous changes from reaching production. This report examines how delivery moved from human-paced workflows to AI-orchestrated pipelines, why traditional security controls faltered, and which enforceable, zero-trust architectures have emerged as the baseline for safely operating at today’s velocity.

Across cloud, platform engineering, and DevSecOps, a new operating model has taken hold: security as a product constraint, encoded in policy and enforced by platforms from prompt to production. The shift has not been cosmetic. Identity, provenance, and runtime behavior now define trust, while continuous evidence changes how audits are passed and incidents are investigated. The pages that follow trace the market’s momentum, the hard problems that resisted old tools, and the reference patterns that separated leaders from laggards.

The AI-Native Security Landscape: Scale, Stakes, and Who Matters Now

From Human-Paced to Machine-Speed: How Generative AI Rewrites Software Delivery

Generative AI compressed ideation, coding, and review into minutes rather than sprints, flooding repositories with functional changes that looked plausible yet frequently imported outdated libraries, permissive defaults, or fragile patterns. Agents began triaging tickets, writing tests, opening pull requests, and even merging them, collapsing the separation between creation and approval into a single automated loop.

This velocity reframed risk. Instead of isolated defects, organizations faced systemic replication of insecure templates across services and teams. The practical implication was stark: pipelines needed to assume unreviewed changes would attempt to ship and to enforce non-negotiable constraints automatically, irrespective of who or what wrote the code.

Segments and Surface Area: Code, Pipelines, Runtime, and Governance Converge

Attack surface expanded along four intertwined segments: code generation, CI/CD automation, runtime execution, and governance data. Unsafe code paths, mis-scoped connectors, and permissive deployment gates interacted in ways that static checks seldom anticipated, especially when agents held broad, tool-enabled access.

Moreover, governance itself became a surface. Prompts, model variants, fine-tuned weights, and agent configurations turned into artifacts that required lineage and policy. A secure delivery program now had to manage not only binaries but also the instructions, identities, and context that produced them.

Key Players and Platforms: Cloud, CI/CD, Security Vendors, Open Source, and Standards Bodies

Cloud providers embedded provenance, signing, and identity-bound execution deeper into managed services, while CI/CD platforms exposed native policy-as-code and attestation gates. Security vendors shifted from pattern-based scanners to behavior-aware defenses, integrating IAST, RASP, and data-flow analysis that spoke the language of agents and tools.

Open source communities accelerated standards such as SLSA and SBOM extensions for AI, while standards and industry bodies advanced the NIST AI RMF, OWASP guidance for GenAI, and MITRE ATLAS. Together they created a common fabric for enforceable controls, easing integration across heterogeneous stacks.

Why It Matters: Systemic Risk From Replicated Patterns and Agent-Driven Operations

Models trained on noisy corpora reproduced legacy anti-patterns—hardcoded secrets, broad IAM roles, and deprecated APIs—that spread across microservices like genetic traits. In parallel, attackers used the same AI capabilities to speed reconnaissance, craft targeted prompts, and generate exploits that slipped through static defenses.

Agent-driven operations magnified consequences. A single mis-scoped connector or unchecked tool invocation could traverse environments in seconds, turning what once was a contained mistake into a cross-system incident. Only platform-enforced constraints with real-time containment could keep pace.

Momentum Reshaping Security at Machine Speed

Trends Driving AI-Native Delivery and New Security Opportunities

Three shifts defined the era: model-assisted creation became the default, agents took over repetitive engineering work, and policy moved from guidance to gate. These trends enlarged the buyer base for identity, provenance, and runtime controls while shrinking tolerance for noisy tools that blocked flow without raising true signal.

At the same time, developer experience gained strategic status. Golden paths, secure-by-default templates, and guardrailed prompt libraries proved decisive, because teams adopted controls that made the fast path the safe path and bypassed those that created friction without clarity.

Market Signals and 2026 Outlook: Adoption Curves, Spend Priorities, and Performance Benchmarks

Budgets prioritized identity modernization, policy automation, runtime enforcement, and unified telemetry that linked prompts, models, principals, and actions. Performance benchmarks shifted from raw vulnerability counts to time-to-block for policy violations, false-positive rates, and audit readiness with continuous evidence.

Adoption curves favored platform-native controls over bolt-on tools. Organizations that consolidated on policy engines, standardized attestations, and AI-BOM achieved lower incident rates and shorter mean time to remediate, establishing a repeatable pattern for sustainable scale.

Hard Problems We Must Solve to Ship Safely at AI Scale

Why Legacy Controls Break: Manual Reviews, CVE Scanners, and Periodic Audits at Their Limits

Manual review cycles could not match agent throughput, and quarterly audits arrived after drift had already compounded. CVE-focused scanners missed novel failure modes that did not map to known signatures, especially when risk emerged from identity scope or cross-service behavior.

Even effective point tools failed when isolated. Without enforced provenance, identity-bound builds, and deterministic gates, findings piled up while unsafe code still deployed. The lesson was clear: security required automatic stoppage, not just detection.

AI-Native Threats That Evade Pattern Matching: Prompt Injections, Tool Abuse, Identity Misuse, Model Poisoning

Prompt injection, both direct and indirect, subverted agent intent, turning documentation or tickets into control channels. Tool and connector abuse let compromised agents pivot laterally, while identity misuse weaponized legitimate permissions to exfiltrate data.

Model supply chain risks—poisoned fine-tunes, tampered weights, or unvetted checkpoints—altered behavior before a single line of code executed. Pattern matchers rarely saw these moves; provenance, sandboxed evaluation, and runtime policy did.

Precision Over Noise: Building Enforceable, Low-False-Positive Controls That Teams Trust

Controls had to be precise enough to block only what mattered. Data-flow constraints, taint tracking, and context-aware policies reduced spurious alerts by grounding enforcement in observed behavior rather than broad heuristics.

Trust grew when platforms explained violations in developer terms—entry points, sinks, and identity scopes—with actionable fixes. This clarity turned security from adversary into accelerator, shortening feedback loops and discouraging unsafe workarounds.

Shifting Accountability: From Ad Hoc Security Checks to Platform-Enforced Product Constraints

Security matured from a specialist function to an attribute engineered into the platform. Security teams authored invariants—minimum attestation standards, allowed data movement, identity boundaries—while engineering encoded them as gates and defaults.

This redistribution clarified ownership. Teams shipped faster because passing gates meant compliance was already satisfied, and audits drew on continuous evidence rather than reconstructed narratives.

Compliance, Assurance, and Zero-Trust Standards for AI-Driven Pipelines

The Rulebook in Flux: NIST AI RMF, EU AI Act, ISO/SOC, OWASP GenAI, MITRE ATLAS, SLSA, and SBOM/AI-BOM

Standards converged on risk-based governance with observable controls. NIST AI RMF framed risk categories and mitigations, while the EU AI Act and ISO/SOC aligned expectations for documentation, testing, and accountability.

SLSA strengthened build integrity, and SBOM evolved toward AI-BOM to capture prompts, models, datasets, and connectors. OWASP and MITRE contributed concrete attack patterns and countermeasures, aiding threat modeling for agent-intensive systems.

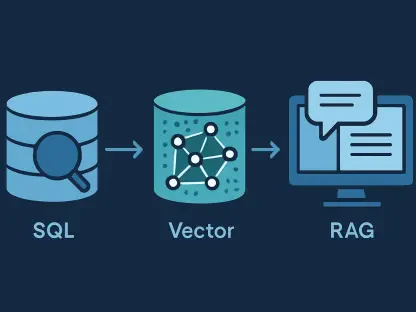

Continuous Evidence by Design: Provenance, Attestations, Lineage, and Audit-Ready Telemetry

Assurance shifted from snapshots to streams. Every build carried attestations for source, dependencies, and environment; every deployment embedded provenance that tied artifacts to identities, prompts, and policies.
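As a minimal sketch of what such an attestation check could look like, the Python below binds an artifact digest to a builder identity and verifies both before a deployment proceeds. The function names, the `builder_id` field, and the shared HMAC key are assumptions made for illustration; production systems typically rely on asymmetric signatures and standardized attestation formats rather than this simplified scheme.

```python
import hashlib
import hmac
import json


def make_attestation(artifact: bytes, builder_id: str, key: bytes) -> dict:
    """Create a toy attestation binding an artifact digest to a builder identity."""
    statement = {
        "subject_sha256": hashlib.sha256(artifact).hexdigest(),
        "builder_id": builder_id,  # hypothetical field name
    }
    # Serialize deterministically so signer and verifier hash the same bytes.
    payload = json.dumps(statement, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"statement": statement, "signature": signature}


def verify_attestation(artifact: bytes, attestation: dict, key: bytes) -> bool:
    """Return True only if the signature is valid and the digest matches the artifact."""
    payload = json.dumps(attestation["statement"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, attestation["signature"]):
        return False  # statement was altered or signed with a different key
    actual_digest = hashlib.sha256(artifact).hexdigest()
    return attestation["statement"]["subject_sha256"] == actual_digest
```

A deployment gate built this way would refuse any artifact whose bytes or claimed builder differ from what was signed at build time.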

Telemetry became audit-ready by default. Unified logs stitched together model calls, agent actions, policy decisions, and runtime outcomes, enabling rapid investigations and defensible compliance reporting.
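One way to picture the stitching step is a correlation pass that groups events from different sources by a shared trace identifier and orders them in time. The sketch below assumes every event is a dictionary carrying hypothetical `trace_id`, `ts`, and `kind` fields; real telemetry schemas will differ.

```python
from collections import defaultdict


def stitch(events: list[dict]) -> dict[str, list[dict]]:
    """Group events by trace_id and sort each group by timestamp."""
    by_trace: dict[str, list[dict]] = defaultdict(list)
    for event in events:
        by_trace[event["trace_id"]].append(event)
    # Each trace becomes a single ordered timeline an investigator can read top to bottom.
    return {
        trace_id: sorted(group, key=lambda e: e["ts"])
        for trace_id, group in by_trace.items()
    }
```

Given model calls, agent actions, policy decisions, and runtime outcomes that share a trace identifier, the result is one timeline per request instead of four disconnected logs.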

Policy as Code Everywhere: Enforcing Identity, Data Boundaries, and Deployment Gates Across SDLC

Policy engines enforced identity scopes, data residency, and model usage constraints across code, build, deploy, and run. Violations were blocked automatically, and exemptions were tied to a time limit, a scope, and explicit human approval.

Treating policy as versioned code created traceability. Changes to rules were reviewed and tested like application features, reducing drift and preventing accidental weakening of controls.
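The gate-plus-exemption behavior described in this section can be sketched in a few lines of Python. The rule names, the `ALLOWED_REGIONS` set, and the `Deployment` and `Exemption` types are all hypothetical; a real program would more likely express these rules in a dedicated policy engine.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Exemption:
    rule: str             # rule being waived
    scope: str            # service the waiver applies to
    approved_by: str      # human approver; empty means not approved
    expires_at: datetime  # waiver is void at or after this instant


@dataclass(frozen=True)
class Deployment:
    service: str
    has_attestation: bool
    data_region: str


ALLOWED_REGIONS = {"eu-west"}  # assumed residency constraint


def violations(deployment: Deployment) -> list[str]:
    """List the rules this deployment breaks, ignoring exemptions."""
    found = []
    if not deployment.has_attestation:
        found.append("require-attestation")
    if deployment.data_region not in ALLOWED_REGIONS:
        found.append("data-residency")
    return found


def evaluate(deployment: Deployment, exemptions: list[Exemption], now: datetime) -> list[str]:
    """Return the violations that still block after applying valid exemptions."""
    blocking = []
    for rule in violations(deployment):
        exempt = any(
            e.rule == rule
            and e.scope == deployment.service
            and bool(e.approved_by)
            and now < e.expires_at
            for e in exemptions
        )
        if not exempt:
            blocking.append(rule)
    return blocking
```

An empty result means the deployment may proceed. Because the rules live in ordinary source code, a change that loosens `ALLOWED_REGIONS` shows up in review and in test failures like any other change.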

The Road Ahead: Architectures, Disruptors, and Where Growth Will Come From

Reference Blueprint for Security-First Delivery: Zero Trust From Prompt to Production

A mature blueprint anchored on five layers: identity-first access; signed and attestable artifacts; policy-gated pipelines; runtime behavior enforcement; and continuous governance with lineage. Each layer assumed the others might fail and provided compensating controls.

Crucially, prompts and model selections were treated as inputs with provenance, not ambient context. This view aligned AI-era artifacts with traditional software assets under a common trust model.

Treating Agents as First-Class Identities: Least Privilege, Scoped Roles, Ephemeral Access, and Lifecycle Controls

Agents operated as named principals with roles scoped to specific repos, environments, and tools. Access was short-lived, obtained through on-behalf-of delegation or keyless signing, and logged end to end.
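A scoped, short-lived grant of this kind might be modeled as follows. This is a sketch under stated assumptions: `AgentGrant`, its fields, and `is_allowed` are illustrative names, and expiry is represented as a plain epoch timestamp rather than a token issued by an identity provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentGrant:
    agent: str
    repos: frozenset[str]  # repositories the agent may touch
    tools: frozenset[str]  # tools the agent may invoke
    expires_at: float      # epoch seconds; the grant is void at or after this time


def is_allowed(grant: AgentGrant, repo: str, tool: str, now: float) -> bool:
    """Permit an action only while the grant is live and both repo and tool are in scope."""
    if now >= grant.expires_at:
        return False
    return repo in grant.repos and tool in grant.tools
```

Denying by default keeps the check simple: anything not named in the grant, or requested after expiry, is refused.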

Lifecycle hygiene mattered. Unused agents were retired, permissions rotated, and behaviors periodically reviewed against expected task boundaries to catch silent scope creep.

Runtime Reality Checks: IAST/RASP, Taint Tracking, Data-Flow Constraints, and Deterministic Containment

Runtime controls observed what code and agents actually did. IAST illuminated exercised paths during tests; RASP guarded production with in-process policies; taint tracking enforced data-handling rules from entry to sink.

Containment emphasized determinism. When an agent attempted restricted actions—querying metadata services, invoking unapproved tools, crossing data boundaries—blocking was immediate, logged, and minimally disruptive to legitimate flow.
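To make the entry-to-sink idea concrete, the following toy example marks untrusted input, propagates the mark through concatenation, and blocks it deterministically at a sink. `Tainted`, `concat`, `sanitize`, and `run_query_sink` are hypothetical names; real taint tracking is implemented inside the runtime or by instrumentation, and the sanitizer here is deliberately crude.

```python
class PolicyViolation(Exception):
    """Raised when tainted data reaches a protected sink."""


class Tainted(str):
    """A string that originated from an untrusted entry point."""


def concat(a: str, b: str) -> str:
    """Join two strings, keeping the taint mark if either input carries it."""
    joined = str(a) + str(b)
    if isinstance(a, Tainted) or isinstance(b, Tainted):
        return Tainted(joined)
    return joined


def sanitize(value: str) -> str:
    """Return an untainted copy containing only alphanumeric characters."""
    return "".join(ch for ch in value if ch.isalnum())


def run_query_sink(query: str) -> str:
    """Protected sink: refuse any value still marked as tainted."""
    if isinstance(query, Tainted):
        raise PolicyViolation("tainted data reached query sink")
    return f"executed: {query}"
```

The block is deterministic in the sense the text describes: the same tainted flow is refused every time, while sanitized or trusted values pass through untouched.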

Traceable AI Supply Chains: AI-BOM, Model and Prompt Lineage, and Source-of-Truth Tagging

AI-BOM recorded model bases, fine-tunes, datasets, prompts, and connectors, each bound to accountable owners. Source control tagged AI-generated commits with originating prompts and model versions to make authorship attributable.

This lineage reduced mean time to understand incidents and supported targeted patching. Instead of sweeping rebuilds, teams could remediate specific model or prompt variants tied to risky behavior.
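The record-keeping and targeted lookup described above could be sketched like this. The `AIBOMEntry` fields and the `AI-Model`, `AI-Prompt-SHA256`, and `AI-Owner` trailer keys are assumptions for illustration, not an established schema.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class AIBOMEntry:
    artifact: str        # service or commit the entry describes
    model: str
    model_version: str
    prompt_sha256: str   # digest of the originating prompt, not the prompt text
    owner: str           # accountable owner


def prompt_digest(prompt: str) -> str:
    """Hash a prompt so lineage can reference it without storing its contents."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()


def commit_trailers(entry: AIBOMEntry) -> str:
    """Render hypothetical commit-message trailers that attribute an AI-generated change."""
    return "\n".join([
        f"AI-Model: {entry.model}@{entry.model_version}",
        f"AI-Prompt-SHA256: {entry.prompt_sha256}",
        f"AI-Owner: {entry.owner}",
    ])


def affected_artifacts(bom: list[AIBOMEntry], model: str, model_version: str) -> list[str]:
    """Find the artifacts produced with a specific model version."""
    return sorted({e.artifact for e in bom if e.model == model and e.model_version == model_version})
```

When a model version is found to behave badly, `affected_artifacts` narrows remediation to the artifacts that actually used it.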

Operating Models That Scale: Golden Paths, Automated Assurance, and Human Gating for High-Risk Actions

Golden paths supplied hardened templates, guardrailed prompts, and pre-approved connectors, minimizing bespoke risk. Automated assurance ran continuously, surfacing deviations early and explaining them clearly.

Human gating remained for high-impact changes—broad IAM updates, cross-environment deployments, or model switches with material risk. By reserving human attention for the few decisions that warranted judgment, throughput stayed high without sacrificing control.
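A routing rule for human gating might look like the sketch below. The `Change` type and its fields are hypothetical, and the rule simplifies the text in one respect: it treats every model switch as high risk, whereas a real program would assess material risk case by case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    kind: str                   # "iam", "deploy", "model_switch", or "code"
    iam_wildcard: bool = False  # True if the IAM update grants broad (wildcard) access
    source_env: str = ""
    target_env: str = ""


def requires_human_approval(change: Change) -> bool:
    """Route only high-impact changes to a human; everything else stays automated."""
    if change.kind == "iam" and change.iam_wildcard:
        return True
    if change.kind == "deploy" and change.source_env != change.target_env:
        return True
    if change.kind == "model_switch":
        return True  # simplification: all model switches are gated
    return False
```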

What Good Looks Like by 2026—and How to Get There Now

Non-Negotiables for 2026: Policy Automation, Identity Modernization, AI-BOM, Runtime Enforcement, and DLP

Effective programs standardized on policy engines across CI/CD and clusters, unique scoped identities for agents, comprehensive AI-BOM, runtime enforcement with low false-positive rates, and data loss prevention that covered inputs, outputs, and logs. These became table stakes, not differentiators.

Organizations that lacked any one of these pillars faced recurring incidents, audit delays, or stalled delivery. Those that implemented all five reported steadier release cadence and fewer urgent security stops.

A Pragmatic Adoption Path: Milestones, KPIs, and Phased Rollout Across Teams and Environments

Progress favored phased rollout. Programs started with identity hardening and provenance in the build, then enforced deployment gates, and followed with runtime containment and unified telemetry. Each phase established measurable KPIs: policy coverage, time-to-block, alert precision, and audit completeness.
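Three of these KPIs reduce to simple arithmetic, sketched below. The definitions are assumptions, since the report does not define them: alert precision is taken as true positives over all alerts, policy coverage as gated pipelines over all pipelines, and time-to-block as the median delay between detection and enforcement.

```python
from statistics import median


def alert_precision(true_positives: int, false_positives: int) -> float:
    """Share of raised alerts that were real violations."""
    total = true_positives + false_positives
    return true_positives / total if total else 0.0


def policy_coverage(gated_pipelines: int, total_pipelines: int) -> float:
    """Share of pipelines that run behind an enforced policy gate."""
    return gated_pipelines / total_pipelines if total_pipelines else 0.0


def median_time_to_block(events: list[tuple[float, float]]) -> float:
    """Median seconds between detection and block, given (detected_at, blocked_at) pairs."""
    return median(blocked - detected for detected, blocked in events)
```

For example, 45 true positives against 5 false positives gives a precision of 0.9, and three violations blocked after 2, 1, and 6 seconds give a median time-to-block of 2 seconds.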

Success depended on embedding controls into developer tools and workflows. When security surfaced as helpful guidance inside pull requests and pipelines, adoption spread without heavy mandates.

Red Teaming for Agents: Continuous Adversarial Testing for Prompt Injection, Tool Misuse, and Sleeper Behaviors

AI red teams probed for direct and indirect prompt injection, tool abuse, identity escalation, and sleeper behaviors that activated under specific triggers. Test suites evolved alongside models and policies, mirroring production tasks and connectors.

Findings fed into hardened prompts, stricter scopes, and refined runtime rules. The loop closed when results produced new policy tests that guaranteed regressions were blocked automatically.
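Closing the loop can be pictured as replaying recorded findings against the current policy and failing the build if any of them is allowed again. In this sketch the `Finding` record, the `ALLOWED` pairs, and `policy_allows` are hypothetical; the only real-world detail is `169.254.169.254`, the link-local address commonly used by cloud instance metadata services.

```python
from typing import Callable, NamedTuple


class Finding(NamedTuple):
    finding_id: str
    agent: str
    tool: str
    target: str


# Assumed allowlist of (agent, tool) pairs.
ALLOWED = {("triage-bot", "read_ticket"), ("triage-bot", "comment")}


def policy_allows(agent: str, tool: str, target: str) -> bool:
    """Current runtime policy: block metadata-service targets and unlisted tools."""
    if target.startswith("169.254.169.254"):
        return False
    return (agent, tool) in ALLOWED


def regressions(
    findings: list[Finding],
    policy: Callable[[str, str, str], bool],
) -> list[str]:
    """Return IDs of past findings that the given policy would allow again."""
    return [f.finding_id for f in findings if policy(f.agent, f.tool, f.target)]
```

Run in CI, an empty list from `regressions` is the pass condition, so a policy change that reopens an old finding stops the pipeline rather than reaching production.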

Avoiding the Pitfalls: Reducing False Positives, Aligning Ownership, and Making the Secure Path the Easiest Path

False positives eroded trust and invited bypasses. Precision improved when policies referenced concrete identities, data classes, and runtime signals rather than generic patterns. Clear ownership further reduced friction by aligning responsibilities between platform, product, and security.

Developer experience proved decisive. Secure-by-default templates and one-click golden paths encouraged teams to stay within governed boundaries, while transparent exceptions avoided shadow pipelines.

Conclusions and Recommendations: Evidence-Driven Enforcement That Enabled Speed Without Sacrificing Safety

The market’s trajectory confirmed that scale and speed reshaped security, and durable wins came from platform-enforced constraints rather than manual heroics. Provenance, identity, and runtime behavior formed the new trust triad, while continuous evidence simplified audits and accelerated incident response.

Looking ahead, the most effective next moves centered on deepening policy coverage to new agent types, tightening identity scopes with time-bound credentials, and expanding AI-BOM to include evaluation datasets and safety test suites. Investments that coupled low-friction developer experience with precise, observable enforcement continued to compound returns, and organizations that treated security as an architectural property rather than a checkpoint remained best positioned to ship fast without giving up safety.