The high-stakes transition from a controlled pilot environment to a full-scale corporate deployment often reveals the precarious nature of retrieval-augmented generation systems that rely on a single data source. During a demonstration, an internal assistant appears flawless, smoothly answering questions about product features and company history using a basic vector database and a modern large language model. However, the moment this system encounters the messy reality of production—where data is fragmented across legacy databases, real-time spreadsheets, and thousands of unstructured documents—the illusion of intelligence frequently shatters. This failure is rarely a reflection of the model’s reasoning capabilities but is instead a direct result of a retrieval layer that prioritizes semantic “vibes” over factual precision.

Consider the common scenario where a sales representative asks for a specific contract renewal date and receives a beautifully written, highly confident paragraph that cites the wrong client’s document. This occurs because the vector search found a high degree of conceptual similarity between the query and a document from a different account, and nothing in the retrieval step treated the client name as a hard filter. In an enterprise setting, fluency without accuracy is a liability, not an asset. Success in the current landscape of late 2026 requires moving past the simplistic idea that more data equals better answers and instead building an architecture that understands exactly where the truth lives and how to extract it with surgical precision.

Beyond the Demo: Why Fluent AI Assistants Often Fail in Production

The disparity between a successful demo and a failed production rollout usually centers on the complexity of real-world data environments. In a controlled environment, a vector database is often loaded with clean, static information that lacks the contradictions and versioning issues found in a live corporate repository. When an AI assistant goes live, it must suddenly navigate a landscape where a compliance officer might ask about a current data retention policy and receive a version from several years ago because it was semantically similar to the query. The model, designed to be helpful and fluent, summarizes this outdated information without a disclaimer, leading to potential regulatory risks. The failure here is systemic; the retrieval layer handed the model stale data, and the model had no external context to verify its accuracy.

This phenomenon highlights a fundamental truth: retrieval-augmented generation is only as strong as the “R” in its name. Most teams discover that applying a single retrieval method to every user query is the primary driver of failure. Vector search is an incredible tool for finding conceptual matches, but it is not a replacement for the deterministic logic of a relational database. When a system treats every question as a search for similarity, it loses the ability to handle exact lookups or role-based access controls effectively. If a developer can query data their role should not access simply because it was retrieved in a broad vector sweep, the system has failed its primary mission of security and governance.

To overcome these challenges, organizations must shift their focus from the generative capabilities of the model to the strategic management of the data pipeline. This involves recognizing that the model is simply a processor of the context it is provided. If the context is wrong, stale, or unauthorized, the output will follow suit. Building a robust production system requires a retrieval layer that is aware of data freshness, permission levels, and the specific “shape” of the question being asked. By moving away from the illusion of universal similarity, teams can start constructing an architecture that prioritizes the retrieval of the right data over the generation of a confident-sounding answer.

The Hidden Cost of One-Size-Fits-All Retrieval

The industry-wide reliance on vector databases as a universal solution for retrieval has created a hidden technical debt that surfaces during scale. While vector embeddings excel at matching the general intent of a query, they are fundamentally poor at performing structured aggregations, such as calculating revenue totals or filtering by specific date ranges. Using a vector search to answer a question about “total sales in the second quarter” is akin to using a compass to measure the exact length of a room; it provides a general direction but lacks the precision required for the task. This mismatch leads to “hallucinations” that are actually just the model doing its best with the wrong set of numbers provided by a weak retrieval layer.

In an enterprise setting, using the wrong retrieval method is not merely a matter of inefficiency—it introduces significant compliance and operational risks. When an AI system ignores hard metadata filters in favor of semantic similarity, it risks exposing sensitive information to unauthorized users or providing incorrect legal guidance based on outdated documents. The cost of these errors is far higher than the computational expense of a more complex retrieval architecture. To bridge this gap, modern teams are implementing what is known as a Retrieval Decision Framework. This framework acts as a traffic controller, analyzing the user’s intent and routing the query to the most appropriate data source, whether that be a structured SQL database, a keyword search engine, or a vector store.

The move toward a Retrieval Decision Framework represents a maturation of the field, acknowledging that different types of information require different retrieval logic. A question about a specific numerical value requires a deterministic path, while a question about general sentiment benefits from the broad reach of embeddings. By categorizing queries at the point of entry, developers can ensure that the system remains both accurate and secure. This approach also allows for better resource allocation, as simple lookups can be handled by efficient SQL queries, reserving more expensive vector processing for queries where intent and nuance are truly necessary.
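
As a rough illustration of categorizing queries at the point of entry, the sketch below routes on surface cues with regular expressions. The `Route` enum, the cue patterns, and `route_query` are hypothetical names invented for this example; a production router would more likely use a trained classifier, and the cue lists here are assumptions rather than a recommended vocabulary.

```python
import re
from enum import Enum


class Route(Enum):
    SQL = "sql"
    KEYWORD = "keyword"
    VECTOR = "vector"


# Hypothetical cue lists; a real deployment would tune or learn these.
SQL_CUES = re.compile(
    r"\b(?:total|sum|average|count|how many|revenue|renewal date|expir\w*)\b",
    re.IGNORECASE,
)
# Quoted phrases, version strings (v2.4), and ticket-like codes (ERR-500)
# suggest the user wants an exact textual match.
KEYWORD_CUES = re.compile(r'"[^"]+"|\bv\d+(?:\.\d+)+\b|\b[A-Z]{2,}-\d+\b')


def route_query(query: str) -> Route:
    """Pick a retrieval path from surface cues; fall back to vector search."""
    if SQL_CUES.search(query):
        return Route.SQL
    if KEYWORD_CUES.search(query):
        return Route.KEYWORD
    return Route.VECTOR


print(route_query("What were total sales in the second quarter?"))
print(route_query("Find the v2.4 deprecation notice"))
print(route_query("What themes show up in customer feedback?"))
```

Checking the deterministic path first reflects the argument above: a query that needs an exact number should not fall through to similarity search just because it is phrased conversationally.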

Mapping Data Retrieval Methods to Specific User Needs

SQL remains the tool of choice for deterministic data and for any query that demands an exact answer. When a user asks for revenue totals, contract expiration dates, or specific record filters, the answer exists in a structured table, not a conceptual cloud. SQL provides exact aggregations and joins that similarity search over embeddings is not designed to perform. It is also the most reliable path when freshness matters, because a query against the system of record reflects that system’s state at the moment the query runs. Relying on a vector index for a question about “current inventory levels” is inherently risky because the index might be hours or days behind the actual database state.
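
To make the deterministic path concrete, here is a minimal sketch using Python’s built-in `sqlite3` module. The `sales` table, its columns, and the sample rows are invented for illustration; the point is only that a date-bounded `SUM` returns an exact figure rather than an approximation.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (client TEXT, sold_on TEXT, amount REAL)")
# Made-up rows for the example; dates are stored as ISO-8601 text.
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("Acme", "2026-03-30", 100.0),    # first quarter, excluded
        ("Acme", "2026-04-15", 250.0),    # second quarter
        ("Globex", "2026-06-30", 400.0),  # second quarter
        ("Globex", "2026-07-01", 900.0),  # third quarter, excluded
    ],
)


def total_sales(conn, start, end):
    """Sum amounts with sold_on in [start, end).

    ISO-8601 date strings sort chronologically, so text comparison is safe.
    """
    row = conn.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM sales "
        "WHERE sold_on >= ? AND sold_on < ?",
        (start, end),
    ).fetchone()
    return row[0]


q2_total = total_sales(conn, "2026-04-01", "2026-07-01")
print(q2_total)
```

The half-open date range avoids double counting a sale that lands exactly on a quarter boundary, and parameter binding keeps user-supplied values out of the SQL text.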

Keyword search, often powered by engines like Elasticsearch that rank results with the BM25 scoring function, remains a vital component of the retrieval stack, particularly when exact phrasing is paramount. In many professional fields, specific terms such as legal citations, API error codes, or specialized policy names carry more weight than the general concept of the sentence. Keyword engines allow for precise control over text analysis, field-specific boosting, and complex metadata filtering that vector stores often struggle to match. When a developer searches for a specific “v2.4 deprecation notice,” they need that exact document, not a conceptually similar article about software updates in general.
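
For readers who want to see why exact terms dominate in keyword ranking, the following is a small plain-Python sketch of BM25 scoring. It is not how any particular engine implements it: the whitespace tokenizer, the sample documents, and the defaults `k1=1.5` and `b=0.75` are assumptions chosen for the example.

```python
import math
from collections import Counter


def tokenize(text):
    """Naive whitespace tokenizer; real engines apply configurable analyzers."""
    return text.lower().split()


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document in a non-empty list against the query with BM25."""
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n
    # Document frequency: number of documents containing each term.
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            # Non-negative IDF variant: rare terms contribute more.
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation with document-length normalization.
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = [
    "v2.4 deprecation notice for the billing api",
    "general guidance on planning software updates",
    "release notes for v2.5 of the billing api",
]
scores = bm25_scores("v2.4 deprecation notice", docs)
best = max(range(len(docs)), key=scores.__getitem__)
print(docs[best])
```

Documents that share no literal term with the query score zero, which is exactly the behavior wanted when a version string or error code must match character for character.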

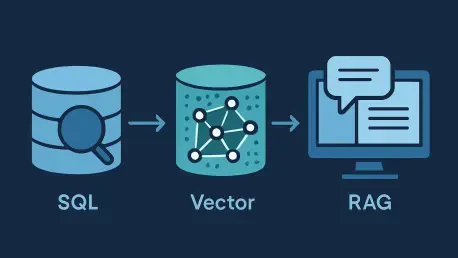

Vector retrieval finds its true utility in handling intent-based queries where the user might not know the exact terminology. It is the perfect tool for identifying themes in customer feedback, summarizing long-form support tickets, or answering questions phrased in natural language that use synonyms. However, even the most advanced embedding models have limitations; vector similarity does not equate to factual correctness. A high cosine similarity score only indicates that two pieces of text sit close together in the embedding space, not that the information is verified or authorized for the user. By isolating these three paths (SQL for structure, keyword search for exactness, and vector search for intent), developers can build a system that responds with the appropriate tool for the specific job.
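
The gap between similarity and correctness can be shown with a few lines of arithmetic. The three-dimensional vectors below are made-up toy values, not output from any embedding model; they are chosen so that a document from the wrong account sits slightly closer to the query than the correct one.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy vectors invented for the example.
query = [0.9, 0.1, 0.3]            # "When does the contract renew?"
right_client = [0.8, 0.2, 0.3]     # renewal clause, correct account
wrong_client = [0.9, 0.1, 0.35]    # renewal clause, different account

sim_right = cosine_similarity(query, right_client)
sim_wrong = cosine_similarity(query, wrong_client)
print(sim_wrong > sim_right)
```

Nothing in the score encodes which client a document belongs to, so the account has to be enforced as a filter outside the similarity computation.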

Lessons from the Field on Hybrid Precision and Governance

High-performing production environments have proven that the majority of complex queries do not fit neatly into a single retrieval bucket. Instead, they require a hybrid approach that merges the strengths of different systems into a unified response. For instance, a query asking for “the top three complaints from enterprise customers in the last thirty days” requires a SQL-style filter for the customer tier and date range, followed by a vector-based analysis of the complaint sentiment. Systems that attempt to solve this using only one method either fail to filter correctly or fail to understand the sentiment. Industry leaders are increasingly adopting Reciprocal Rank Fusion to merge results from different systems into a single, prioritized list of candidates for the model.
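
Reciprocal Rank Fusion is simple enough to sketch in a few lines: each document earns `1 / (k + rank)` from every list it appears in, and the sums decide the merged order. The document ids and the two input rankings below are hypothetical, and `k=60` is the constant commonly used in the literature rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of ids into one list, best first."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score, breaking ties by id for a stable result.
    return sorted(scores, key=lambda d: (-scores[d], d))


keyword_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_b", "doc_d", "doc_a"]
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
print(fused)
```

Because the method uses only rank positions, it can combine systems whose raw scores are not comparable, such as BM25 scores and cosine similarities.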

Governance has also become a central pillar of successful RAG implementations, moving from an afterthought to a core architectural requirement. The emergence of the Model Context Protocol highlights a significant shift toward agentic retrieval, where AI systems use governed tool calls to interact with live data through established APIs and CRMs. This approach ensures that the AI is not just searching a static index but is instead interacting with the organization’s current source of truth through a layer that enforces permissions and business logic. This move toward “live” retrieval significantly reduces the risk of data staleness and ensures that every piece of information provided to the model has passed through a standard security gate.

Furthermore, precision in retrieval is being enhanced through the use of re-ranking models. After a set of candidate documents is retrieved from SQL, keyword, and vector sources, a specialized cross-encoder model can be used to evaluate each document’s actual relevance to the query. This step acts as a final quality check, ensuring that the most pertinent information is placed at the top of the context window. While this adds a small amount of latency, the increase in factual accuracy and the reduction in model confusion make it a worthwhile trade-off for enterprise-grade applications. These hybrid strategies demonstrate that the future of AI is not just about larger models, but about more intelligent and governed data access.
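
The shape of a re-ranking step can be sketched independently of any particular model. In the example below, `rerank` accepts any scoring callable; `overlap_score` is a deliberately crude stand-in that counts shared words, used only so the sketch runs without dependencies. In a real pipeline a cross-encoder would fill that role, and the candidate texts are invented.

```python
def rerank(query, candidates, score_fn, top_k=3):
    """Re-order candidates by score_fn(query, text) and keep the top_k."""
    scored = [(score_fn(query, text), text) for text in candidates]
    # The sort is stable, so ties keep their original retrieval order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:top_k]]


def overlap_score(query, text):
    """Stand-in scorer: fraction of query words that appear in the text."""
    q = set(query.lower().split())
    t = set(text.lower().split())
    return len(q & t) / len(q) if q else 0.0


candidates = [
    "globex contract renewal terms",
    "acme contract renewal date is in the master agreement",
    "company history overview",
]
top = rerank("acme contract renewal date", candidates, overlap_score, top_k=2)
print(top)
```

Keeping the scorer as a parameter makes it straightforward to swap in a stronger model later and to measure the latency it adds.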

A Framework for Building a Trustworthy Hybrid RAG Pipeline

Transitioning from a fragile demo to a robust production system requires a disciplined framework that prioritizes data integrity and query routing. The process begins with a lightweight intent classifier that serves as the entry point for every user interaction. This classifier determines if a query is structured, semantic, or a mixture of both, and routes it accordingly. Before any data reaches the generative model, hard metadata filters must be applied to enforce role-based access control and ensure that only authorized documents are considered. This prevents the “leaking” of information and ensures that the retrieval process respects the same security boundaries as any other enterprise application.
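
A hard metadata filter for role-based access can be as small as a set intersection applied before anything reaches the model. The `Chunk` dataclass, its `allowed_roles` field, and the sample data are assumptions made for this sketch; in practice the same predicate is usually pushed down into the search engine or database query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    allowed_roles: frozenset  # roles permitted to read this chunk


def filter_by_role(chunks, user_roles):
    """Keep only chunks that share at least one role with the user.

    Chunks with no roles listed are dropped, so access is denied by default.
    """
    roles = set(user_roles)
    return [c for c in chunks if c.allowed_roles & roles]


chunks = [
    Chunk("Public pricing sheet", frozenset({"sales", "support"})),
    Chunk("Acme contract terms", frozenset({"legal"})),
    Chunk("Unlabeled draft", frozenset()),
]
visible = filter_by_role(chunks, {"sales"})
print([c.text for c in visible])
```

Filtering before ranking, rather than after generation, means an unauthorized document can never influence the answer, even indirectly.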

Once the relevant data chunks are gathered from the various retrieval paths, the system should employ a re-ranking step to verify the suitability of the context. This stage is critical for filtering out noise that might have a high similarity score but low actual utility. Following re-ranking, the model should be governed by a strict evidence-based generation policy. This means the model is explicitly instructed to answer only from the provided context and to provide direct citations for every claim it makes. If the retrieved context is insufficient to answer the question, the system should be designed to admit it cannot find the answer rather than attempting to fill the gaps from knowledge stored in the model’s own weights.
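
One way to express an evidence-based generation policy is in how the prompt is assembled and in what happens when retrieval comes back empty. In this sketch the prompt wording, the refusal message, and the `generate` callable (a placeholder for whatever model client is in use) are all assumptions.

```python
NOT_FOUND = "I could not find this in the available sources."


def build_grounded_prompt(question, chunks):
    """Build a context-only prompt from (source_id, text) pairs.

    Returns None when there is no retrieved context to ground an answer.
    """
    if not chunks:
        return None
    context = "\n".join(f"[{source_id}] {text}" for source_id, text in chunks)
    return (
        "Answer using only the context below. Cite the bracketed source id "
        "after every claim. If the context does not contain the answer, "
        f"reply exactly: {NOT_FOUND}\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def answer(question, chunks, generate):
    """Call the model only when there is evidence; otherwise refuse."""
    prompt = build_grounded_prompt(question, chunks)
    if prompt is None:
        return NOT_FOUND
    return generate(prompt)


prompt = build_grounded_prompt(
    "When does the Acme contract renew?",
    [("contract-17", "The Acme renewal date is set out in clause 4.")],
)
print(prompt)
```

Short-circuiting on empty context keeps the refusal deterministic instead of relying on the model to follow the instruction.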

The final layer of a trustworthy pipeline is the implementation of active guardrails that monitor for freshness and contradictions. Every retrieved chunk should carry a timestamp, and if the data is deemed too old for the specific query type, the system should either flag the staleness or attempt a live refresh. By integrating these permission, freshness, and correctness checks directly into the retrieval flow, organizations can transform their AI from a risky experiment into a reliable business asset. This structured approach ensures that the final output is not just a fluent response, but a verified and auditable piece of information that users can act upon with confidence.
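
A freshness guardrail can be sketched as a per-query-type age budget checked against each chunk’s timestamp. The budgets in `MAX_AGE`, the query type names, and the sample chunks are hypothetical values chosen for the example; real limits are a business decision.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness budgets per query type.
MAX_AGE = {
    "inventory": timedelta(minutes=5),
    "policy": timedelta(days=365),
}
DEFAULT_MAX_AGE = timedelta(days=30)


def split_by_freshness(chunks, query_type, now=None):
    """Partition (text, updated_at) pairs into (fresh, stale) lists."""
    now = now or datetime.now(timezone.utc)
    limit = MAX_AGE.get(query_type, DEFAULT_MAX_AGE)
    fresh, stale = [], []
    for text, updated_at in chunks:
        bucket = stale if now - updated_at > limit else fresh
        bucket.append((text, updated_at))
    return fresh, stale


now = datetime(2026, 6, 1, 12, 0, tzinfo=timezone.utc)
chunks = [
    ("Retention policy, current revision", now - timedelta(days=90)),
    ("Retention policy, older revision", now - timedelta(days=4 * 365)),
]
fresh, stale = split_by_freshness(chunks, "policy", now=now)
print([text for text, _ in fresh])
```

The stale list is returned rather than discarded so the caller can choose between flagging the age to the user and triggering a live refresh. Age alone does not detect a document that has been superseded, so a check like this complements version metadata rather than replacing it.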

Building a production-ready system was once viewed as a challenge of fine-tuning models, but experience has shifted that perspective toward the governance of the data itself. The teams that have successfully moved their assistants out of the lab and into the hands of users are the ones that focused on orchestrating SQL, keyword search, and vector retrieval. That journey requires a departure from the “one-size-fits-all” mentality and a commitment to architectural precision. The most valuable AI systems are not necessarily those with the largest parameter counts, but those built on a foundation of governed, multi-path retrieval.

The hybrid model lets organizations maintain security while unlocking the potential of both their structured and unstructured data. Treating retrieval as a first-class engineering problem brings the industry closer to AI that can be trusted in even the most regulated environments, because in enterprise AI the quality of the answer is a direct reflection of the integrity of the retrieval process.

Moving forward, the focus remains on refining these decision frameworks and integrating more live data sources through governed protocols. The era of the simple vector demo is giving way to a standard of hybrid, high-precision retrieval that prioritizes facts over fluency, so that AI remains a powerful tool for productivity rather than a source of sophisticated misinformation. Applied carefully, these principles close the gap between conceptual AI and practical utility.