The realization that a green deployment pipeline does not equate to a healthy production environment has finally forced a fundamental reckoning within the global engineering community. For years, the industry relied on Infrastructure as Code to automate the provisioning of resources, yet this approach frequently failed to address the chaotic reality of high-scale, cloud-native environments. Intent-Driven Infrastructure has emerged as the logical successor, moving beyond the limitations of static snapshots to create systems that do not just exist but actively participate in their own survival. This review examines the architectural shift toward behavioral autonomy and evaluates how this technology is redefining the boundaries of reliability and operational efficiency in modern enterprise landscapes.

The transition from traditional automation to intent-driven systems represents a departure from the “fire and forget” mentality of early cloud management. In the previous era, engineers defined specific resources—such as a virtual machine with specific memory and storage—and expected the platform to maintain those exact parameters. However, as systems became more distributed and ephemeral, the gap between the desired configuration and the actual system state began to widen. Intent-Driven Infrastructure addresses this by focusing on outcomes rather than instructions. Instead of mandating the number of servers, an organization specifies the required latency or throughput, allowing the underlying platform to determine the optimal configuration required to meet those goals in real time.
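The contrast between resource-level and outcome-level definitions can be sketched in a few lines. This is a minimal illustration, not any particular platform's API: the class names, the `headroom` margin, and the idea of feeding in a measured per-instance capacity are all assumptions made for the example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ResourceSpec:
    """Traditional style: the engineer fixes the resources directly."""
    instance_count: int
    memory_gb: int


@dataclass(frozen=True)
class ThroughputIntent:
    """Intent style: the engineer states the outcome, not the resources."""
    target_rps: float      # requests per second the service must sustain
    headroom: float = 0.2  # hypothetical safety margin (20%)


def instances_for(intent: ThroughputIntent, measured_rps_per_instance: float) -> int:
    """Derive an instance count from the intent and the measured capacity."""
    if measured_rps_per_instance <= 0:
        raise ValueError("measured capacity must be positive")
    required = intent.target_rps * (1 + intent.headroom)
    return max(1, math.ceil(required / measured_rps_per_instance))
```

With a `ResourceSpec`, the instance count is an input that a human must revisit. With a `ThroughputIntent`, it is an output that the platform can recompute whenever the measured capacity changes.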

This shift is particularly relevant because modern cloud-native environments are effectively living organisms. They are characterized by constant flux, where traffic patterns, third-party API performance, and hardware reliability change by the second. Static Infrastructure as Code is fundamentally ill-equipped to handle these “Day 2” operations because it lacks a feedback loop; it can build a house, but it cannot fix a leak that develops six months later. Intent-driven systems bridge this gap by integrating observability directly into the control plane, ensuring that the infrastructure remains aligned with business objectives regardless of environmental volatility.

The Shift from Static Configuration to Dynamic Intent

The limitations of Infrastructure as Code became glaringly apparent as organizations reached the limits of manual intervention. In a static model, every change requires a human to update the code, test the configuration, and trigger a deployment pipeline. This process, while safer than manual console clicks, is inherently slow and reactive. It creates a bottleneck where the speed of the business is limited by the speed at which an engineer can respond to a dashboard alert. Intent-Driven Infrastructure removes this bottleneck by replacing resource-level definitions with outcome-level intentions, allowing the system to handle the “how” while humans focus on the “what.”

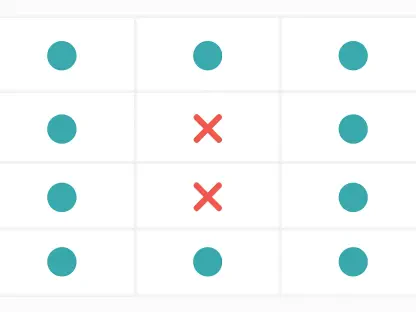

Moreover, the emergence of this technology signals a move away from “snapshot” thinking. A Terraform file or a CloudFormation template is a record of what the infrastructure was supposed to look like at the moment of deployment. On its own, it does not account for the inevitable “drift” that occurs when an autoscaler kicks in, a node fails, or a security patch is applied out of band; the divergence surfaces only when someone re-runs the tool or triggers a drift check. Intent-driven systems, in contrast, treat the desired state as a continuous requirement rather than a one-time event. This ensures that the system is always working to converge the current reality with the stated intent, providing a level of resilience that static code simply cannot match.
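At its simplest, drift is the difference between two dictionaries: what was declared and what is actually running. The function below is a hypothetical sketch of that comparison. The keys and values are invented for the example, and a real system would run such a check on every cycle rather than only at deployment time.

```python
def find_drift(desired: dict, observed: dict) -> dict:
    """Return {key: (desired_value, observed_value)} for every mismatch."""
    drift = {}
    for key, want in desired.items():
        have = observed.get(key)  # None if the setting is missing entirely
        if have != want:
            drift[key] = (want, have)
    return drift


# Hypothetical example: an autoscaler changed the replica count out of band.
desired_state = {"replicas": 3, "tls_enabled": True, "image": "app:1.4"}
observed_state = {"replicas": 5, "tls_enabled": True, "image": "app:1.4"}
detected = find_drift(desired_state, observed_state)
```

Here `detected` contains only the `replicas` entry, which is the signal a convergence step would act on.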

The transition also facilitates a more sophisticated approach to multi-cloud and hybrid environments. When the focus is on intent, the specific underlying provider becomes less relevant. An organization can declare an intent for high availability across regions, and the intent-driven orchestrator can manage the nuances of different cloud APIs to achieve that outcome. This abstraction layer is vital for modern enterprises looking to avoid vendor lock-in while maintaining a consistent operational posture across diverse and complex digital estates.

Core Components of Intent-Based Systems

Intent-Driven Control Loops

The primary mechanism of Intent-Driven Infrastructure is the continuous control loop, a concept borrowed from robotics and cybernetics. This cycle consists of four distinct phases: declaration, observation, evaluation, and decision. In the declaration phase, the user defines the desired state through a high-level policy. The observation phase involves the system constantly gathering telemetry data from every corner of the environment, from network latency to database query times. Unlike traditional monitoring, this data is not just for human consumption; it is the vital fuel for the machine-led decision-making process.

Once the data is gathered, the evaluation phase compares the real-world metrics against the declared intent. This is where the true power of the technology lies. Instead of triggering an alert because a single metric hit a threshold, the system evaluates the context of the entire environment. If the intent is “sub-100ms latency” and the current latency is 150ms, the system does not just scale up; it looks for the root cause. If it determines that the latency is caused by a cold-start issue in a serverless function, it might pre-warm resources rather than simply adding more instances. This systemic reasoning distinguishes intent-driven loops from simple, rigid automation scripts.

The final phase, the decision, is where the system takes action to close the gap between reality and intent. This could involve rebalancing workloads, adjusting network routes, or even rolling back a recent deployment that introduced a performance regression. Because this loop runs thousands of times per hour, the system can detect and resolve micro-fluctuations before they ever escalate into a user-facing outage. This creates a self-healing environment that requires significantly less manual oversight, allowing engineering teams to reclaim time previously spent on “toil” and routine maintenance.
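The four phases described above can be sketched as one pass of a loop. This is an illustrative outline only: the `LatencyIntent` type, the telemetry keys, and the 0.3 cold-start threshold are assumptions made for the example, and the "root cause" logic is a deliberately simple heuristic rather than real systemic reasoning.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class LatencyIntent:
    """Declaration: the outcome the operator wants."""
    max_p95_ms: float


def evaluate(intent: LatencyIntent, observed_p95_ms: float) -> float:
    """Evaluation: distance (in ms) from the intent; 0.0 means compliant."""
    return max(0.0, observed_p95_ms - intent.max_p95_ms)


def decide(gap_ms: float, cold_start_ratio: float) -> str:
    """Decision: choose an action using context, not just the raw threshold."""
    if gap_ms == 0.0:
        return "no_action"
    # Hypothetical heuristic: if many requests hit cold starts,
    # pre-warming is likely to help more than adding instances.
    if cold_start_ratio > 0.3:
        return "pre_warm"
    return "scale_out"


def run_cycle(intent: LatencyIntent, observe: Callable[[], dict]) -> str:
    """One pass of the loop: observe, evaluate, decide."""
    telemetry = observe()  # Observation: pull current metrics
    gap = evaluate(intent, telemetry["p95_ms"])
    return decide(gap, telemetry["cold_start_ratio"])
```

In practice `run_cycle` would be called on a timer or in response to telemetry events, with the returned action handed to an executor that is itself subject to policy checks.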

Policy as Code and Admission Control

Continuous enforcement is the cornerstone of safety in an intent-driven world. Policy as Code extends the principles of Infrastructure as Code by defining the “guardrails” within which the system must operate. This is not just about provisioning; it is about the ongoing governance of the environment. Admission control mechanisms act as the gatekeepers, ensuring that no change—whether initiated by a human or an automated process—violates the core security or operational policies of the organization. If an intent-driven loop decides to scale a cluster, the policy engine ensures that the new resources meet encryption standards and are placed in the correct security zones.
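An admission check of the kind described above can be reduced to a function that inspects a proposed resource and returns any violations. The sketch below is hypothetical: the resource fields, the zone names, and the two rules are placeholders for whatever an organization's real policies require.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ProposedResource:
    name: str
    encrypted: bool
    zone: str


# Hypothetical security zones that new resources may be placed in.
ALLOWED_ZONES = {"internal", "restricted"}


def admit(resource: ProposedResource) -> List[str]:
    """Return a list of policy violations; an empty list means admitted."""
    violations = []
    if not resource.encrypted:
        violations.append("encryption at rest is required")
    if resource.zone not in ALLOWED_ZONES:
        violations.append(f"zone '{resource.zone}' is not allowed")
    return violations
```

The important property is that the same check applies whether the change was requested by a human or proposed by an automated loop.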

This layer of defense is critical for preventing security drift. In traditional environments, security is often a “point-in-time” check during the audit or deployment phase. However, in a living cloud environment, a system that was secure at 9:00 AM could be vulnerable by 9:05 AM due to a configuration change or an unexpected interaction between services. Policy as Code provides a persistent watchtower that monitors for these deviations. If a resource becomes non-compliant, the system can automatically bring it back into alignment or isolate it from the network, providing a level of proactive security that manual teams find impossible to maintain at scale.

Furthermore, these policies provide the necessary context for autonomous decision-making. By encoding engineering judgment into the platform, organizations can ensure that the system does not make “technically correct but operationally disastrous” decisions. For example, a policy might forbid any infrastructure changes during a critical “Black Friday” sales window, even if the intent-driven loop detects a minor optimization opportunity. This synergy between autonomous action and human-defined constraints creates a balanced ecosystem where speed does not come at the expense of stability.
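A change-freeze policy like the one in the example can be expressed as a time-window check that runs before any automated action. The sketch below is illustrative: the function names and the dates in the usage example are invented, and a real policy engine would load its windows from configuration.

```python
from datetime import datetime, timezone
from typing import List, Tuple

Window = Tuple[datetime, datetime]  # (start inclusive, end exclusive)


def in_freeze(now: datetime, windows: List[Window]) -> bool:
    """True if `now` falls inside any declared freeze window."""
    return any(start <= now < end for start, end in windows)


def authorize_change(now: datetime, windows: List[Window], description: str) -> Tuple[bool, str]:
    """Approve or reject a proposed change, with a reason for the audit log."""
    if in_freeze(now, windows):
        return False, f"rejected '{description}': change freeze in effect"
    return True, f"approved '{description}'"


# Hypothetical freeze covering a sales weekend (dates are placeholders).
sales_freeze = [(
    datetime(2025, 11, 28, tzinfo=timezone.utc),
    datetime(2025, 12, 1, tzinfo=timezone.utc),
)]
```

Returning a reason alongside the verdict keeps the decision explainable, which matters once the caller is a machine rather than a person.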

Behavior-Centric Performance Modeling

The industry is currently witnessing a transition from tracking raw configuration metrics to monitoring actual system outcomes. In the past, success was measured by whether a server was “up” or how much CPU was being utilized. However, these metrics are often disconnected from the actual user experience. A server can be “up” and using 10% CPU while still returning 500-level errors to every customer. Behavior-centric performance modeling shifts the focus to what the system is actually doing for the user. It prioritizes indicators like latency, error rates, and availability as perceived by the end client.

By modeling the infrastructure around these behaviors, intent-driven systems can make more intelligent trade-offs. If the primary intent is user-perceived availability, the system might choose to route traffic through a more expensive network path if the primary path is experiencing packet loss. This type of value-based decision-making is only possible when the infrastructure is aware of its own performance characteristics. It moves the conversation away from “is the hardware working?” to “is the business service functioning as promised?”
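The routing trade-off described above can be sketched as a selection function that puts the availability intent first and cost second. The path attributes and the loss threshold here are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class NetworkPath:
    name: str
    packet_loss: float   # fraction of packets lost, 0.0 to 1.0
    cost_per_gb: float   # relative cost; units are illustrative


def choose_path(paths: List[NetworkPath], max_loss: float) -> NetworkPath:
    """Pick the cheapest path that satisfies the loss limit.

    If no path satisfies it, fall back to the least lossy path,
    since the intent prioritizes availability over cost.
    """
    if not paths:
        raise ValueError("at least one path is required")
    healthy = [p for p in paths if p.packet_loss <= max_loss]
    if healthy:
        return min(healthy, key=lambda p: p.cost_per_gb)
    return min(paths, key=lambda p: p.packet_loss)
```

Cost is only a tiebreaker among paths that already meet the intent, so a more expensive path wins whenever the cheaper one is degraded.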

This approach also simplifies the management of Service Level Objectives (SLOs). Instead of engineers manually correlating dozens of disparate dashboards to understand the health of a service, the intent-driven platform provides a unified view of performance relative to the SLO. It can predict when an SLO is at risk of being breached and take preemptive action to prevent it. This predictive capability is a significant advancement over reactive alerting, as it allows organizations to maintain a consistent level of service even during periods of extreme volatility or unforeseen technical challenges.
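One common way to estimate when an SLO is at risk is the error-budget burn rate used in site reliability engineering practice. The article does not name a specific method, so this is a swapped-in technique shown as a rough sketch. The function names are hypothetical, and the projection assumes the current error ratio stays constant.

```python
def burn_rate(observed_error_ratio: float, slo_target: float) -> float:
    """How fast the error budget is being consumed.

    1.0 means the budget lasts exactly the SLO window; 2.0 means it
    would be gone in half the window.
    """
    budget = 1.0 - slo_target  # allowed fraction of failed requests
    if budget <= 0:
        raise ValueError("slo_target must be below 1.0")
    return observed_error_ratio / budget


def hours_until_exhausted(remaining_budget_fraction: float,
                          window_hours: float,
                          rate: float) -> float:
    """Project the time left before the remaining budget is spent."""
    if rate <= 0:
        return float("inf")  # no errors, so the budget is never exhausted
    return remaining_budget_fraction * window_hours / rate
```

A platform could act pre-emptively when the projected time to exhaustion drops below a chosen horizon, instead of waiting for the breach itself.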

Emerging Trends in Autonomous Operations

The latest developments in the field are characterized by a move from simple “if-this-then-that” logic to true machine autonomy. Early automation was essentially a series of hard-coded scripts that could only handle anticipated scenarios. If an event occurred that was not in the script, the automation failed. Modern autonomous operations, however, utilize machine learning and advanced heuristics to exercise judgment based on the specific environmental context. These systems do not just follow a path; they navigate a landscape, adjusting their strategy based on the real-time feedback they receive from the infrastructure.

One notable trend is the integration of “causal reasoning” into autonomous systems. Unlike traditional AI, which might identify a correlation between two events, causal AI attempts to understand the “why” behind a system failure. By identifying the root cause of an issue, the autonomous agent can take more precise corrective action. For instance, if a database is slow, the system can determine if the cause is a poorly optimized query, a saturated network link, or a failing storage volume. This precision reduces the risk of “automated mistakes,” where a system tries to solve a software problem by throwing more hardware at it, which often only exacerbates the underlying issue.

Additionally, we are seeing the rise of “collaborative autonomy,” where multiple intent-driven agents work together to manage a complex ecosystem. In a large enterprise, there might be one agent managing the network, another managing the compute clusters, and a third managing security. These agents communicate and negotiate to ensure that their actions are aligned and do not conflict. This micro-management at scale allows for a level of optimization that is far beyond human capability, turning the infrastructure into a self-tuning machine that continuously optimizes for cost, performance, and security simultaneously.

Real-World Applications and Implementation Scenarios

The practical value of Intent-Driven Infrastructure is most visible during high-pressure events, such as traffic surges in Kubernetes clusters. During a sudden spike in user activity, traditional autoscalers often struggle to keep up, leading to “cascading failures” where overloaded services take down their neighbors. An intent-driven system handles this differently. It recognizes the surge and, based on the intent of “maintaining user experience,” might temporarily disable non-essential background tasks to free up resources for the primary traffic. It does this without human intervention, reacting in milliseconds rather than the minutes it would take for an engineer to diagnose the problem.
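The load-shedding behavior described above can be sketched as a selection over non-essential workloads. This is a hypothetical outline: the `Workload` fields and the largest-first ordering are assumptions, and a real scheduler would also weigh priorities and restart costs.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Workload:
    name: str
    essential: bool
    cpu_cores: float


def workloads_to_pause(workloads: List[Workload], cores_needed: float) -> List[str]:
    """Choose non-essential workloads to pause, largest first,
    until enough CPU has been freed for the primary traffic."""
    candidates = sorted(
        (w for w in workloads if not w.essential),
        key=lambda w: w.cpu_cores,
        reverse=True,
    )
    paused: List[str] = []
    freed = 0.0
    for workload in candidates:
        if freed >= cores_needed:
            break
        paused.append(workload.name)
        freed += workload.cpu_cores
    return paused
```

Essential workloads are never candidates, so the function can return fewer cores than requested; the caller would then need another remedy, such as scaling out.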

Another critical application is the management of failed deployments. Automated rollbacks are a standard feature of many modern platforms, but intent-driven systems take this a step further. Instead of just rolling back if a health check fails, the system monitors the actual behavior of the new code in production. If the new version meets its health checks but causes a subtle increase in database latency that threatens the overall system stability, the intent-driven orchestrator can initiate a “canary” rollback. It moves traffic back to the stable version and isolates the new version for analysis, effectively preventing a minor bug from turning into a major outage.
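A behavior-based rollback decision can be sketched by comparing latency samples from the stable version and the new version. This is an illustrative simplification: the 10% tolerance and the use of a plain median are assumptions, and a production system would more likely apply a proper statistical test over larger samples.

```python
from statistics import median
from typing import Sequence


def should_roll_back(baseline_ms: Sequence[float],
                     canary_ms: Sequence[float],
                     max_regression: float = 0.10) -> bool:
    """Return True if the canary's median latency exceeds the
    baseline's by more than the allowed fraction."""
    if not baseline_ms or not canary_ms:
        raise ValueError("both sample sets must be non-empty")
    limit = median(baseline_ms) * (1 + max_regression)
    return median(canary_ms) > limit
```

This catches the case described above, where the new version passes its health checks yet degrades a dependency's latency enough to threaten overall stability.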

Notable implementations in the financial services and telecommunications sectors have demonstrated the ability of these systems to resolve performance breaches autonomously. In one scenario, a global bank utilized an intent-driven network to automatically reroute high-frequency trading traffic after detecting a sub-millisecond degradation on a primary fiber link. The system identified the latency increase, evaluated alternative paths, and executed the switch long before any human operator could have even seen the alert. This level of responsiveness is no longer a luxury; in many industries, it is a fundamental requirement for remaining competitive in a digital-first economy.

Technical and Operational Challenges

Despite its clear benefits, Intent-Driven Infrastructure is not without significant challenges. The most immediate hurdle is the complexity of defining precise intent. Moving from “I want five servers” to “I want 99.99% availability with a 50ms response time” requires a much deeper understanding of the application and its dependencies. If the intent is poorly defined, the system may take actions that are technically correct according to the instruction but harmful to the overall business. This “alignment problem” requires a new set of skills for engineers, who must now think like systems architects rather than just script writers.

There is also the persistent risk of “automated mistakes.” When a system has the power to make significant changes to the environment without human approval, the potential for a small error to propagate at machine speed is high. An incorrectly configured control loop could, in theory, delete an entire production environment in an attempt to “rebalance” it. Mitigating this risk requires robust testing frameworks and “dry run” capabilities where engineers can simulate the system’s reaction to various scenarios before giving it full autonomy. Building trust in these autonomous systems is a slow process that requires transparent logging and clear explanations for every action taken by the machine.
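Two of the safeguards mentioned above, a dry-run mode and a limit on how much of the environment one plan may touch, can be sketched together. Every name here is hypothetical, and the 25% blast-radius limit is an arbitrary value chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


class PlanRejected(Exception):
    """Raised when a plan would touch too much of the environment."""


@dataclass(frozen=True)
class Action:
    kind: str                 # e.g. "restart", "delete"
    targets: Tuple[str, ...]  # resource names the action affects


def run_plan(actions: List[Action],
             total_resources: int,
             apply: Callable[[Action], None],
             dry_run: bool = True,
             max_blast_radius: float = 0.25) -> List[str]:
    """Validate a plan, then either describe it (dry run) or apply it."""
    if total_resources <= 0:
        raise ValueError("total_resources must be positive")
    touched = {t for a in actions for t in a.targets}
    if len(touched) / total_resources > max_blast_radius:
        raise PlanRejected(
            f"plan touches {len(touched)} of {total_resources} resources"
        )
    log = []
    for action in actions:
        summary = f"{action.kind} {', '.join(action.targets)}"
        if dry_run:
            log.append(f"[dry-run] would {summary}")
        else:
            apply(action)
            log.append(f"applied {summary}")
    return log
```

Defaulting `dry_run` to `True` means an operator must opt in to real changes. The blast-radius check runs in both modes, so a dry run also reveals which plans would be refused.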

Furthermore, the cultural shift required for engineering teams is often underestimated. For decades, the measure of a good sysadmin or DevOps engineer was their ability to “fight fires” and manually tune systems. Intent-Driven Infrastructure renders many of these traditional skills obsolete. It requires a move toward a “platform engineering” mindset, where the goal is to build the framework that allows the system to manage itself. Overcoming the resistance to this change and reducing “alert fatigue” through better systemic reasoning are ongoing development efforts that are as much about people and processes as they are about technology.

The Future of Self-Correcting Infrastructure

The horizon of infrastructure management is defined by the integration of increasingly sophisticated AI-driven decision-making. As the data gathered by intent-driven systems grows in volume and quality, the ability of these systems to predict and prevent failures will reach new heights. We are moving toward a state of “anticipatory infrastructure,” where the system can sense an emerging issue, such as a failing hard drive in a distant data center or a developing security threat on the dark web, and take corrective action before it ever impacts the production environment. This proactive stance will redefine the meaning of reliability.

The role of the engineer is also poised for a long-term transformation. Rather than being manual operators who respond to the whims of the infrastructure, engineers will become the designers of behavioral frameworks. They will spend their time defining the high-level goals, ethical constraints, and financial boundaries within which the autonomous systems operate. The engineer becomes a “governor” of the system, steering the digital estate toward strategic outcomes while the machine handles the tactical execution. This shift will allow for a massive increase in the scale of systems that a single human can effectively manage, unlocking new possibilities for global-scale applications.

In the long term, we can expect the emergence of “sovereign infrastructure,” where the intent-driven platform is capable of managing its own lifecycle, including negotiating for power, cooling, and hardware replacement in a decentralized marketplace. While this may sound like science fiction, the foundational components—intent, policy, and autonomy—are already being deployed in production today. The trajectory is clear: the less a human has to touch the infrastructure, the more resilient, efficient, and scalable that infrastructure becomes. The future is a world where the system does not just follow orders; it understands the mission.

Strategic Assessment and Summary

The evolution from Infrastructure as Code to Intent-Driven Infrastructure was a necessary response to the overwhelming complexity of modern cloud ecosystems. While IaC provided the initial foundation for automation, it has proven insufficient for managing the “Day 2” reality of living, breathing systems. The move toward intent-based models represents a strategic pivot from prescriptive management to outcome-based governance. It acknowledges that in a world of microservices and ephemeral resources, the only way to maintain stability is to build that stability directly into the fabric of the platform itself.

The current state of the technology is one of high potential tempered by significant implementation hurdles. Organizations that successfully navigate the shift to intent-driven models are seeing dramatic improvements in MTTR (Mean Time To Recovery) and overall system resilience. However, the requirement for precise intent definition and the need for a major cultural shift in engineering teams cannot be ignored. The verdict is clear: Intent-Driven Infrastructure is no longer a niche experimental concept; it is the vital foundation for any organization that intends to build and operate resilient, self-sustaining cloud architectures in an increasingly unpredictable world.

The transition toward intent-driven models represents a fundamental shift in how digital systems are perceived. By moving the focus from static configuration to dynamic behavior, organizations can reduce the burden of manual operations and improve the reliability of their services. This change necessitates a new approach to engineering, where the design of behavioral frameworks becomes more important than the writing of deployment scripts. The adoption of these autonomous systems is not merely a technical upgrade but a strategic move that allows businesses to operate at a scale and speed that was previously impossible. Looking forward, the continued refinement of these self-correcting architectures will be essential for managing the next generation of global digital infrastructure. Organizations must now focus on maturing their policy definitions and enhancing the observability feedback loops to ensure their autonomous systems remain aligned with ever-evolving business goals. This progress will solidify the role of infrastructure as a proactive partner in business success rather than a reactive cost center.