Vijay Raina is a seasoned expert in enterprise SaaS technology and software architecture, currently serving as a lead software engineer. With extensive experience in designing scalable systems and leading development teams, Vijay has moved beyond the initial skepticism surrounding AI tools to pioneer what he calls “Agentic Development.” By leveraging tools like Cursor and Claude, he has transformed his workflow from manual coding to a high-level orchestration of AI agents, focusing on strategic planning and rigorous architectural oversight.

The following discussion explores the shift from writing code to refining AI-generated plans, the dangers of subtle logical hallucinations, and the organizational bottlenecks created when development speed outpaces traditional review cycles.

When shifting from simple code suggestions to an agentic workflow, how does your focus move from writing code to refining plans? What specific steps do you take to catch missing scenarios or wrong assumptions during the initial brainstorming phase with the AI?

In this new workflow, my role has shifted from being a builder to acting as a lead architect and peer reviewer for an invisible team. I start by brainstorming the problem with the AI, but I don’t let it write a single line of code until we have a rock-solid plan. I read through the proposed steps carefully, looking specifically for edge cases the AI might have glossed over, such as how a new feature handles a network failure or a database timeout. It’s an iterative process of adding context and providing feedback until the plan matches the project requirements. Once the plan is refined, the development phase follows, often producing working code that ships with up to 85% unit test coverage as a standard part of the deliverable rather than a last-minute addition.

Subtle hallucinations in logic, such as an AI-inferred legacy fallback that wasn’t requested, can be more dangerous than made-up syntax. How do you maintain the domain knowledge needed to spot these errors, and what is your process for validating that the AI’s assumptions match the actual requirements?

The danger isn’t the AI making up a library; it’s the AI making a “reasonable” but incorrect logical leap based on common patterns. For instance, I recently worked on a feature flag implementation where the AI automatically added a fallback to a legacy flow during a network error, even though my requirements specifically forbade it for safety reasons. To catch these, I treat the AI’s plan as a formal proposal that requires a deep understanding of the existing system’s constraints. You have to maintain your edge as a domain expert because catching these subtle assumptions is actually more mentally demanding than writing the code yourself. I validate every “logical inference” against the specific business logic to ensure the AI hasn’t prioritized a generic coding pattern over a specific project necessity.
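To make the feature flag example concrete, here is a minimal sketch of a flag lookup that fails closed on a network error instead of quietly routing to the legacy flow. It is an illustration under stated assumptions, not the code from the project described: the names `resolve_flag`, `checkout`, `FlagServiceUnavailable`, and the `fetch` callable are all hypothetical.

```python
class FlagServiceUnavailable(Exception):
    """Raised when a flag cannot be resolved; callers must not guess a value."""


def resolve_flag(name, fetch):
    """Return the flag value, or fail closed if the flag service is unreachable.

    `fetch` is a hypothetical callable that queries a flag service and may
    raise ConnectionError or TimeoutError.
    """
    try:
        return bool(fetch(name))
    except (ConnectionError, TimeoutError) as exc:
        # Assumed requirement: no silent fallback to the legacy flow.
        # Surface the failure so the caller can stop or retry explicitly.
        raise FlagServiceUnavailable(name) from exc


def checkout(fetch):
    """Pick a flow based on the flag; the flag name is illustrative."""
    if resolve_flag("new_checkout", fetch):
        return "new flow"
    # Reached only when the flag is explicitly off, never on a network error.
    return "legacy flow"
```

The pattern an assistant might infer, catching the error and returning the legacy flow, would look just as tidy in a diff. The difference only shows up when the reviewer checks the `except` branch against the written requirement.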

If a five-day task is completed in one day, existing review cycles and time-zone delays often become the primary bottleneck. How can organizations redesign their delivery processes to match this increased pace? What specific changes should be made to traditional review windows to prevent frustration?

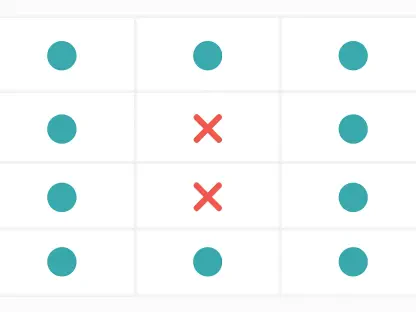

We are currently seeing a massive disconnect where a task that used to take five days is finished in one, yet it still sits in a 24-hour review queue designed for a slower era. Organizations need to recognize that the bottleneck has shifted from “writing” to “merging,” and they must start redesigning these windows to be more dynamic. This might mean moving away from rigid 24-hour global review policies and instead creating “fast-track” lanes for AI-assisted PRs that meet certain safety criteria. It is incredibly frustrating for an engineer to have a “ready” feature sitting idle for days simply because the organizational process hasn’t kept pace with the technology. We need to start having honest conversations about how to shorten these feedback loops without sacrificing the quality that human oversight provides.

AI tools enable massive code changes, yet keeping pull requests small and reviewable remains essential. What discipline is required to break down agentic outputs into manageable slices? How do you ensure that a “working” deliverable also meets the standard of being easy for a human to audit?

Even though the AI is capable of generating massive, multi-file changes in seconds, I exercise strict discipline by breaking the work into small, digestible slices before the AI starts. I plan my sessions around specific sub-tasks so that each output results in a Pull Request that a human colleague can actually understand and audit in a few minutes. This intentional decomposition prevents the “wall of code” effect that often leads to reviewers rubber-stamping changes they don’t fully comprehend. My goal is to ensure that while the AI did the heavy lifting, the final PR remains a “human-scale” deliverable that honors our team’s standard for maintainability and clarity.
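One way a team might enforce this kind of slicing is a simple size check run before a pull request is opened. The sketch below is illustrative only: the `FileChange` type, the `is_human_scale` function, and the thresholds of 10 files and 300 changed lines are assumptions chosen for the example, not figures from the interview.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileChange:
    path: str
    lines_changed: int  # added plus removed lines in this file


# Hypothetical limits; each team would tune these to its own review habits.
MAX_FILES = 10
MAX_LINES = 300


def is_human_scale(changes, max_files=MAX_FILES, max_lines=MAX_LINES):
    """Return True if the change set is small enough to audit in one sitting."""
    total_lines = sum(change.lines_changed for change in changes)
    return len(changes) <= max_files and total_lines <= max_lines
```

A check like this only measures size. Whether a slice is coherent enough to review on its own still depends on how the work was decomposed before the agent started.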

If we integrate agents directly into the review process to check test coverage and find conflicts, where should the line be drawn between automated assistance and human judgment? What foundational requirements, such as test suite reliability, must be in place before an organization can safely trust AI-assisted merges?

The line should be drawn at the distinction between “validation” and “decision-making.” Agents are fantastic at the objective parts of a review—identifying merge conflicts, checking if test coverage has dropped, or ensuring linting rules are met—but humans must remain the final decision-makers for architectural alignment. To even consider this level of automation, an organization must have a rock-solid foundation of comprehensive unit and integration tests that they trust implicitly. These tests serve as the “contract” that the agent validates; if the test suite is flaky or incomplete, you cannot safely allow an agent to assist in the merge process. Once that trust in the test suite is established, the agent becomes a powerful validator, freeing humans to focus on high-level design choices.
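The split between validation and decision-making can be sketched as a merge gate in which the agent's objective checks are necessary but never sufficient. This is a simplified illustration: `ValidationReport`, `agent_validates`, and `can_merge` are hypothetical names, and a real pipeline would populate the report from its own CI tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationReport:
    """Objective facts an agent could collect about a pull request."""
    has_merge_conflicts: bool
    coverage_before: float  # percent coverage on the target branch
    coverage_after: float   # percent coverage with the change applied
    lint_passed: bool
    tests_passed: bool


def agent_validates(report):
    """Validation: mechanical checks with no judgment involved."""
    return (
        not report.has_merge_conflicts
        and report.coverage_after >= report.coverage_before
        and report.lint_passed
        and report.tests_passed
    )


def can_merge(report, human_approved):
    """Decision: a human sign-off is always required on top of validation."""
    return agent_validates(report) and human_approved
```

The gate is only as trustworthy as the `tests_passed` signal feeding it, which is why a flaky or incomplete test suite undermines the whole arrangement.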

As implementation details become increasingly automated, how is the role of the software architect evolving to prioritize problem framing and system decomposition? What new skills must a lead engineer develop to manage a team of agents while maintaining a high-level vision of the system?

The architect’s role is actually expanding because the “thinking” part of the job has become more critical than the “doing” part. A lead engineer now needs to be an expert in problem framing—learning how to describe a complex system so precisely that an agent can execute it without losing the broader vision. You have to develop a sharp eye for decomposing systems into reviewable slices and a heightened “smell” for flawed assumptions hidden in an AI’s plan. It’s a shift toward more strategic thinking, where your value lies in your ability to maintain a clear picture of the “why” and “how” while the “what” is being generated by the tools at your disposal.

What is your forecast for agentic development?

I believe we are rapidly approaching a point where the “solo developer” will effectively operate with the throughput of a small team, but this will lead to a significant “process wall” in most companies. In the near future, the most successful organizations won’t just be the ones using the best AI tools, but the ones that have completely reimagined their CI/CD pipelines to include AI-assisted reviews and automated validation agents. We will see the role of the developer shift almost entirely to that of a “Reviewer-in-Chief,” where the primary skill is no longer syntax or library knowledge, but the ability to audit AI logic and maintain architectural integrity at high speeds. Eventually, the manual “pull request” as we know it will evolve into a continuous, agent-verified stream of improvements, provided we can build the trust and testing infrastructure to support it.