Developers building AI-powered applications frequently run into a fundamental architectural problem: traditional linear API routes struggle to manage multi-step, asynchronous, and branching logic. As artificial intelligence moves beyond simple text responses into complex media generation, multi-agent reasoning, and automated tool use, the underlying software must evolve from rigid scripts into flexible, state-driven systems. An AI request is rarely a single transaction; it is a sequence of steps that may include intent analysis, credit verification, external service calls, and recursive prompt refinement. Handling these steps with nested conditional statements leads to what is often called “spaghetti state,” where the flow of data becomes hard to trace and a change to one feature can inadvertently break several others. To solve this, engineering teams are increasingly turning to a “Shell and Node” architecture, which decouples state management from execution logic. The pattern keeps the application robust as user demands and AI capabilities grow in complexity, and it provides a structured framework for handling the high latency and unpredictable behavior of modern machine learning services.

The transition to a state-driven engine departs from the traditional request-response cycle in favor of a model where the application state is an explicit object that evolves through a series of specialized transitions. In this environment, the “Shell” provides the runtime and orchestration, while “Nodes” act as isolated, single-purpose workers, each focused on one task. By implementing a centralized “Router,” the developer gains a bird’s-eye view of every path a user might take through the system, from a basic chat interaction to a complex video generation pipeline. This clarity is essential for debugging and scaling, because it allows each step of the workflow to be monitored individually. A state-driven approach also improves the user experience by enabling real-time status updates through streaming protocols. When the engine knows exactly which node is executing and what the current state contains, it can report progress to the user, such as “analyzing intent” or “processing image,” turning a black-box operation into a visible one.

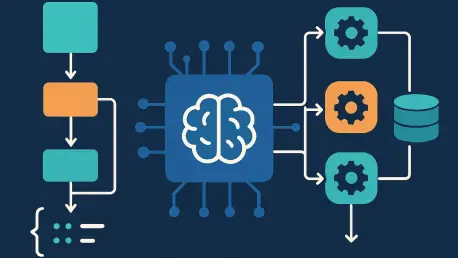

1. Architecture Overview: The Four Pillars of the Shell and Node Pattern

The “Shell and Node” architecture is built on four distinct components that work together much like the stations of an assembly line. At the heart of the system lies the State, a global context object or shared “state bus” that carries all necessary information from the start of the request to the final delivery. This object serves as the single source of truth, giving every part of the engine access to the data it needs without relying on global variables or hidden side effects. Operating on this state are the Nodes, specialized action units that each perform one isolated task, such as evaluating a prompt, checking a user’s credit balance, or calling an external image generation API. Because each node has a single responsibility, the system is modular: developers can swap out an LLM provider or update billing logic without reconfiguring the entire application.

Driving the movement of the state bus is the Pathfinder, or Router, a logical layer that examines the current state and determines which node should run next. Unlike a hardcoded sequence, the router makes decisions dynamically based on the evolving data in the state object, enabling branching logic that adapts to the user’s intent in real time. Finally, the Execution Loop, known as the Engine, drives the whole process by repeatedly calling the router and then the node it selects until the workflow reaches a terminal state. This conveyor-belt mechanism moves the state through the system in a controlled, predictable manner and orchestrates both synchronous and asynchronous tasks. Together, these four pillars form a resilient framework for managing the volatility of AI services, providing a stable base for features that require high reliability and sophisticated logic.

2. Structuring the State Bus: Managing the Shared Memory

Designing a robust State Bus requires a disciplined approach to data modeling, starting with the establishment of immutable inputs that remain constant throughout the entire lifecycle of a workflow. These inputs typically include critical identifiers such as the user UUID, session details, and the initial configuration parameters provided by the front-end, such as the selected AI model or desired aspect ratio for media generation. By treating these fields as read-only constants, the engine prevents accidental modifications that could lead to inconsistent behavior or security vulnerabilities. Surrounding these immutable inputs are phase-specific sub-objects, which act as dedicated storage areas for the outputs generated by each node. For example, an “evaluation” object might store the results of an intent analysis, while a “credit” object tracks the ID of a successful billing reservation. This compartmentalization ensures that the global state remains organized even as the workflow grows to include dozens of different steps and data types.
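The read-only inputs and phase sub-objects can be sketched in TypeScript. This is a minimal illustration rather than a prescribed schema: the field names (`userId`, `sessionId`, `model`, `aspectRatio`, `prompt`, `evaluation`, `credit`) are assumptions chosen to match the paragraph above, and `createState` is a hypothetical helper. `readonly` guards the inputs at compile time, and `Object.freeze` adds a shallow runtime guard.

```typescript
// Hypothetical state shape: immutable inputs plus optional phase sub-objects.
interface WorkflowInputs {
  readonly userId: string; // user UUID
  readonly sessionId: string;
  readonly model: string; // AI model chosen in the front-end
  readonly aspectRatio?: string; // e.g. "16:9" for media generation
  readonly prompt: string;
}

interface WorkflowState {
  readonly inputs: WorkflowInputs;
  // Phase-specific sub-objects: each node writes only its own.
  evaluation?: { intent: "chat" | "image" | "video" };
  credit?: { reservationId: string };
}

// Copy and freeze the inputs so writes are rejected at compile time
// (readonly) and at runtime (Object.freeze, shallow).
function createState(inputs: WorkflowInputs): WorkflowState {
  return { inputs: Object.freeze({ ...inputs }) };
}

const state = createState({
  userId: "3f2b9c1e-0000-4000-8000-000000000000",
  sessionId: "session-1",
  model: "image-model-x",
  aspectRatio: "16:9",
  prompt: "a lighthouse at dusk",
});
// state.inputs.model = "other"; // compile-time error: 'model' is read-only
```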

To maintain data integrity within this shared memory, developers use discriminated unions and completion flags to signal the engine’s progress. Discriminated unions let the state represent different kinds of data safely; for instance, a media payload can be explicitly tagged as an “image” or a “video,” each with its own required fields such as resolution or duration. With a type checker in place, this catches at compile time the class of bugs in which a node expects image data but receives video parameters. In addition, the presence of a specific sub-object serves as a natural completion flag, eliminating redundant boolean variables like “isFinished” or “isProcessed.” If the router sees that the “evaluation” object exists, it knows that intent analysis has already been performed. This design turns the state bus into a self-documenting record of the workflow, making it easier to trace exactly how a user’s request evolved from a raw string of text into a completed digital asset.
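A sketch of both ideas, assuming TypeScript and hypothetical type names (`MediaPayload`, `MediaState`): the `kind` tag narrows the payload so each branch can read only its own fields, and the `never` check in the `default` branch flags any new media kind that is not handled.

```typescript
// A media payload as a discriminated union: the `kind` tag determines
// which other fields exist, so image and video parameters cannot be mixed.
type MediaPayload =
  | { kind: "image"; width: number; height: number }
  | { kind: "video"; durationSeconds: number; fps: number };

interface MediaState {
  media?: MediaPayload;
  evaluation?: { intent: string }; // its presence means intent analysis ran
}

function describeMedia(payload: MediaPayload): string {
  switch (payload.kind) {
    case "image":
      return `image ${payload.width}x${payload.height}`;
    case "video":
      return `video ${payload.durationSeconds}s at ${payload.fps} fps`;
    default: {
      // Adding a new kind without handling it here becomes a compile error.
      const unhandled: never = payload;
      return unhandled;
    }
  }
}

// The sub-object doubles as the completion flag; no separate boolean needed.
function hasEvaluation(state: MediaState): boolean {
  return state.evaluation !== undefined;
}
```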

3. Implementing Workflow Nodes: Building Predictable Action Units

Workflow nodes follow a strict, predictable signature: they take the current state and an optional stream writer as their only inputs and return a partial state update. A node never mutates the shared state directly. Nodes that call a large language model or another external service cannot be strictly pure or deterministic, but confining those calls to the node’s own task, and passing external clients in as dependencies, keeps each node easy to unit test with stubs. When a node is invoked, it performs its task (querying a vector database, calling a model, or calculating the cost of a transaction) and returns only the fields it produced. The engine then merges this partial update into the global state bus, so the central record stays current without the node needing to understand the rest of the system. This isolation lets developers focus on the business logic of one task at a time, significantly reducing the cognitive load of maintaining a large, multi-faceted application.
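The node contract can be expressed as a function type. In this sketch (TypeScript; `WorkflowNode`, `StreamWriter`, and `Classifier` are hypothetical names), the evaluation node receives its LLM call as an injected function, so a test can substitute a stub and the node returns only the field it owns.

```typescript
type Intent = "chat" | "image" | "video";

interface EvalState {
  readonly prompt: string;
  evaluation?: { intent: Intent };
}

// Hypothetical stream writer for user-facing status events.
type StreamWriter = (event: { node: string; status: string }) => void;

// Node contract: read the state, return only the fields this node produced.
type WorkflowNode<S> = (state: Readonly<S>, write?: StreamWriter) => Promise<Partial<S>>;

// The external call (e.g. an LLM intent classifier) is injected, so a test
// can pass a stub instead of a real model client.
type Classifier = (prompt: string) => Promise<Intent>;

function makeEvaluateNode(classify: Classifier): WorkflowNode<EvalState> {
  return async (state, write) => {
    write?.({ node: "evaluate", status: "analyzing intent" });
    const intent = await classify(state.prompt);
    return { evaluation: { intent } };
  };
}
```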

Because failures are common and latency is high in AI services, nodes must also use atomic patterns for sensitive operations such as credit deduction or resource allocation. A “reserve and confirm” method ensures that a user’s credits are permanently deducted only after an expensive AI generation has completed successfully. If a node responsible for submitting a task to an external API fails, the engine can catch the error and trigger a cleanup operation that releases the reserved credits, so the user is not charged for a failed request. This level of transactional safety is often missing from simpler API designs but is essential for production applications that manage financial or computational resources. By building these safeguards directly into the node logic, the workflow engine can recover cleanly from the edge cases and timeouts that frequently affect distributed machine learning systems.
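A minimal sketch of reserve-and-confirm, assuming a hypothetical `CreditLedger` interface and an in-memory implementation in place of a real billing service. For brevity the reserve and confirm steps are wrapped in one helper, `withReservedCredits`; in a full workflow they would more likely live in separate nodes, with the reservation ID stored in the `credit` sub-object between them.

```typescript
// Hypothetical ledger: a reservation holds credits without charging them.
interface CreditLedger {
  reserve(userId: string, amount: number): string; // returns a reservation id
  confirm(reservationId: string): void; // make the charge permanent
  release(reservationId: string): void; // return held credits
}

class InMemoryLedger implements CreditLedger {
  private balances = new Map<string, number>();
  private holds = new Map<string, { userId: string; amount: number }>();
  private nextId = 1;

  deposit(userId: string, amount: number): void {
    this.balances.set(userId, this.available(userId) + amount);
  }

  available(userId: string): number {
    return this.balances.get(userId) ?? 0;
  }

  reserve(userId: string, amount: number): string {
    if (this.available(userId) < amount) throw new Error("insufficient credits");
    this.balances.set(userId, this.available(userId) - amount); // held, not spendable
    const id = `res-${this.nextId++}`;
    this.holds.set(id, { userId, amount });
    return id;
  }

  confirm(reservationId: string): void {
    this.holds.delete(reservationId); // the held amount is now spent for good
  }

  release(reservationId: string): void {
    const hold = this.holds.get(reservationId);
    if (!hold) return; // already confirmed or released: a second call is a no-op
    this.holds.delete(reservationId);
    this.deposit(hold.userId, hold.amount);
  }
}

// Reserve, run the expensive call, confirm on success, release on failure.
async function withReservedCredits<T>(
  ledger: CreditLedger,
  userId: string,
  cost: number,
  generate: () => Promise<T>,
): Promise<T> {
  const reservationId = ledger.reserve(userId, cost);
  try {
    const result = await generate();
    ledger.confirm(reservationId);
    return result;
  } catch (err) {
    ledger.release(reservationId);
    throw err;
  }
}
```

Making `release` a no-op for unknown IDs keeps the cleanup path safe to call more than once, which matters when both a node and the engine may attempt cleanup.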

4. Centralizing Routing Logic: The Intelligence of the Pathfinder

The router functions as the brain of the workflow engine, providing a single place where all pathfinding logic is defined and maintained. By inspecting the current contents of the state bus, the router determines the next step: proceeding to the next task, branching into a specialized error-handling routine, or terminating the process. One of its first responsibilities is to check for existing failures; if an error object is present in the state, the router immediately directs the engine to an “end” state to prevent further resource consumption. This short-circuiting is vital for efficiency, because it stops the engine from generating an image or processing data after a prerequisite step, such as a credit check or a content safety filter, has already failed. Consolidating failure handling into one visible decision point is much cleaner than scattering “try-catch” blocks throughout various API endpoints.
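The short-circuit rule can be sketched as a pure function over the state. The step names (`checkSafety`, `reserveCredits`, `generate`) and state fields are hypothetical; what matters is the ordering, with the error check first and each remaining check keyed on whether a sub-object exists.

```typescript
interface GuardedState {
  safety?: { allowed: boolean };
  credit?: { reservationId: string };
  result?: { url: string };
  error?: { node: string; message: string };
}

type Step = "checkSafety" | "reserveCredits" | "generate" | "end";

// Hypothetical router: failure checks come first, in one place, so no
// expensive node runs after a prerequisite has failed.
function route(state: GuardedState): Step {
  if (state.error) return "end"; // short-circuit on any recorded failure
  if (!state.safety) return "checkSafety";
  if (!state.safety.allowed) return "end"; // blocked by the content filter
  if (!state.credit) return "reserveCredits";
  if (!state.result) return "generate";
  return "end"; // all phases complete
}
```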

Beyond error management, the router uses the state’s structure to evaluate completion and branch by intent, letting the system handle a diverse range of user goals through a unified interface. For example, if the evaluation shows that a user wants to generate a video rather than receive a simple chat response, the router steers the state bus toward nodes designed for high-compute media tasks. By using the presence or absence of specific sub-objects as triggers, the router adapts to the evolving context of the conversation. If a user provides an ambiguous prompt, the router can detect that necessary information is missing from the “evaluation” object and route the state to a “clarify” node instead of a “submit” node. This centralized approach keeps the application’s behavior consistent and predictable and provides a clear map of the system’s capabilities that can be audited or extended as new features are introduced.
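Intent branching follows the same pattern. In this hypothetical sketch, the evaluation node is assumed to report a `missing` list of details it could not find in the prompt; a non-empty list sends the state to `clarify`, while media intents go to a submit node and then to `suspend` once a job exists.

```typescript
type Intent = "chat" | "image" | "video";

interface BranchState {
  // `missing` lists required details the evaluator could not find in the prompt.
  evaluation?: { intent: Intent; missing: string[] };
  clarification?: string; // question sent back to the user
  reply?: string;
  job?: { taskId: string };
}

type NextNode = "evaluate" | "clarify" | "chat" | "submitImage" | "submitVideo" | "suspend" | "end";

function routeByIntent(state: BranchState): NextNode {
  if (!state.evaluation) return "evaluate";
  const { intent, missing } = state.evaluation;
  if (missing.length > 0) {
    // Ambiguous prompt: ask the user rather than submit an expensive job.
    return state.clarification ? "end" : "clarify";
  }
  switch (intent) {
    case "chat":
      return state.reply ? "end" : "chat";
    case "image":
      return state.job ? "suspend" : "submitImage";
    case "video":
      return state.job ? "suspend" : "submitVideo";
  }
}
```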

5. Running the Engine: Powering the Execution Loop

The engine serves as the conveyor belt for the state-driven system, orchestrating the continuous cycle of routing and execution that moves a request from inception to completion. At the start of this loop, the engine references a central node registry—a mapping of string names to their corresponding node functions—which allows the engine to invoke the correct logic without needing to know the internal details of each task. As the main loop executes, it calls the router to identify the next step and then fetches and runs the associated node. This repetitive process continues until the router returns a terminal name, such as “end” or “suspend,” signaling that the workflow is either finished or waiting for external input. By maintaining this high-level loop, the engine abstracts away the complexities of control flow, providing a uniform way to execute everything from simple text generation to multi-day processing tasks.
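The loop itself is short. This TypeScript sketch uses two toy nodes and a hypothetical `router`; `maxSteps` is an added safeguard, not something the pattern requires, that stops a routing bug from looping forever.

```typescript
interface LoopState {
  prompt: string;
  evaluation?: { intent: string };
  reply?: string;
}

type NodeName = "evaluate" | "respond";
type Terminal = "end" | "suspend";
type LoopNode = (state: Readonly<LoopState>) => Promise<Partial<LoopState>>;

// Node registry: names mapped to node functions (toy nodes for illustration).
const registry: Record<NodeName, LoopNode> = {
  evaluate: async (s) => ({ evaluation: { intent: s.prompt.endsWith("?") ? "question" : "chat" } }),
  respond: async (s) => ({ reply: `[${s.evaluation?.intent ?? "unknown"}] ok` }),
};

function router(state: LoopState): NodeName | Terminal {
  if (!state.evaluation) return "evaluate";
  if (!state.reply) return "respond";
  return "end";
}

function isTerminal(step: NodeName | Terminal): step is Terminal {
  return step === "end" || step === "suspend";
}

// Route, look up the node, run it, merge its update; stop at a terminal name.
async function runEngine(initial: LoopState, maxSteps = 50) {
  let state = initial;
  const trace: NodeName[] = [];
  for (let i = 0; i < maxSteps; i++) {
    const next = router(state);
    if (isTerminal(next)) return { state, stoppedAt: next, trace };
    trace.push(next);
    const update = await registry[next](state);
    state = { ...state, ...update }; // shallow merge of the partial update
  }
  throw new Error(`workflow exceeded ${maxSteps} steps`);
}
```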

As each node completes, the engine merges its partial update into the global state bus with a shallow merge, which keeps updates cheap and simple. Nodes only need to return the fields they changed, while the engine maintains the overall structure of the state. Because the merge is shallow, a node that updates a sub-object such as “credit” must return the complete sub-object: the new value replaces the old one rather than being merged field by field. The engine is also responsible for global error management, acting as a final safety net for the workflow. If an unhandled exception occurs within a node, the engine catches it, records it in the state bus, and triggers any necessary cleanup routines, such as canceling credit holds or notifying the user through the stream writer. Centralizing this runtime management makes the application significantly more resilient to the unpredictable nature of AI models and third-party APIs, so the system fails gracefully and keeps its state consistent.
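The error path can be isolated in a wrapper around each node call. This is a sketch under assumptions: `runNodeSafely`, the `Cleanup` hook, `StatusWriter`, and the `error` shape are hypothetical, and a real engine would call this wrapper from its main loop.

```typescript
interface SafeState {
  credit?: { reservationId: string };
  result?: { url: string };
  error?: { node: string; message: string };
}

type SafeNode = (state: Readonly<SafeState>) => Promise<Partial<SafeState>>;
type StatusWriter = (message: string) => void;
// Hypothetical cleanup hook, e.g. releasing an outstanding credit hold.
type Cleanup = (state: Readonly<SafeState>) => Promise<void>;

// Run one node; on success shallow-merge its update, on failure record the
// error in the state, run cleanup, and notify the user.
async function runNodeSafely(
  nodeName: string,
  node: SafeNode,
  state: SafeState,
  cleanup: Cleanup,
  write?: StatusWriter,
): Promise<SafeState> {
  try {
    const update = await node(state);
    return { ...state, ...update };
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    const failed: SafeState = { ...state, error: { node: nodeName, message } };
    await cleanup(failed);
    write?.(`Step "${nodeName}" failed: ${message}`);
    return failed; // a router that checks `error` first will now return "end"
  }
}
```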

6. Managing Long-Running Tasks: The Two-Phase Execution Model

AI applications often run up against the limits of serverless environments, where execution timeouts are much shorter than the time required for complex image or video generation. To overcome this, the state-driven engine uses a two-phase execution model that lets a workflow pause and resume across separate function invocations. In the first phase, the engine runs until it reaches a node that submits a request to an external service, such as a video rendering farm or a heavy-duty model provider. Once the task is successfully submitted, the router returns “suspend,” and the current state bus is persisted to durable storage, such as a database or a key-value store, under a key the second phase can find again (for example, the external task ID). This allows the initial serverless function to terminate cleanly without waiting for the slow external process, saving compute costs and avoiding platform-enforced timeouts.
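A sketch of the suspend step, assuming a hypothetical `StateStore` interface with an in-memory stand-in for the database or key-value store. Keying the saved state by the external task ID is an assumption that lets the webhook handler find it later, and JSON serialization assumes the state holds only plain data.

```typescript
interface SuspendableState {
  workflowId: string;
  job?: { provider: string; taskId: string };
  output?: { url: string };
}

// Minimal async key-value interface (hypothetical); production code might
// back it with a database or a managed key-value store.
interface StateStore {
  put(key: string, value: string): Promise<void>;
  get(key: string): Promise<string | undefined>;
}

class MemoryStore implements StateStore {
  private data = new Map<string, string>();

  async put(key: string, value: string): Promise<void> {
    this.data.set(key, value);
  }

  async get(key: string): Promise<string | undefined> {
    return this.data.get(key);
  }
}

// Phase 1 ends here: after the submit node has recorded a job, persist the
// whole state under the external task ID and return the terminal name.
async function suspendWorkflow(state: SuspendableState, store: StateStore): Promise<"suspend"> {
  if (!state.job) throw new Error("cannot suspend: no external job was submitted");
  await store.put(`workflow:${state.job.taskId}`, JSON.stringify(state));
  return "suspend";
}
```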

The second phase is triggered by a webhook notification from the external service signaling that the long-running task has completed. On receiving this notification, a new instance of the engine is initialized, the suspended state is reloaded from storage, and the results of the external task are injected into the state bus. The router then identifies the next step, such as uploading the generated media to a content delivery network or notifying the user, and the engine resumes the workflow from the exact point where it paused. From the user’s perspective the workflow is continuous, even though the processing may have taken several minutes and spanned multiple distributed systems. This architecture is particularly effective for 2026-era applications that combine rapid LLM reasoning with high-compute generative tasks, providing a scalable way to manage the varying latencies of the modern AI stack.
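The resume side can be sketched the same way. `WebhookPayload`, `load`, and `upload` are hypothetical: the payload shape depends on the external provider, and `upload` stands in for a CDN upload. The sketch omits webhook authentication and handling of duplicate deliveries.

```typescript
interface ResumableState {
  workflowId: string;
  job?: { taskId: string };
  output?: { url: string };
  delivered?: { cdnUrl: string };
  error?: string;
}

// Hypothetical webhook body sent by the external generation service.
interface WebhookPayload {
  taskId: string;
  status: "succeeded" | "failed";
  url?: string;
}

function routeAfterResume(state: ResumableState): "upload" | "end" {
  if (state.error) return "end";
  if (state.output && !state.delivered) return "upload";
  return "end";
}

// Phase 2: reload the suspended state, inject the external result, and let the
// router continue. `load` and `upload` are injected, hypothetical dependencies.
async function resumeFromWebhook(
  payload: WebhookPayload,
  load: (key: string) => Promise<string | undefined>,
  upload: (sourceUrl: string) => Promise<string>,
): Promise<ResumableState> {
  const raw = await load(`workflow:${payload.taskId}`);
  if (raw === undefined) throw new Error(`no suspended workflow for task ${payload.taskId}`);
  let state: ResumableState = JSON.parse(raw);
  state =
    payload.status === "succeeded" && payload.url
      ? { ...state, output: { url: payload.url } }
      : { ...state, error: `external task ${payload.taskId} failed` };
  while (routeAfterResume(state) === "upload" && state.output) {
    const cdnUrl = await upload(state.output.url);
    state = { ...state, delivered: { cdnUrl } };
  }
  return state;
}
```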

7. Expanding the System: Scalability and Future-Proofing

The modular nature of the “Shell and Node” architecture makes expanding the system’s capabilities a straightforward, low-risk process, which matters in the fast-moving AI industry. When a team needs to introduce a new feature, such as a video style transfer tool or a more advanced intent classifier, it follows a simple three-step process: create the functionality, update the pathfinder, and link the node in the registry. First, a developer writes a new node function that encapsulates the logic for the feature and adheres to the standard contract of state in, partial update out. Because this node is isolated, it can be developed and tested independently of the rest of the engine, reducing the likelihood of regressions in existing workflows. This separation of concerns is a major advantage over traditional monolithic architectures, where every change requires a full regression test of the entire application.

Once the new node is ready, the developer updates the router with the new paths, so the engine recognizes when the feature should be engaged based on the user’s state. Finally, the new function is added to the engine’s node map, making it available for execution. This structured approach keeps the codebase clean and organized, even as the application grows to support hundreds of AI-driven features. In the mid-2020s, when AI models and user requirements changed with remarkable speed, this architecture proved to be a critical asset for engineering teams. The ability to iterate quickly without compromising system stability allowed developers to build more ambitious, multi-modal applications that pushed the boundaries of generative technology. By focusing on a state-driven foundation, these teams built resilient software that could adapt to the future of AI without a complete architectural overhaul.
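The three steps can be sketched in one place. The style-transfer node, its output URL, and the names `AppState`, `AppNode`, `route`, and `nodes` are hypothetical; the useful detail is typing the registry as `Record<NodeName, AppNode>`, so adding a name to the router’s union without registering a node fails to compile.

```typescript
interface AppState {
  sourceUrl?: string;
  evaluation?: { intent: "chat" | "styleTransfer" };
  reply?: string;
  styled?: { url: string };
}

type AppNode = (state: Readonly<AppState>) => Promise<Partial<AppState>>;

// Step 1: the new node, written and testable on its own.
// The style-transfer logic is a placeholder that only rewrites the URL.
const styleTransfer: AppNode = async (state) => ({
  styled: { url: `${state.sourceUrl ?? ""}?style=watercolor` },
});

// Step 2: teach the router when to engage the new node.
type NodeName = "chat" | "styleTransfer";
function route(state: AppState): NodeName | "end" {
  if (state.evaluation?.intent === "styleTransfer" && !state.styled) return "styleTransfer";
  if (state.evaluation?.intent === "chat" && !state.reply) return "chat";
  return "end";
}

// Step 3: link it in the registry. Typing the map as Record<NodeName, AppNode>
// makes a forgotten registration a compile-time error.
const nodes: Record<NodeName, AppNode> = {
  chat: async () => ({ reply: "Hello!" }),
  styleTransfer,
};
```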

The implementation of a state-driven workflow engine transformed the way developers approached AI application design, shifting the focus from linear scripts to resilient, adaptable systems. By adopting the “Shell and Node” pattern, organizations successfully navigated the challenges of high latency, unpredictable model behavior, and the need for complex branching logic. This architectural transition enabled the creation of sophisticated media generation platforms that maintained high reliability while managing millions of concurrent user requests. The decoupling of state from execution ensured that every interaction was traceable, testable, and scalable, providing a foundation for the next generation of intelligent software. Ultimately, the move toward state-driven architectures was a defining moment for the industry, as it provided the structural integrity needed to turn experimental AI features into robust, production-ready services. These principles remain a cornerstone for any developer seeking to build powerful, multi-step AI workflows that are as reliable as they are innovative.