The transition from a successful laboratory demonstration to a fully functional production environment often reveals a jarring disconnect between theoretical potential and operational reality. While standard Retrieval-Augmented Generation systems appear impressive during initial testing, they frequently buckle under the weight of enterprise-scale data demands and complex user inquiries. In 2026, the industry has reached a turning point where the simple “retrieve and respond” loop is no longer sufficient for high-stakes applications. Developers are discovering that adding more raw data or upgrading to a larger model rarely fixes the underlying instability of a naive architecture. Instead, the solution lies in a structural shift toward a dedicated control plane. The Model Context Protocol (MCP) has emerged as this essential architectural layer, providing the missing link that transforms fragile, unpredictable pipelines into robust reasoning engines capable of handling the intricacies of modern business intelligence and customer interaction at scale.

The Limitations of Standard RAG

Structural Fragility: Why Simple Pipelines Fail in Production

Naive RAG implementations typically follow a rigid linear path that prioritizes mathematical similarity over actual thematic relevance, creating a fundamental gap in intelligence. When a user submits a query, these systems use vector embeddings to find the most similar text chunks, but “similar” often translates to shared keywords rather than shared intent. This leads to a scenario where the Large Language Model is flooded with technically related but practically useless information, resulting in responses that are factually grounded in the wrong context. Furthermore, the phenomenon known as “lost in the middle” occurs when excessive context is stuffed into a prompt, causing the model to ignore the most critical data points located in the center of the text block. As a result, operational costs and latency skyrocket while the quality of the output remains stubbornly inconsistent, proving that simple vector search is an insufficient foundation for production-grade reliability in 2026.

Standardized pipelines also struggle to adapt to the dynamic nature of multi-hop reasoning or the necessity of integrating real-time data from external APIs. Most legacy RAG setups operate within a closed loop, assuming that all necessary information is already present within the pre-indexed vector database. However, modern enterprise queries often require the model to synthesize information from multiple sources, perform calculations, or verify facts against live financial or logistical systems. A standard pipeline lacks the conditional execution logic to pause, fetch additional data, or clarify a user’s intent. This rigidity forces developers to write increasingly complex and brittle “wrapper” code around the model, which becomes impossible to maintain as the system scales. Without a centralized orchestration layer, the entire architecture becomes a series of disconnected patches that fail to address the core need for flexible, intent-driven information retrieval and processing.

Security and Transparency: The Risks of Unmanaged Context

Beyond the immediate issues of efficiency and accuracy, standard RAG configurations often operate in a dangerous vacuum regarding security and oversight. When an LLM is granted direct access to retrieved data without a mediating layer, it becomes vulnerable to sophisticated prompt injection attacks and data poisoning. In many naive setups, there is no mechanism to verify the sensitivity of the information being pulled into the context window, leading to accidental leakage of proprietary or personal data. Furthermore, the “black box” nature of these systems makes it nearly impossible for engineers to audit why a specific piece of information was chosen or how the final prompt was constructed. In the current regulatory landscape of 2026, this lack of transparency is a major liability, as organizations are increasingly required to provide clear data lineage and explainable AI outputs for every automated decision or customer-facing interaction.

The absence of a centralized control plane also means that security policies must be hard-coded into every individual application, creating a fragmented and inconsistent defense posture. If a vulnerability is discovered or a new data privacy regulation is enacted, developers must manually update every retrieval script across the entire organization. This decentralized approach is prone to human error and leaves significant gaps in the enterprise security perimeter. Without a way to sanitize inputs and outputs in real-time or to enforce a global “AI firewall,” organizations risk exposing their most valuable intellectual property to the model’s training memory or unauthorized users. The move toward a more structured protocol is not merely a technical preference but a strategic necessity to ensure that AI systems remain compliant, secure, and fully auditable as they become more deeply integrated into critical business infrastructure.

Implementing the Model Context Protocol

The Context Plane: Establishing a Control Plane for AI Reasoning

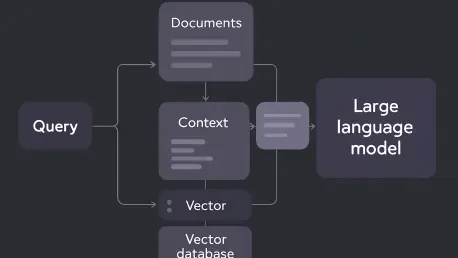

The Model Context Protocol functions as a sophisticated orchestration layer that effectively decouples the retrieval logic from the core application code, acting as the “Kubernetes for context.” By sitting between the data sources and the Large Language Model, the MCP server manages the entire lifecycle of context selection, ranking, and transformation. This allows the application to remain lightweight while the protocol handles the heavy lifting of determining which information is truly necessary for a given task. Instead of a simple function call to a database, the application communicates its high-level goal to the MCP server, which then utilizes various strategies—such as semantic search, keyword filtering, and cross-encoder re-ranking—to curate the most precise signal possible. This architectural shift ensures that the model receives only high-fidelity information, which directly translates to higher accuracy and lower token consumption.

Implementing an MCP-driven architecture enables a move toward intent-driven orchestration, where the system can dynamically adjust its retrieval strategy based on the complexity of the user’s request. For instance, a simple factual question might trigger a straightforward vector search, while a complex analytical query could prompt the MCP server to initiate a multi-stage process involving several different data repositories. This level of flexibility is achieved through a standardized interface that allows different models and data tools to communicate seamlessly. In 2026, this interoperability is crucial for avoiding vendor lock-in, as it allows organizations to swap out their underlying LLMs or vector databases without having to rewrite their entire retrieval logic. The protocol acts as a universal translator, ensuring that the right context is delivered to the right model in the right format, regardless of the underlying technology stack.

Operational Resilience: Managing Tools and Memory Through Protocols

A primary advantage of the MCP server is its ability to act as a centralized router for external tools and long-term memory management. In a production environment, an AI must often interact with diverse systems—ranging from CRM databases to real-time weather sensors—to provide a complete answer. The MCP server manages these tool definitions and access permissions, ensuring that the model has the right capabilities at its disposal without overcomplicating the prompt. It also handles the persistence of context across multiple turns of a conversation, allowing for a more natural and coherent user experience. By offloading these management tasks to a dedicated protocol, developers can implement sophisticated “memory” features that help the AI remember user preferences or previous session data, all while maintaining strict controls over how that information is stored and accessed.

This centralized management also facilitates the implementation of advanced AI firewalls and policy enforcement mechanisms that operate in real-time. The MCP server can inspect every piece of data entering the context window and every response generated by the model, applying automated filters to catch potential security threats or non-compliant content. This ensures that the AI’s behavior remains within the bounds of organizational policy, providing a layer of protection that is impossible to achieve with standard RAG pipelines. Moreover, because all context transitions pass through this single point, engineers gain unprecedented observability into the system’s reasoning process. They can track the lineage of a specific data point from the original source to the final generated response, making it significantly easier to debug hallucinations, optimize performance, and demonstrate compliance to internal and external auditors.

Strategic Gains for Enterprise AI

Economic Efficiency: Measuring Success in Production-Grade Systems

Adopting a protocol-driven approach to RAG delivers immediate and measurable improvements to the economic viability of AI projects. In 2026, the cost of tokens remains a significant concern for large-scale deployments, but organizations utilizing MCP report cost reductions of up to 60% through aggressive context compression and deduplication. By ensuring that only the most relevant text chunks are sent to the model, the protocol minimizes the “noise” that often leads to wasted processing power. Furthermore, smart caching mechanisms at the protocol level allow the system to reuse previously retrieved and processed context for similar queries, drastically reducing the number of expensive calls to both the vector database and the LLM. This leads to a much more sustainable financial model for AI initiatives, allowing companies to scale their services without a linear increase in operational expenditure.

Performance gains are equally impressive, as optimized retrieval pipelines managed by an MCP server can triple response speeds compared to unmanaged RAG systems. By parallelizing data fetching and using more efficient ranking algorithms, the protocol reduces the latency that often frustrates end-users. This speed is not just a matter of convenience; in many enterprise contexts, such as real-time customer support or financial trading, the difference of a few seconds can be the determining factor in the success of the application. The reduction in hallucinations is another critical metric, as the high-fidelity context provided by the MCP server ensures that the model is far less likely to “guess” when information is missing. This increased reliability builds user trust and allows organizations to deploy AI in more sensitive areas where the margin for error is minimal, ultimately driving higher adoption rates across the board.

Architectural Evolution: The Future of Reasoning Context Platforms

As the technology landscape continues to mature in 2026, the industry is rapidly moving toward the concept of “Reasoning Context Platforms,” or RAG++. This evolution represents a fundamental shift in focus from the data itself to the sophisticated management of how that data interacts with artificial intelligence. Building a robust MCP layer allows developers to move beyond the limitations of simple prompt engineering and start creating truly autonomous agents that can navigate complex information environments. The protocol provides the necessary framework for multi-model routing, where different queries can be directed to the most cost-effective or high-performing model for that specific task. This flexibility ensures that the AI stack remains agile and capable of incorporating the latest advancements in machine learning without requiring a total overhaul of the existing infrastructure.

The implementation of a dedicated context protocol was a pivotal step in maturing AI from a experimental novelty into a reliable component of the global technical stack. By providing the essential framework for observability, security, and scalability, the Model Context Protocol addressed the core vulnerabilities that previously hindered the production-grade deployment of RAG systems. Organizations that transitioned to this model found themselves better positioned to handle the complexities of data-driven decision-making, while those that clung to naive pipelines struggled with rising costs and declining accuracy. Ultimately, the shift toward standardized context orchestration allowed for the creation of more transparent, efficient, and intelligent systems. These advancements paved the way for a more integrated and secure future where AI could be trusted to handle the most critical functions of modern society with precision and accountability.