In the high-velocity landscape of modern distributed systems, a single corrupted message can silently paralyze an entire data pipeline and leave organizations grappling with cascading failures that compromise operational integrity. While Apache Kafka provides the robust plumbing for event streaming, the responsibility of ensuring that these events are processed reliably falls squarely on the shoulders of the consumer application. Within the ecosystem of Spring Boot 3, developers are finding that the gap between a basic “hello world” listener and a production-hardened consumer is filled with architectural nuances that determine whether a system thrives or buckles under pressure. Resilience is not merely a feature to be toggled on; it is an engineered state that requires a deep understanding of how messages flow, fail, and recover.

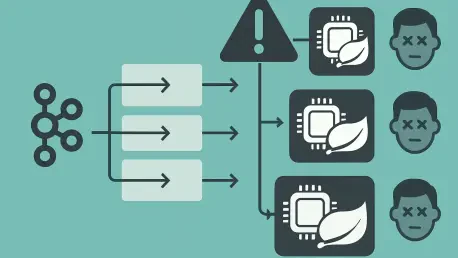

The modern enterprise depends on data that is both immediate and accurate, yet the distributed nature of these systems makes partial failure a routine occurrence. When a service experiences a momentary flicker or a database undergoes a brief maintenance cycle, a naive consumer might crash or, worse, stop processing entirely while holding onto a failing record. The most stubborn variant is the “poison pill”: a record that can never be processed successfully, no matter how often it is retried, and that therefore blocks everything behind it. Either case represents a significant risk to business continuity. In such environments, building fault-tolerant consumers in Spring Boot 3 becomes a mandatory exercise in defensive programming, ensuring that the pipeline remains fluid even when individual records prove problematic.

The High Stakes of Event-Driven Resilience

In a distributed architecture, the failure of a single microservice can ripple across the network, but an unhandled Kafka message can act like a digital roadblock that halts progress for all subsequent data. Because Kafka partitions are ordered sequences, a consumer that cannot successfully process the record at the front of the line will often refuse to move to the next. This creates a mounting lag that quickly escalates from a minor technical glitch to a full-scale operational crisis. The default “at-least-once” delivery guarantee provided by Kafka ensures that data is not lost, but it simultaneously shifts the burden of reliability onto the consumer, which must handle duplicates and retries without corrupting the underlying state of the application.

Spring Boot 3 provides a sophisticated framework for managing these challenges, but the implementation requires a deliberate, multi-layered defensive strategy. It is no longer sufficient to wrap logic in a simple try-catch block; developers must account for network volatility, malformed data, and the intricate dance of partition rebalancing. The stakes are particularly high for financial transactions or inventory management systems where a missed or double-processed event can lead to significant fiscal discrepancies. Consequently, resilience becomes a measure of how well a system survives the “unhappy path” without requiring manual intervention from an overworked engineering team.

Furthermore, the complexity of these systems is compounded by the fact that failures are rarely binary. A system might be “partially” failed, where it can reach some services but not others, or it might be experiencing extreme latency that mimics a total outage. Building for resilience means designing a consumer that can intelligently distinguish between these states and react accordingly. By utilizing the mature tools within the Spring Kafka ecosystem, teams can transform their consumers from fragile endpoints into self-healing components that preserve data integrity across the entire event-driven lifecycle.

The Architecture of Failure in Distributed Streaming

Understanding why Kafka consumers fail is the foundational step toward fortifying them against the inherent unpredictability of production environments. One of the most common points of confusion involves the reality of at-least-once delivery, where Kafka prioritizes durability over strict uniqueness. This means that during periods of network instability or during a consumer group rebalance, the same record might be handed to a consumer multiple times. If the consumer is not prepared for this repetition, it may execute side effects—such as sending an email or updating a ledger—twice, leading to inconsistent application states.

Beyond delivery mechanics, the distinction between transient and permanent roadblocks is vital for efficient resource management. A transient failure, such as a 503 Service Unavailable or 504 Gateway Timeout response from an external API, suggests that the system might recover if given a few seconds of breathing room. In contrast, a permanent failure, such as a malformed JSON payload that fails schema validation, will never succeed regardless of how many times it is retried. Treating these two scenarios identically wastes CPU cycles and network bandwidth on futile retry loops for data that is fundamentally broken.
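
The distinction can be made explicit in code. The following is a minimal sketch in plain Java, not part of Spring Kafka: the class name `FailureClassifier` and the choice of which exception types count as permanent are assumptions made for illustration. Unknown exceptions default to transient, on the reasoning that an unnecessary retry is cheaper than dropping a recoverable record.

```java
import java.util.Set;

// Hypothetical helper: decides whether a failure is worth retrying.
class FailureClassifier {

    enum Kind { TRANSIENT, PERMANENT }

    // Assumption: these types signal data that will never become valid.
    private static final Set<Class<? extends Throwable>> PERMANENT_TYPES = Set.of(
            IllegalArgumentException.class,   // includes NumberFormatException
            ClassCastException.class,
            NullPointerException.class);

    static Kind classify(Throwable failure) {
        // Walk the cause chain, because frameworks often wrap the listener's exception.
        for (Throwable t = failure; t != null; t = t.getCause()) {
            for (Class<? extends Throwable> type : PERMANENT_TYPES) {
                if (type.isInstance(t)) {
                    return Kind.PERMANENT;
                }
            }
        }
        // Anything not known to be permanent is treated as retryable.
        return Kind.TRANSIENT;
    }
}
```

A timeout from a downstream call would be classified as `TRANSIENT` and retried, while a payload that fails parsing would be classified as `PERMANENT` and sent straight to a recovery path.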

The “head-of-line blocking” problem represents perhaps the most significant architectural threat to streaming stability. Without a robust error-handling strategy, a single failing message prevents the consumer from committing its offset back to the Kafka broker. Because the committed offset never advances, the consumer is handed the same failed message again after every re-seek, restart, or rebalance, effectively freezing processing for all subsequent messages in that partition. This results in an ever-growing consumer lag that can take hours or days to resolve if not addressed through automated recovery patterns like retries and dead letter routing.

Core Pillars of Resilient Consumer Design

To move beyond basic connectivity, developers must implement a tripartite framework that handles errors at different stages of the message lifecycle, starting with advanced retry logic. Spring Kafka 3.x utilizes the DefaultErrorHandler to manage immediate volatility, allowing developers to move away from brittle, localized error handling toward global, declarative retry policies. This component intercepts exceptions thrown by the listener and orchestrates a sequence of re-attempts based on pre-defined criteria. By centralizing this logic, organizations can ensure consistent behavior across dozens of microservices, simplifying both the development and the auditing process.

Strategic backoff and exception filtering provide the necessary nuance to these retry attempts. Implementing a FixedBackOff or an exponential backoff strategy gives downstream systems the time they need to recover from heavy load or temporary outages. Moreover, by identifying “non-retriable” exceptions—such as MethodArgumentNotValidException or IllegalArgumentException—the system can bypass useless retries. When the framework encounters an error it knows cannot be fixed by trying again, it can immediately divert the message to a recovery path, preserving the health of the consumer and maintaining the throughput of the partition.
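
To make the arithmetic of an exponential backoff concrete, the sketch below computes the delay before each retry in plain Java. It is an illustration, not Spring's implementation: the class name `BackoffSchedule` and the parameter names are assumptions, chosen to resemble the usual initial interval, multiplier, and maximum interval settings.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper that lists the wait time before each retry attempt.
class BackoffSchedule {

    static List<Long> exponentialDelays(long initialMs, double multiplier, long maxMs, int maxRetries) {
        List<Long> delays = new ArrayList<>();
        double next = initialMs;
        for (int attempt = 0; attempt < maxRetries; attempt++) {
            // Cap each delay so a long outage does not produce unbounded waits.
            delays.add(Math.min((long) next, maxMs));
            next = next * multiplier;
        }
        return delays;
    }
}
```

Calling `BackoffSchedule.exponentialDelays(1000, 2.0, 10_000, 5)` yields delays of 1000, 2000, 4000, 8000, and 10000 milliseconds: each wait doubles until it reaches the cap. A fixed backoff is the special case where the multiplier is 1.0.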

Dead Letter Queues (DLQs) serve as the ultimate safety valve in a resilient architecture. When all retry attempts are exhausted, the DeadLetterPublishingRecoverer takes the failing record and publishes it to a separate dead letter topic (DLT), conventionally named after the original topic with a suffix such as .DLT; the exact default suffix depends on the Spring Kafka version, so it is worth setting explicitly. This preserves the message along with its failure metadata, allowing the main traffic flow to continue without interruption. The “poison pill” is sidelined for later forensic analysis or manual correction instead of causing a system-wide standstill. The DLQ essentially acts as a quarantine zone, keeping the rest of the ecosystem safe from the contagion of a single bad event.
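
A configuration along these lines might look like the following sketch. It assumes Spring for Apache Kafka is on the classpath and that Spring Boot provides a `KafkaTemplate` bean; the class name `DeadLetterConfig`, the retry count, the interval, and the explicit `.DLT` suffix are illustrative choices rather than required values.

```java
import org.apache.kafka.common.TopicPartition;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.kafka.listener.DeadLetterPublishingRecoverer;
import org.springframework.kafka.listener.DefaultErrorHandler;
import org.springframework.util.backoff.FixedBackOff;

@Configuration
public class DeadLetterConfig {

    @Bean
    public DefaultErrorHandler errorHandler(KafkaTemplate<?, ?> template) {
        // Route exhausted records to "<topic>.DLT", keeping the original partition.
        // Assumption: the dead letter topic exists with at least as many partitions
        // as the source topic, and the template's serializers can handle the payload.
        DeadLetterPublishingRecoverer recoverer = new DeadLetterPublishingRecoverer(
                template,
                (record, ex) -> new TopicPartition(record.topic() + ".DLT", record.partition()));

        // Retry up to 3 times, 1 second apart, before handing over to the recoverer.
        DefaultErrorHandler handler = new DefaultErrorHandler(recoverer, new FixedBackOff(1000L, 3L));

        // Skip retries entirely for failures that cannot succeed on a second attempt.
        handler.addNotRetryableExceptions(IllegalArgumentException.class);
        return handler;
    }
}
```

Naming the destination in the resolver, rather than relying on the library default, keeps the topic name stable across library upgrades.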

Expert Perspectives on Data Integrity

Industry consensus emphasizes that technical connectivity is only half the battle; maintaining a consistent application state is where true fault tolerance is proven. Experts highlight the diagnostic value of DLT headers as a primary tool for reducing the Mean Time to Recovery (MTTR). When Spring Kafka's DeadLetterPublishingRecoverer publishes a record to a dead letter topic, it does not just copy the raw bytes; it adds headers containing the original topic, partition, and offset, along with the exception class, message, and full stack trace. This audit trail allows developers to pinpoint exactly why a message failed without having to scrape through massive log files, turning a potential disaster into a manageable debugging task.
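
The sketch below shows how such headers could be decoded once a dead letter record has been read. It is written in plain Java against a simple map of header names to raw bytes. The header names and the binary layout (UTF-8 text for the topic and message, a 4-byte integer for the partition, an 8-byte long for the offset) reflect how Spring Kafka is understood to write them and should be treated as assumptions to verify against the `KafkaHeaders` constants in the version in use. The `DltHeaderDecoder` class itself is hypothetical.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.Map;

// Hypothetical helper for inspecting dead letter records.
class DltHeaderDecoder {

    // Assumed header names; compare with KafkaHeaders.DLT_* before relying on them.
    static final String ORIGINAL_TOPIC = "kafka_dlt-original-topic";
    static final String ORIGINAL_PARTITION = "kafka_dlt-original-partition";
    static final String ORIGINAL_OFFSET = "kafka_dlt-original-offset";
    static final String EXCEPTION_MESSAGE = "kafka_dlt-exception-message";

    record FailureInfo(String topic, int partition, long offset, String exceptionMessage) {}

    static FailureInfo decode(Map<String, byte[]> headers) {
        String topic = new String(required(headers, ORIGINAL_TOPIC), StandardCharsets.UTF_8);
        int partition = ByteBuffer.wrap(required(headers, ORIGINAL_PARTITION)).getInt();
        long offset = ByteBuffer.wrap(required(headers, ORIGINAL_OFFSET)).getLong();
        String message = new String(required(headers, EXCEPTION_MESSAGE), StandardCharsets.UTF_8);
        return new FailureInfo(topic, partition, offset, message);
    }

    private static byte[] required(Map<String, byte[]> headers, String name) {
        byte[] value = headers.get(name);
        if (value == null) {
            throw new IllegalArgumentException("Missing header: " + name);
        }
        return value;
    }
}
```

With the topic, partition, and offset recovered, an operator can locate the exact source record and decide whether to repair and replay it or discard it.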

However, architects often warn about the significant trade-off concerning message ordering when DLQs are introduced. While a DLQ prevents system crashes, it inherently disrupts the sequential integrity of the stream. If an application requires that Message A must be processed before Message B, and Message A is moved to a DLQ while Message B succeeds, the system enters an “out-of-order” state. For some domains, such as streaming video data, this might be acceptable; for others, such as banking transactions, it could be catastrophic. Systems requiring sequential integrity must be designed to handle these gaps, perhaps by pausing processing on a partition or implementing complex re-sequencing logic.

The consensus among seasoned distributed systems engineers is that resilience must be baked into the business logic, not just the infrastructure. This involves adopting a mindset where failure is expected rather than treated as an exceptional event. By pairing Spring Boot 3 with a resilience library such as Resilience4j, teams can apply patterns that were once considered advanced, such as circuit breakers and rate limiters, to the downstream calls their Kafka consumers make. This integration creates a more holistic defense, where the consumer is aware of its own health and the health of the systems it interacts with, leading to a much more stable and predictable production environment.

Implementation Roadmap for Production-Grade Consumers

Building a self-healing consumer requires a disciplined approach that combines framework configuration with application-level patterns to achieve “effectively-once” processing. The first step is to configure the ConcurrentKafkaListenerContainerFactory to explicitly set the DefaultErrorHandler. This configuration defines the boundary between transient errors and fatal ones, ensuring that only messages with a chance of success are retried. With a clear retry limit and a backoff interval, the consumer can weather brief periods of instability without overwhelming downstream databases or APIs.
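
As a rough sketch of this first step, the factory wiring could look like the following. It assumes Spring for Apache Kafka and a `ConsumerFactory` bean supplied by Spring Boot's auto-configuration; the class name `KafkaConsumerConfig`, the generic types, and the retry values are illustrative.

```java
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.kafka.config.ConcurrentKafkaListenerContainerFactory;
import org.springframework.kafka.core.ConsumerFactory;
import org.springframework.kafka.listener.DefaultErrorHandler;
import org.springframework.util.backoff.FixedBackOff;

@Configuration
public class KafkaConsumerConfig {

    @Bean
    public ConcurrentKafkaListenerContainerFactory<Object, Object> kafkaListenerContainerFactory(
            ConsumerFactory<Object, Object> consumerFactory) {

        // Retry up to 3 times with a 2 second pause between attempts.
        // Without a recoverer, an exhausted record is logged and skipped.
        DefaultErrorHandler errorHandler = new DefaultErrorHandler(new FixedBackOff(2000L, 3L));

        // Treat invalid input as fatal: no retries for this exception type.
        errorHandler.addNotRetryableExceptions(IllegalArgumentException.class);

        ConcurrentKafkaListenerContainerFactory<Object, Object> factory =
                new ConcurrentKafkaListenerContainerFactory<>();
        factory.setConsumerFactory(consumerFactory);
        factory.setCommonErrorHandler(errorHandler);
        return factory;
    }
}
```

Listeners annotated with `@KafkaListener` use the factory bean named `kafkaListenerContainerFactory` by default, so the policy applies to them without per-listener code.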

The second step is to implement the Idempotent Consumer pattern, which is the most effective defense against the duplicate messages inherent in Kafka’s delivery model. To combat these duplicates, developers use a persistent state store, such as a relational database, to track unique message IDs. This creates a “source of truth” that operates independently of Kafka’s offset management. Every incoming message is checked against this tracking table; if the ID already exists, the message is ignored as a duplicate, so the business logic is not executed a second time for an event that has already been processed.
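
The core of the pattern fits in a few lines. The sketch below uses an in-memory set as a stand-in for the tracking table, which is an assumption made to keep the example self-contained; a real deployment would use a database table with a unique constraint on the message ID. The class name `IdempotentProcessor` is hypothetical.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Consumer;

// Hypothetical wrapper that runs business logic at most once per message ID.
class IdempotentProcessor<T> {

    // Stand-in for a persistent tracking table keyed by message ID.
    private final Set<String> processedIds = ConcurrentHashMap.newKeySet();
    private final Consumer<T> businessLogic;

    IdempotentProcessor(Consumer<T> businessLogic) {
        this.businessLogic = businessLogic;
    }

    /** Returns true if the message was processed, false if it was a duplicate. */
    boolean process(String messageId, T payload) {
        // add() returns false when the ID is already present, i.e. a redelivery.
        if (!processedIds.add(messageId)) {
            return false;
        }
        try {
            businessLogic.accept(payload);
            return true;
        } catch (RuntimeException ex) {
            // Forget the ID so a later retry is not mistaken for a duplicate.
            processedIds.remove(messageId);
            throw ex;
        }
    }
}
```

Delivering the same ID twice runs the business logic once; the second call returns `false` and has no side effects.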

Third, ensuring atomicity via transactional boundaries is essential for maintaining data consistency. By wrapping the tracking insert and the actual business logic within a single @Transactional block, the system achieves a high degree of reliability. If the business logic fails, the tracking record is rolled back along with it, allowing the Kafka consumer to safely retry the message later without leaving the system in an inconsistent state. Because the idempotency record and the business state commit or roll back together, the application can always recover to a known good point after a crash: a redelivered message is either recognized as already processed or processed again from scratch.
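
One possible shape for such a transactional handler is sketched below. It assumes Spring's `JdbcTemplate` and declarative transactions are available; the class name `PaymentEventService`, the tables `processed_messages` and `accounts`, and their columns are all hypothetical and exist only for illustration.

```java
import org.springframework.jdbc.core.JdbcTemplate;
import org.springframework.stereotype.Service;
import org.springframework.transaction.annotation.Transactional;

@Service
public class PaymentEventService {

    private final JdbcTemplate jdbc;

    public PaymentEventService(JdbcTemplate jdbc) {
        this.jdbc = jdbc;
    }

    // Both statements below commit together or roll back together.
    @Transactional
    public void handle(String messageId, String accountId, long amountCents) {
        Integer seen = jdbc.queryForObject(
                "SELECT COUNT(*) FROM processed_messages WHERE message_id = ?",
                Integer.class, messageId);
        if (seen != null && seen > 0) {
            return; // duplicate delivery: nothing to do
        }

        // Assumption: message_id has a unique constraint, so a concurrent duplicate
        // fails here, rolls back, and is recognized as a duplicate when retried.
        jdbc.update(
                "INSERT INTO processed_messages (message_id, processed_at) VALUES (?, CURRENT_TIMESTAMP)",
                messageId);

        // Business change; any exception thrown here also undoes the insert above.
        jdbc.update(
                "UPDATE accounts SET balance_cents = balance_cents + ? WHERE account_id = ?",
                amountCents, accountId);
    }
}
```

The method should be invoked from a separate listener bean so that the call passes through Spring's transactional proxy; a call from within the same class would bypass it.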

Finally, operational maintenance and a Time-To-Live (TTL) strategy keep the tracking overhead minimal as the system scales. Since the likelihood of receiving a duplicate message decreases significantly as time passes, the idempotency table does not need to store every ID forever. Periodic cleanup jobs or database-level TTL policies remove old records, preventing the tracking table from becoming a performance bottleneck. Together, these steps transform the Kafka consumer from a simple listener into a robust, enterprise-grade component capable of navigating the complexities of modern distributed streaming.
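
The cleanup logic can be sketched in plain Java as follows. The in-memory map again stands in for the tracking table, and the class name `ProcessedIdStore` and the idea of passing the current time explicitly are assumptions made so that the example is self-contained and easy to test.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical tracking store that forgets IDs older than a retention window.
class ProcessedIdStore {

    private final Map<String, Instant> processedAt = new ConcurrentHashMap<>();
    private final Duration retention;

    ProcessedIdStore(Duration retention) {
        this.retention = retention;
    }

    /** Records the ID; returns false if it was already present (a duplicate). */
    boolean markProcessed(String messageId, Instant now) {
        return processedAt.putIfAbsent(messageId, now) == null;
    }

    /** Removes entries older than the retention window and returns how many were dropped. */
    int purgeExpired(Instant now) {
        Instant cutoff = now.minus(retention);
        int before = processedAt.size();
        processedAt.values().removeIf(timestamp -> timestamp.isBefore(cutoff));
        // The count is approximate if other threads write during the purge.
        return before - processedAt.size();
    }
}
```

The retention window should comfortably exceed the longest period over which a redelivery is plausible, for example the maximum retry duration plus the time a record might wait in a dead letter topic before being replayed.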

The journey toward building fault-tolerant Kafka consumers in Spring Boot 3 is defined by a shift from reactive error handling to proactive resilience engineering. Developers embrace the “at-least-once” reality by implementing multi-layered defenses, including intelligent retries, dead letter routing, and database-backed idempotency. These strategies keep most transient failures invisible to the user and ensure that permanent errors are isolated rather than allowed to stall the pipeline. As event-driven architectures continue to evolve, the focus must now move toward automated recovery and real-time observability. Future considerations might include using machine learning to predict and mitigate consumer lag before it impacts the business, as well as adopting service mesh technologies to further decouple consumer logic from network concerns. By viewing resilience as an ongoing discipline rather than a one-time configuration, organizations can build systems that remain dependable in the face of failure.