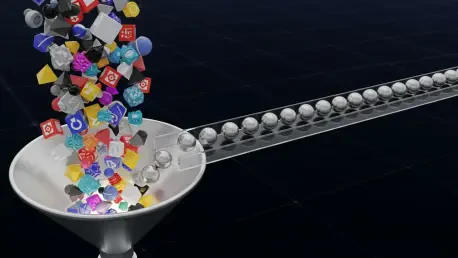

A seemingly minor configuration choice, such as adding an emoji or a space to a resource pool name, can trigger a cascade of hard-to-see failures that stall mission-critical data pipelines in production. This vulnerability came to light through Issue #59935 in Apache Airflow, a widely used platform for orchestrating complex computational workflows. The bug was a classic architectural friction point: the user-facing interface offered a degree of flexibility that the underlying telemetry infrastructure could not support. By allowing unrestricted character sets in pool names, Airflow unintentionally created a path for runtime exceptions that bypassed initial configuration checks, leading to a “silent” failure of monitoring services and worker stability. The incident underscores a broader challenge in software engineering: aligning subsystems that have very different data validation requirements without sacrificing user experience or system reliability.

The danger of such failures lies in their deferred nature: they do not appear while a user is configuring the system, but later, when the system is under operational load and performing secondary tasks such as reporting health metrics. For many organizations, observability is as critical as the execution of the data tasks themselves, so a break in the telemetry pipeline can mean a complete loss of visibility into system performance. When worker processes crash or telemetry stops flowing because of an unhandled exception in a reporting path, the resulting downtime is difficult to diagnose because the root cause, a simple naming convention, is far removed from the symptomatic error. Resolving the issue meant weighing strict data validation against legacy support, and ultimately led to a normalization strategy that keeps metric reporting robust without changing the names users already rely on.

Architectural Mismatch and Silent Failures

Dissecting the Naming Discrepancy: The StatsD Constraint

The technical core of this production bug lay in the interaction between Apache Airflow and StatsD, a protocol widely adopted for gathering and aggregating system metrics in distributed environments. Airflow uses “pools” to limit the parallelism of task execution, ensuring that specific resources are not overwhelmed by too many concurrent operations. From a user perspective, naming these pools is a matter of organizational clarity, leading many to use descriptive titles such as “Cloud Sync ⚡” or “Internal Data Processing.” The Airflow web interface and the underlying relational database store these strings without complaint, but the telemetry subsystem is far stricter. Metric names sent through Airflow’s StatsD integration must be ASCII-only and limited to letters, digits, underscores, dots, and dashes. Because pool names are embedded in metric names, any pool name containing a space, an emoji, or a special symbol became a ticking time bomb within the metrics pipeline.

When a scheduler or worker attempted to report the number of occupied slots or the remaining capacity of a pool with an “invalid” name, the system would immediately encounter an InvalidStatsNameException. Because this reporting logic was embedded deep within the execution cycle of the Airflow worker, the exception often resulted in the termination of the telemetry thread or, in some configurations, the instability of the entire worker process. The failure was particularly insidious because it was “silent” during the setup phase; a user could create an invalid pool name, test their DAGs, and see everything functioning correctly, only to have the system fail hours or days later when the monitoring service attempted to log a specific metric. This gap between input acceptance and output execution highlighted a significant architectural oversight where the constraints of downstream dependencies were not adequately communicated back to the upstream user interface, creating a trap for even the most experienced platform engineers.
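The gap between input acceptance and output execution can be shown with a toy model. Everything here is hypothetical: `create_pool`, `report_open_slots`, the in-memory `pools` dictionary, and `StatNameError` (a stand-in for the InvalidStatsNameException mentioned above) are invented for illustration and do not mirror Airflow internals.

```python
import re

# Assumed naming rule for metrics, as described in the article.
VALID_STAT_NAME = re.compile(r"[A-Za-z0-9_.-]+")


class StatNameError(ValueError):
    """Hypothetical stand-in for the InvalidStatsNameException in the text."""


pools: dict[str, int] = {}


def create_pool(name: str, slots: int) -> None:
    # Creation boundary: no character checks, so any string is accepted.
    pools[name] = slots


def report_open_slots(name: str) -> str:
    # Reporting boundary: the metric name is built from the raw pool name.
    stat = f"pool.open_slots.{name}"
    if not VALID_STAT_NAME.fullmatch(stat):
        raise StatNameError(f"invalid stat name: {stat!r}")
    # StatsD gauge line: <name>:<value>|g
    return f"{stat}:{pools[name]}|g"


create_pool("Cloud Sync ⚡", 8)  # succeeds; nothing signals a problem here

try:
    report_open_slots("Cloud Sync ⚡")  # fails only when metrics are emitted
except StatNameError as exc:
    print(exc)
```

The point of the sketch is the ordering: the write succeeds, and the error surfaces only in a later, unrelated code path.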

The Impact: Operational Instability and Loss of Visibility

Beyond the immediate technical exception, the consequences of this naming discrepancy extended into the operational health of the data platforms relying on Airflow for their daily workloads. In an era where data-driven decision-making is paramount, the loss of telemetry data is not merely a technical inconvenience but a significant business risk. When the InvalidStatsNameException triggered, it effectively blinded the operations team to the performance of their resource pools, making it impossible to determine if tasks were being delayed due to genuine resource contention or if the reporting mechanism itself had simply ceased to function. In high-stakes environments, such as financial services or real-time logistics, this lack of visibility can lead to missed SLAs and cascading delays that are difficult to recover from without accurate real-time data. The instability introduced by these unhandled exceptions often forced manual interventions, where administrators had to scour logs to identify the specific pool name causing the conflict.

Furthermore, the nature of the failure made it difficult to implement automated recovery strategies, as the error would recur every time the system attempted to report metrics for the problematic pool. This created a cycle of crashes and restarts that could degrade the performance of the entire cluster. The incident served as a stark reminder that in complex, distributed systems, every component is only as reliable as its weakest integration point. The flexibility of the Airflow UI, while intended to be user-friendly, became a liability when it failed to account for the rigid requirements of the broader monitoring ecosystem. Addressing this required more than just a quick patch; it demanded a strategic rethinking of how data flows through the system, ensuring that user-provided strings are sanitized or transformed before they reach sensitive internal components that lack the same degree of flexibility as the front-end interface.

Shifting from Validation to Normalization

The Challenge: Why Backwards Compatibility Matters

When the engineering team first approached a solution for the naming bug, the most intuitive path seemed to be the implementation of a “fail-fast” validation mechanism at the point of pool creation. By introducing a validate_pool_name() function, the system could have simply rejected any names containing spaces, emojis, or non-ASCII characters, forcing the user to choose a compliant name from the start. This approach aligns with standard defensive programming principles, which suggest that the best way to handle invalid data is to prevent it from ever entering the system. However, as the proposal moved through the peer-review process within the Apache Airflow community, a significant problem emerged regarding the platform’s extensive legacy installations. Because Airflow had permitted unrestricted pool names for years, thousands of existing production databases were already populated with names that would be deemed “illegal” under the new validation rules.
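A fail-fast check of this kind might look like the sketch below. Only the function name validate_pool_name() comes from the discussion above; its body, the regular expression, and the sample pool list are assumptions made for illustration.

```python
import re

# Assumed rule: the same characters the metrics layer accepts.
_POOL_NAME_RE = re.compile(r"[A-Za-z0-9_.-]+")


def validate_pool_name(name: str) -> None:
    """Raise ValueError when the name has characters the metrics layer rejects."""
    if not _POOL_NAME_RE.fullmatch(name):
        raise ValueError(
            f"Pool name {name!r} may only contain ASCII letters, digits, "
            "underscores, dots, and dashes"
        )


# Hypothetical pools that already exist in a long-running deployment.
existing_pools = ["default_pool", "Internal Data Processing", "Cloud Sync ⚡"]

rejected = []
for pool in existing_pools:
    try:
        validate_pool_name(pool)
    except ValueError:
        rejected.append(pool)

print(rejected)  # the names a strict validator would refuse after an upgrade
```

Running a strict validator over names that were accepted in the past shows the compatibility problem directly: two of the three pools in this sample would suddenly be refused.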

If the community had moved forward with strict validation, any user attempting to upgrade to the latest version of Airflow in 2026 would have faced an immediate and potentially catastrophic breaking change. Their existing workflows, DAGs, and internal scripts that referenced these “invalid” pools would have suddenly become non-compliant, effectively locking them out of the upgrade path or requiring a massive, manual migration effort to rename pools and update all dependent code. The maintainers recognized that the burden of the software’s historical permissiveness should not be shifted onto the end-users, especially those managing large-scale, mission-critical deployments. This realization forced a pivot in the technical strategy, moving away from a restrictive validation model toward a more accommodating “translation” model. The goal shifted to finding a way to satisfy the technical requirements of StatsD while allowing users to keep their existing naming conventions, thereby ensuring a smooth upgrade path for the global user base.

Implementing the Translation Layer: The Power of Normalization

The eventual resolution moved the fix from the “creation boundary” to the “reporting boundary,” a strategy that prioritized system stability without breaking existing user configurations. The developer implemented a normalization layer through a new utility function designed to sanitize pool names before they are passed to the telemetry system. This function, normalise_pool_name_for_stats(), uses regular expressions to scan the user-provided string and replace any non-compliant character, such as whitespace, emojis, or special symbols, with an underscore. The string arriving at the StatsD client therefore always satisfies the ASCII requirements, eliminating the risk of an InvalidStatsNameException while leaving the original name untouched in the Airflow database and user interface. The system thus remains “permissive on input” but “strict on output,” a common pattern in resilient distributed system design.
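A minimal sketch of such a function, assuming a one-underscore-per-character replacement, is shown below. The function name comes from the article; the regular expression and the exact replacement behavior are assumptions and may differ from the merged implementation.

```python
import re

# Assumed rule: anything other than ASCII letters, digits, underscore,
# dot, or dash is replaced. Each offending character becomes one underscore.
_INVALID_STAT_CHARS = re.compile(r"[^A-Za-z0-9_.-]")


def normalise_pool_name_for_stats(name: str) -> str:
    """Return a metrics-safe copy of name; the stored pool name is not modified."""
    return _INVALID_STAT_CHARS.sub("_", name)


print(normalise_pool_name_for_stats("default_pool"))
print(normalise_pool_name_for_stats("Internal Data Processing"))
```

One consequence of this design is worth noting: distinct pool names can map to the same metric name (for example, "a b" and "a_b" both become "a_b"), so dashboards may merge pools that differ only in replaced characters.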

This normalization strategy also included a clever mechanism for maintaining transparency and informing the user of the behind-the-scenes modifications. Whenever a pool name is normalized for the sake of telemetry, the system triggers a warning log that alerts the administrator to the change. This provides a clear explanation for why a pool named “Data Processing 🚀” might appear in a Grafana dashboard as “Data_Processing__” or similar. By providing this feedback through logs rather than through a hard error in the UI, the system guides users toward better naming practices over time without forcing an immediate, disruptive change to their operational environment. This solution effectively decoupled the internal needs of the monitoring tools from the external needs of the human operators, providing a robust and flexible framework that can accommodate future changes in telemetry protocols without requiring further modifications to the core pool management logic.
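The warning behavior can be sketched with the standard logging module. The helper name `stats_name_with_warning`, the message wording, and the regular expression are assumptions for illustration, not the text of the actual Airflow log line.

```python
import logging
import re

log = logging.getLogger(__name__)

# Assumed rule, as in the article: keep ASCII letters, digits, "_", ".", "-".
_INVALID_STAT_CHARS = re.compile(r"[^A-Za-z0-9_.-]")


def stats_name_with_warning(pool_name: str) -> str:
    """Normalise pool_name for metrics and log a warning if it had to change."""
    normalised = _INVALID_STAT_CHARS.sub("_", pool_name)
    if normalised != pool_name:
        # Hypothetical wording; it tells the operator which name to look for
        # in dashboards.
        log.warning(
            "Pool name %r contains characters that are not valid in stat "
            "names; metrics will be reported as %r.",
            pool_name,
            normalised,
        )
    return normalised
```

Compliant names pass through silently, so the warning appears only for the pools an administrator may eventually want to rename.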

Navigating the Open-Source Ecosystem

Procedural Rigor: CI Pipeline Hurdles

The process of merging this fix into the main Apache Airflow repository provided a vivid illustration of the administrative and procedural complexities involved in maintaining a world-class open-source project. Writing the actual normalization logic was only a small portion of the total effort required; the contributor had to navigate a gauntlet of automated testing and code quality tools that ensure the long-term maintainability of the codebase. For instance, the project utilizes the Ruff formatter and linter, which enforces an incredibly specific set of stylistic rules ranging from the order of import statements to the exact placement of whitespace. Multiple iterations of the pull request were dedicated solely to satisfying these automated quality gates, highlighting the high bar for entry that prevents “code rot” in a project with hundreds of active contributors. These tools, while sometimes perceived as a hurdle, are essential for ensuring that a global team can collaborate on a massive codebase without it becoming a tangled mess of conflicting styles.

Beyond stylistic consistency, the contributor had to deal with the intricacies of environment-specific configurations that often plague modern software development. One notable challenge occurred during the implementation of security protocols, specifically the requirement that all commits to the Apache Airflow repository be cryptographically signed via GPG. The developer encountered persistent issues on a Windows-based development environment where Git configuration mismatches led to silent signing failures, preventing the commits from being accepted by the project’s security filters. Resolving this required a deep dive into the local system’s environment variables and Git settings, a task that has little to do with the “logic” of Airflow but everything to do with the “process” of professional software delivery. This experience underscores the reality that being a successful contributor to a major open-source project requires a high degree of technical versatility and the patience to troubleshoot issues across the entire development stack, from the code itself to the infrastructure that supports it.

The Human Factor: Collaborative Resilience

The final stages of the normalization fix were marked by the unpredictable nature of collaborative software development, where multiple engineers are often working on overlapping parts of the same system simultaneously. At one point in the process, a separate cleanup pull request from another contributor inadvertently deleted the test files that the author had meticulously crafted to verify the naming normalization logic. This could have been a major setback, but it instead became a showcase for the resilience of the Git version control system and the collaborative spirit of the Airflow community. By tracing the commit history and coordinating with other maintainers, the developer was able to restore the deleted files and relocate them to the appropriate directory, ensuring that the fix was fully tested before it was merged. This highlights that the “human” and “procedural” elements of open-source—such as communication, version control management, and peer review—are just as critical to a successful deployment as the code itself.

Looking forward, the successful resolution of the telemetry naming bug serves as a blueprint for how other orchestration tools might handle the tension between user flexibility and backend constraints. The actionable takeaway for platform engineers in 2026 is to always consider the “boundary” where data transitions from a flexible user-controlled state to a rigid system-controlled state. By implementing normalization layers early in the architectural design, developers can prevent a wide range of downstream failures while maintaining the backwards compatibility that is so essential for enterprise-grade software. As Airflow continues to evolve, the lessons learned from Issue #59935 will likely inform future improvements to the telemetry subsystem, potentially leading to more generalized sanitization frameworks that can protect the system from a variety of “invalid” inputs across all its diverse modules. This proactive approach to system health ensures that Apache Airflow remains a reliable foundation for the next generation of data-driven innovation, providing both the flexibility users want and the stability that production environments demand.