The traditional bottleneck in machine learning development has long been the intricate, repetitive manual work required to get from a raw dataset to a fine-tuned, production-ready model. For years, data scientists have navigated a fragmented landscape of disparate scripts, specialized libraries, and complex cloud configurations just to test a single hypothesis. The result is often significant delay, with the actual work of model design overshadowed by the administrative burden of pipeline management. As demand for bespoke generative AI solutions grows across industries, the need for a more cohesive and automated approach has become hard to ignore. The introduction of agent-guided workflows within Amazon SageMaker AI marks a shift in this dynamic, away from manual orchestration and toward intent-based development. By letting engineers interact with the platform through natural language, the system bridges the gap between high-level project goals and the low-level API calls required to execute them, altering the developer experience in 2026.

This evolution into agentic development environments marks a departure from static templates and rigid automation scripts that previously defined cloud-based machine learning. Instead of following a predetermined path, the new agent-guided experience acts as a sophisticated digital navigator that understands the nuances of the model customization lifecycle. When a developer describes a specific objective, such as optimizing a large language model for legal document summarization, the AI agent does not simply provide a checklist; it actively steers the entire process. This includes intelligently selecting data preparation techniques, recommending specific fine-tuning methodologies like Low-Rank Adaptation, and managing the complexities of evaluation and deployment. By synthesizing these traditionally isolated steps into a continuous dialogue, the platform removes the friction of context switching. Consequently, teams can focus their intellectual energy on refining the data quality and the strategic implications of the model output, rather than troubleshooting the underlying infrastructure or manually stitching together various cloud services to form a working pipeline.
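To make the appeal of Low-Rank Adaptation concrete, the following minimal sketch compares the number of trainable parameters for a single weight matrix under full fine-tuning and under LoRA, where the update is factored into two matrices of rank r. The function names and the layer sizes are illustrative assumptions, not values taken from SageMaker AI or from any particular model.

```python
def full_trainable_params(d_out: int, d_in: int) -> int:
    """Parameters updated when the whole d_out x d_in weight matrix is trained."""
    return d_out * d_in


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """Parameters in the two low-rank factors: B (d_out x rank) and A (rank x d_in)."""
    if rank <= 0 or rank > min(d_out, d_in):
        raise ValueError("rank must be between 1 and min(d_out, d_in)")
    return rank * (d_out + d_in)


if __name__ == "__main__":
    # Illustrative sizes only (assumed for this example).
    d_out, d_in, rank = 4096, 4096, 8
    full = full_trainable_params(d_out, d_in)
    lora = lora_trainable_params(d_out, d_in, rank)
    print(f"full: {full:,}  lora: {lora:,}  reduction: {full // lora}x")
```

With these assumed sizes, the low-rank factors hold 65,536 parameters against 16,777,216 for the full matrix, which is why an agent might plausibly recommend this method when compute or memory is constrained.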

The Architecture of Modular Skills

At the heart of this technological advancement lies the implementation of modular skills, which function as the cognitive building blocks for the AI coding agent. These skills are not merely snippets of code; they are encapsulated instruction sets that incorporate AWS-specific operational knowledge and industry-standard data science practices. By modularizing these workflows, the system can dynamically invoke the specific capabilities needed for a given task, such as automated hyperparameter tuning or dataset augmentation. This design philosophy addresses a critical pain point for platform engineers who often struggle with the overhead of maintaining custom integration code for evolving APIs. The skills are designed to be reusable and adaptable, ensuring that as new techniques emerge, they can be integrated into the agent’s repertoire without overhauling the entire system. This structural modularity ensures that the agentic experience remains flexible enough to handle unique enterprise requirements while providing a standardized foundation that reduces the likelihood of configuration errors during the model lifecycle.
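One way to picture modular skills is as named, versioned units that an agent looks up and invokes on demand. The sketch below is a hypothetical illustration only: `Skill`, `SkillRegistry`, and `split_dataset` are invented for this example and do not reflect the actual skill format used by SageMaker AI.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Skill:
    """A hypothetical skill: instructions for the agent plus a callable step."""
    name: str
    version: str
    instructions: str
    run: Callable[[dict], dict]


class SkillRegistry:
    """Holds skills by name so they can be added or replaced independently."""

    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        # Registering under an existing name replaces that skill only.
        self._skills[skill.name] = skill

    def invoke(self, name: str, context: dict) -> dict:
        if name not in self._skills:
            raise KeyError(f"unknown skill: {name}")
        return self._skills[name].run(context)


def split_dataset(context: dict) -> dict:
    """Example step: split records into train and eval portions."""
    records = context["records"]
    cut = int(len(records) * context.get("train_fraction", 0.8))
    return {**context, "train": records[:cut], "eval": records[cut:]}


registry = SkillRegistry()
registry.register(
    Skill("split_dataset", "1.0.0", "Split records into train and eval sets.", split_dataset)
)
result = registry.invoke("split_dataset", {"records": list(range(10))})
```

Because each skill is registered under its own name, a new technique can be added, or an existing one swapped out, without touching the rest of the registry, which mirrors the reuse argument made above.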

Beyond the technical convenience of pre-built modules, the ability to customize these skills offers organizations a powerful mechanism for enforcing internal governance and engineering standards. Companies can inject their own proprietary best practices, security protocols, and data handling requirements directly into the skills library that the agent utilizes. For example, a financial institution might modify a data preparation skill to ensure it automatically redacts personally identifiable information before any training begins. This level of control ensures that while the agent provides speed and automation, it does so within the guardrails established by the organization’s compliance teams. Furthermore, because the code generated by the agent is fully transparent and editable, it avoids the “black box” problem often associated with automated tools. Developers can inspect, refine, and version-control the output just as they would with manually written code, fostering a culture of accountability and precision. This balance between high-level automation and granular control is essential for scaling AI operations across large, regulated environments.
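The financial-institution example can be sketched as a small redaction step that runs before any record reaches training. This is a minimal illustration under stated assumptions: the function names are hypothetical, and the two regular expressions cover only email addresses and US-style Social Security numbers, which is far short of production-grade PII detection.

```python
import re
from typing import Dict, List

# Illustrative patterns only; real PII detection needs much broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_pii(text: str) -> str:
    """Replace matched emails and SSN-like strings with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)


def prepare_records(records: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Return copies of the records with the 'text' field redacted."""
    return [{**record, "text": redact_pii(record["text"])} for record in records]


if __name__ == "__main__":
    sample = [{"id": "1", "text": "Contact jane.doe@example.com, SSN 123-45-6789."}]
    print(prepare_records(sample))
```

Keeping the redaction in a plain, inspectable function is what lets a compliance team review and version it like any other code.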

Integration Within the Developer Ecosystem

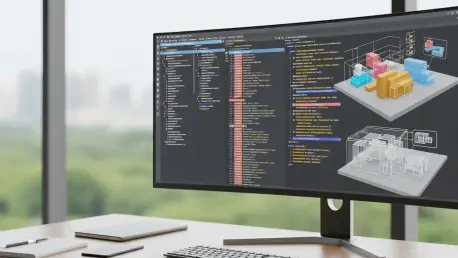

The practical application of these agent-guided workflows is most visible within the enhanced SageMaker AI Studio JupyterLab environment, specifically through the integration of Amazon Kiro. This tool serves as the primary interface where natural language intent is converted into executable logic, providing real-time AI-powered code completion and debugging support. As developers iterate on their models, Kiro assists by suggesting optimizations and identifying potential bottlenecks in the training scripts. This seamless integration within the familiar IDE allows practitioners to stay within their creative flow, reducing the time spent on mundane syntax corrections or searching for documentation. The interplay between the agent and the developer is collaborative; the agent handles the heavy lifting of infrastructure orchestration and boilerplate generation, while the human developer provides the critical oversight and domain expertise. This synergy significantly compresses the time-to-market for new models, enabling a more rapid experimentation cycle that is vital for maintaining a competitive edge in the fast-paced AI sector.

This shift toward productized agentic experiences reflects a broader industry trend where cloud providers are increasingly focused on abstracting the complexities of the underlying hardware and software stack. By unifying customization pipelines within a single managed environment, the platform effectively lowers the entry barrier for smaller teams and organizations that may lack deep specialized expertise in cloud orchestration. However, this transition also necessitates a change in how developers approach their work, placing a higher premium on the ability to clearly define problems and evaluate results rather than just writing code. The consolidation of evaluation notebooks, customization pipelines, and deployment artifacts into a single cohesive flow simplifies the audit trail and improves project visibility. As organizations move toward this more automated future, the role of the data scientist is evolving from a manual builder to an orchestrator of intelligent systems, where success is measured by the ability to guide an agent toward producing high-quality, reliable, and ethically sound machine learning models.

Strategic Implementation and Governance

As organizations begin to integrate agent-guided customization into their core operations, the focus must shift toward maintaining the integrity and reliability of the automated outputs. While the speed of iteration is a clear benefit, it introduces new challenges regarding data lineage and the correctness of the generated skills. To mitigate the risk of “hallucinations” in the code or the use of sub-optimal training techniques, teams should implement rigorous validation protocols, such as using LLM-as-a-judge metrics to evaluate model performance in a repeatable way. Establishing a dedicated internal repository for versioned skill libraries is a practical next step for maintaining consistency across departments. This allows for a centralized audit of the instructions the agent follows, so that every model produced can be checked against corporate quality standards. Engineers should also prioritize integrating these agentic flows into existing Continuous Integration and Continuous Deployment (CI/CD) patterns, treating the agent’s output as an integral part of the software development lifecycle that requires standard testing and peer review.
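A validation protocol of this kind could take the form of a pass/fail gate that a CI job runs over a fixed set of prompt and response pairs. The sketch below is hypothetical: `evaluate_with_judge` and `non_empty_judge` are invented names, and the judge shown is a trivial stand-in for a real LLM call, which would score each response against a rubric.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

# A judge maps (prompt, response) to a score in [0, 1].
Judge = Callable[[str, str], float]


def evaluate_with_judge(
    samples: List[Tuple[str, str]], judge: Judge, threshold: float = 0.7
) -> Dict[str, object]:
    """Score every sample and report whether the mean clears the threshold."""
    if not samples:
        raise ValueError("at least one sample is required")
    scores = [judge(prompt, response) for prompt, response in samples]
    average = mean(scores)
    return {"mean_score": average, "passed": average >= threshold, "n": len(scores)}


def non_empty_judge(prompt: str, response: str) -> float:
    """Stand-in judge (assumption): a real one would call an LLM with a rubric."""
    return 1.0 if response.strip() else 0.0


if __name__ == "__main__":
    samples = [("Summarize clause 4.", "Clause 4 limits liability."), ("Summarize clause 5.", "")]
    report = evaluate_with_judge(samples, non_empty_judge, threshold=0.5)
    print(report)
    # A CI job could exit non-zero when the gate fails.
    raise SystemExit(0 if report["passed"] else 1)
```

Passing the judge in as a parameter keeps the gate testable with a deterministic stub while the real scoring model is swapped in for production runs.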

The future of model customization will likely depend on how effectively teams can balance the efficiency of AI-led automation with the necessity of human-in-the-loop oversight. Moving forward, stakeholders should invest in training their technical staff to act as “agent supervisors” who are proficient in prompt engineering and the technical underpinnings of the skills they deploy. This proactive approach involves not only monitoring the immediate results of a fine-tuning job but also analyzing the long-term performance and drift of models deployed via agentic workflows. By fostering a deep understanding of how the agent makes decisions, organizations can build more resilient AI infrastructures that adapt to new data and changing business requirements without sacrificing transparency. Ultimately, the successful adoption of this technology will be defined by an organization’s ability to turn automated suggestions into verified, high-value assets. Transitioning to this agent-guided approach requires a commitment to rigorous documentation and a willingness to iterate on the governance frameworks that manage these powerful new tools.