The transformation of the modern enterprise no longer hinges on the sheer volume of information collected but on the precision with which that data is curated and deployed by autonomous systems. Agentic data pipelines represent a significant advance in data engineering and artificial intelligence. This review explores the evolution of the technology, its key features, performance metrics, and its impact on various applications. Its purpose is to provide a thorough understanding of the technology, its current capabilities, and its potential future development.

The Evolution of Active Data Orchestration

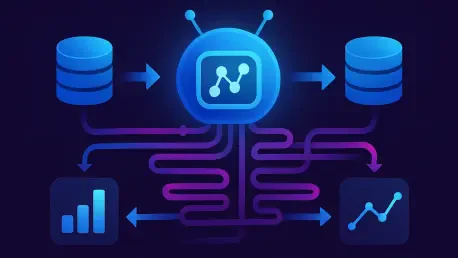

The shift from traditional “Big Data” frameworks to agentic architectures marks a fundamental change in how information is handled. Historical data engineering prioritized the passive movement of massive datasets for batch processing, focusing on storage and throughput. In contrast, agentic pipelines introduce intentionality and autonomy into the data flow. These systems are designed specifically for the needs of modern AI, acting as a “runtime context layer” that makes real-time decisions about what information is relevant.

Emerging from the necessity to support Large Language Models and Retrieval-Augmented Generation, this technology bridges the gap between static storage and active intelligence. It signifies a departure from volume-centric engineering toward context-aware systems. Instead of simply moving data from point A to point B, the pipeline now evaluates the data against a specific objective, ensuring that the AI receives the most pertinent context to perform its task accurately.

Core Architectural Components and Features

The effectiveness of agentic pipelines stems from their ability to process information with a specific objective in mind, moving beyond linear scripts to goal-oriented workflows. This approach recognizes that in an AI-driven environment, the relevance of data is highly dependent on the problem the system is attempting to solve. By embedding logic within the pipeline itself, organizations can ensure that their AI agents are not just processing data, but are doing so with a clear understanding of the desired outcome.

Goal-Aware Orchestration and Intentionality

Goal-aware orchestration allows the system to understand the underlying intent of a query. Unlike traditional ETL processes that follow rigid, pre-defined sequences, agentic pipelines can dynamically assemble data based on the specific requirements of a task, such as a legal discovery or a complex security analysis. This flexibility allows the system to bypass irrelevant data sources and prioritize those that offer the most value for the current objective.

This component ensures that the retrieved information is precisely aligned with the task at hand. By interpreting the intent, the pipeline reduces the noise often associated with broad data searches, leading to more accurate and reliable outcomes for AI-driven applications. This level of intentionality is what separates modern agentic systems from the passive automation tools of the past decade.
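
As a rough illustration of goal-aware source selection, the sketch below ranks data sources by how well they match the topics of a goal and drops the ones with no overlap. The catalog, the source names, and the `plan_sources` function are all hypothetical and are not taken from any particular product; a real pipeline would likely infer the goal with a model rather than receive it as a set of topic tags.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    topics: frozenset  # topics this source is assumed to cover


# Hypothetical catalog of data sources, for illustration only.
CATALOG = [
    Source("contracts_store", frozenset({"legal", "contracts"})),
    Source("auth_logs", frozenset({"security", "access"})),
    Source("marketing_wiki", frozenset({"marketing"})),
]


def plan_sources(goal_topics, catalog=CATALOG):
    """Return names of sources that overlap the goal, best match first.

    Sources with no topic overlap are bypassed entirely.
    """
    scored = [(len(s.topics & goal_topics), s.name) for s in catalog]
    relevant = [(score, name) for score, name in scored if score > 0]
    # Highest overlap first; ties broken alphabetically for determinism.
    relevant.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in relevant]


if __name__ == "__main__":
    print(plan_sources({"legal"}))
    print(plan_sources({"security", "access"}))
```

The point of the design is that the plan is computed before any data moves, so irrelevant sources are never queried.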

Adaptive Retrieval and Semantic Transformation

Another core feature is adaptive retrieval and semantic transformation. This capability enables the system to choose the most effective method for data gathering on the fly. Whether it requires a semantic vector search to find conceptually related information, a keyword match for specific terms, or a structured SQL query for precise data points, the pipeline adapts its strategy to the nature of the request.
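
One way to picture this strategy selection is a small router that inspects the shape of a request. The heuristics below (a leading `SELECT`, quoted phrases, ticket-style identifiers) and the function name `choose_strategy` are assumptions made for this sketch; production systems would more plausibly use a classifier or a model call, and these rules will misroute some natural-language queries.

```python
import re


def choose_strategy(query: str) -> str:
    """Pick a retrieval strategy name from the shape of the query.

    Returns one of "sql", "keyword", or "vector". The rules are
    deliberately simple heuristics, not a definitive policy.
    """
    q = query.strip()
    # A query that already looks like SQL goes to the structured path.
    if re.match(r"select\b", q, flags=re.IGNORECASE):
        return "sql"
    # Quoted phrases or identifiers such as INC-1042 call for exact matching.
    if re.search(r'"[^"]+"', q) or re.search(r"\b[A-Z]{2,}-\d+\b", q):
        return "keyword"
    # Everything else is treated as a conceptual question.
    return "vector"


if __name__ == "__main__":
    for example in [
        "SELECT count(*) FROM tickets",
        'find "force majeure" clauses',
        "status of INC-1042",
        "why do customers churn after onboarding",
    ]:
        print(example, "->", choose_strategy(example))
```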

Furthermore, the system manages the “chunking” and embedding of data, transforming raw text into AI-ready units. This adaptability is crucial because the quality of the context provided to an AI model is often more critical to its performance than the size of the model itself. By refining how data is segmented and represented, agentic pipelines ensure that the information is in a format that the model can interpret with maximum efficiency.
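
A minimal sketch of the chunking step, assuming a simple word-window strategy with overlap between neighboring chunks. The function name `chunk_words` and the default sizes are illustrative choices; real pipelines often split on tokens, sentences, or document structure instead, and the embedding step that would follow is omitted here.

```python
def chunk_words(text, size=5, overlap=2):
    """Split text into chunks of `size` words, sharing `overlap` words
    between consecutive chunks so context is not cut at a hard boundary."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("require size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        # Stop once a chunk has reached the end of the text.
        if start + size >= len(words):
            break
    return chunks


if __name__ == "__main__":
    for chunk in chunk_words("a b c d e f g h", size=4, overlap=1):
        print(chunk)
```

Tuning `size` and `overlap` is one of the levers a pipeline can adjust when retrieved context proves too fragmented or too diluted.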

Feedback-Driven Improvement Mechanisms

Agentic pipelines incorporate closed feedback loops that learn from performance metrics and human interaction. By monitoring retrieval misses and user feedback, the pipeline can autonomously tune its parameters to improve results. This self-optimizing behavior allows the system to increase its accuracy and cost-efficiency over time without requiring constant manual reconfiguration from data engineers.

This iterative process creates a system that grows more intelligent with use. For instance, if the pipeline identifies that certain types of queries consistently fail to retrieve relevant information, it can adjust its embedding strategies or retrieval priorities. This continuous refinement cycle is essential for maintaining the long-term viability of AI systems in dynamic enterprise environments where data and user needs are constantly evolving.
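
The refinement cycle can be sketched as a small tuner that widens or narrows retrieval depth based on the observed miss rate. Everything here is an assumption for illustration: the class name `RetrievalTuner`, the `top_k` parameter being tuned, the thresholds, and the step sizes. A real system might adjust embedding models or reranking weights instead.

```python
from dataclasses import dataclass


@dataclass
class RetrievalTuner:
    top_k: int = 5                  # how many candidates to retrieve
    min_k: int = 3
    max_k: int = 20
    target_miss_rate: float = 0.2   # hypothetical acceptable miss rate
    hits: int = 0
    misses: int = 0

    def record(self, found_relevant: bool) -> None:
        """Log whether a retrieval returned something the user found relevant."""
        if found_relevant:
            self.hits += 1
        else:
            self.misses += 1

    def tune(self) -> int:
        """Adjust top_k from the feedback window, then reset the window."""
        total = self.hits + self.misses
        if total == 0:
            return self.top_k
        miss_rate = self.misses / total
        if miss_rate > self.target_miss_rate:
            # Too many misses: retrieve more candidates.
            self.top_k = min(self.max_k, self.top_k + 2)
        elif miss_rate < self.target_miss_rate / 2:
            # Comfortably accurate: retrieve fewer to save cost.
            self.top_k = max(self.min_k, self.top_k - 1)
        self.hits = self.misses = 0
        return self.top_k


if __name__ == "__main__":
    tuner = RetrievalTuner()
    for outcome in [False, False, False, True, True]:
        tuner.record(outcome)
    print(tuner.tune())
```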

Emerging Trends in AI-Driven Data Flows

The field is currently moving toward a hybrid approach that integrates disparate search methodologies into a single, unified stream. There is a growing emphasis on “metadata as a first-class citizen,” where the origin, security classification, and relationships of data are treated with the same importance as the data itself. This trend ensures that the pipeline is not just retrieving content but is also aware of the context and permissions surrounding that content.
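
To make the idea of metadata traveling with content concrete, the sketch below attaches an origin and a security classification to each record and filters by the caller's clearance before anything reaches a model. The classification levels, the `Record` type, and the `visible_to` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass

# Hypothetical classification ladder, lowest to highest sensitivity.
LEVELS = {"public": 0, "internal": 1, "restricted": 2}


@dataclass(frozen=True)
class Record:
    text: str
    origin: str          # where the content came from
    classification: str  # one of the keys in LEVELS


def visible_to(records, clearance):
    """Keep only records at or below the caller's clearance level."""
    limit = LEVELS[clearance]
    return [r for r in records if LEVELS[r.classification] <= limit]


if __name__ == "__main__":
    records = [
        Record("Press release", "cms", "public"),
        Record("Org chart", "hr_portal", "internal"),
        Record("Merger memo", "legal_share", "restricted"),
    ]
    for r in visible_to(records, "internal"):
        print(r.origin, "-", r.text)
```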

Another significant trend is the rise of modular orchestration, where the decision-making logic is separated from the AI prompts. This separation allows for greater debuggability and version control, making it easier for developers to track how the system arrived at a particular result. These innovations reflect a broader shift toward making data pipelines more transparent, manageable, and resilient in the face of complex organizational requirements.
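
The separation described above might look like the following sketch, in which decision logic returns a plain, loggable plan and prompt text lives in a separately versioned table. The prompt identifiers, the version labels, and the `decide` and `render` functions are assumptions for illustration; only `string.Template` from the Python standard library is a real API.

```python
from string import Template

# Prompt text is kept apart from logic and keyed by (id, version),
# so a prompt can be revised without touching the decision code.
PROMPTS = {
    ("summarize", "v1"): Template("Summarize the following context:\n$context"),
    ("answer", "v1"): Template(
        "Answer using only this context:\n$context\nQuestion: $question"
    ),
}


def decide(task):
    """Pure decision logic: returns a plan describing what to do and why."""
    if task.get("question"):
        return {"prompt_id": "answer", "version": "v1",
                "reason": "task has a question"}
    return {"prompt_id": "summarize", "version": "v1",
            "reason": "no question supplied"}


def render(plan, **fields):
    """Fill the prompt template selected by the plan."""
    template = PROMPTS[(plan["prompt_id"], plan["version"])]
    return template.substitute(**fields)


if __name__ == "__main__":
    plan = decide({"question": "Who signed the contract?"})
    print(plan["reason"])
    print(render(plan, context="...", question="Who signed the contract?"))
```

Because the plan is ordinary data, it can be logged, diffed, and unit-tested independently of any model call.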

Real-World Applications and Sector Impact

Agentic data pipelines are seeing rapid deployment across industries that require high-precision information retrieval and automated action. In legal and compliance sectors, these pipelines autonomously navigate vast document stores to identify relevant evidence during e-discovery while adhering to strict security protocols. This automation significantly reduces the time and cost associated with manual document review while increasing the accuracy of the findings.

In customer support and experience, enterprises use agentic flows to power support bots that do more than just provide information. These systems can trigger API calls to ticketing platforms, classify complex issues, and resolve queries in real time by pulling data from multiple internal sources. Similarly, in cybersecurity, Security Operations Centers use these systems to investigate anomalies by gathering context from various logs and automatically summarizing threats for human analysts.

Technical Challenges and Market Obstacles

Despite their potential, agentic pipelines face several hurdles that organizations must navigate. Technical challenges include the complexity of managing “hallucination” risks, where a pipeline might provide plausible but incorrect context to the AI model. Ensuring that the data delivered is both accurate and grounded in reality remains a primary concern for developers working with autonomous systems.
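
One narrow safeguard against ungrounded context is to verify that each snippet a pipeline is about to pass along can be traced back to a source document. The sketch below is a deliberately simple version, assuming verbatim matching after whitespace and case normalization; the function names are hypothetical, and the check says nothing about whether the source itself is correct or whether a paraphrase is faithful.

```python
def _normalize(text):
    """Lowercase and collapse whitespace so formatting differences do not matter."""
    return " ".join(text.lower().split())


def ungrounded(snippets, sources):
    """Return the snippets that do not appear in any source document.

    `sources` maps a document name to its full text.
    """
    corpus = [_normalize(doc) for doc in sources.values()]
    return [
        s for s in snippets
        if not any(_normalize(s) in doc for doc in corpus)
    ]


if __name__ == "__main__":
    sources = {"contract.txt": "Payment   is due in 30 days after invoice."}
    snippets = ["Payment is due in 30 days", "Payment is due in 90 days"]
    print(ungrounded(snippets, sources))
```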

There are also significant concerns regarding data provenance and governance. Ensuring an autonomous system complies with privacy regulations like GDPR requires robust observability layers that can track every decision made by the pipeline. Furthermore, the high computational cost of continuous embedding and real-time reranking remains a market obstacle for smaller organizations. These costs can quickly escalate, making it difficult for some to adopt this technology at scale without careful resource management.
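
An observability layer of the kind described could start as an append-only log that records each pipeline decision together with its reason. The `DecisionLog` class and its field names below are assumptions for this sketch, not a reference to any specific compliance tool; a real deployment would also need durable storage, access controls, and retention policies.

```python
import json
import time


class DecisionLog:
    """Append-only record of pipeline decisions, exportable as JSON Lines."""

    def __init__(self):
        self._entries = []

    def record(self, step, decision, reason, **detail):
        """Store one decision with a sequence number and timestamp."""
        entry = {
            "seq": len(self._entries) + 1,
            "ts": time.time(),
            "step": step,
            "decision": decision,
            "reason": reason,
            "detail": detail,
        }
        self._entries.append(entry)
        return entry

    def to_jsonl(self):
        """Serialize all entries, one JSON object per line."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self._entries)


if __name__ == "__main__":
    log = DecisionLog()
    log.record("route", "vector", "no structured pattern found", query_id="q1")
    log.record("filter", "dropped 2 records", "above caller clearance", query_id="q1")
    print(log.to_jsonl())
```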

Future Outlook and Technological Trajectory

The future of agentic data pipelines lies in the evolution of the data engineer into a “context architect.” Breakthroughs are expected in “small-to-large” data synthesis, where pipelines can reason across tiny fragments of proprietary data and massive public datasets simultaneously. This capability would allow organizations to use their unique internal knowledge more effectively alongside broader industry information.

Long-term, these systems will likely become the standard backbone for all enterprise AI, moving away from simple automation toward “autonomous agents” that can execute complex business processes with minimal oversight. This shift will fundamentally change how organizations interact with their information assets, turning data from a passive resource into an active participant in business operations. The focus will move from managing data to managing the logic and intent that drives the data’s use.

Summary of Findings and Assessment

This review of agentic data pipelines finds a technology that acts as an essential bridge to the next generation of AI reliability. By shifting from passive storage to active orchestration, these pipelines address the context gap that often plagues standard AI implementations. While challenges in governance and cost persist, the move toward goal-aware, adaptive, and observable data flows represents a landmark shift in the industry. As organizations continue to move beyond the limitations of Big Data, agentic pipelines stand as a critical foundation for trustworthy and intelligent enterprise systems. Future strategies should prioritize the development of more granular metadata frameworks and the integration of diverse retrieval models to further enhance system autonomy and precision.