The architectural shift toward containerized microservices has matured to the point where the primary challenge is no longer running code but managing the flow of artifacts that make those services functional. Kubernetes provides a robust, standardized framework for orchestrating workloads through declarative APIs and GitOps workflows, yet a structural gap persists in the underlying software supply chain: there is no centralized governance model for delivering critical artifacts such as container images, Helm charts, and OCI bundles. As organizations scale, they frequently hit a bottleneck that sits entirely outside the cluster boundary, where fragmented artifact management erodes the reliability and velocity that cloud-native technologies were designed to deliver. Without a unified access plane, the sheer volume of disparate artifacts makes security, performance, and operational consistency increasingly difficult to maintain across environments.

Navigating the Complexity of Modern Dependency Graphs

Modern cloud-native environments have evolved into intricate ecosystems that rely on a multi-faceted array of components sourced from a diverse set of locations. A single production application today is rarely a self-contained unit; instead, it often pulls container images from private corporate registries, fetches community-maintained Helm charts from public repositories, and requires specialized sidecar binaries or operators to function correctly. This structural “fan-out” effect means that the ultimate success of a deployment is tethered to the availability and integrity of half a dozen different sources, each governed by its own unique access rules, authentication methods, and trust models. When these dependencies are managed in a decentralized fashion, the resulting overhead becomes a significant burden for platform engineering teams who must ensure that every external pull adheres to strict corporate standards.
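The cost of this fan-out can be made concrete with a small calculation. The sketch below assumes that sources fail independently and uses a purely illustrative availability figure of 99.9% per source; neither assumption comes from measured data.

```python
from math import prod


def combined_availability(source_availabilities):
    """Probability that every source is reachable at once.

    Assumes failures are independent, which real registries may not satisfy.
    """
    return prod(source_availabilities)


# Hypothetical figures: six sources, each reachable 99.9% of the time.
sources = [0.999] * 6
all_up = combined_availability(sources)
print(f"all six sources reachable: {all_up:.4%}")
```

Under these assumptions a deployment that needs all six sources succeeds only about 99.4% of the time, noticeably worse than any single source on its own.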

The lack of a unified management layer leads to a sprawl of conflicting policies that are nearly impossible to track or enforce consistently across different development teams. In a typical large-scale organization, one team might use a specific version of a base image while another uses a slightly different variation, creating a fragmented security posture that is difficult to audit. This inconsistency is not just a matter of administrative preference; it introduces real risks regarding software provenance and vulnerability management. Without a centralized point of entry for these artifacts, the dependency graph becomes a black box, making it challenging to verify the origin of code or to ensure that every component in the stack has been properly vetted against the latest security threats before it ever reaches a staging or production cluster.

Overcoming the Limitations of Registry-Centric Models

Traditional artifact management has historically relied on registry-level access control lists (ACLs) to govern who can push or pull images, but these models are proving insufficient for the requirements of 2026. They are fundamentally “context-blind”: they cannot distinguish an image pull destined for a secure production environment from one meant for a temporary development sandbox. Because the registry sees only a request for a specific tag, it cannot apply granular logic based on the destination or the current state of the deployment pipeline. Security teams are therefore forced to replicate complex policies by hand across CI/CD systems and cluster admission controllers, which creates substantial administrative toil and increases the likelihood of human error or configuration drift between environments.
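The difference between a context-blind ACL and a context-aware rule can be sketched in a few lines. Everything here is hypothetical: the `PullContext` fields, the environment labels, and the rule that production may not pull mutable tags are illustrative assumptions, not the behavior of any particular registry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullContext:
    identity: str      # who is pulling, e.g. a service account
    repository: str
    tag: str
    environment: str   # hypothetical labels: "dev", "staging", "prod"


# Registry-style ACL: it knows only identity and repository.
ACL = {("ci-bot", "team-a/api"), ("dev-alice", "team-a/api")}


def acl_allows(ctx: PullContext) -> bool:
    """Context-blind check: the destination environment is never consulted."""
    return (ctx.identity, ctx.repository) in ACL


def context_aware_allows(ctx: PullContext) -> bool:
    """Same ACL, plus a rule that depends on where the artifact is going."""
    if not acl_allows(ctx):
        return False
    # Assumed rule for illustration: production may not pull mutable tags.
    if ctx.environment == "prod" and ctx.tag in {"latest", "dev"}:
        return False
    return True


sandbox = PullContext("ci-bot", "team-a/api", "latest", "dev")
production = PullContext("ci-bot", "team-a/api", "latest", "prod")
print(acl_allows(sandbox), acl_allows(production))
print(context_aware_allows(sandbox), context_aware_allows(production))
```

The ACL gives both pulls the same answer because it cannot see the `environment` field; only the second function can treat the sandbox and production requests differently.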

This disconnected approach often results in late-stage deployment failures that significantly hamper developer productivity. When security checks are performed only at the point of execution within the cluster, a developer might spend hours perfecting a service only to find it blocked during the final rollout phase because of a policy violation that could have been caught much earlier. This “wall of friction” forces teams to backtrack, re-scanning and re-patching images in a reactive cycle that slows down the entire release process. By relying on a model that enforces rules at the very end of the supply chain, organizations lose the opportunity to provide proactive feedback to engineers, ultimately undermining the goal of achieving high-velocity, automated software delivery in a secure and predictable manner.

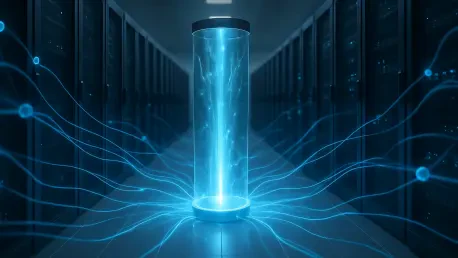

The Strategic Role of the Virtual Registry

An artifact access plane, typically implemented as a virtual registry, introduces a logical abstraction layer between consumers and physical storage backends. By functioning as a single, OCI-compliant endpoint, a virtual registry centralizes policy evaluation, allowing the platform to assess every request against identity, intent, and organizational requirements before an artifact is retrieved from the source. This architecture provides a mediation point where signing certificates can be verified, signatures checked, and vulnerability thresholds enforced in real time. Instead of managing a dozen different registry connections, the Kubernetes cluster interacts with one trusted gateway that handles the complexities of upstream communication and authorization.
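As a rough illustration of the single-endpoint idea, the sketch below maps a virtual namespace to an upstream backend. The namespace names and the `harbor.corp.example` host are hypothetical, and a real virtual registry would perform this translation inside the OCI distribution protocol rather than on strings.

```python
# Hypothetical mapping from a virtual namespace to an upstream backend.
UPSTREAMS = {
    "hub": "registry-1.docker.io",
    "ghcr": "ghcr.io",
    "internal": "harbor.corp.example",  # placeholder host, not a real service
}


def resolve(virtual_ref: str) -> str:
    """Translate 'namespace/repo:tag' on the virtual endpoint to an upstream reference."""
    namespace, sep, rest = virtual_ref.partition("/")
    if not sep or not rest or namespace not in UPSTREAMS:
        raise ValueError(f"unknown virtual namespace in {virtual_ref!r}")
    return f"{UPSTREAMS[namespace]}/{rest}"


print(resolve("hub/library/nginx:1.27"))
print(resolve("internal/team-a/api:2.3.0"))
```

Consumers only ever reference the virtual name, so the table is the one place where upstream hosts appear.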

Beyond its role as a policy engine, the virtual registry serves as a critical buffer that protects the infrastructure from the inherent volatility of external providers. In the event of an upstream registry outage or a sudden enforcement of aggressive rate limits, the access plane ensures that internal clusters remain stable by serving cached versions of required artifacts. This decoupling of cluster operations from the availability of third-party services is essential for maintaining high availability in mission-critical systems. Furthermore, this model grants platform teams the flexibility to swap or diversify their storage backends—moving from one cloud provider to another or integrating specialized on-premises storage—without needing to modify a single deployment manifest or disrupt the established workflows of the development community.
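The buffering behavior can be sketched as a pull-through cache that falls back to its stored copy when the upstream fails. This is a minimal in-memory sketch: `PullThroughCache`, `UpstreamUnavailable`, and `fake_upstream` are hypothetical names, and the choice to always try the upstream first (so tags stay fresh) is an assumption rather than a description of any specific product.

```python
class UpstreamUnavailable(Exception):
    """Raised by the fetch function when the upstream registry cannot serve a request."""


class PullThroughCache:
    def __init__(self, fetch_upstream):
        self._fetch = fetch_upstream  # callable: ref -> bytes
        self._cache = {}

    def pull(self, ref):
        try:
            blob = self._fetch(ref)
        except UpstreamUnavailable:
            if ref in self._cache:
                return self._cache[ref]  # serve the cached copy during the outage
            raise  # never seen before, so there is nothing to fall back to
        self._cache[ref] = blob
        return blob


# Simulated upstream whose availability can be toggled.
state = {"up": True}


def fake_upstream(ref):
    if not state["up"]:
        raise UpstreamUnavailable(ref)
    return b"bytes-for-" + ref.encode()


cache = PullThroughCache(fake_upstream)
first = cache.pull("app:1.0")   # upstream healthy: fetched and cached
state["up"] = False             # simulate an outage or rate limit
during_outage = cache.pull("app:1.0")
print(during_outage == first)
```

Artifacts that were never pulled before the outage still fail, which is why access planes often warm their caches ahead of time.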

Enhancing Performance and Operational Resilience

While security and governance are often the primary drivers for adopting an access plane, the technical performance benefits are equally compelling for large-scale Kubernetes ecosystems. By caching frequently accessed artifacts and localizing data flow within the internal network, these systems drastically reduce the load on upstream registries and minimize the substantial egress costs associated with cross-region data transfers. In a world where applications are distributed across multiple global clusters, the ability to serve images from a local cache rather than pulling them repeatedly over the public internet results in significantly faster pod startup times. This efficiency is not merely a convenience; it is a fundamental requirement for modern applications that must scale rapidly in response to fluctuating user demand.

The operational resilience provided by a centralized access plane becomes particularly evident during large-scale events, such as cluster-wide restarts or massive autoscaling spikes. These scenarios generate enormous volumes of registry traffic that can easily overwhelm standard infrastructure or trigger throttling mechanisms from external image providers. An artifact access plane absorbs these spikes, providing a controlled and optimized distribution mechanism that prevents the “thundering herd” problem from crashing the deployment pipeline. By ensuring that the infrastructure remains resilient under extreme pressure, the access plane allows organizations to maintain their service level objectives even when external conditions are suboptimal, effectively isolating the internal production environment from the unpredictability of the global software supply chain.
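One common way to absorb such spikes is request coalescing, where concurrent requests for the same artifact share a single upstream fetch. The sketch below is one possible implementation under that assumption; the `SingleFlight` class and the `demo` helper are hypothetical, and the source does not say which mechanism a given access plane uses.

```python
import threading
import time


class SingleFlight:
    """Coalesce concurrent requests for the same key into one upstream fetch."""

    def __init__(self, fetch):
        self._fetch = fetch
        self._lock = threading.Lock()
        self._inflight = {}  # key -> shared entry for the fetch in progress

    def get(self, key):
        with self._lock:
            entry = self._inflight.get(key)
            leader = entry is None
            if leader:
                entry = {"done": threading.Event(), "value": None, "error": None}
                self._inflight[key] = entry
        if leader:
            try:
                entry["value"] = self._fetch(key)
            except Exception as exc:  # followers re-raise the same error
                entry["error"] = exc
            finally:
                with self._lock:
                    del self._inflight[key]
                entry["done"].set()
        else:
            entry["done"].wait()
        if entry["error"] is not None:
            raise entry["error"]
        return entry["value"]


def demo(workers=20):
    """Start many pulls of one artifact and count how often the upstream is hit."""
    calls = []
    release = threading.Event()

    def slow_fetch(key):
        calls.append(key)
        release.wait(timeout=5)  # hold the fetch open while followers pile up
        return f"manifest-for-{key}"

    flight = SingleFlight(slow_fetch)
    results = []
    threads = [
        threading.Thread(target=lambda: results.append(flight.get("app:1.0")))
        for _ in range(workers)
    ]
    for t in threads:
        t.start()
    time.sleep(0.5)  # give every worker time to join the in-flight request
    release.set()
    for t in threads:
        t.join()
    return len(calls), results


upstream_calls, results = demo()
print(upstream_calls, len(results))
```

In this simulation all workers receive the same manifest while the upstream sees far fewer requests than there are callers, which is the property that keeps a cluster-wide restart from turning into a flood of identical pulls.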

Integrating the Cloud Native Ecosystem

The essential building blocks for a sophisticated artifact access plane are already present within the Cloud Native Computing Foundation landscape, featuring mature tools for image signing, provenance tracking, and workload identity. However, these individual primitives often operate in silos, requiring complex, manual integration to be truly effective within a production environment. An artifact access plane functions as the critical orchestration layer that composes these independent tools into a unified, cohesive control system. By aligning industry standards like the OCI Distribution Specification with modern policy engines and identity providers, organizations can create a framework that ensures every single artifact entering the environment is verified and optimized without having to reinvent the underlying infrastructure.

This integration allows for a more sophisticated level of automation in which security metadata, such as SBOMs and vulnerability reports, is automatically indexed and associated with artifacts as they pass through the access plane. Instead of treating a container image as a simple binary blob, the access plane views it as a rich data object with an associated history and trust profile. This holistic view enables the system to make intelligent decisions, such as blocking a deployment when a new critical vulnerability is discovered in a base image, even if that image was previously approved. By serving as the connective tissue between the various security and observability tools in the CNCF stack, the access plane transforms a collection of disparate utilities into a proactive defense mechanism that scales alongside the organization’s growth.
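The point about previously approved images can be sketched by evaluating deployability at pull time against the current advisory set instead of storing a one-time approval. The record layout, the package name `libexample@1.2.3`, and the placeholder digest are hypothetical; a real system would draw this data from SBOMs and a vulnerability feed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ArtifactRecord:
    digest: str
    packages: frozenset  # package identifiers taken from the artifact's SBOM


# Packages currently known to carry a critical vulnerability (starts empty here).
critical_advisories = set()


def is_deployable(record: ArtifactRecord) -> bool:
    """Re-check the SBOM against today's advisories on every request."""
    return not (record.packages & critical_advisories)


record = ArtifactRecord(
    digest="sha256:0000",  # placeholder digest for illustration
    packages=frozenset({"libexample@1.2.3", "libother@4.5.6"}),
)

approved_at_build = is_deployable(record)
critical_advisories.add("libexample@1.2.3")  # a new advisory is published later
approved_after_advisory = is_deployable(record)
print(approved_at_build, approved_after_advisory)
```

Because the decision is recomputed from indexed metadata, the same digest that passed at build time is blocked once the advisory lands, with no rebuild or rescan of the image itself.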

Shifting Governance Left for Developer Velocity

A centralized access plane has a profound impact on the developer experience by transforming compliance from a manual hurdle into an implicit, automated part of the daily workflow. In traditional, fragmented systems, developers often struggle with opaque policy checks that vary wildly between development, staging, and production environments, leading to a “works on my machine” syndrome that is difficult to debug. By implementing a unified access layer, the organization can bake security and trust constraints directly into the retrieval mechanism. This provides a near-instant feedback loop for engineers, ensuring that any artifact pulled into a local development environment already meets the rigorous criteria required for a production rollout, thereby eliminating unpleasant surprises during the release cycle.

This shift in responsibility allows development teams to move faster and with greater confidence, as the artifact access plane handles the heavy lifting of governance and optimization in the background. Engineers are free to focus on writing code and building features, knowing that the platform infrastructure is automatically managing the complexities of artifact sourcing, security verification, and performance tuning. This model fosters a culture of “secure by design,” where the path of least resistance for the developer is also the most secure and efficient path for the organization. Ultimately, treating artifact access as a first-class control plane is the logical next step in the evolution of cloud-native infrastructure, providing the necessary coherence and trust required to navigate an increasingly complex and fragmented digital landscape.