The financial reality of modern enterprise computing has reached a point where the sheer volume of cloud billing data exceeds the cognitive capacity of even the most diligent human financial analysts. While the initial promise of a multi-cloud strategy focused on the freedom to choose the best-of-breed services from AWS, Azure, and Google Cloud, the operational aftermath has created a tangled web of overlapping expenses. Organizations often find themselves trapped in a paradoxical state where the very agility they sought to gain is now being drained by a “hidden tax” of unmanaged complexity. This fiscal erosion does not happen all at once; it occurs through thousands of tiny, invisible decisions made by automated scripts and distributed engineering teams. As these environments expand, the distance between a developer’s deployment command and the actual cost incurred on a monthly statement becomes an unbridgeable chasm for manual oversight.

The concept of accumulated invisibility has become the primary antagonist in the story of modern digital transformation. In a single-provider environment, a company might struggle with waste, but the billing language remains consistent. However, as soon as a second or third provider enters the ecosystem, the vocabulary of infrastructure changes entirely. One provider might bill for compute by the second, while another rounds up to the nearest hour; one might offer deep discounts for committed use that are incompatible with the ephemeral nature of another provider’s spot instances. This lack of a Rosetta Stone for cloud finance leads to a scenario where waste is not merely a mistake—it is an inherent property of the system. Without a reasoning layer capable of interpreting these disparate signals, the cloud remains a black box that consumes capital with relentless efficiency.

The Hidden Tax of Digital Agility

The migration toward a multi-cloud architecture is frequently championed as a safeguard against vendor lock-in, yet this strategic independence often carries a heavy, unforeseen price tag. When engineering teams are empowered to select the tools that best suit their immediate technical needs, the centralized visibility required for financial health usually suffers. This fragmentation creates an environment where the connection between a specific deployment and the corporate treasury is obscured. What was once a manageable monthly bill has transformed into a multi-million-row spreadsheet that no human can realistically audit. This disconnect is the “hidden tax,” a percentage of every IT budget that is lost to the friction of managing diverse platforms that were never designed to communicate with each other regarding their economic impact.

Furthermore, this tax manifests through the phenomenon of resource sprawl, which has become an epidemic in decentralized organizations. In the rush to deliver new features, test clusters are spun up and forgotten, unattached storage volumes continue to accrue charges long after their parent virtual machines are deleted, and over-provisioned instances sit idle during weekends and holidays. These resources are often referred to as “ghost infrastructure” because they do not cause system failures or performance bottlenecks; instead, they exist in a state of quiet decay, eroding budgets without providing a single cent of value. Because these resources do not trigger operational alerts, they remain active indefinitely, creating a persistent drain on capital that could otherwise be allocated to innovation or research.
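A minimal sketch of how such ghost resources might be flagged from an inventory export follows. The record fields (`attached_to`, `avg_cpu_pct`), the idle threshold, and all costs are hypothetical and do not correspond to any provider's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Resource:
    resource_id: str
    kind: str                    # "volume" or "instance"
    monthly_cost: float          # USD, hypothetical figures
    attached_to: Optional[str]   # parent instance ID for volumes, else None
    avg_cpu_pct: float = 0.0     # 30-day average CPU, instances only


def find_ghosts(resources, idle_cpu_pct=2.0):
    """Return resources that accrue charges but show no sign of use."""
    ghosts = []
    for r in resources:
        if r.kind == "volume" and r.attached_to is None:
            ghosts.append(r)     # orphaned storage volume
        elif r.kind == "instance" and r.avg_cpu_pct < idle_cpu_pct:
            ghosts.append(r)     # instance that is effectively idle
    return ghosts


inventory = [
    Resource("vol-a", "volume", 40.0, attached_to=None),
    Resource("vol-b", "volume", 40.0, attached_to="vm-1"),
    Resource("vm-1", "instance", 300.0, attached_to=None, avg_cpu_pct=35.0),
    Resource("vm-2", "instance", 150.0, attached_to=None, avg_cpu_pct=0.5),
]

ghosts = find_ghosts(inventory)
wasted = sum(r.monthly_cost for r in ghosts)
print([r.resource_id for r in ghosts], wasted)
```

In this toy inventory the orphaned volume and the idle instance are flagged, while the attached volume and the busy instance are left alone.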

The traditional approach to solving this problem involved monthly reviews and manual cleanup efforts, but these methods are fundamentally mismatched with the speed of the modern cloud. By the time a financial team identifies a spike in spending, the capital has already been spent, and the context of why those resources were created may have already vanished from the collective memory of the engineering team. This reactive posture ensures that organizations are always one step behind their own infrastructure. To reclaim control, the focus must shift away from periodic audits toward a model of continuous, real-time oversight that can keep pace with the millisecond-fast deployments that define contemporary software development.

Why Multi-Cloud Environments Are Financial Minefields

Navigating the financial landscape of a multi-cloud strategy is akin to walking through a minefield where the rules of engagement change with every step. Each major cloud provider utilizes a proprietary set of billing increments and unique definitions for fundamental services. For example, the way network egress is calculated—the cost of moving data out of a cloud environment—varies wildly depending on the destination, the volume of data, and the specific routing used. These discrepancies make a “side-by-side” cost comparison nearly impossible for human analysts, as they are essentially trying to compare weights and measures in three different languages simultaneously. This complexity provides a fertile ground for financial leakage, as the cheapest compute option on one platform might be offset by exorbitant data transfer fees on another.
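The effect of differing billing increments can be illustrated with a small calculation. The sketch below assumes a hypothetical hourly rate and compares a provider that bills by the second with one that rounds each run up to a full hour; the numbers are illustrative, not real price-list values.

```python
import math


def billed_cost(runtime_seconds, hourly_rate, increment_seconds):
    """Cost when usage is rounded up to the provider's billing increment."""
    billed_units = math.ceil(runtime_seconds / increment_seconds)
    billed_seconds = billed_units * increment_seconds
    return billed_seconds / 3600 * hourly_rate


# A 10-minute job run 100 times at a hypothetical $0.36/hour rate.
runs, runtime, rate = 100, 600, 0.36
per_second = runs * billed_cost(runtime, rate, increment_seconds=1)
per_hour = runs * billed_cost(runtime, rate, increment_seconds=3600)
print(round(per_second, 2), round(per_hour, 2))
```

With identical list prices, the hourly-rounded bill for these short jobs comes out six times higher, which is the kind of gap a side-by-side comparison of rates alone would miss.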

Fragmentation also extends into the realm of storage tiering and instance families. A high-memory instance on one cloud may have a completely different performance profile and price point than a seemingly equivalent instance on a competing platform. This lack of standardization prevents organizations from achieving true portability; they may move a workload to save on compute costs, only to find that the storage performance requirements necessitate a more expensive tier that negates the initial savings. Without a way to normalize this data into a single source of truth, organizations remain stuck in a perpetual cycle of guesswork. They pay for capacity they do not need while simultaneously struggling to maintain the performance levels required for critical user experiences, all while operating under the illusion of choice.

Moreover, the sheer volume of telemetry data generated by these environments creates a “signal-to-noise” problem that paralyzes decision-making. In a multi-cloud setup, an organization might be receiving millions of metrics per minute regarding CPU usage, memory pressure, disk I/O, and network latency across thousands of nodes. Identifying which of these metrics correlates with unnecessary spending requires a level of pattern recognition that exceeds human capability. The result is a defensive posture where departments over-provision resources out of a fear of downtime, essentially paying a “safety premium” to avoid the risk of underperformance. This cultural tendency toward over-provisioning, combined with the structural complexity of multi-cloud billing, ensures that the cloud remains one of the most significant and least understood line items on the balance sheet.

Transforming Infrastructure: AI-Driven Intelligence

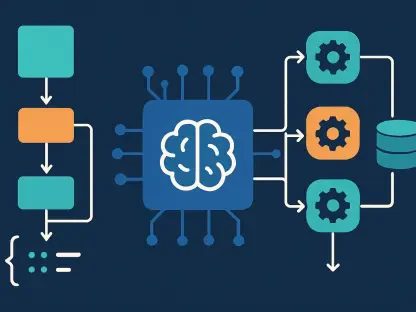

Artificial Intelligence serves as the essential reasoning layer that bridges the gap between complex engineering actions and their financial outcomes. By moving beyond static spreadsheets and manual oversight, AI transforms cloud infrastructure into a dynamic, self-optimizing asset that can reason about its own costs. The primary role of AI in this context is to ingest the massive streams of telemetry and billing data that have historically overwhelmed human teams. Once this data is ingested, AI models can identify patterns and anomalies that would be impossible to spot manually. This shift represents a move from passive reporting to active intelligence, where the system is capable of not only seeing what is happening but also understanding the “why” behind the numbers.

The first major breakthrough provided by AI is the solution to the data normalization crisis. AI models are well suited to aggregating and normalizing disparate billing streams into a standardized language. By calculating universal metrics such as cost per vCPU hour or cost per gigabyte across every provider, AI creates the visibility required to identify spending anomalies in real time. Instead of waiting for a monthly invoice to discover a configuration error, an AI-driven system can flag a sudden spike in egress fees within minutes of its occurrence. This immediate feedback loop allows organizations to treat cost as a functional requirement, similar to how they treat uptime or security, ensuring that financial health is baked into the operational fabric of the company.
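The two ideas in this paragraph, a universal unit metric and near-real-time spike detection, can be sketched in a few lines. The record schema and all figures below are hypothetical, and the z-score check is a simple statistical stand-in for the AI models the text describes, not a description of any particular product.

```python
from statistics import mean, stdev


def cost_per_vcpu_hour(record):
    """Normalize one billing record to USD per vCPU-hour."""
    vcpu_hours = record["vcpus"] * record["hours"]
    return record["cost_usd"] / vcpu_hours


def is_spike(history, latest, threshold=3.0):
    """Flag `latest` if it is more than `threshold` standard deviations
    above the mean of recent history."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return latest > mu
    return (latest - mu) / sigma > threshold


# Hypothetical records already mapped to a shared schema.
records = [
    {"provider": "aws",   "cost_usd": 96.0,  "vcpus": 4, "hours": 600},
    {"provider": "azure", "cost_usd": 130.0, "vcpus": 8, "hours": 325},
    {"provider": "gcp",   "cost_usd": 63.0,  "vcpus": 2, "hours": 700},
]
unit_costs = {r["provider"]: round(cost_per_vcpu_hour(r), 4) for r in records}
print(unit_costs)

# Hypothetical hourly egress charges in USD, followed by a new reading.
egress_usd_per_hour = [10.0, 11.0, 9.5, 10.5, 10.0, 9.0]
print(is_spike(egress_usd_per_hour, 42.0), is_spike(egress_usd_per_hour, 10.8))
```

Once every record is expressed in the same unit, the providers become directly comparable, and the same normalized stream can feed the spike check.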

Beyond simple visibility, AI introduces the capability for predictive capacity management. Traditional auto-scaling mechanisms are limited because they are reactive; they wait for a threshold to be breached before taking action. By the time a system scales up to meet a traffic spike, the user experience may have already suffered. AI-driven systems, however, can use sequence models such as Long Short-Term Memory (LSTM) networks to forecast demand based on historical patterns, seasonal trends, and even external events. This allows the infrastructure to expand in anticipation of traffic and, perhaps more importantly, to contract the moment it wanes. This predictive approach reduces the need for expensive “buffer” capacity, allowing organizations to run their systems closer to peak efficiency without sacrificing the stability or responsiveness that customers expect.
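To make the forecast-then-scale idea concrete without a deep-learning dependency, the sketch below swaps the LSTM for a much simpler seasonal-average forecast. It is an illustration of predictive scaling in general, not of the LSTM approach itself; the traffic numbers, per-instance capacity, and headroom factor are all assumptions.

```python
import math


def seasonal_forecast(history, season_length, horizon):
    """Forecast each future step as the mean of past values at the same
    position in the season (for example, the same hour of day).
    Assumes `history` starts at the beginning of a season."""
    forecast = []
    for step in range(horizon):
        phase = (len(history) + step) % season_length
        same_phase = history[phase::season_length]
        forecast.append(sum(same_phase) / len(same_phase))
    return forecast


def instances_needed(requests_per_sec, capacity_per_instance, headroom=1.2):
    """Instances required to serve the forecast with a safety margin."""
    return max(1, math.ceil(requests_per_sec * headroom / capacity_per_instance))


# Two "days" of a 4-slot daily cycle (hypothetical requests per second).
history = [100, 400, 900, 300,
           120, 440, 1100, 320]
forecast = seasonal_forecast(history, season_length=4, horizon=4)
plan = [instances_needed(f, capacity_per_instance=250) for f in forecast]
print(forecast, plan)
```

The resulting plan scales up ahead of the expected midday peak and back down afterwards, rather than waiting for a utilization threshold to be breached.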

Expert Perspectives: The Evolution of FinOps

Industry consensus among technology leaders suggests that Cloud Financial Operations, or FinOps, must evolve from a practice of manual auditing to one centered on an automated waste detection loop. Experts frequently point out that the era of the “cloud accountant” who analyzes past spending is coming to a close, replaced by the era of the “cloud economist” who leverages AI to manage future resources. Research into reinforcement learning has shown that AI can act as a sophisticated placement engine, making autonomous decisions about where a workload should live by weighing regional price differences against network egress fees and latency requirements. This transforms multi-cloud from a passive architecture that simply exists into a proactive economic strategy that actively seeks the most efficient path for every bit of data.
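The trade-off such a placement engine weighs can be sketched as a plain cost function. This is a greedy, rule-based illustration rather than reinforcement learning, and the region names, prices, and latencies are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    compute_usd_per_hour: float
    egress_usd_per_gb: float
    latency_ms: float


def monthly_cost(region, hours, egress_gb):
    """Compute plus data-transfer cost for one workload in one region."""
    return region.compute_usd_per_hour * hours + region.egress_usd_per_gb * egress_gb


def choose_region(regions, hours, egress_gb, max_latency_ms):
    """Cheapest region that meets the latency requirement, or None."""
    eligible = [r for r in regions if r.latency_ms <= max_latency_ms]
    if not eligible:
        return None
    return min(eligible, key=lambda r: monthly_cost(r, hours, egress_gb))


# Hypothetical regions on three hypothetical providers.
regions = [
    Region("cloud-a/us-east", 0.10, 0.09, 40),
    Region("cloud-b/eu-west", 0.08, 0.12, 95),
    Region("cloud-c/ap-south", 0.06, 0.11, 210),
]
heavy_egress = choose_region(regions, hours=720, egress_gb=2000, max_latency_ms=100)
light_egress = choose_region(regions, hours=720, egress_gb=100, max_latency_ms=100)
print(heavy_egress.name, light_egress.name)
```

The same workload lands in different regions depending on how much data it ships out: with heavy egress, the region with pricier compute but cheaper transfer wins, and the cheapest compute option is excluded outright by the latency limit.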

Leading practitioners emphasize that “ghost” infrastructure is no longer a problem that can be solved with a once-a-quarter cleanup script. Instead, it requires a continuous scanning environment where AI agents evaluate the “shape” of every workload. This involves analyzing peak usage, burst patterns, and memory-to-CPU ratios over long periods. This risk-aware approach ensures that a server with low average usage but critical hourly spikes is not downsized to the point of failure. By balancing aggressive savings with the necessity of system stability, AI helps build a bridge of trust between the finance department, which wants to cut costs, and the engineering department, which wants to ensure uptime. This alignment of interests is perhaps the most significant cultural benefit of AI integration.
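The difference between sizing on averages and sizing on the workload's shape can be shown with a short sketch. The 99th-percentile rule, the 70% target utilization, and the sample data are assumptions chosen for illustration.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend_vcpus(cpu_pct_samples, current_vcpus, target_util=70.0):
    """Size for the 99th-percentile load rather than the average."""
    peak = percentile(cpu_pct_samples, 99)
    needed = current_vcpus * peak / target_util
    return max(1, math.ceil(needed))


# Hypothetical 8-vCPU server: mostly idle, with a short recurring spike.
samples = [5.0] * 95 + [60.0] * 5
avg = sum(samples) / len(samples)
naive = max(1, math.ceil(8 * avg / 70.0))   # what an average-only rule would pick
safe = recommend_vcpus(samples, current_vcpus=8)
print(avg, naive, safe)
```

An average-only rule would shrink this server to a single vCPU, while the peak-aware rule trims it only slightly, which is the behavior the risk-aware approach is meant to guarantee.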

Furthermore, the integration of AI into FinOps is beginning to incorporate broader corporate goals, such as sustainability and carbon footprint reduction. Experts are now seeing models that can prioritize workload placement not only based on cost but also on the availability of renewable energy in a specific region. This means that a non-critical batch processing job might be moved to a data center in a region where wind or solar power is currently at its peak, even if the compute cost is slightly higher than a fossil-fuel-powered alternative. This multi-dimensional optimization highlights the future of the cloud: a highly intelligent, self-regulating system that aligns IT operations with the economic and ethical objectives of the entire organization.

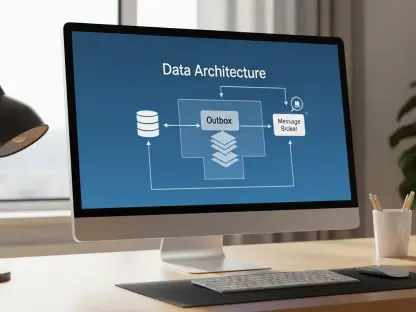

Frameworks: Implementing Autonomous Cloud Governance

To successfully integrate AI into cloud operations, organizations are encouraged to adopt a structured framework that moves toward a state of “Continuous Intelligence.” This transition begins with the establishment of automated guardrails that replace traditional, manual approval processes. By integrating AI-driven recommendations directly with Infrastructure as Code tools like Terraform or Pulumi, organizations can implement what are known as “canary rollouts” for cost-saving changes. This allows the system to test a specific optimization—such as a different instance type or a new storage tier—on a small, non-critical scale before applying it across the entire global environment. This ensures that performance remains intact and provides a safety net that encourages experimentation with more aggressive optimization strategies.
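A canary rollout for a cost-saving change ultimately comes down to a promote-or-rollback decision. The sketch below shows one possible gate; the metric names and the 5% latency tolerance are hypothetical, and the step that would hand the result to an Infrastructure as Code tool is deliberately left out.

```python
def canary_verdict(baseline, canary, max_latency_regression=0.05):
    """Promote a cost-saving change only if it is cheaper and its latency
    stays within the allowed regression relative to the baseline."""
    latency_limit = baseline["p95_latency_ms"] * (1 + max_latency_regression)
    latency_ok = canary["p95_latency_ms"] <= latency_limit
    cheaper = canary["hourly_cost_usd"] < baseline["hourly_cost_usd"]
    return "promote" if latency_ok and cheaper else "rollback"


# Hypothetical measurements from a small, non-critical slice of traffic.
baseline = {"p95_latency_ms": 120.0, "hourly_cost_usd": 0.40}
good_canary = {"p95_latency_ms": 123.0, "hourly_cost_usd": 0.28}
slow_canary = {"p95_latency_ms": 150.0, "hourly_cost_usd": 0.20}

print(canary_verdict(baseline, good_canary), canary_verdict(baseline, slow_canary))
```

The cheaper instance type is promoted only when its latency stays within tolerance; the even cheaper but slower candidate is rolled back.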

A robust governance strategy also involves defining specific “zones of trust” where different levels of autonomy are permitted. For example, development and staging environments might be designated for 100% autonomous optimization, allowing the AI to shut down unused resources or resize instances without any human intervention. In contrast, production databases or customer-facing APIs might require a “human-in-the-loop” model, where the AI provides a data-backed recommendation that must be approved by a senior engineer. This tiered approach allows the organization to capture the majority of easy savings through automation while maintaining high-touch control over the most sensitive parts of the business. It respects the complexity of mission-critical systems while ruthlessly eliminating waste in the background.
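One way to express such zones of trust is a small policy table that routes each recommendation by environment. The environment names and the two actions below are illustrative assumptions, not a standard.

```python
from enum import Enum


class Action(Enum):
    APPLY = "apply automatically"
    REQUEST_APPROVAL = "queue for human approval"


# Hypothetical mapping of environment tags to autonomy levels.
ZONE_POLICY = {
    "dev": Action.APPLY,
    "staging": Action.APPLY,
    "production": Action.REQUEST_APPROVAL,
}


def route_recommendation(environment):
    """Unknown environments default to the most conservative action."""
    return ZONE_POLICY.get(environment, Action.REQUEST_APPROVAL)


for env in ("dev", "production", "unlabeled"):
    print(env, "->", route_recommendation(env).value)
```

Defaulting unknown or unlabeled environments to human approval keeps a missing tag from silently granting full autonomy.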

Finally, the success of these frameworks must be measured through specific, quantifiable Key Performance Indicators that go beyond simple “total spend” metrics. Organizations are focusing on unit cost reduction—decreasing the cost per user transaction or per gigabyte of data processed—which proves that the infrastructure is becoming more efficient even as the business grows. Other critical metrics include prediction accuracy, which compares forecasted demand against actual usage, and stability metrics to ensure that optimization efforts are not inadvertently increasing latency. By tracking these data points, leadership can see the tangible impact of AI on the bottom line, proving that cloud optimization is not just a technical exercise but a core driver of business profitability and competitive advantage.
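Two of these indicators reduce to simple arithmetic. The sketch below computes unit cost per transaction and forecast error as mean absolute percentage error (MAPE, one common choice among several); all figures are hypothetical.

```python
def unit_cost(total_cost_usd, transactions):
    """Cloud spend divided by the business output it supported."""
    return total_cost_usd / transactions


def mape(forecast, actual):
    """Mean absolute percentage error; lower means better forecasts.
    Assumes no actual value is zero."""
    errors = [abs(f - a) / a for f, a in zip(forecast, actual)]
    return 100 * sum(errors) / len(errors)


# Hypothetical quarters: total spend rises while cost per transaction falls.
q1 = unit_cost(100_000, 20_000_000)
q2 = unit_cost(120_000, 30_000_000)
change_pct = (q2 - q1) / q1 * 100

forecast_error = mape(forecast=[110, 420, 1000], actual=[100, 400, 1250])
print(round(change_pct, 1), round(forecast_error, 2))
```

Here total spend grows by 20% while unit cost drops by 20%, which is the distinction a "total spend" metric alone would hide.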

The shift toward AI-driven multi-cloud optimization represents a fundamental change in how the relationship between technology and finance is managed. Organizations that move away from reactive, manual auditing toward proactive, autonomous systems put themselves in a much stronger position to handle the volatility of the digital market. By treating cost as a real-time stream of data rather than a monthly post-mortem, these companies can turn their cloud infrastructure from a growing liability into a precisely tuned economic engine. Predictive scaling and intelligent rightsizing shrink the massive buffers of idle capacity that have long characterized enterprise IT.

Moreover, the transition to autonomous governance models allows engineering teams to focus on innovation rather than infrastructure maintenance. AI-driven guardrails help ensure that every deployment is optimized for both performance and cost from the moment it goes live, reducing the human error that drives much fiscal waste. “Continuous Intelligence” reframes the complexity of the multi-cloud not as an insurmountable problem, but as a data challenge that calls for a machine-learning solution. Businesses that embrace these advanced FinOps strategies stand to gain a lasting advantage, operating with a level of agility and efficiency that is difficult to attain through traditional methods.