Modern enterprise infrastructure has become complex enough that a perfectly sealed perimeter is no longer a realistic goal for security teams. Recent telemetry suggests that 87% of organizations have at least one exploitable vulnerability in a production environment, which points to a deep fragility in contemporary software supply chains. Measured per service rather than per organization, roughly 40% of active services run with known flaws that could, under the right conditions, let attackers gain unauthorized access or disrupt operations. The sheer volume of findings from scanning tools often produces “alert fatigue,” but the data shows that the core problem is not the mere existence of bugs. What matters is whether they are reachable and what damage they could do. Security is therefore less about total prevention and more about precise prioritization in a landscape where exposure is the norm, and that requires understanding how specific technologies behave under real-world conditions.
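The 87% and 40% figures use different denominators (organizations versus individual services), so they do not contradict each other. A minimal sketch over a hypothetical toy inventory, assuming a simple `Service` record with an exploitability flag (all names invented for illustration), shows how every organization can be exposed while only a minority of its services are:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Service:
    org: str                    # owning organization (hypothetical)
    name: str                   # service name (hypothetical)
    has_exploitable_vuln: bool  # at least one exploitable flaw in production?


def org_exposure_rate(services: list[Service]) -> float:
    """Fraction of organizations with at least one exploitable service."""
    orgs = {s.org for s in services}
    exposed = {s.org for s in services if s.has_exploitable_vuln}
    return len(exposed) / len(orgs)


def service_exposure_rate(services: list[Service]) -> float:
    """Fraction of individual services that have an exploitable flaw."""
    return sum(s.has_exploitable_vuln for s in services) / len(services)


# Hypothetical inventory: two organizations, five services, two of them exposed.
inventory = [
    Service("acme", "api", True),
    Service("acme", "billing", False),
    Service("acme", "search", False),
    Service("globex", "web", True),
    Service("globex", "worker", False),
]

print(org_exposure_rate(inventory))      # 1.0
print(service_exposure_rate(inventory))  # 0.4
```

A single exposed service is enough to count an organization as affected, which is why organization-level rates run much higher than service-level ones.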

Analyzing the Disparity Across Development Frameworks

Vulnerability Distributions Across Modern Programming Languages

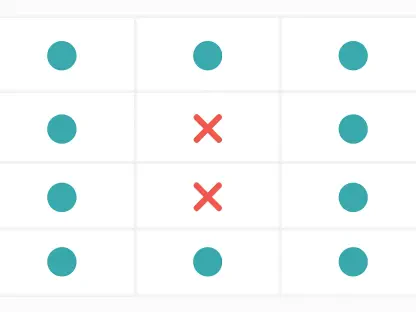

An organization's risk profile is heavily influenced by its choice of programming language, with some ecosystems showing much higher rates of exposure than others. Java services are the most frequently affected: approximately 59% contain at least one exploitable flaw in production. .NET follows at 47%, and even Rust, a language known for memory safety, shows a 40% rate, because memory safety does not protect against logic flaws or vulnerable third-party dependencies. These figures do not mean that one language is inherently more “insecure” than another; they largely reflect how libraries and dependencies are managed within each community. For instance, Java's heavy reliance on legacy frameworks often keeps old vulnerabilities active far longer than in newer ecosystems. Security teams should therefore move beyond generic scanning and tailor their defenses to the architectural quirks and dependency-management habits of the languages used across their fleet.

The Necessity of Runtime Context in Threat Prioritization

A significant portion of the security burden stems from a flood of “critical” alerts that pose no immediate threat in a specific environment. Research shows that although many vulnerabilities are initially flagged with high severity scores, only about 18% retain that status once runtime context and active threat data are taken into account. The gap is especially striking in .NET environments, where 98% of dependency-related vulnerabilities were downgraded after closer inspection showed they were not reachable or exploitable in practice. Without this contextual layer, security operations centers are overwhelmed by noise and struggle to identify the genuine threats that need immediate intervention. Runtime visibility lets organizations filter out non-exploitable flaws and focus limited resources on the small fraction of issues that truly endanger their systems, turning security from a reactive checklist into a data-driven practice.
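Contextual downgrading of this kind can be sketched as a small rule set applied on top of the scanner's base severity. The `Finding` fields, the placeholder IDs, and the downgrade rules below are hypothetical and only illustrate the idea; they are not the scoring method used in the research:

```python
from dataclasses import dataclass

# Ordered from least to most severe.
SEVERITIES = ["low", "medium", "high", "critical"]


@dataclass(frozen=True)
class Finding:
    vuln_id: str            # placeholder identifier, not a real CVE
    base_severity: str      # severity reported by the scanner
    reachable: bool         # is the vulnerable code actually loaded/called at runtime?
    internet_exposed: bool  # does the service receive traffic from the internet?
    exploit_known: bool     # is a public exploit or in-the-wild exploitation known?


def adjusted_severity(f: Finding) -> str:
    """Hypothetical rules: unreachable code drops to 'low'; each missing
    risk factor (no internet exposure, no known exploit) lowers one step."""
    if not f.reachable:
        return "low"
    level = SEVERITIES.index(f.base_severity)
    if not f.internet_exposed:
        level -= 1
    if not f.exploit_known:
        level -= 1
    return SEVERITIES[max(level, 0)]


findings = [
    Finding("VULN-A", "critical", reachable=True, internet_exposed=True, exploit_known=True),
    Finding("VULN-B", "critical", reachable=False, internet_exposed=True, exploit_known=True),
    Finding("VULN-C", "critical", reachable=True, internet_exposed=False, exploit_known=True),
    Finding("VULN-D", "high", reachable=True, internet_exposed=False, exploit_known=False),
]

for f in findings:
    print(f.vuln_id, f.base_severity, "->", adjusted_severity(f))

still_critical = [f.vuln_id for f in findings if adjusted_severity(f) == "critical"]
print(still_critical)  # ['VULN-A']
```

Only the finding that is reachable, exposed, and actively exploitable keeps its critical rating; the rest move down the queue instead of competing for immediate attention.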

Navigating the Trade-Offs of Deployment Speed

The Growing Burden of Technical Debt and Outdated Libraries

Keeping dependencies current has become a secondary priority for many rapid-release teams, and technical debt has grown as a result. The median software dependency is now approximately 278 days out of date, a notable increase in lag compared with recent cycles. This delay is not benign: older library versions are far more likely to contain flaws that researchers have since documented and cataloged, and that are often fixed only in later releases. Library versions released in 2024 carry nearly three times as many vulnerabilities as those released in 2026, a clear correlation between library age and risk. Updating everything as fast as possible is not the answer either, since it can introduce instability or unvetted code. The middle ground is disciplined dependency management, in which teams schedule regular maintenance windows to prune outdated components before they become a liability to the whole organization.
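A minimal sketch of measuring dependency lag, assuming lag is counted as the days between the release of the version in use and the release of the newest available version. The package names, dates, and the 180-day threshold are hypothetical:

```python
from datetime import date
from statistics import median

# Hypothetical inventory: (package, release date of version in use, release date of latest version).
deps = [
    ("lib-a", date(2024, 1, 10), date(2024, 11, 2)),
    ("lib-b", date(2025, 3, 1), date(2025, 3, 1)),   # already on the latest version
    ("lib-c", date(2023, 6, 15), date(2025, 1, 20)),
]

# Hypothetical policy threshold for scheduling an upgrade in the next maintenance window.
STALE_AFTER_DAYS = 180


def lag_days(in_use: date, latest: date) -> int:
    """Days between the release of the version in use and the newest release."""
    return (latest - in_use).days


lags = [lag_days(in_use, latest) for _, in_use, latest in deps]
print(median(lags))  # 297

stale = [name for name, in_use, latest in deps if lag_days(in_use, latest) > STALE_AFTER_DAYS]
print(stale)  # ['lib-a', 'lib-c']
```

Tracking the median rather than the mean keeps a few extremely old packages from dominating the figure, while the threshold list gives a concrete backlog to work through during a scheduled maintenance window.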

Supply Chain Integrity: Protecting Pipelines from Silent Changes

The pressure to innovate quickly has, paradoxically, created new supply chain risks tied to how hastily new software versions are adopted. Approximately 50% of organizations adopt new library versions within 24 hours of release, and many fail to apply basic safeguards such as pinning GitHub Actions to specific commit hashes. Referencing an action by a mutable tag or branch exposes pipelines to “silent changes”: if the tag is moved or the upstream repository is compromised, different and possibly malicious code runs without the user’s knowledge. To mitigate these risks, organizations should pin critical dependencies to full-length commit SHAs and adopt AI-assisted workflows for better prioritization. Focusing on actual exploitability rather than the mere presence of a vulnerability moves security practice beyond simple patching, letting development teams maintain high velocity without sacrificing the integrity of their production environments or leaving doors open to sophisticated supply chain attacks.
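Two of these safeguards can be checked mechanically. The sketch below scans workflow text with regular expressions rather than a full YAML parser, flags `uses:` references that are not pinned to a full 40-character commit SHA, and adds a hypothetical minimum-release-age gate against adopting versions within hours of release. The action `some-org/lint-action`, the placeholder SHA, and the three-day cooldown are assumptions for illustration:

```python
import re
from datetime import datetime, timedelta, timezone

# Matches `uses: owner/repo[/path]@ref`, optionally as a list item and optionally quoted.
USES_RE = re.compile(r"""^[ \t]*(?:-[ \t]*)?uses:[ \t]*["']?([^\s@#"']+)@([^\s#"']+)""", re.MULTILINE)
FULL_SHA_RE = re.compile(r"[0-9a-f]{40}")

# Hypothetical policy: do not adopt a release until it is at least this old.
MIN_RELEASE_AGE = timedelta(days=3)


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return `action@ref` strings whose ref is not a full 40-character commit SHA."""
    findings = []
    for action, ref in USES_RE.findall(workflow_text):
        if action.startswith("docker://"):
            continue  # container image references are outside this check
        if not FULL_SHA_RE.fullmatch(ref):
            findings.append(f"{action}@{ref}")
    return findings


def old_enough(published_at: datetime, now: datetime) -> bool:
    """True if a release has existed for at least MIN_RELEASE_AGE."""
    return now - published_at >= MIN_RELEASE_AGE


workflow = """
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@0123456789abcdef0123456789abcdef01234567  # placeholder SHA
      - name: Lint
        uses: some-org/lint-action@main
"""

print(unpinned_actions(workflow))  # ['actions/checkout@v4', 'some-org/lint-action@main']

released = datetime(2025, 1, 1, tzinfo=timezone.utc)
print(old_enough(released, released + timedelta(hours=12)))  # False
print(old_enough(released, released + timedelta(days=5)))    # True
```

The regex scan is deliberately simple and will miss unusual YAML forms such as multi-line scalars, so a production check would parse the workflow properly. The cooldown trades a few days of latency for time in which a compromised release is more likely to be detected and pulled.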