The days of a software engineer staring at a blinking cursor and manually typing out every bracket and semicolon have largely vanished, replaced by a sophisticated dance between human intent and machine execution. As we navigate the landscape of 2026, the primary role of the developer has undergone a radical transformation, moving from the “artisanal” creation of logic to the high-level orchestration of autonomous agents. This integration of artificial intelligence into the software development lifecycle has moved beyond simple code completion to full-scale agentic collaboration. The central challenge now lies in establishing standardized coding guidelines designed to bridge the gap between human intuition and algorithmic execution, ensuring that AI-driven development remains maintainable, secure, and scalable over the long term.

The Rise of Agentic Coding and Market Adoption

Current Growth Trends and Industry Adoption Statistics

Recent reports indicate that a vast majority of enterprise-level organizations have integrated AI coding assistants into their daily workflows as a standard operating procedure. Statistical data shows a significant increase in the volume of AI-generated code entering production environments, with some firms reporting that up to 40% of their new codebases are initiated by AI agents. This rapid adoption is fueled by the need to manage increasingly complex service-oriented architectures while maintaining lean engineering teams. Rather than replacing the worker, these tools have become a “force multiplier,” allowing smaller groups to handle infrastructure that previously required dozens of specialized developers.

Real-World Applications and Industry Leaders

Major technology firms and innovative startups are already formalizing “agentic style guides” to govern their collaborative environments and maintain architectural integrity. For instance, teams using tools like GitHub Copilot and Claude, along with internal “agentic skills,” are adopting standardized documentation formats like agents.md to feed specific constraints into large language models. These frameworks are being used to automate repetitive backend tasks, standardize server routes in Express and UI components in React, and ensure that AI-generated telemetry and error handling meet strict compliance standards. This shift ensures that the output is not just functional but also fits the specific stylistic and security requirements of the enterprise.

Insights from Industry Experts and Engineering Leaders

The Migration of Cognitive Load

The consensus among thought leaders suggests that the cognitive load of engineering has migrated from syntax creation to architectural verification and rigorous oversight. Experts emphasize that the greatest challenge is translating “tacit knowledge” (the intuitive sense that lets an experienced engineer spot a code smell) into explicit, deterministic instructions that an AI can follow. Leading architects argue that without these standardized guidelines, AI agents risk producing “unmaintainable messes” that function in isolation but fail to integrate into broader enterprise ecosystems. This evolution requires engineers to think more like educators or conductors, providing the “why” behind every rule to ground the AI’s logic in real-world constraints.

Bridging the Gap: Tacit Knowledge vs. Explicit Documentation

One of the most profound realizations in this new era is the fundamental difference between onboarding a human junior developer and “onboarding” an AI agent. Humans learn through observation and cultural immersion, slowly absorbing stylistic nuances through exposure to the team. Agents, conversely, operate without this contextual intuition; they are incredibly fast but lack the ability to “read between the lines.” Consequently, coding guidelines must move from being aspirational or vague to being highly explicit. This transformation involves turning tribal knowledge into documented facts, ensuring that the AI has no room to deviate from the established path.
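As an illustration of what "highly explicit" can mean in practice, here is a minimal Python sketch that stores each rule together with its rationale and a pair of examples, then renders the set as plain text for an agent context file. The `Guideline` class, the `render_guidelines` function, and the sample rule are all hypothetical; the article does not prescribe any particular format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guideline:
    """One explicit rule, written so an agent does not have to infer intent."""
    rule: str        # the instruction itself, in plain imperative language
    rationale: str   # the "why" that grounds the rule
    good: str        # a short example that follows the rule
    bad: str         # a short example that violates it


def render_guidelines(guidelines: list[Guideline]) -> str:
    """Render rules as plain text suitable for an agent context file."""
    sections = []
    for number, g in enumerate(guidelines, start=1):
        sections.append(
            f"Rule {number}: {g.rule}\n"
            f"Why: {g.rationale}\n"
            f"Do: {g.good}\n"
            f"Don't: {g.bad}"
        )
    return "\n\n".join(sections)


# A vague team norm ("handle errors properly") restated explicitly.
# The wording and the log_and_retry helper are illustrative assumptions.
ERROR_RULE = Guideline(
    rule="Catch specific exception types; never use a bare except clause.",
    rationale="Bare handlers hide failures that on-call engineers need to see.",
    good="except TimeoutError as exc: log_and_retry(exc)",
    bad="except: pass",
)

if __name__ == "__main__":
    print(render_guidelines([ERROR_RULE]))
```

Keeping the rationale and the counterexample next to the rule is one way to write down the context a human junior would otherwise pick up through exposure to the team.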

Future Outlook and Implications for the Development Lifecycle

Evolution of the Human-AI Feedback Loop

The future of coding standards lies in a continuous, iterative feedback flywheel that operates in real time. Rather than remaining static documents buried in a repository, guidelines will evolve into living datasets that update automatically whenever an agent fails a code review or triggers a linting error. This continuous refinement will turn every AI mistake into a permanent improvement of the organizational standard. As these systems become more capable, the documentation itself may be refined by the AI to better explain rules to future iterations of the model, creating a self-optimizing development environment.
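One possible shape for such a feedback loop is sketched below in Python. It assumes an in-memory store and hypothetical rule identifiers; a real setup would persist the data and would likely route proposed rules through human review before accepting them.

```python
from dataclasses import dataclass, field


@dataclass
class GuidelineStore:
    """In-memory stand-in for a 'living' guideline dataset (hypothetical)."""
    rules: dict[str, str] = field(default_factory=dict)     # rule_id -> rule text
    failures: dict[str, int] = field(default_factory=dict)  # rule_id -> times violated

    def record_failure(self, rule_id: str, proposed_rule: str) -> bool:
        """Log a review or lint failure; return True if a new rule was added."""
        self.failures[rule_id] = self.failures.get(rule_id, 0) + 1
        if rule_id in self.rules:
            return False  # rule already documented; only the counter changes
        self.rules[rule_id] = proposed_rule
        return True

    def most_violated(self, limit: int = 3) -> list[str]:
        """Rule ids that fail most often, i.e. candidates for clearer wording."""
        ranked = sorted(self.failures.items(), key=lambda item: (-item[1], item[0]))
        return [rule_id for rule_id, _ in ranked[:limit]]


if __name__ == "__main__":
    store = GuidelineStore()
    store.record_failure("no-bare-except", "Catch specific exception types.")
    store.record_failure("no-bare-except", "Catch specific exception types.")
    store.record_failure("log-context", "Include a request id in every log line.")
    print(store.most_violated())
```

Counting repeat violations separately from the rule text is a deliberate choice in this sketch: a rule that keeps failing after it has been documented is a hint that its wording, not the agent, needs attention.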

Long-Term Benefits and Potential Challenges

While the potential for massive productivity gains is clear, the industry faces the challenge of “architectural chaos” if standards are not strictly enforced from the beginning. The positive outcome is an environment where small teams can manage massive codebases with the precision of a large enterprise, focusing on innovation rather than maintenance. Conversely, the risk remains that over-reliance on AI without robust, example-rich guidelines could lead to technical debt that human reviewers can no longer untangle. Organizations that prioritize the rigorous documentation of human expertise today will hold a significant competitive advantage as the pace of development continues to accelerate.

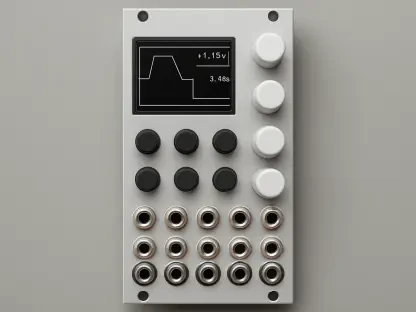

Securing the Enterprise Advantage

Standardizing human-AI collaboration is no longer an optional preference but a foundational requirement for modern software engineering. By transforming intuitive “vibes” into explicit, example-driven guidelines, organizations ensure that AI agents act as reliable partners rather than unpredictable variables. Companies are implementing “Gold Standard” files, end-to-end templates that serve as a blueprint for the AI and provide a north star for every generated line of code. This shift requires a move toward “boring,” objective language in documentation to eliminate ambiguity for non-human collaborators.

To maintain this momentum, engineering leaders are integrating agentic context files directly into their root directories, ensuring that the AI’s “memory” is always tethered to current standards. The focus is shifting toward building robust automated safety nets, where deterministic tools like linters and static analysis work in tandem with generative models to catch errors before they reach production. Ultimately, the successful teams are those that view their coding standards as a living dialogue, where every human intervention is used to train the system to be more autonomous and accurate in the next sprint. Moving forward, the industry is preparing for a landscape where the primary skill of a developer is the ability to translate complex logic into clear, machine-executable directives.
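To make the idea of a deterministic safety net concrete, the following Python sketch uses the standard library `ast` module to reject generated snippets that fail to parse or that contain a bare `except:` clause. The function names `find_violations` and `gate`, and the single rule checked, are assumptions chosen for illustration; production pipelines would typically rely on established linters and static analyzers instead.

```python
import ast


def find_violations(source: str) -> list[str]:
    """Return human-readable findings for one generated Python snippet."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"line {exc.lineno}: syntax error: {exc.msg}"]

    findings = []
    for node in ast.walk(tree):
        # A bare `except:` has no exception type attached to the handler.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append(f"line {node.lineno}: bare except clause")
    return findings


def gate(source: str) -> bool:
    """Accept generated code only if the deterministic checks find nothing."""
    return not find_violations(source)


# Stand-in for model output; risky() is never called, only parsed.
GENERATED = "try:\n    risky()\nexcept:\n    pass\n"

if __name__ == "__main__":
    for finding in find_violations(GENERATED):
        print(finding)
```

Because the check is pure parsing, it gives the same answer on every run, which is the property that lets it backstop a non-deterministic generator.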