The digital economy now rests on a web of microservices so intricate that even a five-minute lapse in availability can wipe out millions in revenue for a large online business. Maintaining this infrastructure has moved beyond the capacity of traditional manual monitoring, leading to a fundamental shift in how global production systems are managed. This transition marks the move from classic Site Reliability Engineering (SRE) and DevOps, characterized by human intervention and static scripts, to AI-augmented models capable of navigating hyperscale architectures. As telemetry volumes grow, organizations are forced to rethink the human role in the Operating Contract that keeps digital platforms alive.

Evolution of Reliability Frameworks in Global Production Systems

At the heart of this evolution remain the foundational pillars of reliability: Service-Level Indicators (SLIs), Service-Level Objectives (SLOs), and Service-Level Agreements (SLAs). SLIs provide the raw data, such as the percentage of successful queries or the 95th-percentile latency, while SLOs define the internal targets for these metrics over time. SLAs represent the contractual promises made to clients, often revolving around the “five nines” (99.999%) benchmark, which permits only about five minutes of downtime per year (roughly 5.26 minutes). Traditionally, these were managed via Error Budgets, a concept that allows teams to spend a specific amount of failure to facilitate rapid feature development. However, the manual application of these budgets is increasingly becoming a bottleneck in modern distributed systems.
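The arithmetic behind these terms can be sketched in a few lines. The following is a minimal illustration, not a reference implementation: the function names are hypothetical, availability is assumed to be measured as a fraction of successful requests, and a year is taken as 365.25 days.

```python
# Illustrative sketch only: names and the 365.25-day year are assumptions.
MINUTES_PER_YEAR = 365.25 * 24 * 60  # 525,960 minutes


def allowed_downtime_minutes(slo: float, window_minutes: float = MINUTES_PER_YEAR) -> float:
    """Downtime an availability SLO permits over the given window."""
    if not 0.0 < slo <= 1.0:
        raise ValueError("slo must be a fraction in (0, 1]")
    return (1.0 - slo) * window_minutes


def availability_sli(successful: int, total: int) -> float:
    """SLI expressed as the fraction of requests that succeeded."""
    if total <= 0:
        raise ValueError("total must be positive")
    return successful / total


def error_budget_remaining(slo: float, successful: int, total: int) -> float:
    """Fraction of the error budget still unspent; negative means overspent."""
    if not 0.0 < slo < 1.0:
        raise ValueError("slo must be a fraction strictly between 0 and 1")
    allowed_failures = (1.0 - slo) * total
    actual_failures = total - successful
    return 1.0 - actual_failures / allowed_failures


if __name__ == "__main__":
    # Five nines over a year
    print(f"{allowed_downtime_minutes(0.99999):.2f} minutes")  # prints "5.26 minutes"
    # A 99.9% SLO with 500 failures in one million requests
    print(f"{error_budget_remaining(0.999, 999_500, 1_000_000):.0%} of budget left")
```

In this framing the SLO fixes the size of the budget, the SLI measures how much of it has been consumed, and the remainder is what a team can still spend on risky changes.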

The transition from human-centric SRE and DevOps to AI-augmented models is driven by the sheer surge in telemetry data and architectural complexity. In older frameworks, engineers relied on static automation to manage deployments, but the current state of hyperscale microservices makes it nearly impossible for a human to track every dependency. This necessitated the integration of intelligent systems designed to maintain global digital infrastructure without the constant need for manual intervention. The goal is to evolve from a state where reliability is a reactive struggle to one where it is an inherent, automated feature of the system architecture itself.

Comparative Functional Analysis: Human-Centric vs. AI-Driven Operations

Operational Efficiency and Incident Metrics

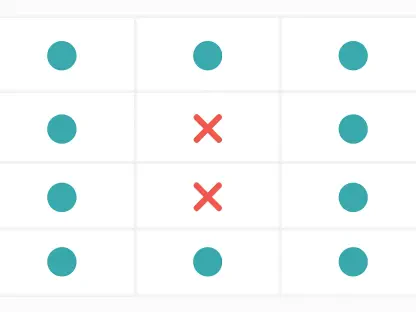

Comparing operational efficiency reveals a stark contrast in how incidents are handled under pressure. In traditional models, human judgment is the primary filter for incoming alerts, which frequently results in debilitating alert fatigue. Engineers must manually sort through thousands of signals to find the root cause of a failure, a process that naturally slows down the Mean Time To Detect (MTTD). When a critical service fails, the reliance on human-centric triage creates a lag that threatens the rigorous 99.999% availability standard, as manual reasoning under stress is inherently prone to error and inconsistency.

Conversely, AI-augmented DevOps leverages intelligent anomaly detection to separate signal from noise far faster than manual triage. By utilizing machine learning models that learn the “normal” state of a global system, these platforms can identify subtle failures before they cross a human-defined threshold. Earlier detection reduces MTTD, while automatically shifting traffic or triggering failovers reduces the Mean Time To Mitigate (MTTM). Consequently, the Mean Time To Resolve (MTTR) is shortened, protecting revenue-critical services from the financial fallout of prolonged outages that human-only teams might struggle to contain.
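To make the contrast with static thresholds concrete, here is a deliberately simple sketch of baseline-driven detection. It substitutes a rolling z-score for the machine learning models described above, so it should be read as a stand-in for the idea rather than as what any production platform does. The class name, window size, and thresholds are all assumptions chosen for illustration.

```python
from collections import deque
from statistics import mean, stdev

# Hypothetical static alert threshold, for comparison only.
STATIC_THRESHOLD_MS = 500.0


class RollingBaselineDetector:
    """Flags values that deviate strongly from a recent rolling baseline."""

    def __init__(self, window: int = 30, z_threshold: float = 3.0) -> None:
        if window < 2:
            raise ValueError("window must hold at least two samples")
        self.samples = deque(maxlen=window)
        self.z_threshold = z_threshold

    def observe(self, value: float) -> bool:
        """Return True if the value is anomalous relative to the baseline."""
        is_anomaly = False
        # Only judge once the window is full, so the baseline is meaningful.
        if len(self.samples) == self.samples.maxlen:
            mu = mean(self.samples)
            sigma = stdev(self.samples)
            if sigma > 0:
                is_anomaly = abs(value - mu) / sigma > self.z_threshold
            else:
                is_anomaly = value != mu
        # Keep anomalies out of the baseline so they do not skew it.
        if not is_anomaly:
            self.samples.append(value)
        return is_anomaly


if __name__ == "__main__":
    detector = RollingBaselineDetector(window=10)
    for latency_ms in [100, 101, 99, 100, 102, 98, 100, 101, 99, 100]:
        detector.observe(latency_ms)
    spike = 140.0
    # The spike is far below the static threshold but far outside the baseline.
    print(spike > STATIC_THRESHOLD_MS, detector.observe(spike))  # prints "False True"
```

One design trade-off in this sketch: excluding anomalies from the baseline keeps a single spike from masking the next one, but it also means a legitimate, permanent shift in traffic would keep alerting until the detector is reset. Real systems typically handle that case with retraining or seasonality-aware models.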

Scalability and Handling System Complexity

System complexity presents another major point of differentiation, particularly as organizations scale to thousands of interconnected microservices. Traditional SRE is often bogged down by “toil”—the repetitive, manual tasks involved in correlating failures across disparate services. As the volume of telemetry data grows, human operators find it impossible to track every dependency, creating a scaling bottleneck where more services require more engineers in a linear, unsustainable fashion. This model eventually breaks when the speed of system change outpaces the speed of human comprehension.

AI-augmented systems overcome this by employing “AI DevOps agents” that function as vendor-agnostic operational participants. These agents interpret complex telemetry in real time, recognizing failure patterns across hundreds of microservices that would overwhelm a human observer. By identifying these patterns autonomously, the system can provide a high-level overview of health without requiring an engineer to manually link every component. This allows for more fluid management of global-scale infrastructure, where the intelligence of the system scales alongside the architecture itself, so that operational overhead does not grow in lockstep with every new service or feature.
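One small piece of this correlation work can be sketched directly: given a dependency map and the set of services currently alerting, narrow the list to services whose own dependencies look healthy. This is a toy heuristic under stated assumptions, not a description of how any particular agent works. The service names and the `depends_on` structure are hypothetical, and the dependency graph is assumed to be acyclic.

```python
from typing import Dict, List, Set


def transitive_dependencies(depends_on: Dict[str, Set[str]], service: str) -> Set[str]:
    """All services reachable by following dependency edges from `service`."""
    seen: Set[str] = set()
    stack = list(depends_on.get(service, ()))
    while stack:
        dep = stack.pop()
        if dep in seen:
            continue
        seen.add(dep)
        stack.extend(depends_on.get(dep, ()))
    seen.discard(service)  # guard against a service listing itself
    return seen


def root_cause_candidates(depends_on: Dict[str, Set[str]], alerting: Set[str]) -> List[str]:
    """Alerting services with no alerting dependency anywhere beneath them."""
    return sorted(
        service
        for service in alerting
        if not (transitive_dependencies(depends_on, service) & alerting)
    )


if __name__ == "__main__":
    # Hypothetical topology: frontend -> checkout -> payments -> db, frontend -> search -> db
    topology = {
        "frontend": {"checkout", "search"},
        "checkout": {"payments"},
        "payments": {"db"},
        "search": {"db"},
        "db": set(),
    }
    print(root_cause_candidates(topology, {"frontend", "checkout", "payments", "db"}))  # prints "['db']"
```

Four alerts collapse to one candidate here because every other alerting service sits above the failing database. A human doing the same reduction across hundreds of services is the toil the text describes.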

Risk Management and Innovation Balance

When managing risk and innovation, traditional SRE uses Error Budgets primarily as a manual signal to halt deployments when stability is threatened. While effective for smaller systems, this reactive approach often slows down innovation, as engineers spend a significant portion of their time “firefighting” instead of improving the system. The balance between shipping new features and maintaining stability remains a constant struggle, often mediated by tense negotiations between development and operations teams over manual interpretations of data and risk tolerance.

AI-augmented DevOps transforms these Error Budgets from passive signals into automated control mechanisms. By operationalizing the budget, the system can automatically throttle or roll back deployments based on real-time performance data, removing the need for human intervention in routine risk management. This frees human engineers to pivot toward high-level architectural resilience and systemic risk prevention. Instead of reactive patching, the focus shifts toward designing self-healing architectures, balancing rapid feature releases with the stability the market demands.
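A budget used as a control mechanism might look roughly like the sketch below. It relies on the common notion of burn rate (the observed error rate divided by the error rate the SLO allows). The policy thresholds, class names, and the three-way decision are assumptions made for illustration; a real rollout controller would also consider time windows and traffic volume.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    THROTTLE = "throttle"
    ROLL_BACK = "roll_back"


@dataclass(frozen=True)
class BudgetPolicy:
    """Hypothetical policy; the thresholds are illustrative, not recommendations."""
    slo: float = 0.999
    throttle_burn_rate: float = 2.0
    rollback_burn_rate: float = 10.0


def burn_rate(failed: int, total: int, slo: float) -> float:
    """Observed error rate divided by the error rate the SLO allows."""
    if not 0.0 < slo < 1.0:
        raise ValueError("slo must be a fraction strictly between 0 and 1")
    if total <= 0:
        return 0.0  # no traffic observed, so no budget consumed
    return (failed / total) / (1.0 - slo)


def decide(failed: int, total: int, policy: BudgetPolicy) -> Action:
    """Map the current burn rate onto a deployment action."""
    rate = burn_rate(failed, total, policy.slo)
    if rate >= policy.rollback_burn_rate:
        return Action.ROLL_BACK
    if rate >= policy.throttle_burn_rate:
        return Action.THROTTLE
    return Action.PROCEED


if __name__ == "__main__":
    policy = BudgetPolicy()
    # 30 failures in 10,000 requests is three times the allowed rate for 99.9%.
    print(decide(30, 10_000, policy).value)  # prints "throttle"
```

A burn rate of 1.0 means the budget would be exactly exhausted at the end of the SLO window. Values above that justify slowing a rollout, and sharply higher values justify reversing it without waiting for a human.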

Challenges and Considerations in the AI Transition

Transitioning to these advanced systems is not without its difficulties. Human-centric models are often built around static threshold monitoring that lacks context, so for many organizations the shift from predefined scripts to interpretative systems requires a massive cultural and technical overhaul. AI-augmented systems demand full-lifecycle integration, stretching from initial deployment to emergency response, to be truly effective. Furthermore, the economic consequences of relying on automated mitigation are significant, as an incorrect automated decision at global scale can have repercussions as severe as the failure it was meant to prevent.

There is also the challenge of trust and transparency when moving away from manual reasoning. While an engineer can explain their thought process during an incident, AI systems can sometimes act as “black boxes,” making it difficult to understand why a specific mitigation path was chosen. Organizations must ensure that their AI DevOps agents are designed with interpretability in mind, allowing humans to audit and refine the system's decision-making logic. Balancing the speed of AI with the oversight of experienced engineers remains a critical consideration for any team managing mission-critical infrastructure.

Strategic Recommendations for Modern Infrastructure

For organizations currently managing high-volume microservices where human intervention has reached a breaking point, the adoption of AI-augmented agents is no longer optional but a strategic necessity. It is recommended to maintain core SRE principles—specifically SLIs and SLOs—as the foundational Operating Contract while utilizing AI as the primary execution engine. This combination ensures that human intent remains at the center of the operation, while AI provides the speed and scalability required to handle the data-heavy realities of the current technological landscape.

Choosing between specific platforms often comes down to how well they integrate with existing telemetry stacks and their ability to provide vendor-agnostic insights. The ultimate goal is to create a frictionless operational environment where systems are engineered to survive subsystem failures without human intervention. By prioritizing AI innovation across the entire stack, organizations can protect their availability and revenue, ensuring that their digital infrastructure remains a competitive advantage rather than a liability in an increasingly complex global economy.

The transition from traditional human-led operations to AI-augmented resilience is a defining moment for global digital infrastructure. By shortening the duration of outages through automated detection and remediation, these systems move beyond the limitations of manual reasoning. Future implementations should prioritize the development of causal AI that explains its actions, allowing engineers to refine automated responses with greater precision. This evolution shifts the focus toward long-term systemic health, so that infrastructure supports continuous innovation without sacrificing the stability that users demand.