We’re thrilled to sit down with Vijay Raina, a renowned expert in enterprise SaaS technology and software architecture. With years of experience guiding organizations through complex cloud migrations and providing thought leadership in software design, Vijay brings a wealth of insight to today’s discussion. In this interview, we dive into the intricate journey of moving large-scale platforms like Stack Overflow to the cloud, exploring the motivations behind such transitions, the hurdles faced, and the strategies that ensure success. From overcoming legacy infrastructure challenges to embracing modern tools like Kubernetes, Vijay sheds light on what it takes to navigate these transformative projects. Let’s get started!

What inspired the decision to migrate a major public platform like Stack Overflow to the cloud, and what specific pain points with traditional data centers drove this shift?

The decision to move to the cloud often stems from the limitations of physical data centers. For a platform like Stack Overflow, which historically ran on servers in specific US locations, the infrastructure was incredibly optimized for performance but lacked flexibility. We hit a wall with scalability and maintenance—managing hardware directly meant constant hands-on intervention, like physically rebooting servers. More importantly, the setup hindered modern software practices, such as enabling team autonomy and deploying standalone services. The cloud offered a way to break free from those constraints, providing the scalability and agility needed to keep pace with growth and innovation.

Can you share some of the key experiences from moving a product like Stack Overflow for Teams to Azure, especially the challenges you encountered early on?

Moving Stack Overflow for Teams to Azure between 2021 and 2023 involved a steep learning curve. We had several false starts, primarily because Teams was deeply entangled with the broader codebase. Disentangling it meant carefully isolating components without breaking functionality. The cloud environment also produced surprises, as our initial assumptions about performance didn’t always hold up. One silver lining was that we containerized core components along the way, which made deployments smoother and gave us a deployable foundation for future migrations.

What were some of the biggest apprehensions when planning to migrate the public Stack Overflow site to the cloud?

The public site migration came with significant concerns, largely because the site was architected around data center assumptions. Latency and performance were huge worries: our code was built with expectations of tight server response times, often under 30 milliseconds, and we weren’t sure the cloud could match that. There was also a lingering fear about whether a platform designed for bare metal servers could even function effectively in a cloud environment. It wasn’t just about moving code; it was about whether the entire system could adapt without compromising the experience for millions of users.
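
To make the latency concern concrete, here is a minimal sketch of how a team might check measured response times against a budget. It assumes the 30 millisecond figure is treated as a 95th-percentile budget; that interpretation, along with the names `LATENCY_BUDGET_MS`, `p95`, and `within_budget`, is hypothetical and not taken from Stack Overflow's tooling.

```python
from statistics import quantiles

# Assumption: the "under 30 milliseconds" expectation is treated as a p95 budget.
LATENCY_BUDGET_MS = 30.0


def p95(samples_ms):
    """Return the 95th percentile of latency samples, in milliseconds."""
    # quantiles(..., n=100) returns 99 cut points; index 94 is the 95th percentile.
    return quantiles(samples_ms, n=100)[94]


def within_budget(samples_ms, budget_ms=LATENCY_BUDGET_MS):
    """Report whether the p95 latency stays at or below the budget."""
    return p95(samples_ms) <= budget_ms
```

Running a check like this against samples from both the data center and a cloud test environment would show whether code written around tight response times still sees the latency it expects.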

How did the hard deadline for exiting the data center shape your approach to the migration project?

Having a firm deadline—July 31, 2025, when the data center contract ended with no renewal option—was both a motivator and a challenge. It forced us to prioritize ruthlessly and set internal milestones well ahead of that date to account for inevitable delays. We also made a conscious choice to keep those internal deadlines private, avoiding unnecessary stress on the team and community. Past experiences taught us that publicizing every milestone can amplify minor setbacks into major headaches, so we focused on flexibility while maintaining urgency internally.

What valuable lessons from the Stack Overflow for Teams migration informed your strategy for the public site, particularly around technology choices?

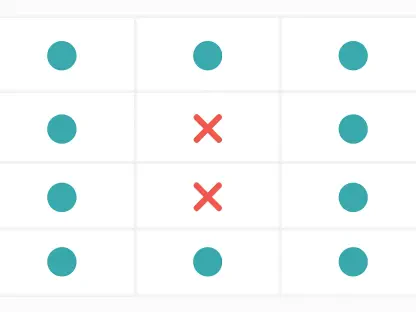

The Teams migration gave us critical insights. Initially, we used virtual machines running Windows, but we quickly saw their limitations: the VMs were hard to treat as immutable and rolling deployments were clunky, which forced us to pivot. Moving to Kubernetes was a game-changer; it offered better scalability and consistency for a high-traffic platform like the public site. We also learned to avoid a “big bang” approach after it caused chaos with Teams. Instead, for the public site, we adopted smaller, iterative steps, migrating services incrementally to learn and adjust along the way.
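
The contrast between a “big bang” cutover and incremental steps can be sketched in a few lines. This is an illustration only, not the team's actual process: `migrate_incrementally` and the `migrate` and `healthy` callbacks are hypothetical stand-ins for whatever deployment and monitoring tooling is really in use.

```python
from typing import Callable, Iterable, List


def migrate_incrementally(
    services: Iterable[str],
    migrate: Callable[[str], None],
    healthy: Callable[[str], bool],
) -> List[str]:
    """Migrate services one at a time and stop at the first unhealthy one.

    Returns the names of the services that were migrated and passed
    their health check.
    """
    migrated: List[str] = []
    for name in services:
        migrate(name)  # hypothetical callback: move one service to the cloud
        if not healthy(name):  # hypothetical callback: post-migration check
            break  # stop here so the problem is isolated to one service
        migrated.append(name)
    return migrated
```

Because each step is checked before the next begins, a failure affects a single service rather than the whole platform, which is the learning loop described above.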

Why was it so important to assign a dedicated full-time team to the public site migration from the very beginning?

With the Teams migration, we initially had engineers splitting their focus, working on it alongside other responsibilities, and progress crawled. It wasn’t until we dedicated full-time staff that we saw real movement. For the public site, we knew from day one that this was a massive undertaking requiring undivided attention. A focused team drastically improved efficiency, decision-making, and accountability—there was no room for half-measures with a project of this scale and a looming deadline.

How do you see the future of cloud migrations evolving for large-scale platforms over the next few years?

I believe cloud migrations will become even more streamlined as tools and practices mature, especially with the rise of hybrid and multi-cloud strategies. We’ll see greater adoption of Kubernetes and containerization as standard practices, making portability across providers easier. Automation will play a bigger role, reducing human error in complex migrations. Additionally, I expect a stronger focus on security and compliance from the outset, as data privacy regulations tighten. For large platforms, the challenge will be balancing innovation with stability—ensuring migrations don’t disrupt users while leveraging cloud-native features to stay competitive.