The rise of generative AI has fundamentally disrupted the long-standing “handshake” of the open web, where creators traded content for human traffic and attribution. As large language models develop an insatiable demand for high-quality training data, the traditional binary choice of either blocking or allowing bots has become a losing proposition for digital platforms. To navigate this shift, experts are looking toward “pay-per-crawl,” a programmatic monetization layer that transforms the HTTP 402 “Payment Required” status code from a historical curiosity into a functional economic tool. This approach allows organizations to move beyond reactive blocklists and toward a “yes, if” framework that captures the commercial value of machine-to-machine interactions.

The following discussion explores how this model integrates into modern web infrastructure, the technical challenges of managing sophisticated AI crawlers, and the potential for usage-based payments to redefine the relationship between content owners and AI developers.

Modern AI crawlers use headless browsers to mimic human behavior and consume ad impressions. How does this shift the burden onto site reliability teams, and what specific technical hurdles make traditional blocklists ineffective for protecting high-value data at scale?

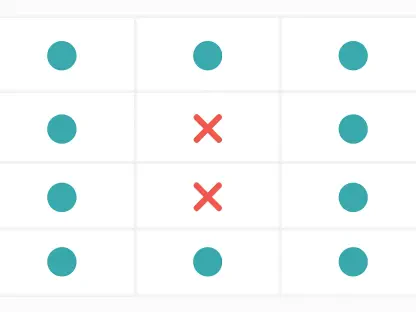

The technical burden has shifted from simple traffic filtering to a high-stakes game of “whack-a-mole” that drains engineering resources. Modern bots have evolved far beyond basic scripts; by using headless browsers, they can execute JavaScript and mimic human navigation patterns with enough precision to fool standard verification systems. This creates a double hit for site reliability teams, because these bots aren’t just scraping data: they are also consuming ad impressions that advertisers believe are being served to humans. When you consider that some platforms hold more than 15 years of high-value archival data, maintaining a manual blocklist becomes unwieldy and ultimately a losing battle. These AI agents are sophisticated enough that as soon as one IP address or fingerprint is blocked, the operators adapt their tactics, forcing teams to choose between over-blocking legitimate users and allowing commercial entities to extract value for free.

The HTTP 402 status code creates a “yes, if” framework for programmatic content access. How does this protocol facilitate machine-to-machine transactions, and what are the primary steps for integrating this into a web application firewall without disrupting legitimate human traffic?

The HTTP 402 code acts as a specialized signal that tells a bot the content is available, but only upon fulfillment of a real-time payment or identity requirement. Unlike a 403 Forbidden error, which is a hard “no,” the 402 response opens a programmatic negotiation in which the machine can resolve the access requirement without human intervention. To integrate this into a Web Application Firewall (WAF) without affecting humans, you must first use robust bot management infrastructure to categorize incoming traffic. We use bot scoring and pre-populated lists of known crawlers to apply the 402 rule specifically to automated agents while allowing human browsers to pass through unimpeded. By wrapping these rules into the WAF UI and using dashboards to monitor the results, an organization can effectively gate its intellectual property while keeping the front door wide open for its community.
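The categorization step described above can be sketched in a few lines of Python. This is only an illustration of the “yes, if” decision, not any vendor's actual rule engine: the score scale (low means automated), the threshold, the crawler names, and the `X-Crawl-Price-USD` header are all hypothetical choices made for the example.

```python
from dataclasses import dataclass, field

# Hypothetical values, chosen only for illustration.
BOT_SCORE_THRESHOLD = 30                      # scores below this count as automated
KNOWN_AI_CRAWLERS = {"ExampleTrainingBot", "SampleAICrawler"}
PRICE_PER_REQUEST_USD = "0.01"


@dataclass
class Request:
    user_agent: str
    bot_score: int                 # assumed scale: low = likely bot, high = likely human
    has_valid_payment: bool = False


@dataclass
class Response:
    status: int
    headers: dict = field(default_factory=dict)


def decide(request: Request) -> Response:
    """Return 200 for humans and paying bots, 402 for unpaid automated traffic."""
    is_known_crawler = any(name in request.user_agent for name in KNOWN_AI_CRAWLERS)
    is_automated = is_known_crawler or request.bot_score < BOT_SCORE_THRESHOLD

    if not is_automated:
        return Response(200)       # human browsers pass through unimpeded
    if request.has_valid_payment:
        return Response(200)       # the "if" in "yes, if" has been satisfied
    # Not a hard "no": tell the bot what it would cost to proceed.
    return Response(402, {"X-Crawl-Price-USD": PRICE_PER_REQUEST_USD})


print(decide(Request("Mozilla/5.0", bot_score=95)).status)
print(decide(Request("ExampleTrainingBot/1.0", bot_score=95)).status)
```

The key design point is that the 402 branch carries a price rather than a refusal, so a crawler can retry with payment instead of giving up or evading the block.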

Pay-per-crawl operates differently than traditional paywalls or fixed API subscriptions. In what ways does this granular, usage-based approach help surface potential long-term licensing partners, and how does it specifically capture revenue from high-volume training traffic that otherwise circumvents formal contracts?

Traditional licensing deals often involve massive friction, including lengthy procurement cycles and human-negotiated contracts that many smaller AI developers or experimental projects aren’t ready for. Pay-per-crawl lowers the barrier to entry by allowing crawlers to pay only for the specific data they consume in the moment, which serves as a “pull mechanism” to identify who is actually interested in your data. When a bot encounters a 402 requirement, it forces the operator to acknowledge the commercial value of the content, often leading to a phone call about a more comprehensive licensing deal. This model is particularly effective at capturing revenue from high-volume training traffic because it monetizes the activity at the source. Even operators who aren’t ready to sign a formal contract still pay their fair share for the server load and data they use, in an AI economy with a projected $4.4 trillion annual impact. It transforms what was once purely an infrastructure cost into a transactional revenue stream.

Implementing a monetization layer often requires significant engineering. How can organizations utilize existing bot management infrastructure and dashboarding to categorize crawlers, and what specific metrics should they monitor to ensure their intellectual property policies align with their actual server load costs?

By using established platforms like Cloudflare, the engineering lift is significantly reduced because the heavy lifting of bot identification is already handled at massive scale. Organizations can use existing UIs to wrap 402 response rules around specific categories of bots, distinguishing, for instance, between helpful search engines and aggressive AI training crawlers. Key metrics to monitor include the ratio of 402 challenges issued to those successfully completed, as well as the volume of requests coming from each AI agent. By analyzing these dashboards, teams can adjust their charge rates to ensure the revenue generated at least offsets the server load costs. We have seen that simply implementing the 402 signal can even reduce uncontrolled scraping, as some bots stop sending traffic once they realize the content is no longer a “free lunch,” thereby improving overall site health.
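As a rough illustration of those metrics, the sketch below computes the challenge completion ratio, the per-agent request volume, and a break-even price per paid request. The log format, agent names, and cost figure are made up for the example; a real deployment would read them from whatever the bot management dashboard exports.

```python
from collections import Counter


def completion_ratio(challenges_issued: int, challenges_paid: int) -> float:
    """Share of 402 challenges that ended in a successful payment."""
    if challenges_issued == 0:
        return 0.0
    return challenges_paid / challenges_issued


def requests_by_agent(log: list[dict]) -> Counter:
    """Count requests per crawler so heavy consumers stand out."""
    return Counter(entry["agent"] for entry in log)


def break_even_price(server_cost_usd: float, paid_requests: int) -> float:
    """Minimum charge per paid request that offsets the given server cost."""
    if paid_requests <= 0:
        raise ValueError("need at least one paid request to compute a price")
    return server_cost_usd / paid_requests


# Hypothetical sample data.
sample_log = [
    {"agent": "ExampleTrainingBot", "status": 402},
    {"agent": "ExampleTrainingBot", "status": 200},
    {"agent": "SampleAICrawler", "status": 402},
]

print(completion_ratio(challenges_issued=200, challenges_paid=50))
print(requests_by_agent(sample_log).most_common(1))
print(break_even_price(server_cost_usd=100.0, paid_requests=20_000))
```

If the current charge rate sits below the break-even figure, crawler traffic is still a net infrastructure cost, which is the signal to revisit pricing.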

Emerging payment protocols aim to support transactions from anonymous bot traffic without requiring prior registration. What infrastructure is needed to support these standards, and how does removing the registration barrier change the economic relationship between independent content creators and AI developers?

To support anonymous transactions, we are looking toward protocols like x402, which would allow payments to flow seamlessly without the bot needing a pre-existing account or registration with the site owner. This requires a sophisticated intermediary layer that can verify payments in real time and communicate that success back to the content platform’s infrastructure. By removing the registration barrier, the economic relationship shifts from a closed, “invitation-only” model to a truly open, market-driven ecosystem. This is a game-changer for independent creators who may not have the legal teams to strike massive licensing deals; it allows them to receive micropayments automatically from any AI developer in the world. It effectively restores the “implicit deal” of the internet, that content has value, but adapts it for a world where the primary consumers of data are now machines rather than people.
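To make the role of that intermediary concrete, here is a toy sketch of registration-free verification. It is not the x402 protocol or any real payment API: the intermediary is simulated with a shared-secret HMAC signature, and every name in it (`issue_receipt`, `verify_receipt`, `handle_request`, the secret) is hypothetical.

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical secret shared by the content platform and the payment intermediary.
INTERMEDIARY_SECRET = b"demo-secret-not-for-production"


def issue_receipt(url: str, amount_usd: str) -> str:
    """Stand-in for the intermediary: sign a receipt once a payment has settled."""
    message = f"{url}|{amount_usd}".encode()
    return hmac.new(INTERMEDIARY_SECRET, message, hashlib.sha256).hexdigest()


def verify_receipt(url: str, amount_usd: str, receipt: str) -> bool:
    """Platform side: check the receipt covers this URL at this price."""
    expected = issue_receipt(url, amount_usd)
    return hmac.compare_digest(expected, receipt)


def handle_request(url: str, price_usd: str, receipt: Optional[str] = None) -> int:
    """Return 200 if a valid receipt is attached, otherwise 402."""
    if receipt is not None and verify_receipt(url, price_usd, receipt):
        return 200
    return 402


receipt = issue_receipt("/archive/post-1", "0.01")
print(handle_request("/archive/post-1", "0.01"))            # no receipt attached
print(handle_request("/archive/post-1", "0.01", receipt))   # receipt attached
```

Nothing in the check refers to an account or a crawler identity: the platform only needs proof that this URL was paid for at this price, which is what lets an unregistered bot transact.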

What is your forecast for pay-per-crawl?

I believe pay-per-crawl will become the standard “middleware” layer for the high-value web within the next three to five years. As the AI industry continues to grow toward that projected $4.4 trillion annual impact, the demand for structured, authoritative datasets will outpace the ability of humans to negotiate individual contracts. We will see a shift in which the robots.txt file, currently a voluntary “handshake” often ignored by commercial crawlers, is replaced by these programmatic “yes, if” gateways. This won’t just be for giants like Stack Overflow; even mid-sized niche publishers will use this infrastructure to protect their archives. Eventually, the friction of machine-to-machine commerce will vanish, creating a continuous, real-time revenue loop that finally compensates creators for the training data that makes modern AI possible.