The rapid integration of sophisticated biometric scanning into urban law enforcement has sparked a profound debate over the balance between collective security and individual privacy. In Milwaukee, Wisconsin, this tension reached a breaking point when the local police department began using facial recognition software before any formal usage policy had been established or presented for public review. This lack of transparency created an immediate atmosphere of suspicion and suggested that the drive for technological advancement had outpaced the commitment to democratic oversight. When the Fire and Police Commission finally convened to address the matter, it was met with a wall of community resistance that extended far beyond simple policy disagreements. Residents and activists argued that deploying such invasive tools without prior consent breached the social contract and undermined the very trust necessary for effective policing. The episode illustrates that when institutions prioritize high-tech efficiency over open communication, they risk alienating the public and losing the cooperation required to maintain actual safety.

The Social Cost of Institutional Deception

The unauthorized implementation of surveillance tools by the Milwaukee Police Department served as a catalyst for a broader national conversation about accountability in the digital age. By the time the public became aware of the department’s activities, the software had already been integrated into various investigative workflows, creating a “fait accompli” that many residents found unacceptable. This approach ignored the historical context of surveillance in marginalized communities, where advanced monitoring is often viewed as a tool for systemic harassment rather than a genuine safety measure. The resulting outcry was not just a reaction to the technology itself, but a response to the deceptive manner in which it was introduced. Experts in civil liberties emphasized that transparency is not a secondary requirement but a foundational element of legitimate governance. When a law enforcement agency operates in secret, it sends a message that it does not answer to the citizenry, which can lead to a total breakdown in community relations that takes years or even decades to repair.

Against this backdrop of distrust, the subsequent public hearings revealed a community that was well informed about the limitations and dangers of biometric tools. Activists presented evidence suggesting that reliance on automated systems often replaces traditional, community-oriented policing with a detached, data-driven approach that ignores human nuance. The feedback provided during these sessions was overwhelmingly negative, with many speakers highlighting the potential for these systems to be used for political suppression or for monitoring lawful protests. This collective pushback ultimately forced a dramatic reversal in policy, leading to an immediate ban on the use of facial recognition technology within the city. The outcome showed that even advanced technological solutions struggle to survive a sustained lack of public legitimacy. The Milwaukee case demonstrates that the “efficiency” gained through high-tech surveillance is often an illusion if it comes at the expense of the democratic processes that ensure law enforcement remains a servant of the people.

Technical Vulnerabilities and the Bias in Biometrics

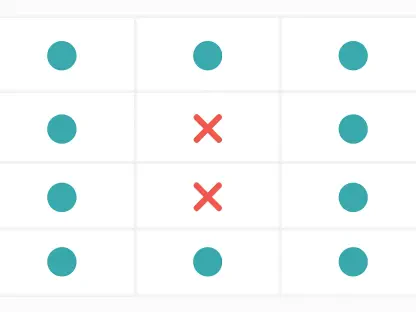

A significant portion of the opposition to facial recognition technology stems from its documented technical failures, particularly demographic disparities in accuracy. Independent testing, including a 2019 evaluation by the National Institute of Standards and Technology, has shown that many of these algorithms misidentify people of color at significantly higher rates than white subjects, a pattern frequently attributed to training datasets that lack diversity. Depending on the algorithm, the probability of a false positive for Black or Asian individuals can be up to one hundred times higher than for their white counterparts. This bias transforms a supposedly objective investigative tool into a source of systemic injustice, as misidentifications can lead to wrongful arrests, traumatic police encounters, and a lasting “presumption of guilt” for innocent citizens. Error rates are also notably higher for women and children, further undermining the claim that these systems provide a reliable method for identifying violent criminals. These flaws are not merely technical glitches; they are rooted in the data on which the software itself is built.
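To make the hundredfold gap described above concrete, the following is a minimal sketch of how an auditor might compute a false positive (false match) rate for each demographic group and compare it against a reference group. Everything in it is an assumption made for illustration: the function names, the group labels, and the trial counts are invented, and none of the figures come from Milwaukee, from any vendor, or from any published evaluation.

```python
from collections import defaultdict


def false_match_rates(trials):
    """Return the false match rate per group.

    Each trial is a tuple (group, same_person, system_said_match).
    Only trials where the two faces belong to different people can
    produce a false match, so genuine pairs are skipped here.
    """
    impostor_trials = defaultdict(int)
    false_matches = defaultdict(int)
    for group, same_person, system_said_match in trials:
        if same_person:
            continue
        impostor_trials[group] += 1
        if system_said_match:
            false_matches[group] += 1
    return {g: false_matches[g] / impostor_trials[g] for g in impostor_trials}


def disparity_ratios(rates, reference_group):
    """Express each group's rate as a multiple of the reference group's rate."""
    reference_rate = rates[reference_group]
    if reference_rate == 0:
        raise ValueError("reference group has no false matches; ratio undefined")
    return {g: rate / reference_rate for g, rate in rates.items()}


def synthetic_trials():
    """Build invented data: 10,000 different-person comparisons per group."""
    trials = []
    # Hypothetical group A: 1 false match in 10,000 comparisons.
    trials += [("group_a", False, True)] * 1
    trials += [("group_a", False, False)] * 9_999
    # Hypothetical group B: 100 false matches in 10,000 comparisons.
    trials += [("group_b", False, True)] * 100
    trials += [("group_b", False, False)] * 9_900
    return trials


rates = false_match_rates(synthetic_trials())
ratios = disparity_ratios(rates, reference_group="group_a")
for group in sorted(rates):
    print(f"{group}: rate={rates[group]:.4%}, ratio={ratios[group]:.1f}x")
```

In this invented dataset, both groups face the same number of comparisons, yet members of the second group are flagged one hundred times as often. A real audit would need far larger samples, a representative set of images, and the same match threshold the deploying agency actually uses.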

Beyond the problems of bias, the large-scale collection of biometric data introduces serious security risks for everyone whose information is captured. Recent security failures at vendors such as Persona and Flock have exposed how vulnerable this sensitive information is, with the affected material ranging from live video feeds to license plate databases. When these systems are breached, the resulting exposure of personal identities and movement patterns provides a goldmine for cybercriminals and other malicious actors. Centralizing such intimate data creates a single point of failure that can compromise the privacy of millions of people simultaneously. Furthermore, these vast databases leave a permanent digital trail that can be exploited long after a specific investigation has concluded. The risk of data leaks, combined with the lack of robust federal regulations on data retention and sharing, suggests that the infrastructure of mass surveillance may pose a greater threat to public stability than the crimes it is intended to prevent or solve.

The Future of Community-Based Safety Models

The resolution of the conflict in Milwaukee provided a clear blueprint for how cities might navigate the complex intersection of technology and civil rights. The decision to impose a total ban on facial recognition software signaled that community trust was viewed as a more valuable asset to the police force than the expediency of an unproven and biased tool. The proposed path forward was for law enforcement agencies to establish clear, enforceable frameworks that include mandatory public consultation periods before any new surveillance technology is even considered for purchase. These frameworks should ideally include independent audits by third-party experts to verify the accuracy and fairness of algorithms in real-world urban environments. By moving away from a model of secrecy and toward one of radical transparency, institutions might begin to rebuild the bridges damaged by previous unauthorized actions. The focus shifted from the mere collection of data to meaningful engagement with the people the data represents.

In the aftermath of this administrative struggle, attention turned toward developing alternative safety strategies that do not rely on invasive monitoring. This involved investing in social programs, mental health resources, and localized community oversight boards that offer a more holistic approach to urban security. The actionable takeaway from this period was that technology should serve as a secondary support system rather than the primary driver of public policy. Future efforts should include the creation of “privacy by design” standards, under which data protection is an integral part of any technical implementation from the start. Lawmakers and municipal leaders were encouraged to view public consent not as an obstacle to be bypassed, but as a necessary validation of the state’s authority. Ultimately, the lessons of the Milwaukee ban underscored a vital principle: the long-term health of a society depends more on the strength of its internal trust than on the sophistication of the cameras watching its streets. Any future deployment will be expected to meet a higher standard of ethical rigor.