The transition from static large language models to autonomous agents that can navigate unpredictable environments is arguably the most significant architectural shift in artificial intelligence since the transformer debuted. Traditional models provide the cognitive foundation, but deploying agents has long been held back by a manual tuning bottleneck: engineers spend countless hours refining system prompts and hard-coding logic through trial and error. To address this friction, researchers associated with Amazon have introduced A-Evolve, a universal infrastructure designed to automate the creation, refinement, and optimization of agentic systems. By treating an agent not as a static script but as a dynamic entity capable of self-correction, A-Evolve moves the industry toward a systematic process of automated state mutation. Proponents describe this shift as a pivotal moment for the field, one that replaces tuning by human intuition with empirical improvement and lets agents adapt their own operational strategies through iterative feedback from their environment.

The Foundations of Automated Refinement

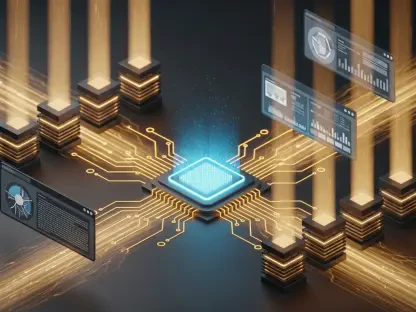

Architectural Components: The Agent Workspace

A-Evolve operates on a standardized directory structure known as the Agent Workspace, which functions as the genetic blueprint for an autonomous system. This organization ensures that every facet of an agent’s reasoning, from its high-level objectives to its low-level execution scripts, remains accessible for automated modification by the underlying engine. Within this workspace, the framework establishes five components that define the agent’s capabilities and constraints: a manifest, prompts, skills, tools, and memory. The manifest file is the central index, containing the metadata and entry points required for execution. Alongside it, a dedicated prompts directory houses the instructional logic that guides the language model’s reasoning process. Because these components are isolated in a structured format, a mutation engine can target specific files for refinement. This separation also lets developers maintain oversight while the system independently identifies which logical structures need adjustment to meet complex task requirements.

The sophistication of the Agent Workspace extends into the skills and tools directories, which provide the agent with the functional capacity to interact with external software environments. Unlike traditional setups where skills are fixed at the time of deployment, A-Evolve allows the agent to author and store new code snippets as it encounters novel challenges. This means the workspace is a living repository that expands in utility as the agent gains experience. Furthermore, a dedicated memory component stores historical context and episodic data, which the mutation engine uses to refine how the agent perceives past successes or failures. Because the engine operates directly on these persistent files rather than temporary memory buffers, every optimization becomes a permanent part of the agent’s codebase. This approach ensures that the resulting improvements are both inspectable by human developers and durable across multiple execution cycles, effectively creating a self-improving software entity that evolves without manual intervention.
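As a rough illustration of the layout described above, the following sketch scaffolds a workspace with the five named components. The file names (`manifest.json`, `system.md`), the manifest fields, and both helper functions are assumptions made for this example; the actual A-Evolve layout may differ.

```python
import json
import tempfile
from pathlib import Path

# Directory names are assumptions based on the five components named in the
# text; the real A-Evolve layout and file names may differ.
WORKSPACE_DIRS = ("prompts", "skills", "tools", "memory")


def scaffold_workspace(root: Path, name: str, entry_point: str) -> Path:
    """Create a hypothetical agent workspace under `root` and return its path."""
    root.mkdir(parents=True, exist_ok=True)
    for sub in WORKSPACE_DIRS:
        (root / sub).mkdir(exist_ok=True)

    # The manifest holds metadata and the entry point (field names are illustrative).
    manifest = {"name": name, "entry_point": entry_point, "version": 0}
    (root / "manifest.json").write_text(json.dumps(manifest, indent=2))

    # A seed prompt that a mutation engine could later rewrite in place.
    (root / "prompts" / "system.md").write_text("You are a careful coding agent.\n")
    return root


def list_mutable_files(root: Path) -> list[str]:
    """Return every file in the workspace, i.e. everything an engine could target."""
    return sorted(p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file())


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        ws = scaffold_workspace(Path(tmp) / "agent", "demo-agent", "agent.py")
        print(list_mutable_files(ws))
```

Because everything lives in ordinary files, each change an engine makes is visible to standard tools such as diffs and code review, which is the inspectability property the text emphasizes.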

The Iterative Cycle: The Five-Stage Evolution Loop

The operational core of this framework is a rigorous five-stage evolution loop that transforms raw performance data into actionable software improvements. The cycle begins with the Solve stage, where the agent is deployed into a target environment, such as a specialized coding sandbox or a cloud-based command-line interface, to attempt a series of benchmarked tasks. As the agent interacts with its environment, the system transitions into the Observe stage, where it captures highly structured logs and granular performance feedback. This stage is critical because it identifies not just whether an agent failed, but exactly where the logic deviated from the desired outcome. By collecting these detailed execution traces, the framework builds a comprehensive map of the agent’s current limitations. This data-driven observation removes the guesswork that usually plagues manual debugging, providing a clear evidentiary trail for the next phase of the optimization process.
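To make the idea of locating "exactly where the logic deviated" concrete, here is a minimal sketch of a structured execution trace. The `Step` and `Trace` types and the `failure_report` helper are hypothetical names invented for this example, not part of any published A-Evolve interface.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Step:
    action: str       # what the agent tried, e.g. a shell command
    ok: bool          # whether the environment accepted the step
    detail: str = ""  # error text or other feedback from the environment


@dataclass
class Trace:
    task_id: str
    steps: list[Step] = field(default_factory=list)

    def first_failure(self) -> Optional[tuple[int, Step]]:
        """Return (index, step) for the first failing step, or None if none failed."""
        for index, step in enumerate(self.steps):
            if not step.ok:
                return index, step
        return None


def failure_report(traces: list[Trace]) -> dict[str, str]:
    """Map each failed task to the step where it first went wrong."""
    report = {}
    for trace in traces:
        failure = trace.first_failure()
        if failure is not None:
            index, step = failure
            report[trace.task_id] = f"step {index}: {step.action!r} -> {step.detail}"
    return report
```

A report of this shape gives the next stage something more specific to act on than a bare pass or fail flag.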

Once the performance data is gathered, the framework enters the Evolve and Gate stages to implement and verify potential solutions. During the Evolve stage, the mutation engine analyzes the failure points and generates specific code or prompt modifications designed to rectify the observed issues. This is not a random process; it is a targeted engineering effort in which the system proposes a new “mutation” of the agent’s DNA. To ensure stability, the Gate stage subjects these changes to strict fitness functions that validate the new logic against pre-defined safety and quality standards. If the mutation improves performance without causing regressions, the system moves to the Reload stage, re-initializing the agent with its updated workspace. Every successful iteration is automatically tagged within a version control system, so a later mutation that proves detrimental can be rolled back to a known-good state. This loop is designed to keep the agent’s growth both rapid and stable.
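The five stages can be sketched as a toy loop. This is an illustrative model under heavy simplifying assumptions: the "environment" is a fixed dictionary of tasks, a mutation just adds one missing skill, and version tags are kept in a list rather than a real version control system. None of the names below come from A-Evolve itself.

```python
from dataclasses import dataclass, field

# Toy benchmark: each task passes only if the agent has the required skill.
TASKS = {"t1": "grep", "t2": "git", "t3": "docker"}


@dataclass
class Workspace:
    skills: frozenset[str]
    tags: list[str] = field(default_factory=list)  # stand-in for VCS tags


def solve(ws: Workspace) -> dict[str, bool]:
    """Solve: attempt every task and record pass/fail."""
    return {task: need in ws.skills for task, need in TASKS.items()}


def observe(results: dict[str, bool]) -> list[str]:
    """Observe: reduce raw results to the skills whose absence caused failures."""
    return [TASKS[task] for task, ok in results.items() if not ok]


def evolve(ws: Workspace, missing: list[str]) -> Workspace:
    """Evolve: propose one targeted mutation (add the first missing skill)."""
    return Workspace(ws.skills | {missing[0]}, list(ws.tags))


def gate(old: dict[str, bool], new: dict[str, bool]) -> bool:
    """Gate: accept only a strict improvement in which no passing task regresses."""
    no_regression = all(new[task] for task, ok in old.items() if ok)
    return no_regression and sum(new.values()) > sum(old.values())


def run(ws: Workspace, max_cycles: int = 5) -> Workspace:
    for cycle in range(1, max_cycles + 1):
        results = solve(ws)
        missing = observe(results)
        if not missing:
            break  # every task passes; nothing left to fix
        candidate = evolve(ws, missing)
        if gate(results, solve(candidate)):
            candidate.tags.append(f"evo-{cycle}")  # tag the accepted iteration
            ws = candidate                         # Reload: continue from the new workspace
    return ws
```

Starting from `Workspace(frozenset({"grep"}))`, the loop accepts two mutations and stops once all three toy tasks pass. A rejected candidate is simply dropped, so the previous workspace stays in effect.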

Scalability, Versatility, and Performance Results

Modularity: The Philosophy of Flexible Integration

A-Evolve distinguishes itself through a modular design philosophy that prioritizes flexibility and cross-domain compatibility over rigid, model-specific constraints. The framework is built on a “Bring Your Own” principle: developers supply their preferred agent architecture, environment, and optimization algorithm. Whether a team is working with a simple reasoning loop or a complex, hierarchical multi-agent system, the infrastructure is meant to accommodate the specific needs of the project. This adaptability is particularly relevant in 2026, as the variety of specialized AI models continues to expand. Because the framework is agnostic to the underlying model, the evolution infrastructure should remain applicable as better base models are released through 2027 and 2028. This design allows organizations to invest in a single optimization pipeline that serves a wide range of internal use cases across different departments and technical stacks.

The versatility of the system is further demonstrated by its ability to function across diverse target environments, from isolated Dockerized containers to live cloud service interfaces. This environmental flexibility allows the framework to be applied to a broad spectrum of challenges, including software engineering, cybersecurity, and data analysis. Developers can also choose from various evolution algorithms, such as large language model-driven mutations or more traditional reinforcement learning techniques, depending on the complexity of the task at hand. By providing this level of customization, the framework moves away from the “one-size-fits-all” approach that has limited the effectiveness of previous automation attempts. This flexibility ensures that the evolution strategy can be precisely tuned to the nuances of the specific problem domain, allowing for more efficient resource allocation and faster convergence on high-performance agentic solutions that meet real-world operational demands.
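One common way to express a "Bring Your Own" design in Python is structural typing: the harness depends only on method signatures, so any object with matching methods can be plugged in. The sketch below assumes three hypothetical interfaces (`Agent`, `Environment`, `Evolver`) and toy implementations; it shows the pattern, not the framework's actual API.

```python
from typing import Protocol


class Agent(Protocol):
    def act(self, observation: str) -> str: ...


class Environment(Protocol):
    def reset(self) -> str: ...
    def step(self, action: str) -> tuple[str, float, bool]: ...


class Evolver(Protocol):
    def propose(self, score: float) -> str: ...


def run_episode(agent: Agent, env: Environment, max_steps: int = 10) -> float:
    """Drive any agent against any environment and return the total reward."""
    observation, total = env.reset(), 0.0
    for _ in range(max_steps):
        observation, reward, done = env.step(agent.act(observation))
        total += reward
        if done:
            break
    return total


# Toy plug-ins: none inherit from the protocols; matching methods are enough.
class EchoAgent:
    def act(self, observation: str) -> str:
        return observation  # repeat whatever the environment asks for


class EchoEnv:
    """Rewards 1.0 each time the agent echoes the prompt; ends after three turns."""

    def __init__(self) -> None:
        self.turn = 0

    def reset(self) -> str:
        self.turn = 0
        return "ping-0"

    def step(self, action: str) -> tuple[str, float, bool]:
        reward = 1.0 if action == f"ping-{self.turn}" else 0.0
        self.turn += 1
        return f"ping-{self.turn}", reward, self.turn >= 3


class ThresholdEvolver:
    def propose(self, score: float) -> str:
        # Illustrative policy: only ask for a mutation when the score falls short.
        return "keep" if score >= 3.0 else "mutate-prompt"
```

Swapping in a containerized environment or a different optimization strategy would then mean writing another class with the same methods, leaving `run_episode` untouched.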

Performance Benchmarks: Quantifying Success

The effectiveness of this evolutionary approach is supported by results on several of the most demanding benchmarks in the current AI landscape. In recent evaluations, a base agent evolved using this framework achieved the top ranking on the MCP-Atlas benchmark, securing a 79.4 percent success rate. This was particularly notable because the system started with a simple twenty-line prompt and independently evolved five complex, targeted skills to solve the tasks. Similar success was observed in software engineering, where the framework reached a 76.8 percent score on the SWE-bench Verified leaderboard. These results place the system among the top five globally for autonomous bug resolution, indicating that automated evolution can match or exceed the performance of manually tuned agents. The ability to resolve real-world software issues at this level suggests that the bottleneck of human-led agent development is beginning to give way to systematic automation.

Beyond general reasoning and software engineering, the framework demonstrated significant improvements in specialized technical areas such as terminal proficiency and skill discovery. On Terminal-Bench 2.0, the evolved agents showed a thirteen-percentage-point increase in command-line accuracy, reaching a 76.5 percent success rate. On SkillsBench, the framework achieved a 34.9 percent success rate, a fifteen-percentage-point gain over baseline models. The absolute score is lower, but this benchmark specifically measures the agent’s ability to discover and master new functions without human guidance. Taken together, these metrics suggest that the evolutionary process is not just making minor adjustments but is expanding the capability set of the agents. On the benchmarks where a baseline is reported, agents evolved through this infrastructure outperform their static counterparts by double-digit margins.
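The reported figures also imply approximate baselines, which helps put the two gains on a comparable footing. The short calculation below assumes the gains are plain percentage-point differences on the same metric and that "thirteen" and "fifteen" may be rounded, so the derived numbers are estimates rather than published results.

```python
def implied_baseline(final_pct: float, gain_pp: float) -> float:
    """Baseline success rate implied by a final score and a percentage-point gain."""
    return round(final_pct - gain_pp, 1)


def relative_gain(final_pct: float, gain_pp: float) -> float:
    """Gain expressed relative to the implied baseline, in percent."""
    return round(100 * gain_pp / (final_pct - gain_pp), 1)


# (final success rate, percentage-point gain) as quoted in the text.
REPORTED = {"Terminal-Bench 2.0": (76.5, 13.0), "SkillsBench": (34.9, 15.0)}

for name, (final, gain) in REPORTED.items():
    print(name, implied_baseline(final, gain), relative_gain(final, gain))
```

Under these assumptions the Terminal-Bench 2.0 baseline would be about 63.5 percent and the SkillsBench baseline about 19.9 percent, so the SkillsBench gain is the larger one in relative terms even though its final score is lower.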

Strategic Implementation: The Future of Agentic Workflows

The introduction of A-Evolve significantly lowered the barrier to entry for developing high-performance autonomous systems during the first half of 2026. By simplifying the integration process, the framework allowed engineers to initialize complex evolution pipelines with minimal code, shifting the focus from manual prompt tweaking to higher-level system design. This transition proved that the heavy lifting of optimization could be delegated to an automated engine, effectively returning state-of-the-art agents that were ready for deployment in production environments. Organizations that adopted this evolutionary approach found that they could scale their agentic fleets without a proportional increase in engineering headcount. The systematic nature of the mutation and gate cycles provided a level of predictability and auditability that was previously unattainable in agent development. This progress confirmed that the era of “hand-crafted” AI agents had transitioned into a new phase defined by industrial-scale, automated refinement.

Moving forward into 2027 and 2028, professionals should prioritize the adoption of evolutionary infrastructures to remain competitive in the rapidly advancing landscape of autonomous software. The most immediate actionable step for development teams is to move away from static prompts and instead focus on defining robust fitness functions that can guide automated mutation engines. By establishing clear success metrics and secure sandbox environments, organizations can allow their agents to evolve safely and continuously in the background. Furthermore, the integration of version-controlled agent workspaces will be essential for maintaining a clear audit trail of how an agent’s logic has changed over time. As the complexity of tasks assigned to AI agents grows, the reliance on human-driven tuning will become a liability. Embracing a framework that treats agent development as a continuous evolutionary process will be the key to unlocking the full potential of autonomous technology across all sectors of the modern digital economy.