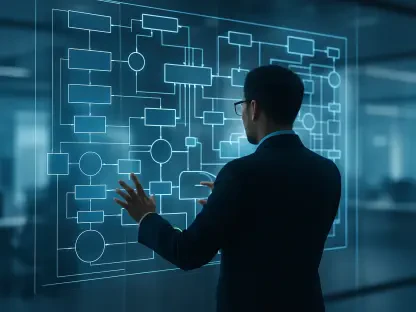

The rapid transition from manual server racking to programmable cloud environments has reached a critical inflection point where human oversight alone can no longer keep pace with the velocity of deployment. While the initial wave of Infrastructure as Code (IaC) revolutionized consistency through tools like Terraform and CloudFormation, it also created a digital “blast radius” where a single misplaced character in a script can inadvertently expose millions of sensitive records to the public internet. Today, a new paradigm is emerging: the integration of Large Language Models (LLMs) directly into the orchestration pipeline, promising to transform static configuration files into intelligent, self-auditing ecosystems.

This evolution is more than an upgrade in tooling; it is a fundamental shift in how engineers interact with cloud resources. By leveraging generative AI, organizations are moving away from the rigid constraints of traditional templates and toward a dynamic model where intent drives execution. This review explores the mechanics of this integration, examining how reasoning models are used to mitigate the historical risks of manual provisioning while adding a layer of proactive security that was previously impractical at scale.

Evolution of Infrastructure as Code and the Rise of AI

Infrastructure management has moved from the era of “snowflake” servers, where every machine was manually configured and unique, to the modern standard of programmatic automation. In this landscape, resources are treated as disposable software components rather than permanent physical assets. This shift allowed for unprecedented speed, but it also stripped away the intuitive safety checks that human operators once provided. As environments grew in complexity, the limitations of static scripts became apparent, often leading to configuration drift, where the actual state of the cloud diverges dangerously from the original code.

The emergence of LLMs in this space addresses the cognitive gap between high-level architectural intent and the granular, often esoteric syntax required by cloud providers. Unlike traditional linters, which check syntax and fixed rule sets, AI-driven controls can reason about the purpose behind a configuration. This transition shifts the emphasis from reactive troubleshooting toward generative synthesis. In this context, AI acts as a bridge, helping to ensure that the programmatic agility gained over the last decade does not come at the expense of structural integrity or operational security.

Core Capabilities of LLM-Integrated IaC

Natural Language Synthesis and Code Generation

The most immediate impact of LLM integration is the democratization of complex cloud orchestration through natural language synthesis. Engineers can now describe a desired state, such as a resilient, multi-region database cluster with specific latency requirements, and the model translates this intent into production-ready CDK or Terraform code. This capability significantly lowers the barrier to entry for complex deployments, allowing teams to focus on architectural design rather than on syntax details such as the indentation of a YAML template.

Beyond simple generation, these models act as sophisticated translators that adhere to organizational best practices by default. When an LLM generates a Lambda function or an S3 bucket, it can automatically inject necessary boilerplate for logging, monitoring, and tagging based on the company’s internal standards. This ensures that even the most junior developers produce code that aligns with the senior-most architectural vision, effectively codifying institutional knowledge into the very process of resource creation.
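The injection step described above can be illustrated with a minimal sketch. It assumes generated resources are represented as plain dictionaries; the `ORG_DEFAULT_TAGS` values and the `apply_org_standards` helper are hypothetical names chosen for illustration, not part of any specific tool.

```python
from copy import deepcopy

# Hypothetical organizational defaults; real values would come from internal standards.
ORG_DEFAULT_TAGS = {"owner": "platform-team", "cost-center": "unassigned"}


def apply_org_standards(resource: dict, environment: str) -> dict:
    """Return a copy of a generated resource with default tags and logging applied."""
    result = deepcopy(resource)
    tags = {**ORG_DEFAULT_TAGS, "environment": environment}
    # Tags already present on the resource take precedence over the defaults.
    tags.update(result.get("tags", {}))
    result["tags"] = tags
    # Enable logging unless the generated resource already made an explicit choice.
    result.setdefault("logging", {"enabled": True})
    return result


bucket = {"type": "s3_bucket", "name": "reports", "tags": {"owner": "data-team"}}
hardened = apply_org_standards(bucket, environment="staging")
```

Running the standards as a deterministic post-processing step, rather than relying on the model to remember them, keeps the output consistent even when the generated code varies between prompts.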

Context-Aware Security and Policy Reasoning

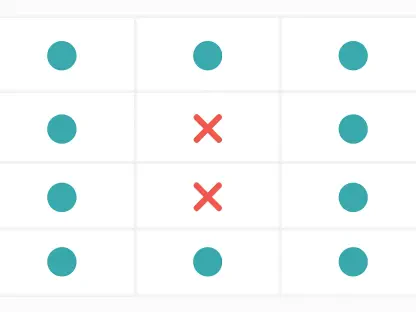

Traditional security tools often operate on a “pass/fail” basis, flagging any open port or unencrypted volume regardless of its purpose. In contrast, LLMs bring policy reasoning to the table, allowing for a nuanced analysis of infrastructure context. For instance, a model can distinguish between a public-facing web server that requires an open port 80 and an internal database that must remain isolated. By analyzing the naming conventions, tags, and surrounding architecture, the AI makes informed decisions about whether a configuration violates the principle of least privilege.
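To make the web server versus database distinction concrete, the following sketch uses a simple tag lookup as a deterministic stand-in for the model's reasoning. The `role` tag convention, the `ALLOWED_PUBLIC_PORTS` table, and the class names are assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class IngressRule:
    port: int
    cidr: str


@dataclass
class Resource:
    name: str
    tags: dict = field(default_factory=dict)
    ingress: list = field(default_factory=list)


PUBLIC_CIDR = "0.0.0.0/0"
# Assumed convention: only resources tagged role=web may expose these ports publicly.
ALLOWED_PUBLIC_PORTS = {"web": {80, 443}}


def find_violations(resource: Resource) -> list:
    """List publicly open ports that the resource's role does not justify."""
    role = resource.tags.get("role", "unknown")
    allowed = ALLOWED_PUBLIC_PORTS.get(role, set())
    return [
        f"{resource.name}: port {rule.port} open to the internet"
        for rule in resource.ingress
        if rule.cidr == PUBLIC_CIDR and rule.port not in allowed
    ]


web = Resource("web-1", {"role": "web"}, [IngressRule(80, PUBLIC_CIDR)])
db = Resource("orders-db", {"role": "database"}, [IngressRule(5432, PUBLIC_CIDR)])
```

Here `find_violations(web)` returns an empty list, while `find_violations(db)` flags port 5432. A pure pass/fail scanner would have flagged both resources.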

This reasoning capability extends to the automated generation of IAM policies, which are notoriously difficult to get right. Instead of granting broad permissions that invite security breaches, an LLM-driven control can analyze the specific actions a service needs to perform and generate a scoped-down policy that limits access to the absolute minimum. This move toward intent-based security reduces the likelihood of “over-permissioning,” which remains one of the primary drivers of cloud-based data breaches today.
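A scoped-down policy of this kind might be assembled as in the sketch below. It assumes the required actions have already been identified (by a model or by log analysis) and are supplied as a mapping from resource ARN to action names; the `build_scoped_policy` helper and the example bucket name are hypothetical.

```python
import json


def build_scoped_policy(required: dict) -> dict:
    """Build an IAM policy allowing only the listed actions on the listed resources.

    `required` maps a resource ARN to the set of actions needed on it.
    """
    statements = []
    for arn in sorted(required):
        actions = sorted(required[arn])
        # Reject wildcards so that broad grants cannot slip in unnoticed.
        if any("*" in action for action in actions):
            raise ValueError(f"wildcard action requested for {arn}")
        statements.append({"Effect": "Allow", "Action": actions, "Resource": arn})
    return {"Version": "2012-10-17", "Statement": statements}


needs = {"arn:aws:s3:::example-reports-bucket/*": {"s3:GetObject", "s3:PutObject"}}
policy = build_scoped_policy(needs)
print(json.dumps(policy, indent=2))
```

The wildcard check is a deliberately strict design choice for the example: any request for something like `s3:*` fails loudly instead of producing an over-permissioned policy.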

Emerging Trends in Intelligent Cloud Orchestration

The industry is currently witnessing a move toward “Intent-Based Networking” and orchestration, where the focus shifts entirely from the “how” to the “what.” Models equipped with massive context windows are now capable of ingesting entire architectural repositories, providing a holistic view of a company’s digital footprint. This allows the AI to identify cross-resource vulnerabilities—such as a specific security group in one VPC that creates a backdoor into a private subnet in another—that would be invisible to tools looking at individual files in isolation.
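One way to picture the cross-resource analysis is as a reachability question over a graph of allowed traffic. The sketch below is a simplified illustration: the topology, the node names, and the `reachable_from` helper are invented for the example, and a real analysis would derive the edges from security groups, route tables, and peering definitions.

```python
from collections import deque


def reachable_from(graph: dict, start: str) -> set:
    """Return every node reachable from `start` by following allowed connections."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(start)
    return seen


# Hypothetical topology: each edge means "traffic is allowed from key to value".
allowed_traffic = {
    "internet": ["vpc-a/public-web"],
    "vpc-a/public-web": ["vpc-a/bastion"],
    "vpc-a/bastion": ["vpc-b/private-db"],  # rule defined in a different file
}
exposed = reachable_from(allowed_traffic, "internet")
```

No single edge looks dangerous on its own, yet `exposed` contains `vpc-b/private-db`. That is the kind of finding that only appears when the whole repository is considered together.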

Moreover, the trend is moving toward the continuous integration of these models within the deployment pipeline. Instead of a one-time check during the initial commit, AI agents are beginning to monitor live environments to detect and remediate drift in real-time. This creates a feedback loop where the infrastructure not only stays compliant but actually learns from operational data to optimize its own configuration, signaling a shift toward more resilient and adaptive cloud ecosystems.
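At its core, drift detection is a comparison between the declared state and the observed state. The following minimal sketch assumes both are available as flat dictionaries of attributes; the `detect_drift` helper and the `ABSENT` marker are illustrative names, and the remediation step is left out.

```python
ABSENT = "<absent>"  # marker for an attribute that exists on only one side


def detect_drift(desired: dict, actual: dict) -> dict:
    """Map each drifted attribute to its desired and actual values."""
    drift = {}
    for key in sorted(desired.keys() | actual.keys()):
        want = desired.get(key, ABSENT)
        have = actual.get(key, ABSENT)
        if want != have:
            drift[key] = {"desired": want, "actual": have}
    return drift


desired = {"instance_type": "t3.small", "encrypted": True}
actual = {"instance_type": "t3.small", "encrypted": False, "public_ip": "203.0.113.10"}
drift = detect_drift(desired, actual)
```

In this example the report covers both a changed attribute (`encrypted`) and one that was added outside the code (`public_ip`), which are the two cases an agent monitoring a live environment would need to reason about.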

Real-World Applications and Sector Deployment

In highly regulated sectors like FinTech and Healthcare, the application of LLM-driven controls has become a prerequisite for maintaining compliance at speed. These industries require rigorous auditing of every infrastructure change to ensure that patient data or financial records remain protected under frameworks like HIPAA or PCI-DSS. LLMs facilitate this by automatically generating compliance documentation and verifying that every new resource meets specific regulatory requirements before it is even provisioned, drastically reducing the time spent on manual audits.

Beyond security, these controls are being utilized for sophisticated cost optimization. By analyzing usage patterns and resource definitions, LLMs can identify over-provisioned assets—such as an oversized EC2 instance in a non-production environment—and suggest more economical alternatives. This proactive approach to “FinOps” ensures that organizations do not overspend on idle capacity, allowing them to redirect those resources toward innovation rather than maintaining inefficient legacy configurations.
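The oversized non-production instance case can be sketched as a simple rule over usage data. The CPU threshold, the `SMALLER_TYPE` mapping, and the `suggest_downsizing` helper are assumptions for illustration; an actual recommendation would also weigh memory, network, and pricing data.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    name: str
    environment: str
    instance_type: str
    avg_cpu_percent: float


# Hypothetical downsizing map; a real one would come from the provider's catalog.
SMALLER_TYPE = {"m5.2xlarge": "m5.xlarge", "m5.xlarge": "m5.large"}


def suggest_downsizing(instances: list, cpu_threshold: float = 10.0) -> dict:
    """Suggest a smaller type for idle instances outside production."""
    suggestions = {}
    for inst in instances:
        is_idle = inst.avg_cpu_percent < cpu_threshold
        has_smaller = inst.instance_type in SMALLER_TYPE
        if inst.environment != "production" and is_idle and has_smaller:
            suggestions[inst.name] = SMALLER_TYPE[inst.instance_type]
    return suggestions


fleet = [
    Instance("ci-runner", "staging", "m5.2xlarge", 4.2),
    Instance("api", "production", "m5.2xlarge", 3.0),
    Instance("batch", "dev", "m5.xlarge", 55.0),
]
suggestions = suggest_downsizing(fleet)
```

Only the idle staging instance is flagged. The production instance is excluded by policy, and the busy development instance is excluded by its usage.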

Technical Hurdles and Implementation Challenges

Despite the clear benefits, the integration of LLMs into critical infrastructure carries inherent risks, most notably the “blast radius” of AI-generated errors. If a model hallucinates a networking configuration or suggests a syntactically correct but logically flawed routing table, the error can be propagated across an entire global infrastructure in seconds. The speed of automation, which is the technology’s greatest strength, can also become its greatest liability if the underlying AI lacks the necessary precision for complex, interconnected systems.

To mitigate these risks, industry leaders are increasingly adopting Human-in-the-Loop (HITL) workflows, where AI-generated templates must be reviewed and approved by a human engineer before execution. Additionally, there is a technical hurdle in ensuring that LLMs stay updated with the rapidly changing APIs of cloud providers like AWS, Azure, and Google Cloud. Continuous fine-tuning and the use of Retrieval-Augmented Generation (RAG) are being explored to ensure that the AI has access to the latest documentation, preventing it from suggesting deprecated or insecure methods of resource management.
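A Human-in-the-Loop gate can be reduced to a small check that runs before any AI-generated change is executed. The sketch below assumes a known set of human reviewers and a record of who has approved; the `GeneratedChange` class, the `can_apply` helper, and all names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class GeneratedChange:
    summary: str
    generator: str  # identifier of the AI agent that produced the template
    approvers: set = field(default_factory=set)


def can_apply(change: GeneratedChange, human_reviewers: set, required_approvals: int = 1) -> bool:
    """Allow execution only after enough known human reviewers have approved."""
    valid = change.approvers & human_reviewers
    return len(valid) >= required_approvals


reviewers = {"alice", "bob"}
change = GeneratedChange("open port 443 on web tier", generator="iac-agent")
blocked = can_apply(change, reviewers)

change.approvers.add("iac-agent")  # self-approval by the agent does not count
still_blocked = can_apply(change, reviewers)

change.approvers.add("alice")
allowed = can_apply(change, reviewers)
```

Counting only approvals from the known reviewer set means the generating agent cannot approve its own output, which keeps the human decision as the final step before execution.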

Future of Autonomous Infrastructure

The horizon of cloud engineering points toward a state of fully autonomous, self-healing infrastructure. In this future, AI models will not just respond to human prompts but will predict potential outages by analyzing telemetry data and automatically reconfiguring the environment to prevent downtime. This predictive maintenance, powered by specialized small language models (SLMs) trained specifically on cloud documentation, will likely reach a level of accuracy that surpasses human capability in managing multi-cloud complexities.

Furthermore, we can expect the rise of specialized AI agents that act as dedicated “site reliability engineers” for specific applications. These agents will possess a deep understanding of the application’s unique requirements, allowing them to scale resources up or down with surgical precision. This will move the industry away from generic, one-size-fits-all infrastructure toward highly customized, liquid environments that morph in real-time to meet the shifting demands of the global digital economy.

Summary and Final Assessment

The integration of LLMs into Infrastructure as Code represents a profound shift from reactive, manual oversight to a proactive, intelligent governance model. By automating the synthesis of complex code and applying context-aware security reasoning, this technology has effectively bridged the gap between rapid innovation and architectural stability. The transition from static rule-checking to dynamic policy enforcement has proven essential for managing the scale and complexity of modern cloud operations, providing a level of protection that manual processes could never achieve.

Organizations should prioritize the implementation of these intelligent controls as a foundational element of their DevOps strategy. While the challenges of model hallucinations and automated error scaling require careful management through human-centric validation, the overall impact on reliability and security is undeniable. Moving forward, the focus shifts toward refining these models and integrating them more deeply into the operational fabric of the enterprise, ensuring that infrastructure remains not just a utility but a strategic asset that evolves alongside the business.