Modern infrastructure management frequently demands a delicate balance between local control and cloud-scale efficiency, leading many technical professionals to seek sandboxed environments that mimic production settings without the massive overhead of specialized hardware. The emergence of LocalBox, developed by the Azure Jumpstart team, provides a streamlined path for engineers to explore Azure Local capabilities using either existing cloud subscriptions or high-performance personal workstations. By virtualizing the complex interplay of software-defined networking and storage, this tool democratizes access to sophisticated hybrid cloud features that were previously restricted to those with physical data center access. Understanding the nuances of this environment is essential for anyone looking to master the deployment of Arc-enabled services, as it offers a risk-free playground for testing automation scripts, security policies, and resource management workflows. This capability is particularly relevant in 2026, as the integration between edge computing and centralized management continues to tighten across various industries worldwide.

Using Azure Bicep and the Azure CLI, users can rapidly provision a functional Azure Local instance that behaves much like a multi-node physical cluster. This infrastructure-as-code approach keeps the deployment repeatable and consistent, reducing the manual errors often associated with complex networking configurations. Whether the goal is to validate a new application architecture or to train a team on Azure Local management in the Azure portal, LocalBox serves as the bridge. The following sections provide a technical walkthrough, from initial environment preparation to the orchestration of virtualized workloads. By focusing on the specific commands and configuration steps required, this guide aims to remove the ambiguity often found in high-level documentation. Each phase builds on the last, so that the resulting infrastructure is ready for further cloud-native experimentation.

1. Establishing the Foundation: Key Technical Components

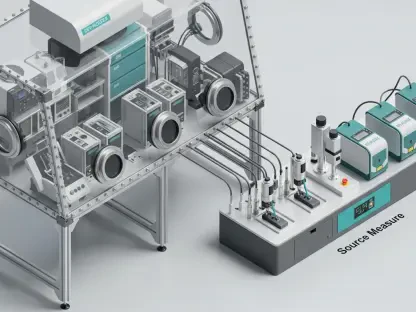

Before initiating any deployment, one must grasp the core technologies that allow LocalBox to stand in for physical Azure Local hardware. LocalBox is a specialized lab environment that removes the need for expensive vendor nodes from providers like Dell or HPE, making it well suited to development and testing. It operates in two primary modes: as a large virtual machine with nested virtualization inside an Azure subscription, typically using 16 or 32 vCPUs, or as a local deployment on a workstation running Windows 11. For a local setup, the hardware requirements are specific: 32 GB to 64 GB of RAM and a high-speed SSD to handle the intensive I/O of a virtualized cluster. This flexibility lets developers choose the most cost-effective hosting method for their project and available resources, so the lab remains accessible regardless of physical location or budget.

The orchestration of this environment relies heavily on Azure Bicep, a domain-specific language that simplifies the authoring of Azure Resource Manager templates. By using Bicep, the deployment process becomes declarative, allowing the system to handle the complex dependencies between virtual networks, storage accounts, and compute resources automatically. This approach is superior to manual portal configurations because it provides a version-controlled history of the infrastructure, making it easier to troubleshoot or replicate successful builds. In the context of Azure Local, Bicep handles the intricate tasks of registering resource providers and setting up the necessary service principal permissions. Consequently, the user spends less time navigating through disparate menus and more time interacting with the actual cluster features. This foundational understanding sets the stage for the practical steps involved in provisioning the environment through the Azure CLI and the cloud-native interface.
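The idea of a declarative deployment resolving its own dependencies can be illustrated with a small sketch. The resource names below are hypothetical and are not taken from the LocalBox templates, and the code is only a simplified model of dependency ordering, not a description of how Azure Resource Manager works internally.

```python
from graphlib import TopologicalSorter

# Hypothetical resources, each mapped to the set of resources it depends on.
# These names are illustrative only; they do not come from the LocalBox Bicep files.
DEPENDENCIES = {
    "virtual_network": set(),
    "storage_account": set(),
    "network_interface": {"virtual_network"},
    "virtual_machine": {"network_interface", "storage_account"},
}


def deployment_order(dependencies):
    """Return one valid creation order in which each resource follows its dependencies."""
    return list(TopologicalSorter(dependencies).static_order())


if __name__ == "__main__":
    for step, resource in enumerate(deployment_order(DEPENDENCIES), start=1):
        print(step, resource)
```

The author of the template only states what depends on what; the ordering falls out of those declarations, which is the property that makes a declarative template easier to maintain than a hand-ordered script.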

2. Infrastructure Deployment: Provisioning With Azure Bicep

The journey toward a functional LocalBox environment begins with the systematic registration of resource providers and the configuration of the Azure CLI environment. After logging into the Azure portal, the initial task involves opening the Cloud Shell to register critical providers such as Microsoft.AzureStackHCI, Microsoft.HybridCompute, and Microsoft.GuestConfiguration. It is vital to confirm that the account being used holds either Owner or Contributor permissions, as these roles are necessary for making structural changes to the subscription. Once the providers are active, the Arc Jumpstart GitHub repository must be cloned to gain access to the required Bicep templates and parameter files. Ensuring the Azure CLI is updated to version 2.65.0 or later is a prerequisite for compatibility with the latest resource types. This preparation phase is crucial because any missing provider or outdated tool can lead to deployment failures that are often difficult to diagnose once the provisioning process has been initiated.
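The preparation steps above can be sketched as a small dry-run helper. It only builds the Azure CLI command lines and checks a version string that the reader supplies (for example, copied from the output of `az version`); it does not call Azure. The provider list contains only the namespaces named in the text, so a real deployment may need more.

```python
# Provider namespaces named in the text; the full list for a real deployment may be longer.
REQUIRED_PROVIDERS = [
    "Microsoft.AzureStackHCI",
    "Microsoft.HybridCompute",
    "Microsoft.GuestConfiguration",
]

# Minimum Azure CLI version stated in the text.
MINIMUM_CLI_VERSION = (2, 65, 0)


def parse_version(text):
    """Turn a dotted version string such as '2.65.0' into a tuple of integers."""
    return tuple(int(part) for part in text.strip().split("."))


def cli_is_recent_enough(installed, minimum=MINIMUM_CLI_VERSION):
    """Compare versions numerically, so that 2.100.0 counts as newer than 2.65.0."""
    return parse_version(installed) >= minimum


def register_commands(providers=REQUIRED_PROVIDERS):
    """Build one `az provider register` command per namespace."""
    return [["az", "provider", "register", "--namespace", ns] for ns in providers]


if __name__ == "__main__":
    print("2.64.0 acceptable:", cli_is_recent_enough("2.64.0"))
    print("2.65.0 acceptable:", cli_is_recent_enough("2.65.0"))
    for command in register_commands():
        print(" ".join(command))
```

Printing the commands first makes it easy to review them in Cloud Shell before running anything; they could equally be passed to `subprocess.run` once reviewed.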

After the environment is prepared, the focus shifts to editing the main.bicepparam file, which acts as the configuration blueprint for the entire LocalBox instance. Users must retrieve the object ID of the Azure Local resource provider and the Service Principal Name (SPN) provider ID so that the identity mapping is correct. With these values in place, a new resource group is created to house all associated assets, providing a clean boundary for management and eventual cleanup. Executing the Bicep deployment command triggers the creation of the underlying VM and its nested components, a process that can take several minutes depending on the chosen region’s capacity. It is important to select a region with sufficient vCPU quota, because the Standard E32s v5 or v6 SKUs each consume a large vCPU allocation. Monitoring the deployment through the portal provides real-time feedback, allowing for immediate intervention if resource limits are reached or if network constraints interfere with provisioning.
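The sequence described above might look like the following dry-run sketch, which builds the Azure CLI commands without executing them. Several values are assumptions: the service principal display name should be verified in your own tenant, `main.bicep` is assumed to be the template that accompanies main.bicepparam, and the resource group name and region are placeholders.

```python
import shlex

# Assumed display name of the Azure Local resource provider's service principal;
# confirm it in your own Microsoft Entra tenant before relying on it.
PROVIDER_DISPLAY_NAME = "Microsoft.AzureStackHCI Resource Provider"


def provider_object_id_command(display_name=PROVIDER_DISPLAY_NAME):
    """Look up the object ID of a service principal by display name."""
    return ["az", "ad", "sp", "list", "--display-name", display_name,
            "--query", "[].id", "--output", "tsv"]


def quota_command(location):
    """List compute usage and limits for a region, to check vCPU quota first."""
    return ["az", "vm", "list-usage", "--location", location, "--output", "table"]


def resource_group_command(name, location):
    """Create the resource group that will hold every LocalBox asset."""
    return ["az", "group", "create", "--name", name, "--location", location]


def deployment_command(resource_group, template="main.bicep",
                       parameters="main.bicepparam"):
    """Deploy the Bicep template with its parameter file (template name assumed)."""
    return ["az", "deployment", "group", "create",
            "--resource-group", resource_group,
            "--template-file", template,
            "--parameters", parameters]


if __name__ == "__main__":
    group, region = "rg-localbox", "eastus"  # placeholder values
    for command in (provider_object_id_command(), quota_command(region),
                    resource_group_command(group, region),
                    deployment_command(group)):
        print(shlex.join(command))
```

Keeping everything in one resource group also simplifies the eventual cleanup mentioned above, since deleting the group removes the lab in one operation.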

3. Remote Connectivity: Accessing the LocalBox Client

Once the infrastructure deployment is finalized, the next step is to establish a secure connection to the LocalBox-Client virtual machine to perform internal configurations. By default, management ports like RDP (3389) and SSH (22) are left closed to prevent unauthorized access from the public internet. To gain entry, one must navigate to the network settings of the newly created client VM within the Azure portal and manually define an inbound security rule. This rule should allow traffic on port 3389 and be given a high priority, which in a network security group means a low number such as 100, because rules are evaluated in ascending numeric order and the first match wins. Because the port is opened deliberately and only for this purpose, the step acts as a security gate that keeps management access closed until it is needed. Only after this network security group rule is applied can the user proceed to the overview tab to initiate a connection.
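To make the priority behavior concrete, here is a minimal sketch. The first part is a deliberately simplified model of rule evaluation that matches only on destination port; the second builds the equivalent `az network nsg rule create` command. The rule name, NSG name, resource group, and source address are placeholders, and limiting the source to a single known address is a suggestion of this sketch rather than a step from the walkthrough.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    name: str
    priority: int         # lower number = evaluated earlier
    port: Optional[int]   # None matches any destination port
    access: str           # "Allow" or "Deny"


def decide(rules, port):
    """Return the access of the first matching rule in ascending priority order."""
    for rule in sorted(rules, key=lambda r: r.priority):
        if rule.port is None or rule.port == port:
            return rule.access
    return "Deny"  # nothing matched: treat as denied in this simplified model


def allow_rdp_command(resource_group, nsg_name, source, priority=100):
    """Build the CLI command for an inbound RDP rule; user rules use priorities 100-4096."""
    if not 100 <= priority <= 4096:
        raise ValueError("priority must be between 100 and 4096")
    return ["az", "network", "nsg", "rule", "create",
            "--resource-group", resource_group, "--nsg-name", nsg_name,
            "--name", "AllowRdpInbound", "--priority", str(priority),
            "--direction", "Inbound", "--access", "Allow", "--protocol", "Tcp",
            "--destination-port-ranges", "3389",
            "--source-address-prefixes", source]


if __name__ == "__main__":
    rules = [Rule("DenyEverything", 4000, None, "Deny")]
    print("before:", decide(rules, 3389))
    rules.append(Rule("AllowRdpInbound", 100, 3389, "Allow"))
    print("after:", decide(rules, 3389))
    print(" ".join(allow_rdp_command("rg-localbox", "my-nsg", "203.0.113.10")))
```

The allow rule wins only because its number is lower than that of the broader deny rule; giving it a number above 4000 in this example would leave RDP blocked.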

The actual connection process is handled through the RDP protocol, which requires downloading a connection file directly from the Azure portal interface. Upon launching this file, the administrator must provide the credentials that were previously defined in the Bicep parameter file during the initial setup phase. Successfully logging into the LocalBox-Client reveals a pre-configured Windows environment tailored for cluster management. This machine acts as the primary workstation for interacting with the local cluster nodes and executing the PowerShell scripts required for final networking adjustments. It is within this environment that the bridge between the Azure cloud control plane and the local virtualized hardware is truly solidified. Maintaining this connection allows for a seamless transition into the creation of virtual machine images and the definition of the logical networks that will eventually host active workloads.
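For readers curious about what a connection file contains, the sketch below writes a minimal one by hand. It uses only two standard RDP settings, `full address` and `username`; the file downloaded from the portal may include additional settings, and the host address and user name shown here are placeholders.

```python
def rdp_file_text(host, username, port=3389):
    """Return the text of a minimal .rdp file for the given host and user."""
    if not 1 <= port <= 65535:
        raise ValueError("port must be between 1 and 65535")
    lines = [
        f"full address:s:{host}:{port}",  # target machine and port
        f"username:s:{username}",         # pre-filled user name; the password is still prompted
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder address (documentation range) and a hypothetical admin name.
    print(rdp_file_text("203.0.113.10", "labadmin"), end="")
```

The password is intentionally not stored in the file; it is entered at connection time using the credentials defined in the Bicep parameter file.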

4. Workload Orchestration: Building Virtual Machines

With the management client accessible, the focus transitions to the actual creation of virtual machines within the LocalBox environment, a process that mirrors production workflows on physical Azure Local clusters. The first requirement is the generation of a base VM image, which is most efficiently accomplished by importing a standard image from the Azure Marketplace directly into the local cluster storage. In the portal, users navigate to the cluster resource, select the VM Images menu, and define the parameters for the new image, including its name and the specific storage path. This image serves as the template for all subsequent virtual machine deployments, ensuring that every instance starts from a known, secure configuration. This centralized image management is a hallmark of the Azure Local experience, allowing administrators to maintain consistency across a distributed environment while leveraging the vast library of pre-configured OS options available in the cloud.

The final architectural component is a logical network that provides connectivity for the virtualized workloads. This is achieved by running the Configure-VMLogicalNetwork.ps1 script located on the LocalBox-Client machine, which maps the internal VLAN and subnet structure to a resource that the Azure portal can recognize. Once this network is visible in the resource group, the user can proceed to the Virtual Machines blade to create a new instance, such as a Windows Server VM. During the creation wizard, it is essential to attach the previously defined logical network and select the custom VM image created in the earlier step. This end-to-end integration, from marketplace image to logical network attachment, demonstrates how the Azure control plane manages edge resources. Upon completion, the new virtual machine becomes a functional part of the LocalBox environment, ready for application deployment, testing, or further infrastructure scaling.
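Running the script from an automation wrapper could be sketched as follows. The text does not say which folder holds the script, so the path must be supplied by the reader, and the one in the example is a placeholder. The sketch also assumes the script takes no arguments, which should be checked against the script itself.

```python
from pathlib import PureWindowsPath

SCRIPT_NAME = "Configure-VMLogicalNetwork.ps1"


def logical_network_command(script_path):
    """Build a PowerShell invocation for the logical network script."""
    path = PureWindowsPath(script_path)
    if path.name != SCRIPT_NAME:
        raise ValueError(f"expected a path ending in {SCRIPT_NAME}")
    # -NoProfile skips user profile scripts; -File runs the given script file.
    return ["powershell.exe", "-NoProfile", "-File", str(path)]


if __name__ == "__main__":
    # Placeholder location; substitute the real folder on the LocalBox-Client machine.
    print(logical_network_command(r"C:\path\to\Configure-VMLogicalNetwork.ps1"))
```

Checking the file name before building the command is a small guard against pointing the wrapper at the wrong script.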

5. Implementation Summary: Future Considerations

Deploying a virtualized environment with LocalBox demonstrates how modern cloud tools can replicate complex hardware configurations for testing and development. Because the setup starts from Azure Bicep, the process remains automated and repeatable, providing a reliable foundation for anyone looking to deepen their expertise in hybrid cloud management. Cloning the required repository and generating VM images from the marketplace keeps the environment both functional and consistent with the centralized image management used on physical Azure Local clusters. This methodical approach produces an infrastructure that supports active workloads without the traditional barriers of physical hardware procurement or complex manual networking. The successful launch of a virtual machine within this sandbox confirms that LocalBox is a viable tool for technical validation.

Looking ahead, those who have mastered this setup should consider exploring the integration of Azure Arc-enabled data services or advanced monitoring via Azure Monitor to further enhance their lab environments. The ability to manage these local resources with the same policy and security frameworks used for native cloud assets provides a significant advantage in maintaining a unified posture across diverse environments. As organizational needs evolve, the skills gained from configuring these logical networks and custom images will be directly transferable to large-scale production deployments. Continued experimentation with automation scripts and security rules will ensure that the infrastructure remains resilient against emerging threats and operational challenges. Ultimately, the successful implementation of LocalBox serves as a critical first step in a broader journey toward mastering the intersection of local compute and global cloud management.