Infrastructure engineering is no longer just about keeping servers running; it is about building resilient, automated systems that secure data at every hop. In this interview, we speak with Vijay Raina, a SaaS and software expert with deep experience in enterprise architecture. He sheds light on the intricacies of building a high-scale TLS termination layer, a project that redefined how his team managed thousands of custom domains while adhering to the rigorous demands of modern security.

The discussion explores the transition to Zero Trust architectures, the technical hurdles of automating certificate issuance at scale, and the clever use of runtime APIs to eliminate downtime during certificate rotations. Raina shares his insights on balancing security with high availability and the evolving landscape of encrypted traffic.

In a Zero Trust environment, why is it no longer sufficient to decrypt at the load balancer and send plaintext to backends? What specific technical hurdles arise when implementing re-encryption between the load balancer and internal application servers? Please provide step-by-step details on how this affects your overall latency.

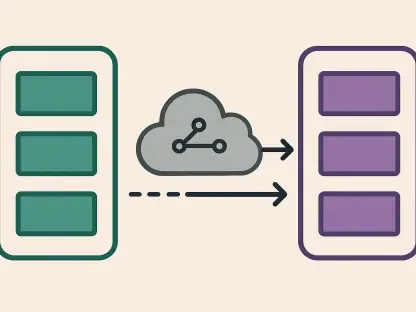

The old playbook of trusting the internal network is dead because once an attacker gains a foothold inside your VPC, plaintext traffic becomes a gift to them. In a Zero Trust model, we must assume the perimeter is already breached, which means encrypting every internal hop to ensure that data remains unreadable even to a lateral intruder. The primary technical hurdle is the overhead of managing a secondary set of internal certificates and the computational cost of performing a second handshake for every request. This process adds latency in three distinct steps: first, the initial decryption of the client’s TLS connection; second, the overhead of the load balancer establishing a new, encrypted TLS session with the backend; and third, the backend’s own CPU cycles spent decrypting that internal traffic. While this adds a few milliseconds to the round-trip time, it is a non-negotiable trade-off for 2025-era security standards.

When automating certificate issuance through HTTP-based validation, how do you manage token distribution across multiple load balancer nodes efficiently? What are the primary bottlenecks in the window between a domain being added and a certificate being hot-injected? Please share any metrics you use to track this flow.

We handle token distribution by using a shared storage location that all HAProxy nodes can access, ensuring that when DigiCert requests the validation file from any node, the response is consistent. The process relies on exposing the token via port 80 at a specific path, which avoids the “chicken and egg” problem of needing a certificate to get a certificate. The biggest bottlenecks are undoubtedly the time it takes for DigiCert to perform the validation and the inherent delays in global DNS propagation. We typically see a 10-minute window from the moment a customer adds a domain to the point where the certificate is live. To keep this on track, we monitor the “time-to-active” metric, ensuring our sync agents pick up and inject new certs within their 5-minute polling window.
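
To make the shared-storage idea concrete, here is a minimal Python sketch of the lookup each node could run for a port-80 validation request. It is an illustration under stated assumptions, not the team's actual code: the URL prefix, the per-hostname directory layout, and the name `resolve_token` are all hypothetical, since the interview only mentions "a specific path" and "a shared storage location."

```python
import re
from pathlib import Path
from typing import Optional

# Assumed URL prefix for validation files; the interview only says "a specific path".
VALIDATION_PREFIX = "/.well-known/pki-validation/"

# Conservative hostname check so the Host header cannot be used for path tricks.
_HOST_RE = re.compile(r"^[a-z0-9]([a-z0-9.-]*[a-z0-9])?$")


def resolve_token(shared_dir: Path, host: str, request_path: str) -> Optional[bytes]:
    """Return the token body for a validation request, or None (serve a 404).

    Assumed layout on the shared mount: <shared_dir>/<hostname>/<file name>.
    Every node reads the same mount, so any node gives the same answer.
    """
    hostname = host.split(":", 1)[0].lower()
    if not _HOST_RE.match(hostname):
        return None
    if not request_path.startswith(VALIDATION_PREFIX):
        return None
    name = request_path[len(VALIDATION_PREFIX):]
    # Accept a bare file name only, so a request cannot escape the directory.
    if not name or name in (".", "..") or name != Path(name).name:
        return None
    candidate = shared_dir / hostname / name
    if not candidate.is_file():
        return None
    return candidate.read_bytes()
```

Because the function reads only from the shared directory, it does not matter which node the certificate authority happens to reach.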

Using a runtime API to update certificates avoids the downtime associated with reloading. How does a local sync agent accurately reconcile state differences from a central vault, and what are the operational risks if the in-memory store falls out of sync with the local files? Please elaborate with an anecdote.

The sync agent acts as a stateful bridge, maintaining a local manifest of what should be in memory and comparing it against what is currently stored in our central KMS. It periodically performs a diff, pulls missing .pem files to /etc/haproxy/certs/, and then issues a socket command to the HAProxy runtime API to hot-inject the new data. The biggest risk of a desync is “phantom certificates,” where a file exists on disk but hasn’t been pushed to memory, leading to users seeing expired or incorrect certs. I recall a situation where a network blip interrupted the API call after the file was written; the agent’s local state thought the job was done, but HAProxy was still serving the old cert. We had to implement a double-verification step where the agent queries the HAProxy socket to confirm that the in-memory serial number matches that of the certificate on disk before marking the task as complete.
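
The diff, inject, and verify loop described here can be sketched in Python roughly as follows. This is a hedged illustration rather than the agent's real implementation. The socket path and the function names are hypothetical. The runtime commands used are `set ssl cert`, `commit ssl cert`, and `show ssl cert`, which update a certificate HAProxy already knows about. A brand-new certificate would first need `new ssl cert` and a crt-list entry, which this sketch omits.

```python
import socket
from typing import Callable, Dict, Optional, Set

# Hypothetical admin socket path; it depends on the "stats socket" line in haproxy.cfg.
HAPROXY_SOCKET = "/var/run/haproxy.sock"


def send_command(command: str, path: str = HAPROXY_SOCKET) -> str:
    """Send one command to the HAProxy admin socket and return its reply."""
    with socket.socket(socket.AF_UNIX, socket.SOCK_STREAM) as sock:
        sock.connect(path)
        sock.sendall(command.encode() + b"\n")
        chunks = []
        while True:
            data = sock.recv(4096)
            if not data:  # HAProxy closes the connection after replying
                break
            chunks.append(data)
    return b"".join(chunks).decode()


def plan_sync(vault: Dict[str, str], loaded: Dict[str, str]) -> Set[str]:
    """Names whose serial in the vault is missing from, or differs from, memory."""
    return {name for name, serial in vault.items() if loaded.get(name) != serial}


def parse_serial(show_output: str) -> Optional[str]:
    """Extract the 'Serial:' field from 'show ssl cert <file>' output."""
    for line in show_output.splitlines():
        if line.startswith("Serial:"):
            return line.split(":", 1)[1].strip().upper()
    return None


def inject_and_verify(cert_path: str, pem: str, expected_serial: str,
                      send: Callable[[str], str] = send_command) -> bool:
    """Hot-inject a PEM, then confirm HAProxy reports the expected serial."""
    # A '<<' payload ends at the first empty line, so blank lines are removed.
    payload = "\n".join(line for line in pem.splitlines() if line.strip())
    send(f"set ssl cert {cert_path} <<\n{payload}\n")
    send(f"commit ssl cert {cert_path}")
    reply = send(f"show ssl cert {cert_path}")
    # Only a matching in-memory serial counts as success.
    return parse_serial(reply) == expected_serial.upper()
```

The point of returning a boolean from `inject_and_verify` is that the agent would mark a certificate as done only when HAProxy itself reports the new serial, which is the double-verification step from the anecdote.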

Relying on Server Name Indication (SNI) enables thousands of domains to share one IP address. How does this architecture maintain low latency during the TLS handshake when searching through thousands of in-memory certificates? How do you prepare for the broader industry shift toward Encrypted Client Hello?

HAProxy is incredibly efficient because it loads all certificates into a memory-resident map at startup or during hot-injection, which eliminates disk I/O during the request path. When a client sends the hostname in the SNI extension, HAProxy performs a high-speed lookup in this map to present the correct certificate instantly. To prepare for Encrypted Client Hello (ECH), which encrypts that hostname to prevent metadata leaking to eavesdroppers, we are tracking the adoption of this TLS 1.3 extension. ECH will require a more complex initial handshake where the server uses the private key behind its published ECH configuration to decrypt the inner ClientHello before the actual target certificate can be selected. It’s a shift from plaintext lookups to a multi-layered decryption process, and we are ensuring our load-balancing layer has the CPU headroom to handle that extra work.
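
As a rough illustration of why the lookup stays cheap, the following Python sketch models certificate selection as a hash-map lookup with a single wildcard fallback. It is a simplified model, not HAProxy's internal data structure, and the name `select_certificate` and the sample file paths are hypothetical.

```python
from typing import Dict, Optional


def select_certificate(cert_map: Dict[str, str], sni: str) -> Optional[str]:
    """Pick a certificate for an SNI hostname using in-memory lookups only.

    cert_map maps lowercase hostnames, or wildcards such as "*.example.org",
    to a certificate identifier. The cost is a couple of hash lookups,
    whether the map holds ten entries or ten thousand.
    """
    name = sni.lower().rstrip(".")
    if name in cert_map:  # exact match wins
        return cert_map[name]
    # A wildcard covers exactly one label: "*.example.org" matches "a.example.org".
    _, dot, parent = name.partition(".")
    if dot:
        return cert_map.get("*." + parent)
    return None


certs = {
    "shop.example.com": "/etc/haproxy/certs/shop.example.com.pem",
    "*.example.org": "/etc/haproxy/certs/wildcard.example.org.pem",
}
```

With this map, `select_certificate(certs, "Shop.Example.com")` returns the exact-match entry, while `select_certificate(certs, "a.b.example.org")` returns `None` because the wildcard spans only one label.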

Managing certificates in a centralized KMS requires a balance of high security and constant availability. How is the sync agent designed to handle KMS connection failures, and what strategies ensure that thousands of certificates are securely stored yet quickly accessible? Please describe the sync process in detail.

The sync agent is designed with a “fail-safe local” philosophy, meaning it always prioritizes the certificates it has already successfully cached on the local filesystem. If the KMS is unreachable, the agent continues to serve the existing certificates and enters a retry loop with exponential backoff to avoid hammering the security service once it recovers. The process involves the agent fetching an encrypted payload from the KMS, decrypting it using node-specific credentials, and writing the result to a restricted local directory. By decoupling the KMS fetch from the request path, we ensure that a central outage doesn’t drop existing traffic; it only delays the provisioning of new domains. This tiered approach—KMS to local disk, then local disk to in-memory store—provides the speed of local access with the security of a central vault.
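
The “fail-safe local” behavior with exponential backoff might look something like the sketch below. It is written under assumptions: the function names, the base and cap values, the use of random jitter, and the bounded number of attempts per sync cycle are illustrative choices, not details from the interview.

```python
import random
import time
from typing import Callable, Dict, Iterator, Optional, Tuple


def backoff_delays(base: float = 1.0, cap: float = 300.0,
                   rng: Callable[[], float] = random.random) -> Iterator[float]:
    """Yield exponentially growing delays, capped, with random jitter."""
    attempt = 0
    while True:
        ceiling = min(cap, base * (2 ** attempt))
        # Jitter spreads retries out so nodes do not hit the KMS in lockstep.
        yield ceiling * rng()
        attempt = min(attempt + 1, 32)  # keep the exponent bounded


def fetch_with_fallback(fetch: Callable[[], Dict[str, str]],
                        cached: Dict[str, str],
                        max_attempts: int = 5,
                        sleep: Callable[[float], None] = time.sleep,
                        delays: Optional[Iterator[float]] = None,
                        ) -> Tuple[Dict[str, str], bool]:
    """Try the KMS a few times; on failure keep serving the cached certificates.

    Returns (certificates, fresh), where fresh is False if the cache was used.
    """
    if delays is None:
        delays = backoff_delays()
    for _ in range(max_attempts):
        try:
            return fetch(), True
        except ConnectionError:
            sleep(next(delays))
    # The KMS is still down: existing traffic is unaffected, and only newly
    # added domains wait until a later sync cycle succeeds.
    return cached, False
```

Nothing in this path touches live requests, which mirrors the decoupling of the KMS fetch from the request path described above.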

Scaling to thousands of custom domains often involves using CNAME records rather than A records for backend flexibility. How does this choice impact your DNS management at scale, and what are the trade-offs when serving validation tokens over port 80? Please provide a step-by-step explanation of your DNS strategy.

Using CNAMEs is a game-changer for infrastructure agility because it allows us to point all customer domains to a single internal identifier. If we need to migrate our three HAProxy nodes to a new set of IP addresses, we only change one record on our end instead of asking 3,000 customers to update theirs. Our strategy begins with the customer creating a CNAME pointing to our load balancer. Then, for Domain Control Validation (DCV), we serve a token over port 80 because it is the only way to prove ownership before a valid TLS certificate exists. The trade-off is a brief moment of unencrypted traffic for that validation file, but since it contains no sensitive data, only a random string, the risk is negligible. This sequence of CNAME pointing, token serving, DigiCert validation, and finally certificate issuance creates a seamless onboarding flow.
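
The benefit of the CNAME indirection can be shown with a toy resolver in Python. This is a simplified model of DNS rather than a real lookup, and every hostname and address in it is a made-up placeholder drawn from documentation ranges.

```python
from typing import Dict, List


def resolve(name: str, cnames: Dict[str, str],
            a_records: Dict[str, List[str]], max_hops: int = 8) -> List[str]:
    """Follow CNAMEs until an A record is found; return its addresses."""
    for _ in range(max_hops):
        if name in a_records:
            return a_records[name]
        if name not in cnames:
            return []  # no record at all
        name = cnames[name]
    raise RuntimeError("CNAME chain too long or looping")


# Customer-owned records: each one points at a single provider-owned name.
cnames = {
    "shop.customer-a.example": "edge.saas.example",
    "store.customer-b.example": "edge.saas.example",
}
# Provider-owned record: the only thing that changes during a migration.
a_records = {"edge.saas.example": ["192.0.2.10", "192.0.2.11", "192.0.2.12"]}

# Migrating the load balancers means editing one record on the provider side.
a_records["edge.saas.example"] = ["198.51.100.1", "198.51.100.2", "198.51.100.3"]
```

After the last line, every customer hostname resolves to the new addresses without any customer having touched their own DNS zone.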

What is your forecast for the future of high-scale TLS termination and Zero Trust architectures?

I believe we are moving toward a “Double-Encryption” standard where hardware acceleration shrinks the performance penalty of TLS-within-TLS so far that it becomes the default for every single packet, regardless of whether it is internal or external. As Encrypted Client Hello becomes the norm, the “privacy-first” internet will make metadata as protected as the data itself, forcing load balancers to become even more sophisticated in how they route traffic without seeing the hostname in the clear. My advice for readers is to stop thinking of TLS as a “perimeter” task and start treating every service-to-service call as a public-facing connection; building that automation today is the only way to survive the scale and security threats of tomorrow.