Have you ever poured hours into debugging a Java application only to discover that a simple encoding mismatch turned your data into gibberish across platforms? This frustrating scenario has plagued developers for decades, with text files misbehaving when moved between systems due to inconsistent character sets. In a world where global collaboration and cross-platform compatibility define modern software, Java’s decision to standardize UTF-8 as the default charset in JDK 18 marks a pivotal shift. This isn’t just a technical update—it’s a lifeline for those battling encoding chaos in their codebases.

The significance of this change is hard to overstate. With applications now spanning diverse environments, from cloud servers to localized desktops, consistent data interpretation is critical. JDK Enhancement Proposal (JEP) 400, delivered in JDK 18, addresses a longstanding pain point by making UTF-8 the default charset of the standard Java APIs. As a result, code that relies on the default behaves the same regardless of operating system, locale, or configuration. This move reduces bugs and also aligns Java with the encoding that already dominates the web and most modern tooling, which smooths the path for developers facing multi-platform challenges. Let’s dive into how this transformation unfolded and why it matters today.

A Critical Shift in Java’s Journey: Why Encoding Is Key

Java’s evolution has always aimed at robustness, but character encoding remained a silent thorn in its side for years. Before JDK 18, the default charset was derived from the host operating system and locale, which made behavior unpredictable. A program could work flawlessly in one environment and fail in another purely because the defaults differed. Typical defaults were windows-1252 on English-language Windows, UTF-8 on macOS and on Linux systems configured with a UTF-8 locale, and other legacy encodings elsewhere.

The stakes for fixing this issue have grown higher as software development becomes increasingly globalized. With teams collaborating across continents and systems, the need for a standardized approach to text handling became urgent. JEP 400’s introduction of UTF-8 as the default charset responds directly to this demand, offering a unified solution that minimizes errors and boosts confidence in cross-platform deployments.

This shift also reflects Java’s broader commitment to modernization. By addressing encoding inconsistencies, the platform ensures that developers can focus on innovation rather than troubleshooting obscure bugs. The decision to prioritize charset uniformity speaks to an era where seamless integration across diverse systems is no longer optional but essential for success.

The Mess of Platform-Specific Encoding: Java’s Past Struggles

Delving into Java’s history reveals a landscape riddled with encoding pitfalls. Since JDK 1.1, classes such as FileReader and FileWriter have used the host system’s default charset whenever none is specified. Until JDK 11 they also offered no constructor that accepted a charset at all, so choosing an encoding meant wrapping a stream in InputStreamReader or OutputStreamWriter instead. This design choice may have seemed practical at the time, but it caused real damage: files moved between systems with different defaults often arrived corrupted or unreadable.

Consider a typical scenario: a developer in Europe writes a file on a system defaulting to ISO-8859-1, and a colleague in Asia reads it on a system defaulting to UTF-8. Neither side specifies a charset explicitly. An accented character such as “é” is stored as the single byte 0xE9 in ISO-8859-1, which is not valid UTF-8, so it arrives as a replacement character. Errors like this are maddeningly hard to trace. Such issues were not rare; they disrupted workflows, especially during system upgrades or migrations, and cost countless hours of debugging.
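The failure in this scenario is easy to reproduce in a few lines. The sketch below is an illustration, not code from any real project, and the class and method names are hypothetical. It simulates the two machines by encoding a string with one charset and decoding the bytes with another.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical demo class: simulates writing text on one system and reading it on another.
public class EncodingMismatch {

    // Written as ISO-8859-1, read as UTF-8. "é" (U+00E9) becomes the single byte 0xE9,
    // which is an incomplete UTF-8 sequence, so the decoder substitutes U+FFFD.
    static String latin1ReadAsUtf8(String text) {
        byte[] onDisk = text.getBytes(StandardCharsets.ISO_8859_1);
        return new String(onDisk, StandardCharsets.UTF_8);
    }

    // Written as UTF-8, read as ISO-8859-1. "é" becomes the two bytes 0xC3 0xA9,
    // which ISO-8859-1 decodes as two characters: "Ã" (U+00C3) and "©" (U+00A9).
    static String utf8ReadAsLatin1(String text) {
        byte[] onDisk = text.getBytes(StandardCharsets.UTF_8);
        return new String(onDisk, StandardCharsets.ISO_8859_1);
    }

    public static void main(String[] args) {
        // "café", escaped so the source file's own encoding cannot interfere
        String original = "caf\u00E9";
        System.out.println(latin1ReadAsUtf8(original));
        System.out.println(utf8ReadAsLatin1(original));
    }
}
```

Neither direction throws an exception, because `new String(byte[], Charset)` silently replaces malformed input. That is exactly why such bugs tend to surface late.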

These challenges highlight a critical flaw in Java’s early architecture. The lack of a consistent default charset created a breeding ground for bugs, particularly in applications spanning multiple environments. It became clear that a universal standard was necessary to eliminate these discrepancies and safeguard data integrity across platforms.

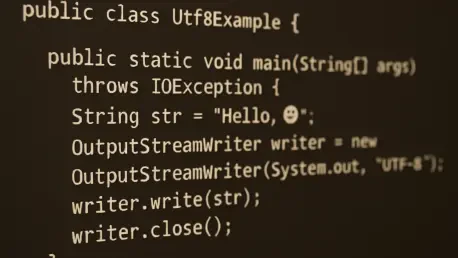

Decoding JEP 400: UTF-8 as the Ultimate Fix

Enter JEP 400, a proposal that redefined Java’s approach to character encoding with its rollout in JDK 18. By establishing UTF-8 as the default charset of the standard Java APIs, this update directly tackles the inconsistency that haunted developers for years. UTF-8 can represent every Unicode character while remaining byte-for-byte compatible with ASCII, which makes it a natural fit for a globally connected software ecosystem.

Take, for instance, a pre-JDK 18 application that writes a text file without specifying a charset. On one system it might write windows-1252, and on another ISO-8859-1 or UTF-8, so the resulting bytes could be misread elsewhere. From JDK 18 onward, the same code uses UTF-8 by default and behaves identically across environments. This standardization is specified in JEP 400 and reflected in the documentation of Charset.defaultCharset(). It eliminates guesswork and reduces the risk of data corruption.
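To see which default a given JVM actually uses, the hypothetical program below writes a short string with FileWriter without naming a charset and counts the bytes that land on disk. It uses only standard JDK APIs (`Files.createTempFile`, `FileWriter(File)`, `Charset.defaultCharset()`). The class and method names are illustrative.

```java
import java.io.FileWriter;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.io.Writer;
import java.nio.charset.Charset;
import java.nio.file.Files;
import java.nio.file.Path;

// Hypothetical demo: shows which charset FileWriter uses when none is specified.
public class DefaultCharsetDemo {

    // Writes text through FileWriter with NO charset argument, so the JVM default applies,
    // and returns the raw bytes that ended up in the file.
    static byte[] writeWithDefaultCharset(String text) {
        try {
            Path tmp = Files.createTempFile("jep400-demo", ".txt");
            try {
                try (Writer writer = new FileWriter(tmp.toFile())) {
                    writer.write(text);
                }
                return Files.readAllBytes(tmp);
            } finally {
                Files.deleteIfExists(tmp);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        // On JDK 18+ this reports UTF-8 unless the default was overridden at launch.
        System.out.println("Default charset: " + Charset.defaultCharset());

        byte[] bytes = writeWithDefaultCharset("caf\u00E9"); // "café"
        // UTF-8 needs 5 bytes (é takes two); single-byte charsets such as windows-1252 need 4.
        System.out.println("Bytes written: " + bytes.length);
    }
}
```

Running the same class on JDK 17 and JDK 18 on a Windows machine is a quick way to observe the difference. On macOS or under a UTF-8 Linux locale, the two releases will typically agree.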

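Importantly, flexibility remains intact. Launching the JVM with -Dfile.encoding=COMPAT restores the pre-JDK 18, platform-dependent default. The native.encoding system property, added in JDK 17, reports what that platform encoding is. For individual files and streams, passing a Charset to constructors such as InputStreamReader, OutputStreamWriter, or (since JDK 11) FileReader and FileWriter overrides the default locally. This balance of consistency and adaptability makes JEP 400 a practical solution: it provides a safer baseline while still accommodating specialized needs in diverse projects.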
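The following sketch pins charsets explicitly instead of relying on any default. It reads the `native.encoding` system property, which is available since JDK 17 and reports the encoding the platform would have chosen before JEP 400. It also uses the `FileReader(File, Charset)` constructor added in JDK 11. The helper names are hypothetical, and the fallback for JVMs without `native.encoding` is an assumption of this example.

```java
import java.io.FileReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringWriter;
import java.io.UncheckedIOException;
import java.nio.charset.Charset;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

// Hypothetical helpers for code that must not depend on the default charset.
public class ExplicitCharsets {

    // The platform encoding that pre-JDK 18 releases used as the default.
    // native.encoding exists since JDK 17; on older JVMs this sketch assumes the
    // default charset is still the platform one and falls back to it.
    static Charset nativeCharset() {
        String name = System.getProperty("native.encoding");
        return name != null ? Charset.forName(name) : Charset.defaultCharset();
    }

    // Reads a whole file using an explicitly named charset (FileReader(File, Charset), JDK 11+).
    static String readAll(Path file, Charset charset) {
        try (Reader reader = new FileReader(file.toFile(), charset)) {
            StringWriter out = new StringWriter();
            reader.transferTo(out); // Reader.transferTo exists since JDK 10
            return out.toString();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Writes text in one explicit charset and reads it back in the same charset.
    static String roundTrip(String text, Charset charset) {
        try {
            Path tmp = Files.createTempFile("explicit-charset", ".txt");
            try {
                Files.writeString(tmp, text, charset); // JDK 11+
                return readAll(tmp, charset);
            } finally {
                Files.deleteIfExists(tmp);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("file.encoding   = " + System.getProperty("file.encoding"));
        System.out.println("native.encoding = " + System.getProperty("native.encoding"));
        System.out.println("Round trip (ISO-8859-1): "
                + roundTrip("caf\u00E9", StandardCharsets.ISO_8859_1));
    }
}
```

Naming the charset at each call site keeps the choice visible in the code. The JVM-wide `-Dfile.encoding=COMPAT` switch is blunter, because it changes every API that depends on the default.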

Echoes from the Community: Developers Cheer the UTF-8 Standard

The transition to UTF-8 didn’t emerge in a vacuum; it was fueled by years of feedback from the Java community. Discussions surrounding JEP 400 reveal a shared frustration over encoding errors that plagued legacy systems. One seasoned developer noted in a forum post, “I’ve lost days fixing charset mismatches in production code. UTF-8 as default is a long-overdue relief.”

This sentiment echoes across the industry, where UTF-8’s dominance in web standards and software frameworks made it a natural choice for Java. Its adoption enhances portability, so applications behave predictably whether deployed on a Linux server or a Windows desktop. Community consensus, as reflected in various developer blogs, treats this change as a significant step toward fewer encoding errors.

Beyond technical benefits, the emotional weight of this update resonates deeply. Developers who once grappled with obscure bugs in international projects now see a clearer path forward. Their collective voice underscores why standardizing on UTF-8 isn’t just a feature—it’s a response to real human challenges faced in the trenches of software development.

Adapting to the Change: Guidance for Java Developers

With UTF-8 now the default charset, navigating this new landscape requires a blend of awareness and strategy. Developers can generally rely on the default for most I/O operations, since it now behaves the same across platforms. Testing in varied environments remains crucial to confirm that text handling behaves as expected under the new standard. JEP 400 suggests running existing applications on an earlier JDK with -Dfile.encoding=UTF-8 to surface problems before upgrading.
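One lightweight way to make that testing concrete is a check that text survives a round trip through whatever default charset the current JVM uses. The hypothetical helper below relies only on the no-argument `String.getBytes()` and `String(byte[])`, both of which use the default charset. Running the same check on JDK 18+ and again with `-Dfile.encoding=COMPAT` (or on an older JDK) shows where non-ASCII text would be lost.

```java
import java.nio.charset.Charset;

// Hypothetical test helper: does text survive encoding and decoding with the default charset?
public class DefaultCharsetCheck {

    static boolean survivesDefaultCharset(String text) {
        byte[] encoded = text.getBytes();     // no charset argument: uses Charset.defaultCharset()
        String decoded = new String(encoded); // likewise decodes with the default charset
        return decoded.equals(text);
    }

    public static void main(String[] args) {
        // "hello", "café", "日本語"
        String[] samples = {"hello", "caf\u00E9", "\u65E5\u672C\u8A9E"};
        System.out.println("Default charset: " + Charset.defaultCharset());
        for (String s : samples) {
            // Under a UTF-8 default all three survive; under windows-1252, "日本語" becomes "???".
            System.out.println(s + " -> " + survivesDefaultCharset(s));
        }
    }
}
```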

For projects interacting with legacy systems, explicit charset specifications are still vital. Using constructors to define encodings or leveraging JVM flags can address edge cases where UTF-8 might not align with older requirements. A practical tip is to document charset choices in code comments, ensuring team members understand the rationale behind deviations from the default.
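When it is unclear whether data from a legacy system is really UTF-8, a strict decoder can help. With `CodingErrorAction.REPORT`, the decoder throws on malformed input instead of substituting U+FFFD. The sketch below uses that check to choose between UTF-8 and a fallback charset. The names are hypothetical, and ISO-8859-1 as the fallback is only an example. The check is also a heuristic: some byte sequences are valid in both encodings (pure ASCII, for instance), so a successful UTF-8 decode does not prove how the data was written.

```java
import java.nio.ByteBuffer;
import java.nio.charset.CharacterCodingException;
import java.nio.charset.Charset;
import java.nio.charset.CharsetDecoder;
import java.nio.charset.CodingErrorAction;
import java.nio.charset.StandardCharsets;

// Hypothetical helper for data arriving from legacy systems of uncertain encoding.
public class LegacyDecoding {

    // Strict check: REPORT makes decode() throw instead of inserting U+FFFD.
    static boolean isValidUtf8(byte[] bytes) {
        CharsetDecoder decoder = StandardCharsets.UTF_8.newDecoder()
                .onMalformedInput(CodingErrorAction.REPORT)
                .onUnmappableCharacter(CodingErrorAction.REPORT);
        try {
            decoder.decode(ByteBuffer.wrap(bytes));
            return true;
        } catch (CharacterCodingException e) {
            return false;
        }
    }

    // Prefer UTF-8; fall back to the named legacy charset only when the bytes are not valid UTF-8.
    // Record the reason for the fallback in a comment, e.g. (hypothetical)
    // "export files from the old billing system are ISO-8859-1".
    static String decode(byte[] bytes, Charset legacyFallback) {
        Charset chosen = isValidUtf8(bytes) ? StandardCharsets.UTF_8 : legacyFallback;
        return new String(bytes, chosen);
    }

    public static void main(String[] args) {
        byte[] fromModernSystem = "caf\u00E9".getBytes(StandardCharsets.UTF_8);
        byte[] fromLegacySystem = "caf\u00E9".getBytes(StandardCharsets.ISO_8859_1);
        System.out.println(decode(fromModernSystem, StandardCharsets.ISO_8859_1));
        System.out.println(decode(fromLegacySystem, StandardCharsets.ISO_8859_1));
    }
}
```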

Ultimately, the goal is to build robust applications that thrive in global contexts. By balancing the benefits of UTF-8 with mindful overrides when necessary, developers can harness JEP 400’s full potential. This approach not only mitigates risks but also empowers teams to deliver reliable software, no matter where it’s deployed.

Reflecting on a Milestone: What Came Next

Looking back, Java’s adoption of UTF-8 as the default charset through JEP 400 proved to be a transformative moment for the platform. It addressed a persistent source of frustration, smoothing out the wrinkles of cross-platform text handling with a universal standard. Developers who once dreaded encoding mismatches gained a new stability in their workflows.

The ripple effects of this change extended into subsequent releases. JDK 19, for example, added the stdout.encoding and stderr.encoding system properties, which report the encoding used by System.out and System.err separately from the default charset. The change also set a precedent for tackling historical pain points with bold, community-driven solutions. The focus shifted from merely fixing bugs to proactively building a more cohesive development experience.

As the Java landscape continued to evolve, the lesson from this update remained clear: adaptability was key. Developers were encouraged to stay vigilant, testing charset behaviors in diverse scenarios and sharing insights with peers. Embracing ongoing education and collaboration became the next step, ensuring that the benefits of standardized encoding endured across future innovations.