The widespread fascination with quantum computing has reached a fever pitch, fueled by a relentless stream of corporate announcements that promise to redefine the limits of human calculation and industrial production. For several years, the narrative surrounding this technology has been one of imminent revolution, with industry titans suggesting that a “quantum advantage” is just around the corner, yet the actual timeline for practical deployment continues to shift further into the distance. This persistent gap between theoretical potential and engineering reality has created what many experts now describe as a quantum mirage, where the goal of a commercially viable system remains perpetually two to three years away regardless of the current date. While the underlying physics of these machines is fundamentally sound, the transition from high-level laboratory experiments to rugged, industrial-grade applications is proving to be one of the most significant technical challenges of the modern era. The industry currently finds itself caught in a high-stakes tension, balancing the need for massive capital investment with the sobering realization that the scaling laws of quantum hardware are far more unforgiving than those of classical silicon.

Current hardware milestones, such as those demonstrated by the latest superconducting processors, represent genuine feats of human ingenuity but often fail to translate into immediate real-world utility. For instance, high-profile experiments involving molecular simulations or complex sampling tasks are frequently performed on a scale so small that they lack the complexity required for meaningful drug discovery or materials science research. A simulation of a dozen atoms is a profound scientific achievement, yet it remains light-years away from modeling the thousands of atoms found in a single protein or an industrial catalyst. This disconnect creates a strategic delusion where symbolic benchmarks are mistaken for functional tools, leading to a developmental trajectory that appears to be moving forward while the most difficult obstacles remain unaddressed. As researchers encounter the true complexity of maintaining quantum states at scale, the exuberant marketing of the past few years is increasingly being tempered by the hard realities of noise, decoherence, and the massive infrastructure required to keep these delicate systems operational in a world full of interference.

The Mathematical Chasm: Requirements for Error Correction

The primary obstacle preventing quantum computers from moving beyond the experimental phase is the extreme fragility of physical qubits, which are the basic units of quantum information. Unlike classical bits that are either a one or a zero and are physically robust, qubits exist in a state of superposition that is easily disrupted by the slightest environmental noise, such as heat, vibration, or electromagnetic radiation. This phenomenon, known as decoherence, typically occurs within microseconds or milliseconds, causing the quantum information to “leak” and making long, complex calculations impossible. To build a machine that can actually perform useful work, the industry must move away from relying on these individual physical qubits and instead develop “logical qubits.” These are virtual units of information created by grouping hundreds or even thousands of physical qubits together using sophisticated error-correction codes. By checking for and correcting errors in real-time, a logical qubit can remain stable long enough to execute a meaningful algorithm, but the overhead required for this process is nothing short of astronomical.
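A rough sketch makes the fragility concrete. It assumes every gate fails independently with the same probability, which is a simplification, and the 0.1% per-gate error rate is an illustrative figure rather than a measurement from any particular device. The function name is hypothetical.

```python
def circuit_success_probability(gate_error: float, num_gates: int) -> float:
    """Chance that no gate fails, assuming independent and identical gate errors."""
    if not 0.0 <= gate_error <= 1.0:
        raise ValueError("gate_error must be between 0 and 1")
    if num_gates < 0:
        raise ValueError("num_gates must be non-negative")
    return (1.0 - gate_error) ** num_gates


if __name__ == "__main__":
    # Illustrative per-gate error rate (an assumption, not a device spec).
    assumed_gate_error = 1e-3
    for n in (100, 1_000, 10_000, 100_000):
        p = circuit_success_probability(assumed_gate_error, n)
        print(f"{n:>7,} gates -> success probability {p:.3g}")
```

Under these assumptions a 1,000-gate circuit completes without error only about 37% of the time, and the odds collapse quickly beyond that, which is why long algorithms need error correction instead of raw physical qubits.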

Current research suggests that the ratio of physical to logical qubits is one of the most daunting metrics in all of computer science, with commonly cited estimates calling for at least 1,000 physical qubits to sustain a single stable logical qubit. If a transformative algorithm for breaking encryption or discovering a new carbon-capture material requires 10,000 logical qubits, the underlying hardware would need to support upwards of 10 million physical qubits. When compared to the state-of-the-art systems of 2026, which are only just beginning to experiment with a few hundred or a thousand physical qubits, the scale of the missing infrastructure becomes clear: the shortfall is roughly four orders of magnitude. A gap of that size is not something that can be bridged through incremental optimization alone; it requires a fundamental shift in how quantum chips are manufactured and managed. Without a breakthrough that significantly lowers the error rates of physical qubits or a more efficient error-correction scheme, the path to a high-impact quantum computer will remain blocked by a mathematical wall that no amount of venture capital can circumvent.
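The arithmetic behind that gap can be checked in a few lines. The sketch below uses only the figures quoted in this section (1,000 physical qubits per logical qubit, 10,000 logical qubits, and roughly 1,000 physical qubits available today); the function names are hypothetical.

```python
import math


def physical_qubits_needed(logical_qubits: int, physical_per_logical: int) -> int:
    """Total physical qubits required for a given error-correction overhead."""
    return logical_qubits * physical_per_logical


def orders_of_magnitude_gap(required: int, available: int) -> float:
    """Base-10 logarithm of the ratio between required and available qubits."""
    return math.log10(required / available)


if __name__ == "__main__":
    required = physical_qubits_needed(logical_qubits=10_000, physical_per_logical=1_000)
    gap = orders_of_magnitude_gap(required, available=1_000)
    print(f"Physical qubits required: {required:,}")
    print(f"Gap versus a 1,000-qubit device: about {gap:.0f} orders of magnitude")
```

Changing `physical_per_logical` shows how sensitive the total is to the quality of the error-correction scheme: halving the overhead halves the hardware requirement, but the gap remains measured in orders of magnitude.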

Engineering Obstacles: Cryogenics and Interconnects

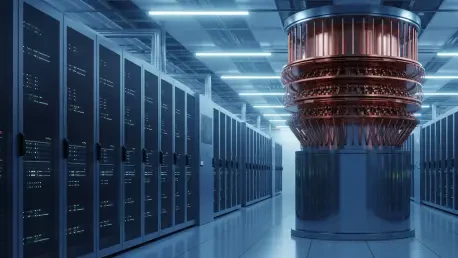

Beyond the abstract mathematical requirements lies a physical reality that is equally challenging: the necessity of maintaining extreme cryogenic environments for superconducting quantum processors. These systems must operate at temperatures around 15 millikelvin, far colder than the roughly 2.7 kelvin background temperature of deep space, requiring massive and expensive dilution refrigerators to function. As the industry attempts to scale from hundreds to thousands of qubits, the thermal footprint of these machines begins to exceed the cooling capacity of even the most advanced refrigeration technology. Every additional qubit and its associated control wiring introduces a small amount of heat into the system, and at such low temperatures, even a microscopic increase in thermal energy can trigger decoherence and ruin a computation. This thermodynamic limit suggests that we cannot simply build larger refrigerators or stack them together; rather, we must find ways to make the qubits themselves more resilient or find new methods of delivering control signals that do not bleed heat into the core of the machine.

The “wiring crisis” represents another significant bottleneck that threatens to stall progress in the near term, as each individual qubit currently requires dedicated coaxial cables for manipulation and measurement. In a system with a few hundred qubits, the interior of a quantum computer already resembles a dense “spaghetti of wires” that must be meticulously routed from room-temperature electronics down to the cryogenic core. Scaling this architecture to the millions of physical qubits required for error-corrected computation is physically impossible under current designs, as the volume of the cables alone would eventually exceed the size of the cooling chamber. While engineers are exploring multiplexing techniques to send multiple signals down a single line and looking into cryogenic CMOS controllers that can sit inside the refrigerator, these solutions often introduce new sources of noise and error. Furthermore, the physical layout of qubits on a two-dimensional grid limits their ability to interact with distant neighbors, forcing the system to move data across the chip via “SWAP” operations that consume precious time and increase the likelihood of computational failure.
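The cost of limited connectivity can be estimated with a simple model. The sketch below assumes a square grid where only nearest neighbors can interact, moves one qubit along a shortest path until it sits next to its partner, and uses the standard decomposition of a SWAP into three CNOT gates. Real compilers use smarter routing, so treat the result as an illustrative estimate; all names are hypothetical.

```python
from typing import Tuple

Coord = Tuple[int, int]  # (row, column) position of a qubit on the grid

CNOTS_PER_SWAP = 3  # standard decomposition of one SWAP into three CNOTs


def manhattan_distance(a: Coord, b: Coord) -> int:
    """Number of grid steps between two qubits."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def swaps_to_make_adjacent(a: Coord, b: Coord) -> int:
    """SWAPs needed to bring qubit a next to qubit b along a shortest path."""
    return max(manhattan_distance(a, b) - 1, 0)


def extra_cnots(a: Coord, b: Coord) -> int:
    """Additional CNOT gates spent on routing before the intended gate runs."""
    return CNOTS_PER_SWAP * swaps_to_make_adjacent(a, b)


if __name__ == "__main__":
    source, target = (0, 0), (3, 4)
    print("SWAPs:", swaps_to_make_adjacent(source, target))
    print("Extra CNOTs:", extra_cnots(source, target))
```

Each of those extra gates takes time and carries its own chance of error, so a single interaction between distant qubits can cost far more than the one gate the algorithm actually asked for.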

The Resilient Standard: Competition With Classical Systems

A frequent oversight in the discussion of quantum potential is the relentless and rapid evolution of classical computing, which continues to provide a moving target for quantum supremacy claims. Every time a quantum breakthrough is announced, the classical computing community responds by developing more efficient algorithms or leveraging the massive parallel processing power of modern AI accelerators to close the performance gap. This competitive dynamic is particularly visible in the field of optimization, where classical solvers used in logistics, finance, and manufacturing have benefited from decades of refinement and are deeply integrated into global supply chains. For a quantum computer to be truly useful, it must do more than just match the speed of a supercomputer on a cherry-picked mathematical problem; it must provide a decisive and reproducible economic advantage over hardware that is already cheap, reliable, and widely available. To date, demonstrating such an advantage for a real-world business problem remains an elusive goal that is often overshadowed by the convenience of existing silicon-based solutions.

The historical pattern of “quantum supremacy” benchmarks illustrates this phenomenon perfectly, as early claims of quantum dominance were often followed by classical optimizations that reduced the perceived gap from years to mere days or hours. Classical hardware benefits from a mature ecosystem of software developers, standardized languages, and a global manufacturing base that can produce chips by the billions. In contrast, quantum machines are bespoke instruments that require specialized environments and highly trained personnel to operate. Even in areas where quantum algorithms have a theoretical edge, such as certain types of machine learning or chemical simulation, classical approximations are becoming increasingly sophisticated, often delivering “good enough” results at a fraction of the cost. This means that for the foreseeable future, quantum computers will likely serve as specialized co-processors for very specific tasks rather than general-purpose replacements for classical systems. The burden of proof remains on the quantum industry to show that its machines can offer more than just a marginal improvement over the increasingly powerful classical tools available to enterprises today.

Economic Constraints: Talent and Strategic Investment

The economic landscape of quantum computing differs significantly from the recent explosion of Artificial Intelligence, primarily due to the lack of immediate, production-ready applications that can drive a self-sustaining labor market. While AI saw a “gold rush” of talent as developers realized they could build and deploy useful tools almost immediately, quantum computing remains a niche academic pursuit that requires years of specialized training in physics and mathematics. There is currently a notable disconnect between the number of PhDs being produced and the number of actual jobs available for quantum software engineers outside of a few dozen research labs. This talent bottleneck suggests that the industry is still firmly in a research and development phase, lacking the organic education pipeline and the broad developer community needed to trigger a true technological revolution. Without a “killer app” that requires thousands of programmers to implement, the field struggles to attract the same level of grassroots interest that has propelled other areas of the tech industry.

Corporate investment in quantum technology is currently driven less by a search for immediate return on investment and more by defensive strategic positioning among the world’s largest technology firms. Companies like IBM, Google, and Microsoft are essentially funding multi-decade research projects as a hedge against their competitors, fearing the catastrophic risk of being left behind if a breakthrough in cryptanalysis or materials science occurs. These organizations maintain “loss-leader” cloud services that allow academic researchers to experiment with their hardware, primarily to build an ecosystem and cultivate mindshare for a future that may still be decades away. However, this strategy is inherently fragile, as it relies on the continued willingness of boards and shareholders to subsidize a technology that has yet to generate significant revenue. If the promised milestones continue to slip, there is a real risk of a “quantum winter,” where a loss of confidence leads to a sharp reduction in funding, potentially stalling progress just as the engineering challenges become most acute.

Proactive Defense: The Shift to Post-Quantum Standards

While the arrival of a powerful quantum computer is still a distant prospect, its projected capabilities have already triggered one of the most significant shifts in the history of global digital security. Shor’s algorithm, the mathematical result showing that a fault-tolerant quantum machine could break the RSA and ECC public-key encryption that protects everything from bank transfers to government secrets, has forced a massive migration toward post-quantum cryptography (PQC). This is the only area where quantum technology is currently exerting a direct and tangible impact on the global economy, as organizations are being forced to spend millions of dollars to upgrade their infrastructure. This defensive movement is driven by the “harvest now, decrypt later” threat, where adversaries collect encrypted data today with the intention of decrypting it years from now once the hardware becomes available. Consequently, the transition to PQC is not an optional upgrade but a mandatory requirement for any entity that handles sensitive long-term data.
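The “harvest now, decrypt later” reasoning is often summarized by a rule of thumb known as Mosca’s inequality: data is exposed if the time it must stay secret plus the time needed to migrate exceeds the time until a capable quantum machine exists. The sketch below encodes that comparison. The function name is hypothetical, and the year values in the example are placeholders an organization would replace with its own estimates.

```python
def exposed_to_harvest_now_decrypt_later(
    secrecy_lifetime_years: float,
    migration_years: float,
    years_until_quantum_attack: float,
) -> bool:
    """Mosca's inequality: exposed when secrecy lifetime + migration time
    exceeds the estimated time until a cryptographically relevant machine."""
    return secrecy_lifetime_years + migration_years > years_until_quantum_attack


if __name__ == "__main__":
    # Placeholder estimates for illustration only.
    print(exposed_to_harvest_now_decrypt_later(10, 5, 12))  # long-lived records
    print(exposed_to_harvest_now_decrypt_later(2, 3, 10))   # short-lived data
```

The point of the comparison is that the deadline for migration is set by how long the data must remain confidential, so long-lived records can be at risk even if the hardware is many years away.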

The National Institute of Standards and Technology (NIST) finalized its first three PQC standards in August 2024 (FIPS 203, 204, and 205), and the process of implementing these new algorithms into existing software stacks is a monumental task that involves updating millions of systems worldwide. This transition highlights a unique paradox: the most concrete economic activity generated by quantum computing to date is the cost of defending against it. For financial institutions and national security agencies, the threat of a future quantum computer is a more immediate reality than the machine itself. This shift requires a high level of cryptographic agility, as organizations must be able to swap out encryption methods as new vulnerabilities are discovered or as quantum hardware matures. By focusing on these defensive measures now, the global community is essentially devaluing the future impact of Shor’s algorithm, ensuring that by the time a machine capable of breaking current encryption exists, the most critical data will already be protected by quantum-resistant walls.
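Cryptographic agility is largely a design pattern: every protected record carries the identifier of the algorithm that produced it, and all operations dispatch through a registry so that an algorithm can be replaced without rewriting callers. The sketch below illustrates the pattern only. It uses two hash functions from Python’s standard library as stand-ins, because the standard library ships no post-quantum schemes, and every name in it is hypothetical.

```python
import hashlib
from typing import Callable, Dict, Tuple

# Registry of interchangeable algorithms, keyed by a stable identifier.
# SHA-256 and SHA3-256 are stand-ins; a real deployment would register
# encryption or signature schemes here, including post-quantum ones.
ALGORITHMS: Dict[str, Callable[[bytes], bytes]] = {
    "sha256": lambda data: hashlib.sha256(data).digest(),
    "sha3_256": lambda data: hashlib.sha3_256(data).digest(),
}

Record = Tuple[str, bytes]  # (algorithm identifier, algorithm output)


def protect(data: bytes, algorithm: str) -> Record:
    """Apply the named algorithm and tag the result with its identifier."""
    return algorithm, ALGORITHMS[algorithm](data)


def verify(data: bytes, record: Record) -> bool:
    """Re-run whichever algorithm the record names and compare outputs."""
    algorithm, expected = record
    return ALGORITHMS[algorithm](data) == expected


def migrate(data: bytes, record: Record, new_algorithm: str) -> Record:
    """Re-protect data under a new algorithm after checking the old record."""
    if not verify(data, record):
        raise ValueError("record does not match data; refusing to migrate")
    return protect(data, new_algorithm)


if __name__ == "__main__":
    old = protect(b"ledger entry", "sha256")
    new = migrate(b"ledger entry", old, "sha3_256")
    print(old[0], "->", new[0])
```

Because callers only ever see the identifier and the registry, retiring a weakened algorithm becomes a configuration change plus a migration pass, not a rewrite of every system that touches the data.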

Strategic Direction: Navigating the Next Five Years

Looking ahead toward 2030, the most realistic expectation for the quantum industry is a period of steady, incremental gains rather than a sudden or disruptive revolution. We should expect to see qubit counts move into the low thousands and gate fidelities improve just enough to allow for more complex scientific simulations that were previously out of reach. These machines will likely find a niche in fundamental physics research, helping scientists understand the behavior of high-temperature superconductors or exotic quantum phases of matter. However, these successes will largely remain within the realm of academic and scientific merit, with very little spillover into the daily operations of most businesses. For the vast majority of enterprise tasks, ranging from logistics optimization to massive database management, classical high-performance computing and AI accelerators will remain the superior, more cost-effective, and more reliable choice for the foreseeable future.

To maintain momentum and avoid the pitfalls of overpromising, the industry must pivot its communication strategy away from broad “quantum age” rhetoric and toward hyper-specific, narrow benchmarks that demonstrate genuine progress. Transparency regarding the massive hurdles of error correction and thermodynamic scaling will be essential for managing the expectations of investors and policymakers who may be looking for quick wins. Moving forward, organizations should prioritize “quantum readiness” by auditing their cryptographic vulnerabilities and identifying specific, high-value problems that might one day benefit from a quantum edge, while remaining grounded in the reality that classical tools are still the primary drivers of innovation. The path to a fault-tolerant quantum computer is a marathon that has only just cleared its first few miles, and success will require a sustained, long-term commitment to solving the foundational engineering challenges that currently separate the mirage from the reality. Future developments will likely be characterized by a “hybrid” approach, where quantum processors are integrated into existing supercomputing clusters to handle specific, highly targeted sub-routines, rather than attempting to replace the classical infrastructure that underpins the modern world.