The transition from hardcoded, monolithic integrations to a unified communication standard marks a turning point in how developers build agentic AI systems. As the industry moves away from a fragmented landscape of proprietary tool-calling methods, the Model Context Protocol (MCP) has emerged as a foundational layer for standardizing the interaction between large language models and the diverse data sources they depend on. This review examines the architectural underpinnings of MCP and evaluates how its design supports a modular ecosystem in which an AI model is no longer an isolated reasoning engine but an integrated participant in complex digital workflows.

Evolution of AI Tool Integration and the Rise of MCP

In the early stages of the generative AI boom, integrating a model with a specific data source required a bespoke, tightly coupled implementation. Developers typically wrote custom wrappers that combined the logic of the large language model with the specific API requirements of the target tool, whether it was a document parser, a database, or a web search engine. This approach created a significant maintenance burden, as every update to the model’s capabilities or the external tool’s interface necessitated a manual overhaul of the integration logic. The resulting systems were often brittle, non-portable, and restricted to a single environment, preventing the reuse of valuable toolsets across different platforms or IDEs.

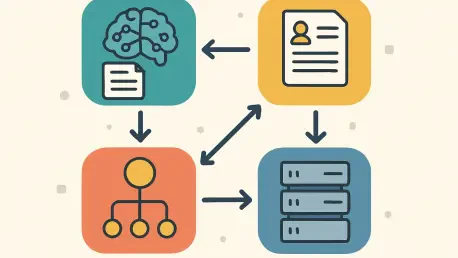

The emergence of the Model Context Protocol represents a shift toward a decoupled, client-server architecture that abstracts the complexities of tool interaction. By separating the reasoning capabilities of the model from the technical execution of tasks, MCP allows developers to build specialized “servers” that can be discovered and utilized by any compliant “client.” This evolution reflects a broader trend in software engineering toward microservices and standardization, where the goal is to create interoperable components that can be mixed and matched. In this context, MCP functions similarly to a universal adapter, enabling an AI model to interact with a local file system, a remote cloud service, or a specialized data repository without needing to understand the underlying implementation details of those systems.

This technological advancement is particularly relevant in the current landscape as the demand for “agentic” behavior grows. Today, users expect AI to do more than just summarize text; they expect it to interact with their local documents, search through complex codebases, and execute actions across multiple software platforms. As these requirements become more sophisticated, the traditional method of hardcoding every possible tool call becomes unsustainable. MCP provides the structural integrity needed to support these complex interactions at scale, offering a blueprint for a future where AI integration is as standardized as connecting a peripheral via USB.

Core Architectural Components and Mechanisms

Standardized JSON-RPC Communication

At the heart of the Model Context Protocol is a communication layer built on JSON-RPC, a lightweight remote procedure call protocol that uses JSON for data encoding. This choice is significant because it provides a simple yet robust framework for exchanging messages between the client and the server. By utilizing a standardized format, MCP ensures that messages regarding tool discovery, execution requests, and result returns are consistent across different implementations. This consistency is what allows a tool built for one AI environment to function seamlessly in another, as long as both sides adhere to the shared protocol.

The communication mechanism typically operates over standard input/output for local processes or over HTTP for remote ones, which gives flexibility in how the systems are deployed. When an AI model identifies the need to use a tool, the client translates this intent into a JSON-RPC request and sends it to the server. The server processes the request, performs the necessary action, and sends a JSON-RPC response back to the client. This structured exchange reduces the risk of interpretation errors and gives the model the data it needs, in a predictable shape, to continue its reasoning process. Furthermore, because every JSON-RPC request carries an identifier that the matching response echoes back, a client can keep several requests in flight and pair each response with its request as it arrives, which is crucial for handling long-running tasks without blocking the main interaction loop.
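The request and response exchange described above can be sketched in a few lines. This is a minimal illustration rather than a real MCP server: the `word_count` tool, the `TOOLS` table, and the `handle` dispatcher are hypothetical names invented here, and the transport is replaced by passing JSON strings directly between functions. The message envelope follows JSON-RPC 2.0, and the `tools/call` shape is assumed from the protocol's published conventions.

```python
import json
from itertools import count

_next_id = count(1)  # each request gets a fresh id so responses can be matched to it


def make_request(method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request as JSON text, as a client would."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": next(_next_id), "method": method, "params": params}
    )


def word_count(text: str) -> str:
    """Hypothetical tool: count whitespace-separated words."""
    return str(len(text.split()))


TOOLS = {"word_count": word_count}  # hypothetical tool table for this sketch


def handle(raw: str) -> str:
    """Toy server-side dispatcher: parse a request, run the tool, build a response."""
    request = json.loads(raw)
    reply = {"jsonrpc": "2.0", "id": request["id"]}  # echo the id back
    if request["method"] != "tools/call":
        reply["error"] = {"code": -32601, "message": "Method not found"}
    elif request["params"]["name"] not in TOOLS:
        reply["error"] = {"code": -32602, "message": "Unknown tool"}
    else:
        tool = TOOLS[request["params"]["name"]]
        text = tool(**request["params"].get("arguments", {}))
        reply["result"] = {"content": [{"type": "text", "text": text}]}
    return json.dumps(reply)


raw_request = make_request(
    "tools/call", {"name": "word_count", "arguments": {"text": "hello MCP world"}}
)
response = json.loads(handle(raw_request))
```

Because the response repeats the request's `id`, a client that has several requests outstanding can route each result to the right caller regardless of arrival order.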

The performance of this communication layer is a critical factor in the overall responsiveness of the AI system. Because JSON-RPC is text-based, it incurs some overhead compared to binary protocols; however, this is generally offset by its ease of debugging and widespread support across programming languages. In practice, the latency introduced by the protocol itself is often negligible compared to the time required for the AI model to generate a response or for the external tool to retrieve data. By prioritizing interoperability and ease of implementation, MCP has created a communication standard that lowers the barrier to entry for developers while maintaining the technical rigor required for production-level AI applications.

Dynamic Tool Discovery and Negotiation

One of the most innovative features of the MCP architecture is its capacity for dynamic discovery. In traditional setups, the list of tools available to an AI model is often hardcoded into the application logic, meaning that adding a new capability requires a code change and a redeployment. MCP disrupts this pattern by allowing a client to query a server at runtime to learn about its available functions. Through a standardized “tools/list” request, the server returns a list of its tools, each with a name, a description, and a schema describing the parameters it accepts.

This dynamic negotiation process transforms the AI model from a static entity into a flexible agent that can adapt to its environment. When a client connects to an MCP server, it essentially asks, “What can you do?” and receives a catalog of services in return. This information is then passed to the AI model, which uses the descriptions to determine which tool is most appropriate for the user’s current request. This layer of abstraction means that the server can be updated independently of the client; a developer can add a new PDF summarization tool or a database search function to the server, and the AI client will automatically recognize and utilize it upon the next connection.
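As a rough sketch of this discovery step, the snippet below shows what a “tools/list” exchange might look like and how a client could turn the result into a catalog for the model. The two tools, their descriptions, and the `build_catalog` helper are hypothetical; the `name`, `description`, and `inputSchema` fields are assumed from the protocol's tool definition format.

```python
# Request a client sends at connection time to ask "What can you do?"
LIST_REQUEST = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}

# Hypothetical reply from a server exposing two tools.
LIST_RESPONSE = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "summarize_pdf",
                "description": "Summarize a PDF document.",
                "inputSchema": {
                    "type": "object",
                    "properties": {"path": {"type": "string"}},
                    "required": ["path"],
                },
            },
            {
                "name": "search_db",
                "description": "Run a keyword search over the database.",
                "inputSchema": {
                    "type": "object",
                    "properties": {
                        "query": {"type": "string"},
                        "limit": {"type": "integer"},
                    },
                    "required": ["query"],
                },
            },
        ]
    },
}


def build_catalog(response: dict) -> str:
    """Render discovered tools as plain text the client can hand to the model."""
    lines = []
    for tool in response["result"]["tools"]:
        required = ", ".join(tool["inputSchema"].get("required", [])) or "none"
        lines.append(f"- {tool['name']}: {tool['description']} (required: {required})")
    return "\n".join(lines)


catalog = build_catalog(LIST_RESPONSE)
```

Nothing in `build_catalog` knows about specific tools, so a server that adds a third entry to its list is picked up on the next connection without a client change.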

The practical significance of this feature is greatest in environments like integrated development platforms or enterprise data hubs. It allows for a “plug-and-play” experience in which users can connect various data silos to their AI assistant with minimal manual configuration. This capability also facilitates a more granular approach to security and permissions. Since the server controls which tools it exposes during the discovery phase, it can tailor its responses based on the user’s authorization level or the specific context of the task. Provided the server applies the same checks when a tool is actually called, the AI model only interacts with the tools it is permitted to use, which maintains a clear boundary between the model’s reasoning and the underlying system’s data.
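One way a server might tailor discovery to the caller is sketched below. Everything here is an assumption made for illustration: the `required_role` field, the role ranking, and the three tools are hypothetical and not part of MCP itself, which leaves authorization policy to the implementer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    required_role: str  # hypothetical policy field, not defined by the protocol


ROLE_RANK = {"viewer": 0, "editor": 1, "admin": 2}  # assumed role hierarchy

REGISTRY = [
    Tool("search_docs", "Search indexed documents.", "viewer"),
    Tool("update_record", "Modify an existing record.", "editor"),
    Tool("delete_record", "Permanently delete a record.", "admin"),
]


def list_tools_for(role: str) -> list[dict]:
    """Return only the tools the given role may see during discovery."""
    rank = ROLE_RANK[role]
    return [
        {"name": t.name, "description": t.description}
        for t in REGISTRY
        if ROLE_RANK[t.required_role] <= rank
    ]


def call_allowed(role: str, tool_name: str) -> bool:
    """Re-check the same policy at call time; hiding a tool is not enforcement."""
    return any(t["name"] == tool_name for t in list_tools_for(role))
```

The second function reflects a design choice worth stating: filtering the discovery response only hides a tool, so the same rule is applied again when a call arrives.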

Current Trends in Modular AI Design

The move toward modularity in AI design reflects a broader industry shift away from monolithic, “all-in-one” applications toward a more distributed and specialized ecosystem. The “brain” of the system, the large language model, is increasingly being separated from the “limbs” and “senses” represented by external tools and data sources. This separation allows for greater innovation at each level of the stack, as model providers can focus on improving reasoning and language understanding while tool developers concentrate on building highly optimized services for specific domains like legal analysis, medical research, or software engineering.

Moreover, there is a growing emphasis on the concept of “headless AI,” where the intelligence resides in a service layer that can be accessed by various front-end interfaces. In this model, MCP acts as the glue that binds these disparate parts together. We are witnessing the rise of decentralized tool repositories where developers share MCP-compliant servers that anyone can use. This democratization of AI capabilities means that even small teams can build highly sophisticated agents by leveraging a library of pre-existing, standardized tools. The industry is moving toward a future where the value lies not just in the model itself, but in the richness of the ecosystem of tools and data that the model can access.

Consumer behavior is also influencing this trajectory, as users increasingly demand that their AI assistants be “context-aware.” This means the AI must understand the user’s local files, their emails, their calendar, and their specific project history. Standardized protocols like MCP make this level of context-awareness possible without forcing users to upload all their private data to a central cloud provider. By keeping the data-handling logic within a local or private MCP server, users can grant the AI model temporary access to their context while maintaining control over their information. This shift toward privacy-preserving, context-rich AI is a major driver behind the adoption of modular architectural standards.

Real-World Applications and Sector Impact

The impact of MCP is already visible across several key sectors, most notably in software development and professional services. In the realm of integrated development environments, MCP allows AI coding assistants to interact directly with a developer’s local file system, terminal, and version control systems. For example, an engineer can use an AI client to ask questions about a complex codebase, and the client can use an MCP server to search through thousands of files, identify relevant functions, and even run tests to verify a proposed fix. This level of deep integration significantly reduces the “context-switching” penalty that developers face when moving between their code and an external AI chat interface.

In the legal and financial sectors, MCP is being deployed to handle massive repositories of sensitive documents. A law firm might host a private MCP server that contains specialized tools for searching case law or analyzing contracts. An AI assistant, acting as the client, can then use these tools to extract specific clauses or summarize legal precedents without the actual documents ever leaving the firm’s secure infrastructure. This use case highlights the protocol’s ability to bridge the gap between powerful cloud-based reasoning and local, sensitive data, providing a path for AI adoption in highly regulated industries that were previously hesitant to embrace the technology.

Furthermore, the protocol is finding unique applications in data science and research. Scientists can create MCP servers that interface with specialized laboratory equipment or high-performance computing clusters. This allows them to use an AI assistant to orchestrate complex experiments, query real-time sensor data, and analyze results using natural language. By standardizing the interface between the AI and the scientific tools, MCP enables a more intuitive and collaborative research process. These implementations demonstrate that the protocol is not just a tool for developers, but a versatile framework that can be adapted to any field requiring the synthesis of expert knowledge and technical execution.

Technical Challenges and Adoption Hurdles

Despite its promising architecture, the Model Context Protocol faces several technical challenges that could slow its widespread adoption. One of the primary hurdles is the latency introduced by the multi-step communication process. In an MCP-based system, a single user query often involves several round trips between the client, the model provider, and the MCP server. If the server is hosted remotely or if the tool execution itself is slow, the overall response time can become frustrating for the user. While optimizations such as connection pooling and more efficient serialization are being developed, maintaining a snappy, real-time feel in a complex, multi-server environment remains a difficult task.
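The cost of sequential round trips can be illustrated with a small simulation. Note that this shows a different mitigation from the connection pooling and serialization work mentioned above: when several tool calls are independent of one another, a client can issue them concurrently so that the total wait approaches the slowest call rather than the sum of all calls. The tool names and delays are hypothetical, and `asyncio.sleep` stands in for network and execution time.

```python
import asyncio


async def call_tool(name: str, delay_s: float) -> str:
    """Stand-in for one tool round trip; the sleep simulates latency."""
    await asyncio.sleep(delay_s)
    return f"{name}: done"


async def run_sequential(calls: list[tuple[str, float]]) -> list[str]:
    """Await each call in turn; total time is roughly the sum of the delays."""
    return [await call_tool(name, delay) for name, delay in calls]


async def run_concurrent(calls: list[tuple[str, float]]) -> list[str]:
    """Start all calls at once; total time is roughly the longest delay."""
    results = await asyncio.gather(*(call_tool(name, delay) for name, delay in calls))
    return list(results)  # gather preserves the order of its arguments


CALLS = [("search_code", 0.03), ("read_file", 0.02), ("run_tests", 0.01)]
sequential = asyncio.run(run_sequential(CALLS))
concurrent = asyncio.run(run_concurrent(CALLS))
```

This only helps when the calls do not depend on each other's results; a chain in which each tool call feeds the next model turn still pays for every round trip.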

Security and trust also represent significant obstacles. Granting an AI model the ability to discover and call tools on a local system opens up potential vectors for “prompt injection” attacks, where a malicious user or a compromised website could trick the AI into executing harmful commands on the user’s machine. While the MCP architecture allows for granular permissions, the burden of ensuring that these permissions are correctly configured falls on the developer and the end-user. As AI agents become more autonomous, the industry must develop more sophisticated methods for verifying the intent of tool calls and ensuring that the interaction remains within safe boundaries.
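A client-side guard is one simple way to keep tool calls within safe boundaries. The sketch below assumes a hypothetical policy with three tiers: tools that run freely, tools that need explicit user confirmation, and everything else, which is denied by default. The tool names and the `confirm` callback are illustrative and not part of the protocol.

```python
from typing import Callable

SAFE_TOOLS = {"search_docs", "read_file"}        # hypothetical: read-only tools
CONFIRM_TOOLS = {"write_file", "run_command"}    # hypothetical: side-effecting tools


def authorize(
    tool_name: str,
    arguments: dict,
    confirm: Callable[[str, dict], bool],
) -> bool:
    """Decide whether a model-requested tool call may proceed.

    Safe tools pass, side-effecting tools require the user's approval via
    `confirm`, and any tool not listed in either set is rejected.
    """
    if tool_name in SAFE_TOOLS:
        return True
    if tool_name in CONFIRM_TOOLS:
        return confirm(tool_name, arguments)
    return False  # deny by default


def always_deny(name: str, args: dict) -> bool:
    """Example callback that models a user refusing every prompt."""
    return False


def always_approve(name: str, args: dict) -> bool:
    """Example callback that models a user approving every prompt."""
    return True
```

Showing the user the exact arguments before approval matters here, since an injected instruction typically reveals itself in what the tool is asked to do rather than in which tool is chosen.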

Moreover, there is the ongoing challenge of market fragmentation. While MCP is gaining traction, it is not the only standard in town. Some major AI providers continue to push their own proprietary tool-calling formats, creating a “walled garden” effect that can stifle interoperability. For MCP to become the industry standard, it must demonstrate clear advantages in terms of developer experience and ecosystem support. The success of the protocol depends on a critical mass of developers choosing to build and share MCP servers, creating a network effect that makes it the default choice for any new AI integration. Ongoing efforts to simplify the creation of MCP servers and to integrate the protocol into popular web frameworks are crucial to overcoming these market-based limitations.

The Future of Composable AI Ecosystems

Looking ahead, the trajectory of the Model Context Protocol suggests a future where AI is built through composition rather than isolated creation. We can anticipate the development of a global marketplace for AI capabilities, where developers publish highly specialized MCP servers that can be “rented” or integrated into any agentic workflow. This would allow for the emergence of “meta-agents”—AI systems that don’t just use a few tools, but intelligently navigate a vast landscape of thousands of available services to solve complex, multi-faceted problems. The protocol will serve as the underlying fabric of this economy, providing the common language that allows these diverse components to cooperate.

Breakthroughs in edge computing are likely to further enhance the utility of MCP. As more processing power moves to local devices, we could see the rise of personal MCP servers that act as a secure, private gateway to an individual’s entire digital life. These servers would handle everything from managing smart home devices to organizing personal finances, all through a standardized interface that any AI assistant can use. This would move us closer to the vision of a truly personal AI—one that is deeply knowledgeable about its user’s specific context while remaining strictly under their control. The role of the protocol here is to ensure that this personal context is accessible to the AI in a safe, structured, and efficient manner.

In the long term, the widespread adoption of standardized protocols like MCP may lead to a fundamental restructuring of how software is built. Instead of designing applications with fixed user interfaces, developers might focus on creating “capability sets” exposed via protocols. This would allow for a more fluid and adaptive digital experience where the interface is generated on the fly by an AI assistant to suit the user’s immediate needs. The “composability” offered by MCP is a precursor to this shift, providing the technical foundation for a world where software is no longer a collection of static tools, but a dynamic and interconnected web of services orchestrated by artificial intelligence.

Assessment of the Model Context Protocol

The Model Context Protocol has fundamentally redefined the parameters of AI tool integration by providing a robust, modular, and standardized alternative to traditional, tightly coupled architectures. Its reliance on JSON-RPC for communication and its innovative dynamic discovery mechanism have created a framework where interoperability is a core feature rather than an afterthought. Throughout this review, it has become clear that the protocol’s strength lies in its ability to decouple the reasoning of the AI from the execution of tasks, fostering an ecosystem where specialized tools can be built, updated, and shared independently of the models that use them.

While technical hurdles such as latency and security remain areas of active development, the strategic advantages of adopting a protocol-based approach are significant. The move toward composable AI ecosystems, supported by standards like MCP, offers a clear path toward more scalable, maintainable, and privacy-preserving intelligence systems. The protocol has successfully transitioned from a niche developer tool to a foundational element of professional AI infrastructure, proving its utility in high-stakes environments like software engineering and document analysis. This modularity not only lowers the barrier to entry for developers but also empowers users to maintain control over their data in an increasingly AI-driven world.

Ultimately, the impact of the Model Context Protocol extends far beyond simple function calling; it provides the necessary structure for the shift from text-generating bots to action-oriented agents. By establishing a universal contract between the brain and the tools, it paves the way for a more integrated and capable digital landscape. The protocol acts as a catalyst for a new era of software development, where the focus shifts from building monolithic silos to creating reusable, standardized capabilities. As the technology matures, the architectural choices made in the design of MCP are likely to prove instrumental in shaping the flexibility and reach of the AI solutions that follow.