Large language models have evolved rapidly from simple conversational interfaces into autonomous agents capable of executing complex workflows across distributed systems. These models reason well, but they hit a hard limit when they must interact with private data or proprietary infrastructure without a standardized integration protocol. This is where the Model Context Protocol (MCP) comes in: it functions as a universal connector that lets AI agents discover and invoke tools in a predictable manner across environments. By adopting the protocol, developers move away from brittle, bespoke API integrations and toward a modular ecosystem in which an agent can query databases or trigger cloud functions through one interface. Amazon Bedrock AgentCore Runtime accelerates this transition by providing a serverless environment designed to host MCP servers, so that scaling and session isolation are managed by the cloud provider. The objective of this guide is to demonstrate how a functional, production-ready AI agent can be built and deployed within a single hour, using modern serverless tools and the standardization provided by MCP. The workflow removes the traditional bottlenecks of container orchestration, allowing developers to focus on the logic of the tools the agent will use to solve real-world problems.

1. Constructing the Model Context Protocol Server

The first step in establishing a robust AI tooling ecosystem is setting up a dedicated development environment that prioritizes speed and dependency management. Python remains the primary language for such implementations because of its extensive library support, and modern tools like “uv” offer a significantly faster alternative to traditional package managers for resolving complex dependency trees. Creating a project directory for the infrastructure health server means initializing a bare environment and adding the two core dependencies: the Model Context Protocol SDK and the Bedrock AgentCore library. These libraries provide the abstractions that turn standard Python functions into discoverable tools an AI model can interpret and use. Once the environment is staged, the developer uses the FastMCP class to instantiate a server, which serves as the backbone for the tool definitions that follow. This approach keeps the underlying transport and protocol handling behind a standardized interface, reducing the boilerplate required to get a basic server running. Starting with a clean, container-ready structure makes the transition from local development to cloud deployment more predictable and less prone to errors caused by environment discrepancies.

With the server infrastructure initialized, the focus shifts to defining the specific tools that give the agent actionable capabilities within a cloud environment. For a DevOps-focused agent, these tools might include functions to check service statuses, list recent deployments, or retrieve active infrastructure alerts from monitoring systems. Each function is decorated with the tool decorator provided by FastMCP, which registers it with the server and exposes its metadata. It is critical to include descriptive docstrings and precise type hints for every argument, because this information is the primary context the large language model uses to decide which tool fits a given user query. For instance, a tool designed to fetch service health should define its input as a string representing the service name and its output as a structured dictionary containing metrics such as uptime and latency. In a production scenario, these functions would interface with real monitoring APIs or databases, though mock data can be used during the initial build phase to validate the logic. The server is typically configured to run over the streamable HTTP transport, which is the transport Amazon Bedrock AgentCore Runtime uses to communicate with hosted MCP servers.
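A minimal sketch of such a tool, assuming the `FastMCP` class and `tool()` decorator from the `mcp` Python SDK. The service names and metric values are mock data, and `get_service_health` and `build_server` are hypothetical names chosen for illustration. The SDK import is deferred so the tool logic can be exercised without the package installed.

```python
from typing import Any

# Mock metrics standing in for a real monitoring API (illustrative values only).
_MOCK_HEALTH: dict[str, dict[str, Any]] = {
    "checkout-api": {"status": "healthy", "uptime_pct": 99.98, "latency_ms": 42},
    "search-api": {"status": "degraded", "uptime_pct": 99.1, "latency_ms": 310},
}


def get_service_health(service_name: str) -> dict[str, Any]:
    """Return current health metrics for one service.

    Args:
        service_name: Exact name of the service to inspect, e.g. "checkout-api".

    Returns:
        A dictionary with the keys "service", "status", "uptime_pct", and "latency_ms".
    """
    metrics = _MOCK_HEALTH.get(service_name)
    if metrics is None:
        # Unknown services get a well-formed answer rather than an exception.
        return {"service": service_name, "status": "unknown",
                "uptime_pct": None, "latency_ms": None}
    return {"service": service_name, **metrics}


def build_server():
    """Register the tool on a FastMCP server (requires the `mcp` package)."""
    from mcp.server.fastmcp import FastMCP

    server = FastMCP("infra-health")
    # tool() reads the function's type hints and docstring to build tool metadata.
    server.tool()(get_service_health)
    return server
```

Calling `build_server().run(transport="streamable-http")` would then serve the tool over streamable HTTP.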

2. Verifying Tool Functionality in Local Environments

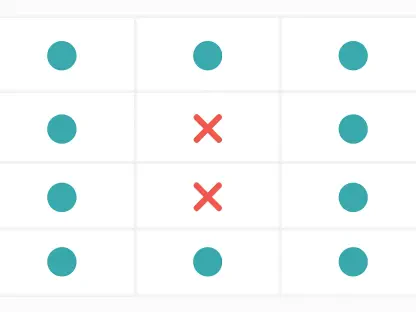

Testing the newly created server in a local environment is a non-negotiable phase of the development lifecycle that ensures the tool logic behaves as expected before any cloud resources are provisioned. Developers can launch the server locally using standard Python execution commands, after which the server begins listening for incoming requests on a designated port. To verify that the tools are correctly registered and accessible, the Model Context Protocol provides an inspector tool that offers a graphical or command-line interface for interacting with the server. This inspector allows the developer to view the list of available tools, examine their expected arguments, and manually trigger calls to see the resulting payloads. Observing how the server responds to various inputs is essential for identifying edge cases, such as how the system handles unrecognized service names or missing parameters. This immediate feedback loop is significantly faster than the turnaround time for a full cloud deployment, allowing for rapid iteration on tool descriptions and logic. Ensuring that the data returned by the server is structured correctly guarantees that the downstream agent will be able to parse the information accurately and integrate it into its reasoning process without encountering unexpected formatting errors.
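To make the link between a function signature and what appears in a tool listing concrete, the sketch below summarizes a function's name, description, and arguments using only the standard library. It approximates what a listing shows and is not the SDK's actual schema generation. `describe_tool` and `list_recent_deployments` are hypothetical names.

```python
import inspect
from typing import Any, Callable


def describe_tool(fn: Callable[..., Any]) -> dict[str, Any]:
    """Summarize a tool roughly as a listing would: name, description, arguments."""
    arguments = {}
    for name, param in inspect.signature(fn).parameters.items():
        if param.annotation is inspect.Parameter.empty:
            type_name = "any"  # no type hint: the model gets no guidance here
        else:
            type_name = getattr(param.annotation, "__name__", str(param.annotation))
        arguments[name] = {
            "type": type_name,
            # A parameter without a default must be supplied by the caller.
            "required": param.default is inspect.Parameter.empty,
        }
    doc = inspect.getdoc(fn) or ""
    return {
        "name": fn.__name__,
        "description": doc.splitlines()[0] if doc else "",
        "arguments": arguments,
    }


def list_recent_deployments(service_name: str, limit: int = 5) -> list:
    """List the most recent deployments for a service."""
    return []  # mock: a real tool would query a deployment log


summary = describe_tool(list_recent_deployments)
```

An empty description or an argument typed as "any" in such a summary is a sign that the docstring or type hints need work before the tool is handed to a model.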

Beyond simple functional verification, the local testing phase provides an opportunity to refine the instructional context that will be passed to the artificial intelligence model. If a tool’s purpose is ambiguous or its parameters are poorly defined, the agent may struggle to invoke it correctly, leading to hallucinated inputs or failed executions. By using tools like curl to send raw HTTP requests to the local MCP endpoint, developers can simulate the exact communication pattern the AWS runtime will eventually use. This level of granular testing confirms that the streamable transport is functioning correctly and that the headers and body of the messages adhere to the protocol specifications. It also allows for the measurement of response times and the assessment of error handling strategies; for example, if an external API call fails, the tool should return a meaningful error message rather than a generic system crash. Addressing these reliability concerns early in the process prevents complex debugging sessions later on when the code is running in a managed cloud environment. High-quality local testing serves as the foundation for a stable production deployment, building confidence that the agent will have a reliable set of capabilities to draw upon when interacting with end-users in a real-world setting.
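The raw requests mentioned above can be sketched in Python as well. The example builds the JSON-RPC 2.0 messages for `tools/list` and `tools/call` without sending them. It assumes the server listens on `http://localhost:8000/mcp`, and the tool name `get_service_health` is a placeholder, so adjust both to match the local setup.

```python
import json
import urllib.request
from typing import Optional

# Assumed local endpoint for the streamable HTTP transport.
LOCAL_MCP_URL = "http://localhost:8000/mcp"


def build_mcp_request(method: str, params: Optional[dict] = None,
                      request_id: int = 1,
                      url: str = LOCAL_MCP_URL) -> urllib.request.Request:
    """Build (but do not send) a JSON-RPC 2.0 POST for an MCP endpoint."""
    body = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        body["params"] = params
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # The server may answer with plain JSON or with an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
        method="POST",
    )


list_tools = build_mcp_request("tools/list")
call_tool = build_mcp_request(
    "tools/call",
    {"name": "get_service_health", "arguments": {"service_name": "checkout-api"}},
    request_id=2,
)
```

Passing either object to `urllib.request.urlopen` sends it. A server running in stateful mode expects an `initialize` exchange before these calls.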

3. Preparing the Code for AgentCore Runtime Integration

Preparing the local Python server for the Bedrock AgentCore Runtime involves wrapping the existing logic in a managed application structure that can handle the nuances of cloud execution. This is achieved by incorporating the BedrockAgentCoreApp class, which provides the necessary hooks for the AWS environment to interact with the code. The developer must define a specific entry point using an app-level decorator, which acts as the gateway for all incoming payloads from the agent runtime. Within this handler, the MCP server’s run method is called, maintaining the streamable HTTP transport that was validated during the local testing phase. This architectural change is what allows the code to transition from a standalone script to a managed microservice capable of running within the AWS ecosystem. It essentially creates a bridge between the standardized Model Context Protocol and the specialized infrastructure of the AgentCore Runtime. This modification ensures that when the runtime receives a request to execute a tool, it knows exactly which function to call and how to route the results back to the agent. This step is pivotal because it shifts the responsibility of execution environment management to AWS, allowing the server to inherit the benefits of managed scaling and high availability.
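Whatever wraps the entry point, the server has to listen where the runtime looks for it. The sketch below assumes the hosting contract that AWS describes for MCP servers: all interfaces, port 8000, the `/mcp` path, and stateless streamable HTTP. These values should be verified against the current AgentCore documentation. `RUNTIME_SETTINGS`, `build_runtime_server`, and `main` are hypothetical names.

```python
# Assumed hosting contract for an MCP server on AgentCore Runtime; confirm
# these values against the current AWS documentation before relying on them.
RUNTIME_SETTINGS = {
    "host": "0.0.0.0",       # listen on all interfaces inside the container
    "port": 8000,
    "stateless_http": True,  # the server keeps no per-connection session state
}
MCP_PATH = "/mcp"  # FastMCP's default path for streamable HTTP


def build_runtime_server(name: str = "infra-health"):
    """Create a FastMCP server configured for a managed runtime (requires `mcp`)."""
    from mcp.server.fastmcp import FastMCP

    return FastMCP(name, **RUNTIME_SETTINGS)


def main() -> None:
    # Tools would be registered on the server before this call.
    build_runtime_server().run(transport="streamable-http")
```

Keeping the settings in one dictionary makes it easy to use a different port for local runs without touching the tool code.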

For developers who prefer a more streamlined approach to cloud readiness, the AgentCore Starter Toolkit offers an automated way to scaffold the entire project structure. By running a single initialization command with the appropriate protocol flag, the toolkit generates all the necessary configuration files, including a Dockerfile, IAM role definitions, and an agentcore.json configuration file. This automation is particularly valuable because it ensures that the container image used for deployment follows best practices for security and performance. The generated Dockerfile is optimized for Python applications, ensuring that dependencies are cached correctly and the final image size is kept to a minimum. Furthermore, the toolkit handles the complex task of defining the identity and access management policies required for the server to operate within the AWS environment. Instead of manually writing JSON policies for IAM, the developer can rely on the toolkit to create roles that follow the principle of least privilege, granting only the specific permissions needed for the runtime to function. This automated scaffolding not only saves significant time but also reduces the likelihood of configuration errors that could lead to security vulnerabilities, making it an essential part of the 60-minute deployment timeline.
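To show what least privilege looks like in practice, here is an illustrative pair of policy documents built as Python dictionaries. This is not the toolkit's actual output. The service principal, the log-group prefix, and the choice of actions are assumptions, and the generated role should be inspected to see what is really granted.

```python
from typing import Any

# Trust policy: who may assume the execution role.
# The service principal below is an assumption; compare with the generated role.
TRUST_POLICY: dict[str, Any] = {
    "Version": "2012-10-17",
    "Statement": [{
        "Effect": "Allow",
        "Principal": {"Service": "bedrock-agentcore.amazonaws.com"},
        "Action": "sts:AssumeRole",
    }],
}


def execution_policy(account_id: str, region: str, repository: str) -> dict[str, Any]:
    """Build a narrowly scoped policy: pull one image, write runtime logs."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {   # Registry login tokens cannot be scoped to a single repository.
                "Effect": "Allow",
                "Action": ["ecr:GetAuthorizationToken"],
                "Resource": "*",
            },
            {   # Image pulls are limited to the server's own repository.
                "Effect": "Allow",
                "Action": ["ecr:BatchGetImage", "ecr:GetDownloadUrlForLayer"],
                "Resource": f"arn:aws:ecr:{region}:{account_id}:repository/{repository}",
            },
            {   # Log writes are limited to an assumed runtime log-group prefix.
                "Effect": "Allow",
                "Action": ["logs:CreateLogStream", "logs:PutLogEvents"],
                "Resource": (f"arn:aws:logs:{region}:{account_id}:"
                             "log-group:/aws/bedrock-agentcore/runtimes/*"),
            },
        ],
    }
```

The point of the sketch is the shape: named actions on named resources, with a wildcard resource only where the action cannot be scoped more tightly.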

4. Executing the Deployment to AWS Infrastructure

The transition of the code from a local workstation to the AWS cloud is handled through a sequence of commands that orchestrate the build and deployment pipeline. The first of these is the configuration step, which prepares the underlying AWS resources needed to host the application. This includes creating an Amazon Elastic Container Registry repository to store the server’s container images and provisioning the IAM roles that were defined during the scaffolding phase. This step is crucial because it sets up the security and storage backbone for the entire deployment, giving all subsequent operations a designated place to reside. The process is designed to be largely invisible to the developer, as the toolkit makes the necessary AWS API calls in the background. With resource provisioning abstracted away, the developer does not need heavier infrastructure-as-code tools for basic deployments. This approach suits rapid prototyping, allowing a functional server to move into a cloud environment with minimal friction and no manual console configuration, which is a major advantage for teams looking to iterate quickly on AI capabilities.

Once the infrastructure is configured, the final deployment command initiates the build and publishing process, which is managed by AWS CodeBuild to ensure a consistent and isolated build environment. This process involves packaging the local code and its dependencies into a container image, pushing that image to the previously created registry, and finally deploying it to the AgentCore Runtime. One of the most significant advantages of this workflow is that it does not require Docker to be installed or running on the developer’s local machine; all containerization tasks are handled in the cloud. This removes a common barrier to entry for developers and ensures that the build environment is identical for every team member. Upon completion of the deployment, the system provides a unique Runtime Amazon Resource Name, which serves as the primary identifier for the deployed server. This ARN is essential for the next steps, as it allows agents and other services to target the specific instance of the MCP server. The entire cycle from local code to a live cloud endpoint is completed in a matter of minutes, demonstrating the power of modern serverless runtimes in accelerating the development of advanced artificial intelligence applications and the tools that support them.
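As a small illustration of how the ARN is used to target the server, the sketch below derives an HTTPS invocation URL from a runtime ARN. The URL pattern is an assumption based on the form AWS documents for remote MCP access, and the ARN in the example is a placeholder. `runtime_invocation_url` is a hypothetical helper.

```python
from urllib.parse import quote


def runtime_invocation_url(runtime_arn: str, qualifier: str = "DEFAULT") -> str:
    """Derive an invocation URL from a runtime ARN (URL pattern is an assumption)."""
    # arn:partition:service:region:account-id:resource
    parts = runtime_arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not an ARN: {runtime_arn!r}")
    region = parts[3]
    # The ARN travels as a single path segment, so ":" and "/" must be escaped.
    encoded_arn = quote(runtime_arn, safe="")
    return (f"https://bedrock-agentcore.{region}.amazonaws.com"
            f"/runtimes/{encoded_arn}/invocations?qualifier={qualifier}")


# Placeholder ARN for illustration only.
example_url = runtime_invocation_url(
    "arn:aws:bedrock-agentcore:us-west-2:123456789012:runtime/demo-abc123"
)
```

Reading the region from the ARN itself avoids a mismatch between the endpoint and the place where the runtime was deployed.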

5. Validating the Deployed Environment and Integration

Validating the cloud-based deployment involves interacting with the newly created runtime through the AWS CLI to confirm that it is correctly processing requests and managing tool execution. The first test typically involves calling the invoke-agent-runtime command with a payload that requests a list of available tools. If the deployment was successful, the server will respond with a JSON-formatted list of the tools defined in the Python code, including their descriptions and argument specifications. This proves that the container is running correctly, the networking is configured properly, and the MCP server is communicating with the runtime as intended. Following this general check, the developer should attempt to call a specific tool using a more complex payload that includes input arguments. For example, triggering the infrastructure health check for a specific service name allows the developer to verify that the internal logic of the tool is executing properly within the microVM environment. Monitoring these initial calls provides insights into the latency and performance characteristics of the cloud-hosted server, ensuring that it meets the requirements for a responsive user experience when integrated into a conversational agent.
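The same check can be scripted. The sketch below shows one way to decode a `tools/list` response, which may arrive as plain JSON or as a server-sent event, and then to send the request through boto3. The boto3 parameter names follow the `InvokeAgentRuntime` API as I understand it and should be treated as assumptions to confirm against the SDK reference. `parse_mcp_body`, `tool_names`, and `invoke_runtime` are hypothetical helpers.

```python
import json
from typing import Any


def parse_mcp_body(raw: bytes) -> dict[str, Any]:
    """Decode a JSON-RPC response sent as plain JSON or as an SSE stream."""
    text = raw.decode("utf-8").strip()
    if text.startswith("{"):
        return json.loads(text)
    # Server-sent events carry the JSON-RPC message on "data:" lines.
    for line in text.splitlines():
        if line.startswith("data:"):
            return json.loads(line[len("data:"):].strip())
    raise ValueError("no JSON-RPC message found in response body")


def tool_names(response: dict[str, Any]) -> list[str]:
    """Extract tool names from a tools/list response, surfacing protocol errors."""
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "unknown MCP error"))
    return [tool["name"] for tool in response["result"]["tools"]]


def invoke_runtime(runtime_arn: str, message: dict[str, Any]) -> dict[str, Any]:
    """Send one JSON-RPC message via boto3 (requires boto3 and AWS credentials)."""
    import boto3

    client = boto3.client("bedrock-agentcore")
    result = client.invoke_agent_runtime(  # parameter names are assumptions
        agentRuntimeArn=runtime_arn,
        qualifier="DEFAULT",
        contentType="application/json",
        accept="application/json, text/event-stream",
        payload=json.dumps(message).encode("utf-8"),
    )
    return parse_mcp_body(result["response"].read())
```

A first smoke test would pass `{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}` to `invoke_runtime` and compare `tool_names` of the result with the functions defined in the server.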

The final phase of the implementation is the integration of the deployed MCP server into a conversational AI agent that can utilize the tools to answer complex queries. This is achieved by creating an agent instance—using a framework like Strands or Amazon Bedrock—and providing it with a client that connects to the deployed server using IAM authentication. The agent is given a system prompt that outlines its role, such as acting as a DevOps assistant, and instructions on how to use the available tools to surface information about system health or deployment history. Because the tools are discovered dynamically through the Model Context Protocol, the agent automatically understands what capabilities it has and how to invoke them without needing any hard-coded orchestration logic. When a user asks a question, the agent analyzes the query, determines which tools are necessary, and executes the calls in the appropriate order. This creates a seamless experience where the artificial intelligence can correlate data from multiple tools—such as identifying that a specific deployment caused a latency spike—and provide a synthesized, actionable answer to the user. This level of automation represents the true potential of AI agents, moving them beyond simple chat interfaces into the realm of proactive digital assistants.
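A hedged sketch of this wiring with Strands Agents follows, assuming the `strands-agents` and `mcp` packages are installed. The system prompt wording is illustrative, `ask_agent` is a hypothetical helper, and `auth` stands in for an httpx-compatible object that signs requests with SigV4, which is not implemented here.

```python
SYSTEM_PROMPT = (
    "You are a DevOps assistant. Use the available tools to report on service "
    "health, recent deployments, and active alerts. When a tool returns no "
    "data, say so instead of guessing."
)


def ask_agent(mcp_url: str, question: str, auth=None) -> str:
    """Answer one question using tools discovered from a remote MCP server.

    `auth` is expected to be an httpx-compatible auth object (for example one
    that applies SigV4 signatures); supplying it is left to the caller.
    """
    from mcp.client.streamable_http import streamablehttp_client
    from strands import Agent
    from strands.tools.mcp import MCPClient

    client = MCPClient(lambda: streamablehttp_client(mcp_url, auth=auth))
    # The discovered tools are only usable while the MCP session is open.
    with client:
        tools = client.list_tools_sync()  # discovered at runtime, not hard-coded
        agent = Agent(system_prompt=SYSTEM_PROMPT, tools=tools)
        return str(agent(question))
```

A call such as `ask_agent(url, "Did yesterday's deployment affect checkout latency?", auth=signer)` would let the model decide which tools to call and in what order.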

6. Architectural Advantages and Operational Considerations

The decision to use Amazon Bedrock AgentCore Runtime for deploying MCP servers brings several architectural advantages that are difficult to replicate in a custom-managed environment. Central to these benefits is the use of a dedicated microVM for every session, which provides a high degree of isolation between different users and workloads. This security model ensures that even if one tool execution encounters a critical error, it cannot affect other sessions or the underlying infrastructure. Furthermore, the runtime handles authentication natively using IAM SigV4, the standard request-signing scheme within the AWS ecosystem. This removes the need for developers to implement custom OAuth flows or token management systems, because the existing AWS credentials provide the necessary security context. The system also scales automatically: as the number of concurrent agent sessions increases, the runtime allocates more resources to handle the load without manual intervention. This managed approach to infrastructure lets development teams focus on building high-value tools rather than spending cycles on the operational overhead of maintaining clusters.
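For readers curious about what SigV4 does under the hood, the signing-key derivation at its core is a short chain of HMAC-SHA256 operations. The sketch below implements only that derivation with the standard library. In practice the AWS SDKs perform it automatically, and the service name used in the example is an assumption.

```python
import hashlib
import hmac


def _hmac_sha256(key: bytes, message: str) -> bytes:
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key: str, date_stamp: str,
                       region: str, service: str) -> bytes:
    """Derive the SigV4 signing key for one day (YYYYMMDD), region, and service."""
    k_date = _hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = _hmac_sha256(k_date, region)
    k_service = _hmac_sha256(k_region, service)
    return _hmac_sha256(k_service, "aws4_request")


def sign(signing_key: bytes, string_to_sign: str) -> str:
    """Return the hex signature for an already-built string to sign."""
    return hmac.new(signing_key, string_to_sign.encode("utf-8"),
                    hashlib.sha256).hexdigest()


# Placeholder secret; the service name "bedrock-agentcore" is an assumption.
example_key = derive_signing_key("EXAMPLE-SECRET", "20250101",
                                 "us-west-2", "bedrock-agentcore")
```

Because the key is scoped to a date, region, and service, a signature captured for one of them cannot be reused for another.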

Reflecting on the deployment process, the combination of the Model Context Protocol and Amazon Bedrock AgentCore Runtime lowered the barrier to creating functional AI tools. The workflow moved from initial project setup to a live, scalable cloud deployment in under an hour, a significant improvement over traditional integration methods. Throughout the implementation, the emphasis remained on writing clear tool definitions and relying on automated scaffolding to handle the complexities of AWS infrastructure. The transition from local testing to a production-ready environment was managed through a few concise commands, with security and scaling handled by the runtime. For future iterations, an AgentCore Gateway could provide a single entry point for multiple MCP servers, giving an agent access to a broader array of capabilities. Additionally, adopting the AG-UI protocol could improve the user experience by providing standardized methods for displaying tool execution progress and interactive interfaces. Once the experimental phase was completed, the teardown command removed all provisioned resources, illustrating a clean and cost-effective lifecycle for modern AI development.