The modern cloud ecosystem has transitioned from a fragmented collection of automated scripts to a unified, intelligent framework where operational excellence is treated as an immutable software contract rather than a set of manual guidelines. In the current landscape of 2026, the Amazon Web Services environment demands a sophisticated integration of reliability, security, and machine-driven decision-making to maintain competitive parity. Engineers have moved past the era where deployment frequency was the sole metric of success, instead prioritizing the stability and self-healing capabilities of global architectures. This shift represents a fundamental maturation of the DevOps philosophy, where the focus is now on creating resilient systems that can anticipate failure and adapt without human intervention. The introduction of regional namespaces and more granular control over global resources has simplified what used to be a complex web of configuration management, allowing teams to scale across international borders with unprecedented precision. Consequently, the role of the DevOps professional has evolved from a traditional system administrator into a specialized architect of autonomous delivery ecosystems that power the most critical sectors of the global economy.

Establishing a Foundation of Total Automation

The contemporary engineering standard dictates that any manual intervention within a production environment is classified as a critical failure of the underlying system architecture. Organizations now utilize Infrastructure as Code as the definitive source of truth for every component of their cloud presence, spanning from complex network topologies to the most granular Identity and Access Management permissions. This transition ensures that the environment is entirely reproducible and verifiable, removing the risks associated with configuration drift and undocumented changes. Every modification to the infrastructure is subjected to a rigorous pull-request workflow, where automated validation engines and policy-as-code checks serve as the primary gatekeepers. These checks verify that proposed changes comply with organizational standards for performance, cost, and security before they are ever allowed to enter the deployment phase. By treating infrastructure with the same rigor as application code, teams have achieved a level of consistency that was previously unattainable, fostering a culture where stability is built into the very foundation of the technological stack.
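
As a rough illustration of the policy-as-code gate described above, the sketch below evaluates a simplified list of planned resources against two rules. The `PlannedResource` shape, the `storage_volume` kind, and the required `owner` tag are all hypothetical; a real pipeline would parse the output of its own IaC tool and would likely use a dedicated policy engine.

```python
from dataclasses import dataclass, field


@dataclass
class PlannedResource:
    """One entry from a hypothetical, simplified infrastructure plan."""
    address: str
    kind: str
    attributes: dict = field(default_factory=dict)


def check_plan(resources):
    """Return a list of violations; an empty list means the change may proceed."""
    violations = []
    for res in resources:
        # Rule 1 (assumed): storage volumes must be encrypted.
        if res.kind == "storage_volume" and not res.attributes.get("encrypted", False):
            violations.append(f"{res.address}: volume must be encrypted")
        # Rule 2 (assumed): every resource carries an 'owner' tag.
        if "owner" not in res.attributes.get("tags", {}):
            violations.append(f"{res.address}: missing required 'owner' tag")
    return violations


plan = [
    PlannedResource("vol.data", "storage_volume",
                    {"encrypted": True, "tags": {"owner": "payments"}}),
    PlannedResource("vol.scratch", "storage_volume",
                    {"encrypted": False, "tags": {}}),
]
violations = check_plan(plan)
for line in violations:
    print(line)
```

In a pull-request workflow, a non-empty result would fail the check and block the merge, so the rule set itself becomes reviewable code.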

Deployment architectures have matured by emphasizing a smaller blast radius for every update pushed to the cloud. Modern pipelines frequently employ canary releases and blue-green deployment strategies so that new versions of software can be validated against live traffic with minimal risk to the broader user base. This approach is supported by immutable artifacts, which are stored in versioned repositories like Amazon ECR and S3 to guarantee that the exact same binary or container image moves from development to production without modification. If the automated monitoring systems detect a performance regression or an unexpected error during a rollout, the pipeline performs an automatic rollback to the last known stable state. This level of control allows organizations to maintain near-zero downtime while continuing to innovate at a rapid pace. The combination of automation tooling and deliberate deployment patterns has transformed the release process from a high-stakes event into a routine, low-risk operational procedure that can happen hundreds of times a day across global regions.
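
The canary-with-rollback behavior can be sketched as a small decision loop. This is a minimal sketch under stated assumptions: the function names, the `max_ratio` and `min_floor` thresholds, and the `observe` callback are hypothetical stand-ins for whatever metrics source and deployment tool a team actually uses.

```python
def canary_decision(baseline_error_rate, canary_error_rate,
                    max_ratio=2.0, min_floor=0.001):
    """Return 'promote' or 'rollback' for one canary observation.

    The canary fails when its error rate exceeds the larger of an absolute
    floor and max_ratio times the baseline (both thresholds are assumed).
    """
    threshold = max(min_floor, baseline_error_rate * max_ratio)
    return "rollback" if canary_error_rate > threshold else "promote"


def run_rollout(steps, baseline_error_rate, observe):
    """Shift traffic through increasing percentages, stopping at the first failure.

    observe(percent) is a caller-supplied function returning the canary's
    error rate at that traffic share.
    """
    for percent in steps:
        if canary_decision(baseline_error_rate, observe(percent)) == "rollback":
            return ("rolled_back", percent)
    return ("promoted", steps[-1])


# Simulated metrics: the new version degrades once it takes half the traffic.
def degrading(percent):
    return 0.002 if percent <= 10 else 0.05


result = run_rollout([1, 10, 50, 100], baseline_error_rate=0.004, observe=degrading)
print(result)
```

Because the rollout stops at the first failing step, only the traffic share assigned to the canary at that point is exposed to the regression.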

Strengthening Security through Shift-Left Practices

Security has moved from a final checklist at the end of a project to a core component woven into every stage of the software development lifecycle. The industry has standardized on a zero-trust architecture, where identity serves as the primary perimeter for every individual workload and microservice interaction. By using IAM Identity Center and implementing strict least-privilege roles, engineers ensure that even if one component is compromised, the potential for lateral movement within the system is sharply limited. Automated detection tools provide continuous visibility into the security posture of the entire environment: Amazon GuardDuty flags suspicious activity as it occurs, and AWS Security Hub aggregates findings across accounts. Combined with security checks in the delivery pipeline, this shift-left approach means that developers receive immediate feedback on the security implications of their code, allowing them to remediate issues before they ever reach a staging or production environment. The result is a more resilient infrastructure that remains secure even as the complexity of the underlying services continues to grow across multiple accounts and regions.

Governance at scale is now handled through centralized management frameworks that allow for the simultaneous oversight of hundreds of AWS accounts. Using Service Control Policies and AWS Config within the broader AWS Organizations structure, platform teams can enforce compliance requirements and security guardrails automatically. This compliance-as-code methodology ensures that every new account or resource provisioned within the organization adheres to the same set of rigorous standards without requiring manual review from a centralized security team. For instance, a policy might prevent the creation of unencrypted storage volumes or restrict resource provisioning to approved regions, neutralizing common human errors before they can manifest as actual risks. This automated governance does not hinder the velocity of individual development teams; rather, it provides them with a safe environment in which they can experiment and deploy with the confidence that they are operating within the established boundaries of the organization’s risk profile. The integration of security into the automated workflow has effectively turned compliance into a silent, continuous background process.
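
The two guardrails mentioned above can be expressed as a Service Control Policy document. The sketch below builds one as a Python dictionary. The function name, statement IDs, and the short list of exempted global services are illustrative assumptions, and the exemption list in particular is not complete. The `ec2:Encrypted` and `aws:RequestedRegion` condition keys are standard AWS condition keys.

```python
import json


def build_guardrail_scp(approved_regions):
    """Build an SCP document with two deny statements (illustrative sketch)."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Deny creating EBS volumes that are not encrypted.
                "Sid": "DenyUnencryptedVolumes",
                "Effect": "Deny",
                "Action": "ec2:CreateVolume",
                "Resource": "*",
                "Condition": {"Bool": {"ec2:Encrypted": "false"}},
            },
            {
                # Deny requests outside approved regions. Global services are
                # exempted via NotAction; this short list is an assumption.
                "Sid": "DenyOutsideApprovedRegions",
                "Effect": "Deny",
                "NotAction": ["iam:*", "organizations:*", "sts:*", "support:*"],
                "Resource": "*",
                "Condition": {
                    "StringNotEquals": {
                        "aws:RequestedRegion": sorted(approved_regions)
                    }
                },
            },
        ],
    }


scp = build_guardrail_scp({"eu-west-1", "us-east-1"})
print(json.dumps(scp, indent=2))
```

An SCP only limits what accounts in the organization may do; it grants no permissions by itself, so a policy like this sits alongside the least-privilege IAM roles rather than replacing them.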

Leveraging AI and Platform Engineering for Scalability

The integration of agentic artificial intelligence into operational workflows has introduced a new era of autonomous management for cloud-native applications. These AI-driven systems can perform self-healing tasks and automated triage that previously required hours of manual investigation by senior engineers. By analyzing patterns across large datasets of performance traces and system logs, an agent can identify the likely root cause of an incident and execute a remediation script via AWS Lambda in a matter of seconds. This capability is particularly valuable in highly regulated sectors such as healthcare and finance, where uptime and accuracy are paramount. For example, if a service begins to experience latency due to a memory leak in a new deployment, the AI agent can automatically trigger a rollback while simultaneously opening a detailed ticket for the development team that points to the change most likely responsible for the degradation. This shift toward autonomous operations allows human engineers to move away from the “firefighting” aspect of their roles and focus on higher-level architectural challenges and strategic initiatives.

Platform engineering has emerged as the standard organizational model for managing the cognitive load on application developers who must navigate increasingly complex cloud environments. By building Internal Developer Platforms, organizations provide standardized “golden paths” that abstract away the intricacies of networking, scaling, and secret management. These platforms offer reusable templates and self-service portals that allow developers to provision their own environments within the pre-defined safety of the company’s infrastructure standards. A significant development in this area is the simplification of global resource management through the use of regional namespaces, which has resolved long-standing naming collision issues in services like S3. This architectural change allows engineers to maintain consistent naming conventions across different geographical locations, making the management of international infrastructure much more intuitive and less prone to error. By reducing the friction involved in deploying and scaling applications, platform engineering ensures that the focus remains on delivering business value rather than wrestling with the underlying mechanics of the cloud provider’s interface.

Optimizing Outcomes with FinOps and Observability

The discipline of FinOps has been fully integrated into the standard DevOps lifecycle, ensuring that cost awareness is a proactive rather than a reactive concern. In the current cloud landscape, the financial implications of every architectural decision are evaluated automatically during the continuous integration and delivery process. Pipelines are now equipped with logic that can flag or even block deployments that exceed a specific cost-to-performance ratio, preventing the common problem of cloud sprawl and runaway expenditures. This integration allows engineering teams to see the direct financial impact of their code changes in real-time, encouraging the development of more efficient and cost-effective software. By using tagging policies and environment time-to-live settings, organizations can ensure that resources are only consumed when they are actually needed, further optimizing the infrastructure spend. The result is a culture where financial accountability is shared across the entire organization, leading to a more sustainable and profitable approach to cloud operations that aligns technological growth with actual business requirements.
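
A pipeline cost gate and a time-to-live check might look like the sketch below. The function names, the cost-per-million-requests metric, and the dollar thresholds are hypothetical, chosen only to show a cost-to-performance ratio being evaluated before deployment. The cost and traffic estimates would come from a team's own tooling.

```python
from datetime import datetime, timedelta, timezone


def cost_gate(est_monthly_cost_usd, est_monthly_requests_millions,
              flag_above=5.0, block_above=10.0):
    """Classify a change by estimated cost per million requests (assumed metric)."""
    if est_monthly_requests_millions <= 0:
        raise ValueError("request estimate must be positive")
    ratio = est_monthly_cost_usd / est_monthly_requests_millions
    if ratio > block_above:
        return "block"
    if ratio > flag_above:
        return "flag"
    return "pass"


def is_expired(created_at, ttl_hours, now):
    """Return True once an environment has outlived its time-to-live."""
    return now >= created_at + timedelta(hours=ttl_hours)


created = datetime(2026, 1, 1, tzinfo=timezone.utc)
print(cost_gate(800.0, 100.0))
print(is_expired(created, 48, datetime(2026, 1, 4, tzinfo=timezone.utc)))
```

A "flag" result could surface as a pull-request comment, while "block" would fail the pipeline stage. Expired environments would be handed to a scheduled cleanup job.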

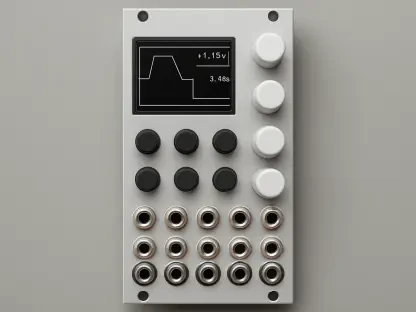

Transitioning from reactive monitoring to proactive observability has fundamentally changed how organizations maintain the health of their complex distributed systems. By using anomaly detection in Amazon CloudWatch and distributed tracing in AWS X-Ray, teams can identify subtle performance regressions that would go unnoticed by traditional threshold-based alerts. This deep visibility allows engineers to understand the internal state of a system based on the data it produces, enabling them to fix potential issues before they impact the end-user experience. An integrated feedback loop ensures that if a service level objective (SLO) is threatened, the system can trigger an automated response to scale resources or reroute traffic. This move toward observability keeps the focus on the user’s perspective of the system’s health, rather than just the status of individual servers or databases. By prioritizing the collection and analysis of high-cardinality data, modern DevOps teams have achieved a level of system transparency that makes operating global, multi-region environments a far more predictable task.
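
The feedback loop described above, where a threatened objective triggers scaling or rerouting, is often framed as an error-budget burn rate: the observed failure rate divided by the failure rate the objective allows. The sketch below is one way to express that. The function names, the two thresholds, and the mapping from burn rate to action are assumptions for illustration.

```python
def burn_rate(failed, total, slo_target):
    """Observed error rate divided by the error budget (1 - slo_target)."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be between 0 and 1")
    if total == 0:
        return 0.0
    return (failed / total) / (1.0 - slo_target)


def automated_response(rate, fast_burn=10.0, slow_burn=2.0):
    """Map a burn rate to an action; thresholds and actions are hypothetical."""
    if rate >= fast_burn:
        return "reroute_traffic"
    if rate >= slow_burn:
        return "scale_out"
    return "none"


# 50 failures in 10,000 requests against a 99.9% objective burns the
# budget at roughly five times the sustainable pace.
rate = burn_rate(failed=50, total=10_000, slo_target=0.999)
print(round(rate, 2), automated_response(rate))
```

A burn rate of 1.0 means the budget would be spent exactly at the end of the objective's window, which is why responses key off multiples of it rather than raw error counts.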

Achieving Resilience through Strategic Implementation

The maturation of DevOps on AWS demonstrates that long-term success is built upon the foundations of automation, intelligence, and a relentless focus on outcomes rather than just technical speed. The organizations that thrive are those that have moved away from bespoke, manual configurations and embraced a unified ecosystem where every action is verifiable and reproducible. By implementing Infrastructure as Code and hardening the software supply chain through artifact signing, teams create a high-trust environment that significantly reduces the risk of both human error and malicious interference. These practices allow for a seamless flow of value from the developer’s workstation to the global production environment, ensuring that the technology remains an enabler of business goals. The shift toward identity-centric security and zero-trust governance has neutralized many of the common vulnerabilities that plagued early cloud implementations, providing a stable platform for the next generation of digital services.

Moving forward, the focus for engineering leadership is shifting toward the operationalization of telemetry and the continued integration of AI-assisted decision-making. Teams that master distributed tracing and proactive observability are able to maintain higher levels of service availability while simultaneously reducing the operational burden on their staff. Building FinOps directly into the delivery cycle has become a mandatory practice for maintaining fiscal health in an era of massive horizontal scaling. By treating the production environment as an enforceable software contract, organizations ensure that their infrastructure remains safe, cost-effective, and easy to scale across any number of global regions. The ultimate lesson from this evolution is that resilience is not a feature to be added later but a fundamental design requirement that has to be addressed at every stage of the development lifecycle. This comprehensive approach to cloud engineering has set a new standard for how modern enterprises build and maintain the digital foundations of their global operations.