The rapid proliferation of containerized workloads has fundamentally altered how modern enterprises manage software delivery, yet it has also introduced a persistent security gap that traditional scanning tools struggle to close. Harbor has long served as a cornerstone of the cloud-native ecosystem, offering a private, trusted registry for storing and signing container images, but its reliance on static, point-in-time scanning is no longer sufficient for the current threat environment. By integrating the Qualys QScanner as a specialized adapter, organizations are now able to transition from reactive, event-based security checks to a continuous assessment model that remains active throughout the entire image lifecycle. This shift ensures that as new vulnerabilities emerge, the security posture of every stored artifact is updated automatically, preventing the “blind spots” that typically occur between scheduled scan cycles.

Addressing the Limitations of Current DevSecOps

Overcoming Operational Silos: The Point-in-Time Fallacy

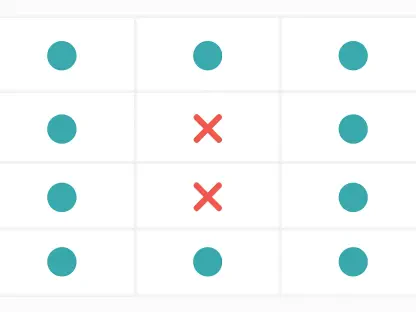

The traditional divide between development and security operations often results in a fragmented workflow where tools like Trivy are used for initial builds while different enterprise platforms handle production monitoring. This lack of synchronization creates a scenario where developers receive one set of results in their CI/CD pipeline, only to have security teams flag different issues once the image resides in the registry. Such fragmentation leads to massive amounts of redundant processing, as thousands of images are pulled, unpacked, and rescanned across various stages of the delivery lifecycle. This cycle not only wastes valuable CPU and storage I/O but also forces teams to spend hours reconciling conflicting data from disparate vulnerability databases, leading to significant operational friction and delayed releases.

Beyond the logistical hurdles of tool fragmentation, the industry is grappling with the inherent failure of the “shift-left” philosophy when it is applied as a single, one-time event. The assumption that a clean scan at the time of an image push guarantees permanent safety is a dangerous misconception in a landscape where hundreds of new Common Vulnerabilities and Exposures (CVEs) are disclosed weekly. An image containing a library that was considered secure on Monday can become a critical risk by Wednesday without a single line of code changing. To combat this, many organizations resort to aggressive, scheduled rescanning policies that pull entire image layers back out of the registry for analysis. This approach is increasingly unsustainable at enterprise scale, as it inflates cloud egress costs and places a heavy burden on network bandwidth and registry performance.

Reducing Alert Fatigue: Moving Toward Actionable Intelligence

When security teams are inundated with thousands of raw CVE reports, the resulting alert fatigue often leads to critical vulnerabilities being overlooked or ignored by developers. Traditional scanning methods typically provide a basic severity score without considering whether a vulnerability is actually exploitable within the context of the specific container environment. This creates a “noise” problem where development teams are tasked with patching low-risk components simply because they appeared on a report. By shifting toward a more integrated approach, organizations can begin to correlate vulnerability data with real-world threat intelligence, ensuring that remediation efforts are focused on the flaws that pose a genuine threat to the production environment rather than chasing every minor library update.

The integration of advanced scanning logic directly into the Harbor registry allows for a more nuanced understanding of risk that spans the gap between a developer’s workstation and the runtime environment. Instead of delivering a static list of bugs, a unified system can provide enriched context, such as whether a specific vulnerability has an available exploit kit or if it is being actively targeted in the wild. This level of detail transforms security from a “gatekeeper” that slows down production into a collaborative partner that provides clear, prioritized instructions. Consequently, the relationship between Dev and SecOps improves as the focus shifts from a quantity-based metric of “vulnerabilities found” to a quality-based metric of “risk reduced,” streamlining the path from code commit to secure deployment.

A Technical Shift in Scanning Architecture

The Role of SBOMs: Foundation for Continuous Evaluation

The technical heart of the Qualys QScanner integration lies in its ability to transform how container images are analyzed through the generation of a Software Bill of Materials (SBOM). When a developer pushes a new image to Harbor, the QScanner performs an exhaustive initial scan to identify every library, binary, and dependency nested within the layers. Rather than just outputting a vulnerability report, it creates a comprehensive digital manifest that acts as a permanent record of the image’s contents. This SBOM is then indexed and stored, effectively decoupling the identification of components from the detection of vulnerabilities. By treating the image as a set of known parts, the system avoids the need to interact with the heavy image layers during every subsequent security update or database refresh.

Once this digital manifest is established, the heavy lifting of security analysis is offloaded to the Qualys backend platform, where it is monitored against a continuously updated threat intelligence database. This architectural shift means that when a new CVE is disclosed, the system simply checks the existing SBOMs for the affected component rather than re-downloading and re-scanning the container image itself. This “scan once, assess continuously” model eliminates the repeated compute cycles and network egress that are among the main cost drivers in large-scale registry management. As a result, the registry remains performant and responsive, while the security posture of the entire repository is updated in near real time as new threat data becomes available.
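The “scan once, assess continuously” idea can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the `Component`, `Advisory`, and `SbomIndex` names, the exact-version matching rule, and the sample digests and CVE identifier are hypothetical and do not reflect the actual data model or API of the QScanner or Harbor. The sketch only shows that, once component inventories are indexed by image digest, a new advisory can be matched against stored metadata without pulling any image layers.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Component:
    """One package recorded in an image's SBOM (hypothetical shape)."""
    name: str
    version: str


@dataclass(frozen=True)
class Advisory:
    """A newly published vulnerability (hypothetical shape)."""
    cve_id: str
    package: str
    affected_versions: frozenset


@dataclass
class SbomIndex:
    """Maps image digest -> set of components, recorded once at push time."""
    by_digest: dict = field(default_factory=dict)

    def record_scan(self, digest, components):
        # The expensive step (unpacking layers) happens once; only its result is kept.
        self.by_digest[digest] = frozenset(components)

    def affected_images(self, advisory):
        # Re-assessment is a metadata lookup: no image pull, no layer unpacking.
        return sorted(
            digest
            for digest, components in self.by_digest.items()
            if any(
                c.name == advisory.package and c.version in advisory.affected_versions
                for c in components
            )
        )


index = SbomIndex()
index.record_scan("sha256:aaa", [Component("openssl", "3.0.1"), Component("zlib", "1.2.13")])
index.record_scan("sha256:bbb", [Component("openssl", "3.0.8")])

# Later, a new advisory arrives; the stored images themselves are never touched.
new_advisory = Advisory("CVE-0000-00001", "openssl", frozenset({"3.0.1", "3.0.2"}))
print(index.affected_images(new_advisory))
```

Here only `sha256:aaa` is reported, because it is the only indexed image whose inventory contains an affected version. A production system would match version ranges and package ecosystems rather than exact strings; the exact-match rule is a simplification to keep the example short.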

Scalable Infrastructure: Optimizing Registry Performance

Modern container environments often scale into the hundreds of thousands of images, making traditional scan-on-push or scheduled-scan models a bottleneck for infrastructure teams. By utilizing metadata-driven analysis, the integration ensures that the Harbor registry does not experience the typical performance degradation associated with large-scale vulnerability assessments. Because the actual analysis happens in the cloud-based backend, the local Harbor instance is spared from the intensive CPU and memory usage required to unpack complex image layers repeatedly. This allows organizations to maintain high availability and low latency for their developers, even when the security platform is processing updates for a massive library of legacy and current container images simultaneously.

Furthermore, this metadata-centric approach provides a consistent security framework across diverse hardware architectures, including both AMD64 and ARM-based deployments. As enterprises increasingly adopt heterogeneous infrastructure to optimize costs and performance, having a security tool that treats an SBOM with the same rigor regardless of the underlying processor architecture is critical. The QScanner ensures that the security manifest is the single source of truth, providing a unified view of risk that follows the application from development to production. This level of architectural consistency simplifies the management of complex, multi-cloud environments and ensures that security policies are applied uniformly, regardless of where the image was built or where it will eventually be deployed.

Economic and Operational Benefits

Maximizing Efficiency: Smart Risk Modeling and Cost Savings

Transitioning to an inventory-centric security model provides immediate financial relief by drastically reducing the hidden costs associated with container registry maintenance. In many cloud environments, the egress fees generated by repeatedly pulling large images for security scanning can represent a significant portion of the monthly infrastructure bill. By eliminating these repetitive data transfers through the use of SBOM-based re-evaluation, enterprises can redirect those funds toward innovation and core development. Additionally, the reduction in storage I/O and compute requirements means that organizations can run their registry infrastructure on smaller, more cost-effective instances without sacrificing the frequency or depth of their security assessments.
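A back-of-the-envelope calculation makes the egress argument concrete. The Python sketch below is illustrative only: the function name `monthly_egress_cost` is hypothetical, and the image counts, average image size, rescan frequency, and per-gigabyte price are assumed values, not figures from Harbor, Qualys, or any cloud provider.

```python
def monthly_egress_cost(images_pulled, avg_image_gb, pulls_per_image, price_per_gb):
    """Data-transfer cost of pulling images out of the registry for scanning."""
    return images_pulled * avg_image_gb * pulls_per_image * price_per_gb


# All inputs below are assumptions chosen for illustration.
STORED_IMAGES = 10_000        # images kept in the registry
NEW_IMAGES_PER_MONTH = 500    # images pushed during the month
AVG_IMAGE_GB = 0.5            # average image size in gigabytes
WEEKLY_RESCANS = 4            # full rescans per month under a scheduled policy
PRICE_PER_GB = 0.09           # assumed egress price in dollars per gigabyte

# Scheduled rescanning: every stored image is pulled on every cycle.
rescan_cost = monthly_egress_cost(STORED_IMAGES, AVG_IMAGE_GB, WEEKLY_RESCANS, PRICE_PER_GB)

# SBOM-based re-evaluation: each new image is pulled once, then only metadata is checked.
scan_once_cost = monthly_egress_cost(NEW_IMAGES_PER_MONTH, AVG_IMAGE_GB, 1, PRICE_PER_GB)

print(f"scheduled rescans: ${rescan_cost:,.2f} per month")
print(f"scan once:         ${scan_once_cost:,.2f} per month")
```

Under these assumed inputs the scheduled policy moves 20,000 GB a month against 250 GB for the scan-once model. The absolute numbers matter less than the shape of the formula: rescanning cost grows with the size of the whole registry times the rescan frequency, while the scan-once cost grows only with the volume of newly pushed images.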

Operational efficiency is further enhanced by moving away from standard CVSS (Common Vulnerability Scoring System) metrics toward more sophisticated risk modeling like the Qualys Detection Score (QDS). While CVSS provides a theoretical severity level, QDS incorporates real-world factors such as exploit maturity, evidence of active attacks, and the reachability of the vulnerable code. This allows security teams to filter out the noise and focus on the high-priority threats that require immediate attention. By providing developers with a curated list of “must-fix” items rather than a mountain of low-impact alerts, organizations can significantly improve their mean time to remediation (MTTR). This focused approach not only secures the environment more effectively but also boosts developer morale by removing unnecessary busywork from their schedules.
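The difference between severity-based and risk-based triage can be shown with a small Python sketch. It does not compute QDS or reproduce its formula: the `risk_score` field is a hypothetical stand-in for any context-aware score, and its 0 to 100 scale, the threshold of 70, the `Finding` and `must_fix` names, and the sample findings are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    cve_id: str
    cvss: float       # theoretical severity on the CVSS 0.0-10.0 scale
    risk_score: int   # stand-in for a context-aware score; 0-100 scale is assumed


def must_fix(findings, min_risk_score=70):
    """Return findings at or above the threshold, highest risk first."""
    selected = [f for f in findings if f.risk_score >= min_risk_score]
    return sorted(selected, key=lambda f: f.risk_score, reverse=True)


# Placeholder findings; identifiers and scores are invented for the example.
findings = [
    Finding("CVE-0000-00001", cvss=9.8, risk_score=35),  # severe on paper, no known exploitation
    Finding("CVE-0000-00002", cvss=7.5, risk_score=95),  # lower CVSS, actively exploited
    Finding("CVE-0000-00003", cvss=5.3, risk_score=72),  # medium CVSS, mature exploit available
    Finding("CVE-0000-00004", cvss=8.1, risk_score=40),
]

by_cvss = [f.cve_id for f in findings if f.cvss >= 7.0]
by_risk = [f.cve_id for f in must_fix(findings)]

print("CVSS >= 7.0:     ", by_cvss)
print("risk score >= 70:", by_risk)
```

With this sample data the CVSS filter returns three findings, two of which have little real-world risk, and misses the medium-severity finding with a mature exploit. The risk-based filter returns two findings, ordered so the actively exploited one comes first.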

Future-Proofing Registry Security: Actionable Next Steps

The shift toward continuous assessment in Harbor represents a fundamental change in how enterprises must approach the security of their software supply chains. To fully realize the benefits of this integration, organizations should make SBOM generation a mandatory, standardized part of their container push process. By ensuring that every image in the registry has a corresponding digital manifest, security leaders can gain complete visibility into their software inventory without the overhead of repeatedly rescanning image layers. Looking forward, the focus will likely shift toward “reachability analysis,” where tools not only identify a vulnerable library but also determine whether the application’s code actually executes the flawed function, further refining the prioritization process.

As a final strategic consideration, teams should begin integrating these continuous security insights directly into their orchestration platforms to enable automated policy enforcement. For instance, an image that was initially cleared for production but later flagged by a continuous SBOM assessment could be automatically quarantined or blocked from scaling within a Kubernetes cluster. This creates a closed-loop system where the registry serves as a dynamic intelligence hub rather than a static storage bin. By adopting these forward-looking practices, enterprises can ensure that their security posture remains resilient in the face of an increasingly volatile threat landscape, turning their container registry into a proactive defense mechanism rather than a point of vulnerability.
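The closed-loop behavior described above reduces to a small decision function, sketched here in Python. This is a hypothetical illustration, not a Harbor, Qualys, or Kubernetes API: the `Action` values, the `decide_action` name, the 0 to 100 score scale, and both thresholds are assumptions.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    BLOCK_SCALING = "block_scaling"
    QUARANTINE = "quarantine"


def decide_action(risk_scores, block_at=70, quarantine_at=90):
    """Map the latest assessment of an image to an enforcement action.

    risk_scores holds one score per open finding (0-100 scale assumed);
    an image with no findings is allowed.
    """
    worst = max(risk_scores, default=0)
    if worst >= quarantine_at:
        return Action.QUARANTINE
    if worst >= block_at:
        return Action.BLOCK_SCALING
    return Action.ALLOW


# At push time the image is cleared for production.
print(decide_action([20, 35]))

# A later SBOM re-assessment adds a high-risk finding; the same image is now quarantined.
print(decide_action([20, 35, 95]))
```

The image bytes are identical in both calls; only the assessment changed. In a real cluster the resulting action would typically be enforced by an admission controller or a similar policy mechanism, and that wiring is outside the scope of this sketch.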