Vijay Raina is a preeminent expert in enterprise SaaS technology and secure software architecture, specializing in the deployment of autonomous systems within highly regulated sectors. With a deep background in designing robust frameworks for the Defense Industrial Base and financial institutions, he focuses on bridging the gap between probabilistic AI behavior and the deterministic rigor required by global compliance standards. His work often centers on creating “trustless” architectures where AI agents operate as untrusted components within strictly governed, traceable environments.

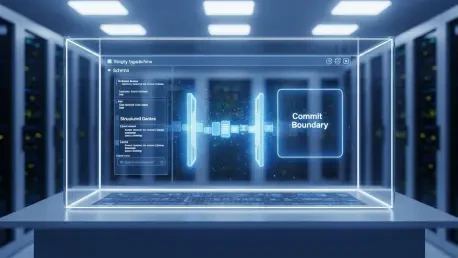

In this discussion, we explore the technical infrastructure necessary to move beyond experimental AI into production-ready agentic workflows. Our conversation covers the implementation of the “Commit Boundary” to neutralize injection attacks, the mathematical scoring of risk to prevent reviewer fatigue, and the mapping of system constraints to frameworks like NIST SP 800-171. Raina also provides a technical deep dive into why strictly typed schemas are the only viable conduit for auditability and how to maintain safety invariants over long-horizon autonomous tasks.

How do you practically implement a “Commit Boundary” between an agent’s probabilistic suggestions and the actual system execution? What specific validation steps ensure that a syntactically valid but malicious request does not trigger an unauthorized state transition?

The “Commit Boundary” is practically implemented by treating the agent’s output not as a command, but as a “TypedActionRequest” that must pass through a series of deterministic gates before reaching an executor. First, we enforce syntactic integrity using Pydantic models to ensure the agent’s intent matches a fixed schema, effectively neutralizing prompt injections that masquerade as valid RPC calls. We then apply semantic validation where policy-as-code checks, such as a minimum 20-character auditable justification, are required for any state-modifying action. Finally, we implement a layered enforcement framework where the executor service is entirely decoupled, operating under least-privilege principles and requiring a 16-character idempotency key to prevent accidental or malicious re-execution. By moving from “tool correctness” to “boundary enforcement,” we ensure that even if an agent is compromised, the blast radius is restricted by hard-coded system constraints.
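A minimal sketch of the two gates described above, assuming a request shape the interview does not spell out. The field names (`action`, `target`, `justification`, `idempotency_key`), the `ActionType` values, and the `BoundaryViolation` exception are hypothetical. To stay dependency-free it uses standard-library dataclasses as a stand-in for the Pydantic models Raina mentions, and it reads "16-character" as a minimum length, which is also an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class ActionType(str, Enum):
    READ = "READ"
    WRITE = "WRITE"
    ACCESS_GRANT = "ACCESS_GRANT"


# Hypothetical set of actions treated as state-modifying.
STATE_MODIFYING = {ActionType.WRITE, ActionType.ACCESS_GRANT}
ALLOWED_FIELDS = {"action", "target", "justification", "idempotency_key"}


class BoundaryViolation(ValueError):
    """Raised when an agent proposal fails a deterministic gate."""


@dataclass(frozen=True)
class TypedActionRequest:
    action: ActionType
    target: str
    justification: str
    idempotency_key: str


def parse_request(raw: dict) -> TypedActionRequest:
    # Gate 1 (syntactic): exactly the expected fields, all strings,
    # and an action drawn from the fixed enum.
    if set(raw) != ALLOWED_FIELDS:
        odd = sorted(map(str, set(raw) ^ ALLOWED_FIELDS))
        raise BoundaryViolation(f"unexpected or missing fields: {odd}")
    if not all(isinstance(value, str) for value in raw.values()):
        raise BoundaryViolation("all fields must be strings")
    try:
        action = ActionType(raw["action"])
    except ValueError:
        raise BoundaryViolation(f"unknown action: {raw['action']!r}") from None

    request = TypedActionRequest(
        action, raw["target"], raw["justification"], raw["idempotency_key"]
    )

    # Gate 2 (semantic): policy-as-code checks taken from the interview.
    if action in STATE_MODIFYING and len(request.justification.strip()) < 20:
        raise BoundaryViolation("state-modifying actions need a 20+ character justification")
    if len(request.idempotency_key) < 16:
        raise BoundaryViolation("idempotency key must be at least 16 characters")
    return request
```

Only a `TypedActionRequest` returned by `parse_request` would be handed to the executor; anything else is rejected before it can cause a state transition.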

Human-in-the-loop oversight often leads to reviewer fatigue and rubber-stamping. What criteria define the scoring logic for routing actions to different tiers like security or legal review, and how do you determine which low-risk tasks should remain fully automated?

To combat fatigue, we use a deterministic risk-scoring algorithm that assigns each request a score from 0 to 100 based on data sensitivity and action impact rather than subjective intuition. For instance, any request involving Controlled Unclassified Information (CUI) or PII starts with a base sensitivity score of 35 to 45 points, while high-impact actions like “access_grant” add another 50 points to the tally. We define the “AUTO” tier for any action scoring below 20, such as simple read requests or non-mutating validations, allowing these to bypass manual queues entirely. Conversely, any state-changing action targeting CUI data triggers an immediate “SECURITY_REVIEW” regardless of the total score, ensuring that human judgment is reserved for high-stakes transitions like $10,000+ financial transfers or admin privilege escalations. This targeted approach ensures that reviewers see only the “medium” and “high” risk tiers, keeping their focus sharp on the actions that truly matter for organizational safety.
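The routing logic can be sketched as a small pure function. The numbers stated in the interview are the 35 to 45 point base for CUI or PII, the 50 points for `access_grant`, the AUTO cutoff of 20, and the CUI override. Everything else here is an assumption made for illustration: the exact split (PII at 35, CUI at 45), the `INTERNAL` and `write` weights, the `HUMAN_REVIEW` tier name, and the threshold of 70 for escalating by score.

```python
# Weights marked "assumed" are illustrative, not from the interview.
SENSITIVITY_POINTS = {
    "PUBLIC": 0,     # assumed
    "INTERNAL": 10,  # assumed
    "PII": 35,       # low end of the stated 35-45 range (assumed split)
    "CUI": 45,       # high end of the stated 35-45 range (assumed split)
}
IMPACT_POINTS = {
    "read": 0,
    "validate": 0,
    "write": 25,         # assumed
    "access_grant": 50,  # stated in the interview
}
STATE_CHANGING = {"write", "access_grant"}

AUTO_BELOW = 20            # stated in the interview
SECURITY_AT_OR_ABOVE = 70  # assumed threshold


def risk_score(sensitivity: str, action: str) -> int:
    # Unknown labels raise KeyError, so unrecognized input fails closed.
    return min(100, SENSITIVITY_POINTS[sensitivity] + IMPACT_POINTS[action])


def route(sensitivity: str, action: str) -> str:
    score = risk_score(sensitivity, action)
    # Hard override: state-changing actions on CUI always go to security.
    if sensitivity == "CUI" and action in STATE_CHANGING:
        return "SECURITY_REVIEW"
    if score < AUTO_BELOW:
        return "AUTO"
    if score >= SECURITY_AT_OR_ABOVE:
        return "SECURITY_REVIEW"
    return "HUMAN_REVIEW"
```

With these weights, a read of public or internal data stays in the AUTO tier, while any request touching PII or CUI scores above 20 and reaches a reviewer.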

Regulated industries require strict handling of Controlled Unclassified Information. How can architectural constraints like least-privilege executors and immutable audit logs be mapped to frameworks like NIST SP 800-171?

Mapping to NIST SP 800-171 Rev. 3 requires transforming regulatory mandates into enforceable system constraints at the Commit Boundary. We fulfill the “Access Control” requirement by ensuring the Executor operates as a distinct service identity with role-specific permissions, meaning the agent never possesses production credentials. For “Audit and Accountability,” every state transition is recorded in an immutable log that captures the TypedActionRequest, the risk score, and the human approval decision, creating a traceable chain from agent intent to system outcome. Data sensitivity labels like “CUI” or “INTERNAL” serve as the primary signals for our routing logic, ensuring that high-sensitivity data is restricted to hardened targets and never leaks into public-facing systems. By treating the audit trail as a first-class deliverable, we provide the verifiable record of causal provenance that federal auditors demand for non-federal information systems.
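The interview does not say how the log is made immutable. One common way to make an append-only log tamper-evident is a hash chain, sketched below as an assumption rather than as Raina's mechanism. The `AuditLog` class and its record layout are hypothetical; the three captured fields (request, risk score, approval decision) follow the description above.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON so the same record always hashes to the same value.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, request: dict, risk_score: int, approval: str) -> str:
        record = {"request": request, "risk_score": risk_score, "approval": approval}
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        entry = {
            "record": record,
            "prev_hash": prev_hash,
            "hash": _digest(prev_hash, record),
        }
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        # Recompute the chain; any edited or reordered entry breaks it.
        prev_hash = GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True
```

A hash chain only detects tampering. Preventing it would still depend on storage controls such as write-once media or a separately administered log service.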

Using unstructured natural language for audit records creates ambiguity in high-compliance environments. What are the advantages of using strictly typed schemas as the sole conduit for agent actions, and how does this approach facilitate reproducible change evaluations during a forensic audit?

Strictly typed schemas eliminate the “black box” nature of AI by forcing every agent intent into a deterministic format that a machine—and an auditor—can interpret without ambiguity. When we use a schema as the sole conduit, we enable reproducible change evaluations because auditors can perform structured diffs on the action requests rather than trying to parse a model’s conversational history. These schemas function as governance gates by embedding policy logic directly into the data model; for example, a “WRITE” action is programmatically rejected if it lacks a corresponding evidence URL or ticket link. This uniformity across logs ensures that regardless of which model generated the request, the fields remain consistent, allowing for automated pattern detection and forensic analysis during an incident. In a high-compliance world, we don’t audit model weights; we audit the structured packets that crossed the boundary into the production environment.
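To illustrate why structured requests are easier to audit than conversation logs, here is a small sketch of a field-level diff plus the evidence rule for `WRITE` actions. The function names, the `evidence_url` field, and the requirement that the link use `https` are assumptions made for the example.

```python
from urllib.parse import urlparse

_MISSING = "<missing>"  # marker for a field absent on one side of the diff


def diff_requests(before: dict, after: dict) -> dict:
    """Return {field: (old, new)} for every field that differs."""
    changes = {}
    for key in sorted(set(before) | set(after)):
        old = before.get(key, _MISSING)
        new = after.get(key, _MISSING)
        if old != new:
            changes[key] = (old, new)
    return changes


def has_evidence(request: dict) -> bool:
    """Governance gate: a WRITE must carry a well-formed evidence link."""
    if request.get("action") != "WRITE":
        return True
    url = urlparse(str(request.get("evidence_url", "")))
    return url.scheme == "https" and bool(url.netloc)
```

Because every request has the same fields regardless of which model produced it, an auditor can compare two requests mechanically and see exactly which field changed.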

Autonomous agents can perform a series of individually valid actions that collectively lead to an unsafe global state. How do you design resource budgets or causal trace propagation to detect these emergent failure modes?

Addressing emergent failure modes requires shifting focus from isolated actions to the entire lifecycle of an agent session. We implement resource and action budgets that impose hard limits on the number of state-modifying operations allowed per hour or per resource, preventing an agent from “drilling” into a system through a thousand tiny, valid steps. Furthermore, we use causal trace propagation by attaching persistent trace identifiers to every action, which allows us to reconstruct the execution lineage even if the model’s internal memory is cleared or reset. By embedding logical invariants into our approval gates—such as a rule that prohibits simultaneous privilege escalation and audit log modification—we catch sequences that would otherwise bypass traditional point-in-time checks. This “distributed system” approach to safety ensures that the cumulative impact of an agent’s work remains within the bounds of the organization’s risk tolerance.
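The budget and invariant ideas can be combined in one session-level guard, sketched below under several assumptions. The `SessionGuard` class, the action names, and the default limit of ten mutations per hour are hypothetical, and "simultaneous" is interpreted here as "within the same trace". The caller is expected to pass only state-modifying actions, and time is passed in explicitly so the behavior is deterministic.

```python
from collections import defaultdict, deque

# Hypothetical invariant: one trace may not both escalate privileges
# and modify the audit log.
FORBIDDEN_PAIR = frozenset({"privilege_escalation", "audit_log_modify"})


class SessionGuard:
    def __init__(self, max_mutations: int = 10, window_seconds: float = 3600.0):
        self.max_mutations = max_mutations
        self.window_seconds = window_seconds
        self._timestamps = defaultdict(deque)  # trace_id -> recent mutation times
        self._kinds = defaultdict(set)         # trace_id -> action kinds allowed so far

    def allow(self, trace_id: str, action: str, now: float) -> bool:
        window = self._timestamps[trace_id]
        # Drop mutations that have aged out of the sliding window.
        while window and now - window[0] >= self.window_seconds:
            window.popleft()
        # Budget check: too many state-modifying steps in the window.
        if len(window) >= self.max_mutations:
            return False
        # Invariant check across the whole trace, not just this action.
        if FORBIDDEN_PAIR <= (self._kinds[trace_id] | {action}):
            return False
        window.append(now)
        self._kinds[trace_id].add(action)
        return True
```

Keying the state on a trace identifier rather than on the model's own memory is what lets the guard keep counting after the agent's context is cleared or reset.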

In high-stakes domains like finance or defense, the identity of the requester is as important as the action itself. How should an execution service be decoupled from the agent to prevent privilege escalation, and what role does idempotency play in maintaining system integrity during infrastructure failures?

Decoupling is achieved by treating the agent as a potential “confused deputy” that is never granted direct access to sensitive APIs or databases; instead, it submits a request to a hardened Executor service that holds the actual credentials. This separation ensures that even if an agent is tricked into requesting a malicious action, the Executor only performs operations permitted by its own narrow, role-based access control (RBAC) profile. Idempotency is our primary defense against “zombie” actions; by requiring a unique 16-character key for every request, we ensure that a transient network failure doesn’t result in a double-charge or a duplicate access grant when the agent retries. This setup maintains system integrity by making every proposed state change a one-time event that is anchored by identity and temporal sequence, protecting the system from both infrastructure hiccups and adversarial manipulation.
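A minimal sketch of an executor that enforces its own allowlist and deduplicates retries by idempotency key. The `Executor` class, its `submit` signature, and the in-memory result store are hypothetical; a real service would persist keys durably and authenticate the caller. Rejecting a reused key with a different payload is an added safeguard, not something stated in the interview.

```python
class Executor:
    """Holds the permissions; the agent only ever submits requests."""

    def __init__(self, allowed_actions):
        self._allowed = frozenset(allowed_actions)
        self._seen = {}          # idempotency_key -> (payload, result)
        self.side_effects = []   # stand-in for real calls to downstream systems

    def submit(self, idempotency_key: str, action: str, target: str) -> str:
        if len(idempotency_key) < 16:
            raise ValueError("idempotency key must be at least 16 characters")
        # RBAC: the executor's own narrow profile decides, not the agent.
        if action not in self._allowed:
            raise PermissionError(f"executor role does not permit {action!r}")

        payload = (action, target)
        if idempotency_key in self._seen:
            stored_payload, stored_result = self._seen[idempotency_key]
            if stored_payload != payload:
                raise ValueError("idempotency key reused with a different payload")
            # Retry of a completed request: return the result, do nothing else.
            return stored_result

        self.side_effects.append(payload)
        result = f"done:{action}:{target}"
        self._seen[idempotency_key] = (payload, result)
        return result
```

If the agent retries after a timeout with the same key, the second call returns the stored result and `side_effects` still contains a single entry, so the charge or access grant happens once.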

What is your forecast for the adoption of agentic workflows in regulated industries?

I forecast that the adoption of agentic workflows will move away from “chat-based” interfaces toward “schema-driven” background processes where the AI is an invisible but governed engine. Over the next 24 to 36 months, I expect frameworks like NIST IR 8596 and laws like Colorado SB24-205 to force a standard where agentic “intent” is separated from “execution” by default across all high-risk domains. Organizations that attempt to deploy agents without a deterministic Commit Boundary will likely face severe “reviewer fatigue” and regulatory pushback, leading to a retrenchment toward simpler automation. However, for those who adopt structured audit trails and tiered risk routing, we will see a massive acceleration in operational speed, as 80% of low-risk tasks will be safely automated, leaving human experts to focus solely on the 20% of actions that require true ethical and legal scrutiny. Ultimately, the winners in this space will be defined not by the sophistication of their LLMs, but by the rigor of their architectural constraints.