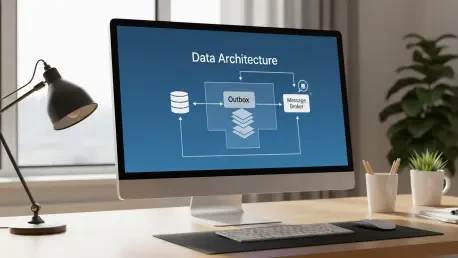

Modern software engineering frequently encounters the “dual-write” problem, a situation where a single user action necessitates updating a primary database while simultaneously notifying downstream services through a message broker. In an ideal environment, these two operations would occur in perfect synchronization, but the reality of distributed systems involves network latencies, partial failures, and unexpected service restarts that can leave data in a state of terminal inconsistency. When a developer attempts to save a new record to a database and then immediately publish an event to a system like Apache Kafka or RabbitMQ, there is a distinct window of vulnerability between these two network calls. If the application crashes after the database commit but before the message transmission, the rest of the ecosystem remains unaware of the change, creating a “silent failure” that is notoriously difficult to debug and even harder to reconcile manually.

To mitigate these risks, architects turn to the Transactional Outbox pattern, a reliability strategy that leverages the inherent atomic properties of modern database engines to bridge the gap between state changes and event propagation. Instead of treating the database update and the event publication as two separate, independent tasks, this pattern treats them as a single unit of work that must either succeed entirely or fail entirely. This transition from synchronous messaging to an asynchronous, database-backed approach provides a foundation for eventual consistency, ensuring that every significant state change is eventually broadcast to the wider system without the fragility of traditional two-phase commits. By adopting this methodology, organizations can build resilient microservices that maintain high availability while strictly adhering to data integrity requirements across a sprawling infrastructure.

1. Modify the Data Model: Integrating Communication Into Persistence

The first critical step in implementing this pattern involves a fundamental shift in how entities are structured within the persistence layer, moving away from simple records to objects that carry their own intent. In a typical Python environment using the Beanie Object Document Mapper (ODM) with MongoDB, this means expanding the document schema to include a dedicated field or embedded object representing the pending message. This OutboxMessage structure usually contains the target topic, a serialized version of the event payload, and a timestamp indicating when the intent was recorded. By embedding this information directly into the primary document, the application ensures that the metadata necessary for external notification is physically co-located with the business data it describes, setting the stage for a truly atomic operation.

Beyond simple storage, this modification requires careful consideration of the data types and serialization formats to ensure compatibility with downstream consumers. Using Pydantic models to define the outbox structure allows for strict validation of the payload before it even reaches the database, preventing malformed events from cluttering the queue. Furthermore, including metadata such as a unique message identifier or a schema version within the embedded outbox object helps maintain long-term stability as the system evolves. This structural change effectively transforms the database into a temporary buffer for outbound communication, ensuring that no message is ever “forgotten” because it was never decoupled from the source of truth that generated it in the first place.
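The shape of such a document can be sketched as follows. This is a minimal illustration that uses standard-library dataclasses as a stand-in so it runs without a database; in a Beanie project the same fields would typically live on a Pydantic model embedded in a Beanie document. The names `OutboxMessage` fields, `UserDocument`, and `to_mongo` are assumptions made for the example, not taken from a specific codebase.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Optional


@dataclass
class OutboxMessage:
    """Pending notification stored inside the business document."""

    topic: str
    payload: dict[str, Any]
    message_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    schema_version: int = 1
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        if not self.topic:
            raise ValueError("topic must be a non-empty string")
        # Fail early if the payload cannot be serialized for the broker.
        json.dumps(self.payload)


@dataclass
class UserDocument:
    """Hypothetical business entity that carries its own pending event."""

    name: str
    email: str
    outbox: Optional[OutboxMessage] = None  # None once the event is delivered

    def to_mongo(self) -> dict[str, Any]:
        # Nested dataclasses become nested dicts, i.e. an embedded document.
        return asdict(self)
```

Validating in the constructor means a malformed event is rejected before any write is attempted, which is the same effect the Pydantic-based approach aims for.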

2. Establish a Partial Index: Optimizing the Discovery of Pending Tasks

Once the data model has been updated to accommodate outbox messages, the next technical challenge is ensuring that the system can efficiently identify which records need to be processed without incurring significant performance overhead. In large-scale databases containing millions of documents, performing a full collection scan to find a handful of pending messages would be disastrous for latency and resource consumption. This is where the concept of a partial index becomes indispensable; by configuring the database to index only documents that still carry an outbox message, developers can create a highly focused lookup structure. Because its size tracks the number of pending messages rather than the size of the collection, the index usually stays small enough to remain in memory, allowing the background worker to locate the next task quickly even as the overall dataset grows.

In MongoDB, this is achieved through a partialFilterExpression, which instructs the engine to leave out of the index any document that does not have an active outbox message. The filter accepts only a limited set of operators (such as $exists: true, $type, and equality or range comparisons), so the “has a message” condition is normally written as an existence or type check rather than a “not null” test. This approach is more efficient than a standard index, as documents whose messages have already been processed and removed carry no index entry to store or maintain. By indexing the created_at timestamp within the outbox object, the system can also process events in approximately chronological order, oldest first, which many distributed workflows rely on; strict ordering additionally requires a single relay worker and a tie-breaker for equal timestamps. This optimization keeps the consistency layer from becoming a bottleneck, allowing the application to scale horizontally while maintaining a lean and responsive database infrastructure.
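One way to express such an index is sketched below. It assumes a PyMongo-style collection object whose `create_index` accepts `name` and `partialFilterExpression` keyword options; the index name and the `outbox.created_at` field path are assumptions for this example. With an async driver the call would need to be awaited.

```python
from typing import Any

# Hypothetical names chosen for this sketch.
OUTBOX_INDEX_NAME = "outbox_pending_by_created_at"
OUTBOX_INDEX_KEYS = [("outbox.created_at", 1)]  # 1 = ascending, oldest first

# Only documents that still carry an embedded outbox message are indexed.
# A missing outbox field and `outbox: null` both fail this $exists check.
OUTBOX_PARTIAL_FILTER = {"outbox.created_at": {"$exists": True}}


def ensure_outbox_index(collection: Any) -> str:
    """Create the partial index on a PyMongo-style collection."""
    return collection.create_index(
        OUTBOX_INDEX_KEYS,
        name=OUTBOX_INDEX_NAME,
        partialFilterExpression=OUTBOX_PARTIAL_FILTER,
    )


# The worker's query repeats the filter condition so that the query planner
# can prove the partial index covers every matching document.
PENDING_QUERY = dict(OUTBOX_PARTIAL_FILTER)
PENDING_SORT = list(OUTBOX_INDEX_KEYS)
```

A worker would then look up the next task with something like `collection.find_one(PENDING_QUERY, sort=PENDING_SORT)`.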

3. Execute an Atomic Write: Guaranteeing Integrity at the Source

The core of the Transactional Outbox pattern lies in the execution of the atomic write, where the primary business data and the outbox message are persisted in a single atomic operation. In a document database like MongoDB, atomicity is guaranteed at the single-document level, so no multi-document transaction is needed: when an application inserts or updates a user profile that includes an embedded outbox message, the operation is all-or-nothing. It either succeeds in its entirety, or the entire request is rejected, leaving the database in its original state. This eliminates the possibility of a “partial success” scenario where a user is created but the notification is lost, or vice versa, providing a solid guarantee that the system’s internal state and its external communication intent are recorded together.

Implementing this logic within a web framework like FastAPI involves a straightforward transition from calling a message broker client to simply saving a document. By removing the external network call to a broker such as Kafka (or to a Dapr sidecar in front of it) from the request-response cycle, the endpoint becomes much more resilient to third-party outages and typically experiences lower latency. The application logic focuses solely on the database, which is typically the most stable component of the stack, and leaves the complexities of network retries and broker protocols to the background layer. This separation of concerns not only simplifies the codebase but also provides a “safe harbor” for data, ensuring that once the write is committed, the intent to notify the rest of the world is durably recorded and shielded from transient application crashes.
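The write path can be sketched as a handler body that performs exactly one database call and no broker call. The example assumes a PyMongo-style collection with `insert_one`; the function names and the `user.created` topic are hypothetical, and in a FastAPI application `create_user` would be called from the route function.

```python
import uuid
from datetime import datetime, timezone
from typing import Any


def build_user_with_outbox(name: str, email: str) -> dict[str, Any]:
    """Return ONE document holding both the business data and the pending event."""
    return {
        "name": name,
        "email": email,
        "outbox": {
            "message_id": str(uuid.uuid4()),
            "topic": "user.created",  # hypothetical topic name
            "payload": {"name": name, "email": email},
            "created_at": datetime.now(timezone.utc),
        },
    }


def create_user(collection: Any, name: str, email: str) -> Any:
    """Request handler body: a single insert, no broker call."""
    document = build_user_with_outbox(name, email)
    # One insert_one call is one single-document write, applied atomically:
    # either the user AND the outbox message are stored, or neither is.
    result = collection.insert_one(document)
    return result.inserted_id
```

If the insert raises, the handler can return an error to the caller knowing that no half-written state exists and no event will ever be published for a user that was not stored.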

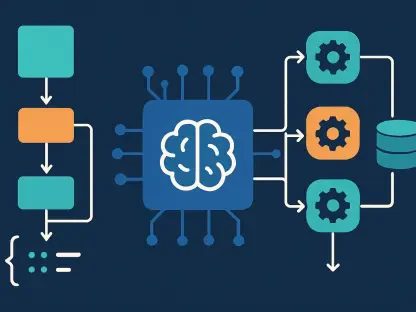

4. Deploy a Message Relay Worker: Separating Persistence From Communication

With the data safely persisted, the responsibility for actual delivery shifts to a dedicated message relay worker, which functions as a bridge between the database and the message broker. This worker is an independent background process or an asynchronous task that runs continuously, polling the database for any documents identified by the partial index as having unsent messages. Because this process operates outside the critical path of the user request, it can be tuned for maximum reliability, employing aggressive retry strategies and sophisticated error handling that would be inappropriate for a standard API endpoint. The worker ensures that even if the message broker is temporarily offline for several minutes, no data is lost; the messages simply wait in the database until connectivity is restored.

The design of this relay worker must be robust enough to handle high volumes of data while remaining light on resource consumption. By using the previously established partial index, the worker can use a “find and process” loop that is both fast and predictable. In a typical implementation, the worker retrieves the oldest pending message, attempts to publish it, and then proceeds to the next record. This decoupling allows the application to handle bursts of traffic without overwhelming the message broker, as the outbox acts as a natural buffer. Furthermore, running the relay as a separate service allows for independent scaling; if the volume of events grows, additional worker instances can be deployed to increase throughput without requiring changes to the primary API services, provided the instances either claim a message before publishing it or accept that several of them may publish the same message, at the cost of more duplicates and weaker ordering.
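The “find and process” loop can be sketched against an in-memory list of documents, which stands in for the MongoDB collection so the example runs on its own. The function names are hypothetical; in a real worker `oldest_pending` would be an indexed `find_one` with a sort on `outbox.created_at`, and the loop would sleep briefly whenever nothing is pending.

```python
from typing import Any, Callable, Optional

Document = dict[str, Any]
Publisher = Callable[[str, dict[str, Any]], None]


def oldest_pending(documents: list[Document]) -> Optional[Document]:
    """In-memory stand-in for: find one pending document, oldest first."""
    pending = [d for d in documents if d.get("outbox") is not None]
    return min(pending, key=lambda d: d["outbox"]["created_at"], default=None)


def relay_once(documents: list[Document], publish: Publisher) -> bool:
    """Process at most one pending message; return False when nothing is pending."""
    doc = oldest_pending(documents)
    if doc is None:
        return False
    message = doc["outbox"]
    publish(message["topic"], message["payload"])  # expected to raise on failure
    doc["outbox"] = None  # reached only after publish() returned successfully
    return True


def drain(documents: list[Document], publish: Publisher) -> int:
    """Relay until no pending messages remain; return how many were sent."""
    sent = 0
    while relay_once(documents, publish):
        sent += 1
    return sent
```

Because the message is cleared only after `publish` returns, an exception leaves the document pending and the next pass picks it up again.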

5. Broadcast the Event: Interfacing With the Distributed Ecosystem

The broadcasting phase is where the relay worker finally interacts with the external world, typically through a sidecar runtime such as Dapr, reached via its HTTP API or a client SDK, which abstracts the complexities of the underlying pub-sub infrastructure. When the worker identifies a pending outbox message, it extracts the payload and the target topic, then passes this information to the message broker for distribution to interested subscribers. Utilizing an abstraction layer like Dapr is particularly advantageous because it allows the system to remain agnostic of the specific broker being used, whether it be Redis, NATS, or a cloud-native solution like Amazon SNS. The worker’s primary focus at this stage is to ensure that the broker acknowledges receipt of the event before moving to the next step of the cycle.
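As a rough illustration, a publish through the Dapr sidecar's HTTP API is a POST to `/v1.0/publish/<pubsub-name>/<topic>`. The sketch below uses only the standard library; the component name `pubsub` and the port are assumptions (3500 is Dapr's default HTTP port), and a project using the Dapr Python SDK would call its client instead.

```python
import json
import urllib.request
from typing import Any

DAPR_HTTP_PORT = 3500   # Dapr's default HTTP port; adjust to the sidecar's settings
PUBSUB_NAME = "pubsub"  # hypothetical Dapr pub/sub component name


def build_publish_request(topic: str, payload: dict[str, Any]) -> urllib.request.Request:
    """Build the HTTP request that asks the sidecar to publish one event."""
    url = f"http://localhost:{DAPR_HTTP_PORT}/v1.0/publish/{PUBSUB_NAME}/{topic}"
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def publish(topic: str, payload: dict[str, Any], timeout: float = 5.0) -> None:
    """Return normally only if the sidecar accepted the event; raise otherwise."""
    request = build_publish_request(topic, payload)
    # urlopen raises HTTPError for 4xx/5xx responses and URLError for
    # connection problems, so reaching the end of this block means success.
    with urllib.request.urlopen(request, timeout=timeout) as response:
        if response.status >= 300:
            raise RuntimeError(f"unexpected status {response.status}")
```

Treating any exception as “not acknowledged” keeps the rule simple for the worker: the outbox entry is retired only when `publish` returns without raising.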

If the broadcast attempt fails due to a network timeout or a broker-side error, the relay worker must be designed to fail gracefully. Because the message has not yet been removed from the database, the next iteration of the worker’s loop will find the same record and attempt the publication again. This “at-least-once” delivery guarantee is a hallmark of the outbox pattern, providing a fail-safe mechanism that guards against the inherent unreliability of network communication. The worker can be configured with exponential backoff strategies to avoid hammering a failing broker, so that the system remains stable even during prolonged infrastructure outages. As long as the broker eventually recovers, the message is eventually delivered, fulfilling the promise of eventual consistency across the entire microservice landscape.
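A retry wrapper with capped exponential backoff might look like the sketch below. The parameter defaults, the use of random jitter, and the function names are choices made for this example rather than anything prescribed by the pattern; the `sleep` argument is injectable so the behavior can be exercised without real waiting.

```python
import random
import time
from typing import Callable, Iterator


def backoff_delays(base: float = 0.5, factor: float = 2.0, cap: float = 30.0) -> Iterator[float]:
    """Yield base, base*factor, base*factor**2, ... never exceeding `cap` seconds."""
    delay = base
    while True:
        yield min(delay, cap)
        delay = min(delay * factor, cap)


def publish_with_retry(
    publish: Callable[[], None],
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Return True once publish() succeeds, False after max_attempts failures."""
    delays = backoff_delays()
    for attempt in range(1, max_attempts + 1):
        try:
            publish()
            return True
        except Exception:
            if attempt == max_attempts:
                return False  # leave the outbox entry in place for a later pass
            # Jitter: sleep a random amount up to the current backoff delay.
            sleep(random.uniform(0, next(delays)))
    return False
```

Returning `False` rather than raising lets the worker move on to its next polling cycle; the message stays in the database and is retried then.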

6. Remove the Sent Message: Finalizing the Lifecycle of an Event

Once the message broker has successfully acknowledged the receipt of an event, the relay worker must perform a final cleanup operation to prevent the same message from being sent repeatedly. This is accomplished by updating the source document in the database to remove the outbox field, effectively “retiring” the notification intent. In MongoDB, the $unset operator is the ideal tool for this task, as it removes the specific field from the document in an atomic fashion. This final database update is the signal that the outbox lifecycle is complete, and because of the partial index configuration, the document will immediately disappear from the worker’s view, allowing it to focus on the next pending task in the queue.

The timing of this removal is critical to the integrity of the pattern, as it must only occur after a successful confirmation from the message broker. If the worker were to remove the message before receiving an acknowledgement, a crash at that precise moment would result in a lost event, defeating the entire purpose of the outbox pattern. Conversely, by waiting for the broker’s “OK,” the system accepts the possibility that a message might be sent twice if the worker crashes between the broadcast and the removal. This trade-off, guaranteed delivery at the cost of potential duplicates, is a fundamental reality of distributed systems. The removal step ensures that under normal operating conditions, the database remains clean and the background worker operates with maximum efficiency, only touching records that truly require attention.
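The publish-then-remove ordering can be sketched as follows, assuming a PyMongo-style collection with `update_one` and a `message_id` stored in the embedded outbox object. Matching on the message ID in the update filter is an extra safeguard added for this example: it avoids erasing a newer outbox message if the document was modified in the meantime. The function names are hypothetical.

```python
from typing import Any, Callable


def retire_outbox_message(collection: Any, document_id: Any, message_id: str) -> bool:
    """Remove the outbox field, but only if it still holds the message just sent."""
    result = collection.update_one(
        {"_id": document_id, "outbox.message_id": message_id},
        {"$unset": {"outbox": ""}},
    )
    return result.modified_count == 1


def relay_one(
    collection: Any,
    document: dict[str, Any],
    publish: Callable[[str, dict[str, Any]], None],
) -> bool:
    """Publish first, then retire; a failed publish leaves the document untouched."""
    message = document["outbox"]
    publish(message["topic"], message["payload"])  # must raise if not acknowledged
    # Reached only after the broker acknowledged the publish.
    return retire_outbox_message(collection, document["_id"], message["message_id"])
```

A crash between the two calls leaves the message in place, so it is sent again on restart, which is exactly the duplicate case the pattern accepts.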

7. Incorporate Idempotency Measures: Handling the Reality of Duplicates

The final and perhaps most important consideration in the Transactional Outbox pattern is the implementation of idempotency within the downstream services that consume these events. Because the relay worker is designed for “at-least-once” delivery, there are edge cases where a message is successfully published to the broker, but the worker crashes before it can remove the message from the database. When the worker restarts, it will inevitably pick up the same message and publish it again, leading to a duplicate event. If the consuming service is not designed to handle this, it might perform a sensitive action twice (such as charging a credit card or sending a duplicate welcome email), which can have significant negative consequences for the end user and the business.

To solve this, downstream consumers should maintain a record of processed message IDs or use unique business keys to verify if an event has already been handled. When a new message arrives, the consumer first checks its own “processed events” table; if the message ID is found, the consumer simply acknowledges the message without executing the logic again. This defensive programming keeps the distributed system consistent even when the underlying transport mechanism produces duplicates. By combining the atomic guarantees of the Transactional Outbox on the producer side with strict idempotency on the consumer side, developers can create a robust, self-healing architecture that preserves data integrity across multiple services and databases despite the failures that occur in between.
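The consumer-side check can be sketched as below. The in-memory set is a stand-in for a durable “processed events” table, and the class name is hypothetical; a production consumer would persist the ID, ideally in the same transaction as the side effect or behind a unique index, so that the check survives restarts and concurrent deliveries.

```python
from typing import Any, Callable


class IdempotentConsumer:
    """Skips events whose message_id has already been handled."""

    def __init__(self, handler: Callable[[dict[str, Any]], None]) -> None:
        self._handler = handler
        # In-memory stand-in for a durable "processed events" table.
        self._processed: set[str] = set()

    def handle(self, message_id: str, payload: dict[str, Any]) -> bool:
        """Return True if the handler ran, False if the event was a duplicate."""
        if message_id in self._processed:
            return False  # acknowledge without re-running the side effect
        self._handler(payload)
        # Recorded only after the handler succeeded, so a failed attempt
        # is retried on redelivery instead of being silently skipped.
        self._processed.add(message_id)
        return True
```

For instance, delivering the same charge event twice with the same `message_id` runs the handler once; the second call returns `False` and the consumer just acknowledges the message.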

The Transactional Outbox pattern represents a significant step forward in how distributed systems manage the inherent risks of dual-writes. By moving the point of failure from a fragile network call to a reliable database write, architects can provide a level of consistency that was previously reserved for monolithic applications. As infrastructure continues to evolve, the principles of atomicity and eventual consistency will remain the bedrock of reliable software design. Future developments will likely focus on deeper integration between database engines and message brokers to further automate these patterns, but the fundamental requirement for idempotent consumers and atomic state changes will persist as a core tenet of resilient engineering. Moving forward, teams should prioritize the standardization of outbox schemas and the adoption of robust background processing frameworks to ensure that their systems can withstand the unpredictable nature of modern cloud environments.