Vijay Raina is a preeminent authority in enterprise SaaS technology and software architecture, specializing in bridging the gap between deep technical execution and executive-level strategy. With extensive experience in navigating the complexities of software design and organizational leadership, he has become a leading voice on how engineering teams can communicate value to non-technical stakeholders. His insights focus on transforming the traditional, often cumbersome reporting processes into streamlined, data-driven narratives that align with modern agile methodologies.

The following discussion explores the critical friction between high-velocity engineering cycles and the static nature of executive boardrooms. We examine the phenomenon of reporting latency, the “snapshot trap” that leads to data rot, and the strategic refactoring of technical debt into financial terms. By applying principles like observability and the “Don’t Repeat Yourself” (DRY) rule to corporate communication, this dialogue provides a blueprint for engineering leaders to secure trust, budget, and resources through clarity and automation.

Engineering teams often operate in fast-paced sprints while boardrooms rely on monthly static reports. How does this reporting latency impact critical decision-making, and what specific communication breakdowns occur when high-velocity technical data is forced into a traditional waterfall-style format?

Reporting latency acts as a silent killer of organizational agility, much like high latency degrades the performance of a distributed system. When we force two-week sprint data into a 30-day reporting cycle, we introduce a massive “translation gap” where the signals are delayed and the noise is amplified. By the time a CTO presents a 50-page slide deck, the information is often two weeks old, leading to a breakdown where the board is making decisions based on “stale data” rather than the current reality of the codebase. This mismatch often results in missed opportunities for course correction or delayed approvals for critical infrastructure projects that the team needed days or weeks ago. It creates a dynamic where engineering is seen as a black box, and the executive team loses the ability to act with the same velocity as the developers on the ground.

Static screenshots of live dashboards frequently lead to data rot and a loss of fidelity by the time they are reviewed. How can leaders shift from documenting past events to providing actionable observability, and what strategies help prevent non-technical stakeholders from focusing on the wrong metrics?

The “dashboard screenshot” is a classic anti-pattern that forces us to spend the first 10 minutes of a meeting explaining that a bug count is lower now than it was when the slide was created five days ago. To move toward true observability, we must stop providing documentation and start providing curated signals that allow for “drill-down” capabilities during the review. Instead of showing a list of 50 closed JIRA tickets, which invites non-technical stakeholders to micromanage activity, we should present a trend line of Story Points Completed versus Planned Capacity. This shifts the focus from “what was done” to the health of the delivery system itself. By curating these signals, we guide the audience to look at velocity and volume rather than getting lost in the weeds of specific, low-level tasks that don’t reflect the bigger picture.

Framing technical debt as a “financial interest payment” or a “tax” on new development changes the conversation with CFOs. How do you visualize this trade-off effectively, and what metrics best demonstrate the correlation between unaddressed debt and the slowing velocity of new feature delivery?

Visualizing technical debt requires moving away from abstract coding concepts and toward the language of the balance sheet. I recommend using a stacked bar chart that clearly delineates “New Features” versus “Maintenance Work” over several sprints. When the maintenance portion of that bar starts to grow, it serves as a sensory, visual proof that the team is paying a “tax” on every new line of code written. You can specifically tell a CFO that for every sprint we delay fixing a legacy service, we are essentially paying a 15% interest rate that eats into our innovation budget. This correlation becomes undeniable when you show that as the “Maintenance” bar expands, the “Feature Velocity” bar shrinks, creating a clear financial argument for additional headcount or dedicated refactoring cycles.

Cloud infrastructure costs are often a massive expense, yet they are rarely contextualized beyond total spend. How should engineering leaders present unit economics, such as cost per transaction, to prove efficiency, and what is the best way to structure a formal request for additional headcount?

Engineering leaders need to treat the cloud bill as a component of product health rather than just a line item of “spend.” By presenting unit economics—like “Cost per Active User” or “Cost per Transaction”—you demonstrate that rising AWS or Azure costs are a direct reflection of business growth rather than technical inefficiency. This provides the necessary context: if the cost per transaction is decreasing while total spend is increasing, the system is actually becoming more efficient as it scales. When it comes to requesting headcount, this data-driven approach is your strongest tool; you aren’t just asking for more people, you are showing how the current “Maintenance tax” is blocking the ROI of new features. A successful request ties that headcount directly to the reduction of that tax and the acceleration of the revenue-generating roadmap.

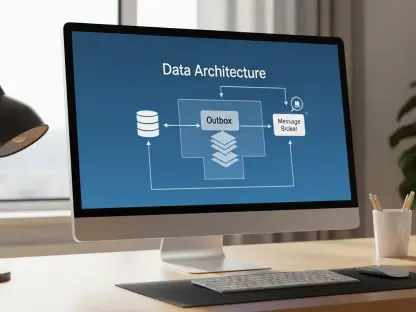

Applying the “Don’t Repeat Yourself” principle to reporting involves standardizing schemas and automating data pulls from tools like JIRA. What are the practical steps for building this automated reporting pipeline, and how do you iterate on this architecture based on stakeholder feedback?

The first step is to “refactor the monolithic deck” into a standardized 5-slide schema that acts as a reliable API for your stakeholders. You then implement automation by using plugins or custom scripts to pull metrics directly from JIRA, Datadog, or Tableau into your slide placeholders, eliminating the manual drudgery of copy-pasting. Once the pipeline is live, you must treat it like an evolving product, asking for specific feedback after every board meeting to see if the “Technical Debt visualization” or “Risk Mitigation” slides hit the mark. This iterative process ensures that the reporting architecture stays lean and relevant, reducing the administrative burden on engineering directors while increasing the clarity of the data provided. It’s about moving from a “manual build” process to a “continuous integration” model for corporate communication.

High-level summaries often act as an “API Response” for the board. What essential components must be included in an executive status update to ensure clarity, and how do you visualize complex dependencies so that leaders understand why a delay in one team blocks another?

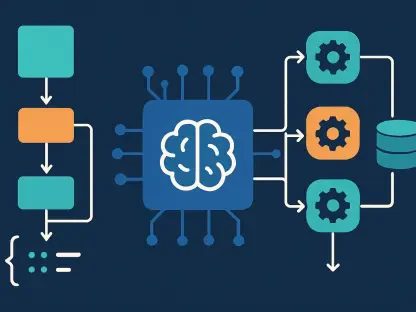

An effective executive “API Response” must start with the BLUF—the Bottom Line Up Front—which includes a RAG status (Red/Amber/Green) and a clear “ask” for budget, headcount, or risk acceptance. To handle dependencies, you need a roadmap visualization that isn’t just a list of dates but a map of integration points. By visually highlighting where Team A’s output becomes Team B’s input, you make the non-linear nature of software development tangible for the board. This prevents the “why isn’t it done?” frustration by showing that a delay in a third-party API or a foundational service creates a ripple effect across the entire product roadmap. It changes the conversation from “why is this team slow” to “how do we unblock this critical dependency.”

What is your forecast for the future of executive reporting in engineering organizations?

I believe we are moving toward a world where the static PowerPoint deck will be replaced by dynamic, real-time “Executive Observability Platforms.” In the next few years, the most successful engineering leaders won’t spend days preparing for a monthly review; instead, they will provide stakeholders with an interactive, high-level interface that allows for on-demand insights into velocity, unit economics, and risk. We will see a shift where the “Monthly Operating Review” becomes a continuous, asynchronous conversation fueled by automated data pipelines rather than a high-pressure monthly event. This will finally close the gap between Agile engineering and Waterfall management, allowing organizations to move at a singular, unified speed.