Much of the modern data center has migrated into the cloud, yet many organizations struggle to keep control over the very technologies meant to give them agility. Provisioning a cluster in Azure Kubernetes Service (AKS) takes only a few clicks, but the distance between a functional deployment and a resilient, enterprise-grade operation is vast. Without a rigorous approach to management, the benefits of containerization (namely speed and scalability) can quickly be overshadowed by architectural debt and unpredictable costs. This guide addresses the transition from “Day 1” implementation to “Day 2” operational excellence, focusing on the strategies required to sustain a high-performance environment.

As enterprises expand their digital footprint from 2026 toward 2030, mastering AKS becomes a matter of competitive survival. Production-grade operation involves more than keeping services running; it requires a working understanding of multi-dimensional scaling, zero-trust security architectures, and strategic cost optimization. By moving beyond basic configurations, IT leaders can ensure that their infrastructure remains an asset rather than a liability. The sections below detail how to navigate these complexities to achieve a stable and efficient cloud-native ecosystem.

Why Enterprise Best Practices Are Essential for Cloud-Native Success

Relying on default configurations in production invites serious failures in an era of sophisticated cyber threats and economic volatility. Best practices protect against two common failure modes: “scaling loops,” in which conflicting automation destabilizes the system, and security breaches that exploit overly permissive defaults. When an enterprise adheres to established guidelines, it turns its infrastructure from a fragile collection of scripts into a robust platform capable of supporting mission-critical workloads. This discipline is the foundation of infrastructural elasticity, ensuring that the system can expand or contract with demand without manual intervention.

Furthermore, following a standardized operational framework mitigates the risk of “cloud sprawl” and ballooning budgets that often accompany unmanaged growth. Significant financial discipline is achieved when teams move away from reactive troubleshooting toward proactive policy enforcement. By implementing these standards, organizations gain a predictable and repeatable deployment model that reduces human error. The benefits extend beyond technical stability; they foster a culture of excellence where DevOps teams can focus on delivering value through software rather than fighting fires within the underlying cluster.

Actionable Strategies for Mastering the AKS Ecosystem

Implementing Multi-Dimensional Scaling for High-Density Workloads

Achieving true elasticity in AKS requires coordination between individual application components and the underlying virtual machines. Scaling is not a single linear process but a multi-dimensional one involving the Horizontal Pod Autoscaler (HPA) and the Cluster Autoscaler (CA). The HPA adjusts the number of pod replicas to match load, while the Cluster Autoscaler adds nodes to a node pool when pods cannot be scheduled for lack of capacity and removes underused nodes when demand falls. This relationship must be tuned carefully: because new nodes can take several minutes to provision, replicas created by the HPA may sit in the “Pending” state with nowhere to run, and if the node pool's maximum node count is set too low they never leave it, causing service interruptions during critical traffic spikes.
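
The arithmetic behind this coordination can be sketched in Python. The first function applies the replica formula from the Kubernetes HPA documentation (desired = ceil(current * currentMetric / targetMetric), with no change when the ratio is within the default 0.1 tolerance); the second, a hypothetical helper, estimates how many identical nodes the Cluster Autoscaler would need to place those replicas. Both are simplifications under stated assumptions: CPU requests only, no DaemonSet or system overhead, and no readiness or missing-metric handling.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Core HPA rule: ceil(currentReplicas * currentMetric / targetMetric).

    Ratios within `tolerance` of 1.0 (0.1 is the Kubernetes default) leave
    the replica count unchanged; the result is clamped to [min, max].
    Readiness and missing-metric handling are deliberately omitted.
    """
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        proposed = current_replicas
    else:
        proposed = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, proposed))


def nodes_needed(replicas: int, cpu_request_m: int, allocatable_cpu_m: int) -> int:
    """Hypothetical helper: identical nodes needed to fit every replica,
    judging by CPU requests (millicores) alone."""
    pods_per_node = allocatable_cpu_m // cpu_request_m
    if pods_per_node == 0:
        raise ValueError("a single pod's request exceeds node allocatable CPU")
    return math.ceil(replicas / pods_per_node)


# 4 replicas averaging 90% CPU against a 60% target -> 6 replicas;
# at 500m per pod and 1900m allocatable per node, that needs 2 nodes.
replicas = desired_replicas(4, current_metric=90, target_metric=60)
print(replicas, nodes_needed(replicas, cpu_request_m=500, allocatable_cpu_m=1900))
```

If the node pool's maximum count were 1 in this example, the HPA would still request 6 replicas, but only 3 could be scheduled and the rest would remain Pending.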

To make these autoscalers effective, developers must give them accurate data by setting resource requests and limits on every container. The scheduler uses requests to decide where a pod fits, the Cluster Autoscaler uses them to decide whether a new node is needed, and the HPA expresses CPU and memory utilization as a percentage of them. Without requests, the scheduler is essentially flying blind and utilization-based HPA targets cannot be computed. The Vertical Pod Autoscaler (VPA) can additionally right-size applications that are not easily distributed across replicas. However, avoid running the HPA and VPA on the same workload for the same resource metric, such as CPU usage, because the two controllers will work against each other and destabilize the environment; the VPA project recommends combining it only with an HPA driven by custom or external metrics.
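
As a minimal sketch, the Python below builds manifests as plain dictionaries and prints them as JSON, which kubectl apply -f - accepts. It shows a container resources block and an autoscaling/v2 HPA whose CPU target is a percentage of requests, plus a hypothetical hpa_vpa_overlap check for the conflict described above, where vpa_controlled_resources stands in for a VPA's controlledResources list. The names web and web-hpa and all values are illustrative assumptions, and the VPA documentation is stricter than this check: it advises against pairing VPA with any CPU- or memory-based HPA.

```python
import json


def container_resources(cpu_request: str, mem_request: str,
                        cpu_limit: str, mem_limit: str) -> dict:
    # Requests drive scheduling, Cluster Autoscaler decisions, and HPA
    # utilization math; limits cap what the container may consume at runtime.
    return {
        "requests": {"cpu": cpu_request, "memory": mem_request},
        "limits": {"cpu": cpu_limit, "memory": mem_limit},
    }


def cpu_hpa(name: str, deployment: str, min_replicas: int,
            max_replicas: int, cpu_percent: int) -> dict:
    # autoscaling/v2 HPA: averageUtilization is a percentage of the pods'
    # CPU *requests*, so it only works if those requests are set.
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": name},
        "spec": {
            "scaleTargetRef": {"apiVersion": "apps/v1", "kind": "Deployment",
                               "name": deployment},
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            "metrics": [{
                "type": "Resource",
                "resource": {
                    "name": "cpu",
                    "target": {"type": "Utilization",
                               "averageUtilization": cpu_percent},
                },
            }],
        },
    }


def hpa_vpa_overlap(hpa: dict, vpa_controlled_resources: set) -> set:
    # Resource metrics the HPA scales on that the VPA would also resize;
    # a non-empty result is the conflict the text warns about.
    hpa_resources = {m["resource"]["name"] for m in hpa["spec"]["metrics"]
                     if m["type"] == "Resource"}
    return hpa_resources & vpa_controlled_resources


hpa = cpu_hpa("web-hpa", "web", min_replicas=2, max_replicas=10, cpu_percent=60)
print(json.dumps(hpa, indent=2))  # e.g. pipe into: kubectl apply -f -
print(hpa_vpa_overlap(hpa, {"cpu", "memory"}))
```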

Case Study: Leveraging KEDA for Event-Driven Efficiency

Consider a global logistics provider that struggled with high operational costs because compute resources sat idle during off-peak hours. By adopting Kubernetes Event-driven Autoscaling (KEDA), the organization moved beyond resource-based scaling to scaling driven by real-time demand signals, specifically the depth of its Azure Service Bus queues. This allowed their microservices to scale to zero replicas when no messages were waiting; once the Cluster Autoscaler removed the nodes those pods had occupied, the workloads' compute charges during periods of inactivity were effectively eliminated. This event-driven approach kept the infrastructure only as large as the immediate task required.
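
The case study does not publish its configuration, but a KEDA ScaledObject along these lines would express the same idea. This Python sketch emits one using the azure-servicebus scaler: a minReplicaCount of 0 permits scale to zero, and messageCount sets the target queue length per replica. The deployment, queue, Service Bus namespace, and TriggerAuthentication names are hypothetical, and the field names should be checked against the KEDA version installed on the cluster (for example, the AKS KEDA add-on).

```python
import json


def servicebus_scaled_object(name: str, deployment: str, queue: str,
                             servicebus_namespace: str, trigger_auth: str,
                             messages_per_replica: int = 5,
                             max_replicas: int = 20) -> dict:
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": name},
        "spec": {
            "scaleTargetRef": {"name": deployment},  # Deployment to scale
            "minReplicaCount": 0,                     # scale to zero when idle
            "maxReplicaCount": max_replicas,
            "triggers": [{
                "type": "azure-servicebus",
                # KEDA trigger metadata values are strings.
                "metadata": {
                    "queueName": queue,
                    # Azure Service Bus namespace, not a Kubernetes namespace.
                    "namespace": servicebus_namespace,
                    # Target number of queued messages per replica.
                    "messageCount": str(messages_per_replica),
                },
                # TriggerAuthentication (not shown) that holds the credentials,
                # for example a workload identity.
                "authenticationRef": {"name": trigger_auth},
            }],
        },
    }


scaled = servicebus_scaled_object("shipments-scaler", "shipments-worker",
                                  "shipments", "contoso-logistics",
                                  "servicebus-auth")
print(json.dumps(scaled, indent=2))
```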

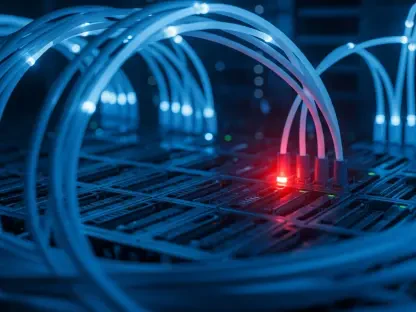

Hardening the Cluster with Zero-Trust Identity and Networking

Security in the modern enterprise must assume that the internal network is as hostile as the public internet. Moving away from static credentials, such as keys or connection strings stored in Kubernetes Secrets, is the first step toward a zero-trust posture. Microsoft Entra Workload ID (formerly Azure AD Workload Identity) enables this through OpenID Connect (OIDC) federation: the cluster issues the pod a signed service account token, Microsoft Entra ID validates it against the cluster's OIDC issuer, and the pod receives a short-lived access token for resources such as Azure Key Vault or Storage Accounts, with no long-lived credentials involved. This shift significantly reduces the risk of credential leakage and simplifies the management of service identities across the entire fleet.
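
A minimal sketch of the Kubernetes side of Workload ID, again generated from Python as JSON. It assumes the cluster already has the OIDC issuer and workload identity enabled (the --enable-oidc-issuer and --enable-workload-identity options of az aks create or az aks update), and that a user-assigned managed identity has a federated credential whose subject matches the string federated_subject returns. The namespace, service account, client ID, and image values are placeholders.

```python
import json


def federated_subject(namespace: str, service_account: str) -> str:
    # Subject that the managed identity's federated credential must match.
    return f"system:serviceaccount:{namespace}:{service_account}"


def workload_identity_manifests(namespace: str, service_account: str,
                                client_id: str, image: str) -> dict:
    service_account_manifest = {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {
            "name": service_account,
            "namespace": namespace,
            # Client ID of the user-assigned managed identity to federate with.
            "annotations": {"azure.workload.identity/client-id": client_id},
        },
    }
    pod = {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": f"{service_account}-app",
            "namespace": namespace,
            # Opts the pod into the workload identity mutating webhook, which
            # mounts a projected token and sets AZURE_* environment variables.
            "labels": {"azure.workload.identity/use": "true"},
        },
        "spec": {
            "serviceAccountName": service_account,
            "containers": [{"name": "app", "image": image}],
        },
    }
    return {"apiVersion": "v1", "kind": "List",
            "items": [service_account_manifest, pod]}


manifests = workload_identity_manifests(
    "payments", "payments-sa", "00000000-0000-0000-0000-000000000000",
    "myregistry.azurecr.io/payments:1.0")
print(federated_subject("payments", "payments-sa"))
print(json.dumps(manifests, indent=2))
```

Inside the container, Azure SDK credential classes that support workload identity pick up the injected environment variables, so application code never handles a secret.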

Network isolation is the second pillar of a hardened cluster environment. By default, Kubernetes allows any pod to communicate with any other pod, which gives an attacker a path for lateral movement during a security incident. Network Policies let administrators define explicit rules for traffic flow so that only authorized services can interact; they take effect only when the cluster runs a network policy engine, such as Azure Network Policy Manager, Calico, or Cilium. This granular control is essential for multi-tenant environments where sensitive data must be strictly separated from public-facing components. Azure Policy for Kubernetes extends this governance further by enforcing guardrails that prevent the deployment of non-compliant or insecure container images.
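
For example, a policy admitting traffic to a hypothetical orders-api only from storefront pods could be generated as follows (labels, namespace, and port are illustrative assumptions). Because the from clause uses a podSelector without a namespaceSelector, it matches only pods in the policy's own namespace.

```python
import json


def allow_ingress_from(namespace: str, target_app: str,
                       source_app: str, port: int) -> dict:
    # Once a pod is selected by any Ingress policy, inbound traffic that no
    # policy allows is dropped, so this admits only source_app -> target_app
    # on the given TCP port.
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{source_app}-to-{target_app}",
                     "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": target_app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                "from": [{"podSelector": {"matchLabels": {"app": source_app}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


policy = allow_ingress_from("shop", target_app="orders-api",
                            source_app="storefront", port=8080)
print(json.dumps(policy, indent=2))
```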

Case Study: Implementing Namespace Isolation and Global Deny Policies

A financial services firm recently overhauled its AKS security by adopting a default “deny-all” policy for both ingress and egress traffic within its multi-tenant clusters. Through namespace isolation and strict Network Policies, the team ensured that a compromise in a development environment could not spread to a production database. They further reinforced this defense by using Azure Policy to audit every deployment against a set of organizational standards, such as a prohibition on privileged containers. This multi-layered approach minimized their attack surface and ensured compliance with strict industry regulations.
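
A deny-all baseline of the kind the firm adopted can be sketched as two policies per namespace: one that selects every pod and denies all ingress and egress, and one that reopens DNS to kube-system, since a blanket egress deny otherwise breaks name resolution. The namespaces are hypothetical, the kubernetes.io/metadata.name label is set automatically on namespaces in current Kubernetes versions, and application-specific allow rules would be layered on top.

```python
import json


def default_deny_all(namespace: str) -> dict:
    # An empty podSelector selects every pod in the namespace; listing both
    # policy types with no rules denies all ingress and egress.
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress", "Egress"]},
    }


def allow_dns_egress(namespace: str) -> dict:
    # Without this, the deny-all egress rule also blocks DNS lookups.
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-dns-egress", "namespace": namespace},
        "spec": {
            "podSelector": {},
            "policyTypes": ["Egress"],
            "egress": [{
                "to": [{"namespaceSelector": {"matchLabels": {
                    "kubernetes.io/metadata.name": "kube-system"}}}],
                "ports": [{"protocol": "UDP", "port": 53},
                          {"protocol": "TCP", "port": 53}],
            }],
        },
    }


def baseline_policies(namespaces: list) -> list:
    # Deny-all plus DNS allowance for each tenant namespace.
    return [p for ns in namespaces
            for p in (default_deny_all(ns), allow_dns_egress(ns))]


policies = baseline_policies(["dev", "prod"])
print(json.dumps({"apiVersion": "v1", "kind": "List", "items": policies}, indent=2))
```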

Optimizing Financial Performance through Intelligent Resource Management

Financial transparency and control are often the most difficult aspects of managing a large-scale AKS environment. To maximize return on investment, enterprises should use cost-saving mechanisms such as Azure Spot node pools for fault-tolerant workloads. Spot instances run on spare Azure capacity at deep discounts, making them well suited to batch processing or CI/CD runners. However, because Azure can evict them with as little as 30 seconds' notice whenever it needs the capacity back, they must be paired with regular (on-demand) node pools, whose cost can in turn be lowered with Reserved Instances, to keep core application services stable.
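
To keep fault-tolerant work on Spot capacity, AKS places the taint kubernetes.azure.com/scalesetpriority=spot:NoSchedule and a matching label on nodes in Spot node pools, so workloads must explicitly tolerate the taint. The Python sketch below emits a batch Job that both tolerates it and requires Spot nodes through node affinity; the job name and image are hypothetical.

```python
import json

# Label and taint key AKS applies to nodes in Spot node pools.
SPOT_KEY = "kubernetes.azure.com/scalesetpriority"


def spot_batch_job(name: str, image: str, backoff_limit: int = 6) -> dict:
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name},
        "spec": {
            # Pods that fail, for example because their Spot node was
            # evicted, are recreated up to this many times.
            "backoffLimit": backoff_limit,
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    # Tolerate the NoSchedule taint AKS places on Spot nodes...
                    "tolerations": [{"key": SPOT_KEY, "operator": "Equal",
                                     "value": "spot", "effect": "NoSchedule"}],
                    # ...and require Spot nodes, so the job never lands on
                    # the regular pools that host core services.
                    "affinity": {"nodeAffinity": {
                        "requiredDuringSchedulingIgnoredDuringExecution": {
                            "nodeSelectorTerms": [{"matchExpressions": [{
                                "key": SPOT_KEY, "operator": "In",
                                "values": ["spot"]}]}]}}},
                    "containers": [{"name": "worker", "image": image}],
                },
            },
        },
    }


job = spot_batch_job("nightly-etl", "myregistry.azurecr.io/etl:1.0")
print(json.dumps(job, indent=2))
```

The toleration alone only permits Spot placement; the required node affinity is what confines the job to Spot nodes.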

Beyond hardware selection, “bin packing” is crucial for maximizing resource density. It means placing pods so that existing nodes are filled as fully as their resource requests allow before new ones are provisioned, which depends on accurate requests and sensibly sized nodes. The Cluster Start/Stop feature (the az aks stop and az aks start commands) provides another layer of fiscal control, allowing non-production clusters to be shut down entirely during nights and weekends when they are not in use. By treating infrastructure as a dynamic cost center rather than a fixed expense, DevOps teams can achieve a high degree of financial agility while maintaining the performance levels the business requires.
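
The bin-packing idea can be illustrated with first-fit decreasing, a classic heuristic: sort pods by request, largest first, and place each on the first node with room. This is a teaching sketch, not how AKS places pods; the kube-scheduler scores nodes one pod at a time, and its default NodeResourcesFit strategy (LeastAllocated) tends to spread load, whereas the MostAllocated strategy favors packing. The requests and node size are made-up values.

```python
def first_fit_decreasing(pod_requests_m: list, node_capacity_m: int) -> list:
    """Pack pod CPU requests (millicores) onto identical nodes.

    Returns one list per node holding the requests placed on it. Sorting
    largest-first and reusing the first node with room keeps the node
    count low, which is the goal of bin packing.
    """
    nodes = []  # requests placed on each node
    free = []   # remaining capacity on each node
    for request in sorted(pod_requests_m, reverse=True):
        if request > node_capacity_m:
            raise ValueError(f"{request}m does not fit on a {node_capacity_m}m node")
        for i, space in enumerate(free):
            if request <= space:
                nodes[i].append(request)
                free[i] -= request
                break
        else:
            # No existing node has room: "provision" a new one.
            nodes.append([request])
            free.append(node_capacity_m - request)
    return nodes


placement = first_fit_decreasing([500, 1200, 300, 1500, 800, 700], node_capacity_m=2000)
print(placement)  # [[1500, 500], [1200, 800], [700, 300]]
```

Here 5,000m of requests fit on three 2,000m nodes, the minimum possible for that total.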

Case Study: Reducing Compute Costs with Spot Instance Integration

An e-commerce enterprise reduced the monthly compute spend of its data processing pipelines by 90% by integrating Spot node pools into its AKS architecture. The DevOps team configured non-critical batch jobs to run exclusively on these discounted instances while keeping the web-facing storefront on regular node pools covered by Reserved Instances. They built robust retry logic into their applications so that evictions were handled without manual intervention. This strategy allowed the company to redirect a significant portion of its IT budget toward new feature development rather than maintaining existing infrastructure.
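
The case study does not describe its retry logic; one common pattern is capped exponential backoff with jitter, sketched below. It assumes the retried operation is idempotent and that only ConnectionError and TimeoutError are transient. Retries like this cover calls that fail while a dependency is being rescheduled; a worker that is itself evicted is terminated and recreated by Kubernetes, so long-running batch work should also checkpoint its progress.

```python
import random
import time


def run_with_retries(task, max_attempts: int = 5, base_delay: float = 1.0,
                     max_delay: float = 30.0,
                     retryable: tuple = (ConnectionError, TimeoutError),
                     sleep=time.sleep):
    """Call task() until it succeeds, retrying transient errors with
    capped exponential backoff and full jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except retryable:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Ceiling doubles per attempt (1s, 2s, 4s, ...) up to max_delay;
            # a random delay below it spreads out retries from many workers.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))


# Demo: a call that fails twice (as if its backend pod were being
# rescheduled after an eviction) and then succeeds.
attempts = {"count": 0}


def flaky_call():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("backend unavailable")
    return "ok"


print(run_with_retries(flaky_call, base_delay=0.01))
```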

Achieving Long-Term Operational Excellence in AKS

Mastering the complexities of Azure Kubernetes Service requires a fundamental shift in how leadership views infrastructure management. The move from managing individual servers to managing high-level policies and constraints is the defining characteristic of successful teams. By automating the most difficult aspects of scaling and security, organizations can sustain a high deployment velocity without sacrificing stability. Proactive monitoring with Azure Monitor managed service for Prometheus and Azure Managed Grafana is a non-negotiable step, providing the visibility needed to identify bottlenecks before they affect the end-user experience.

As cloud capabilities mature, continuous learning and adaptation remain essential. IT leaders who embrace these best practices find that their infrastructure can finally keep pace with the rapid demands of modern business. Looking forward, the emphasis on managed governance and intelligent automation will only intensify, and organizations that adopt these operational standards early will be better positioned to integrate emerging technologies and scale their operations globally with minimal friction. Ultimately, the success of an enterprise cloud strategy rests on the ability to turn technical complexity into a streamlined, policy-driven engine for growth.