The deceptive silence of a perfectly green monitoring dashboard during the initial stages of a system rollout often precedes the most catastrophic infrastructure meltdowns in modern computing. A deployment begins with cautious optimism, as the first ten percent of traffic migrates to a new version without triggering a single alert or latency spike. Confident in the stability of the latest build, engineering teams scale the release to fifty and then seventy-five percent, only to watch the entire architecture collapse into a fiery pile of timeouts and database locks. Post-mortem analyses frequently reveal a recurring culprit: the old system had not finished its critical work when the new system began aggressively seizing control of the environment. This collision of states creates a chaotic middle ground where neither version can effectively handle the load, proving that the deployment process itself—rather than the code—is frequently the root cause of systemic failure.

The persistence of these failures suggests a fundamental misunderstanding of how load and state move through a distributed network. When software engineers treat a deployment as a simple handoff, they ignore the in-flight data and lingering connections that the old version still holds. This gap between the “Readiness” of a new pod and the “Finality” of an old one is where the seeds of a cascade are sown. Instead of a smooth transition, the system experiences a turbulent overlap that exhausts shared resources, such as connection pools and thread limits, and can end in a full outage.

From Fluid Dynamics to Distributed Systems: The Terence Tao Insight

In 2014, world-renowned mathematician Terence Tao encountered a strikingly similar phenomenon while studying whether solutions to an averaged version of the Navier-Stokes equations of fluid dynamics can blow up in finite time. His objective was to prove that the fluid flow could concentrate energy at a single point, but his initial mathematical models failed because the energy dispersed across multiple scales simultaneously. Tao realized that by trying to push energy from large scales to small scales as fast as possible, he was actually preventing the concentration he sought. The energy was spreading out, becoming thin and uncontrollable across various layers of the system, much like how a rushed software deployment spreads its load across conflicting versions.

The solution to this mathematical impasse came from an engineering concept suggested by Tao’s wife: the airlock. By programming a specific delay that ensured one stage of the fluid energy transfer was entirely complete before the next gate opened, Tao could concentrate the energy effectively without losing control to dispersion. This realization bridged the gap between theoretical mathematics and practical stability. If energy, or data, is allowed to exist in too many states at once, it disperses, and the system settles into something that looks less like its intended goal and more like a collapse.

Breaking the Cycle of Dispersion Through Controlled Stages

To prevent the dreaded cascade failure, modern infrastructure must move away from the “dispersion” model where multiple deployment stages overlap and fight for limited hardware resources. The standard approach of rolling updates often assumes that once a new container is healthy, the old one is no longer relevant. However, the airlock principle suggests that stability is only achieved when there is no ambiguity about which version of the system is currently “holding” the energy of the request. By treating each step of a rollout as a pressurized chamber, engineers can ensure that the system remains stable even under the extreme pressure of high-traffic transitions.

The airlock pattern functions exactly like its namesake on a space station: one door must be fully sealed and the internal pressure equalized before the second door is permitted to open. In the world of software, this means ensuring that Stage 1 of a rollout is not merely “running” but has fully drained its legacy tasks before Stage 2 is allowed to begin. This deliberate concentration of activity in a single active stage makes any emerging problems highly visible, contained within a specific percentage of the fleet, and—most importantly—easily reversible without affecting the integrity of the entire cluster.

“Ready” vs. “Drained”: The Metric That Actually Matters

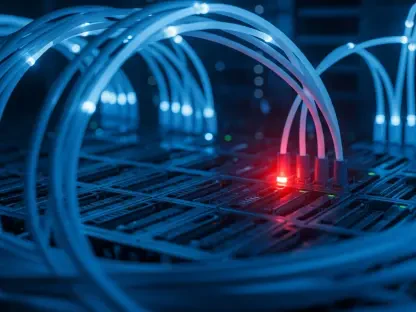

Most modern deployment tools focus on a metric known as “Readiness,” which essentially asks if the new version of the code is capable of accepting new work. While this is necessary, the Airlock Pattern prioritizes “Draining,” a much more critical metric that asks whether the old version has finished all its in-flight requests, closed its database connections, and successfully flushed its internal buffers. A system is only safe to progress to the next gate when the old version is truly done, rather than just when the new version passes a basic health check. If the “drain” is ignored, the infrastructure begins to accumulate “ghost” work that consumes memory and CPU cycles without contributing to the success of the new deployment.

A server can appear healthy on a dashboard while still processing hundreds of background jobs or holding open thousands of zombie connections to a primary database. If the deployment moves forward while these resources are tied up, the new version will find itself starved of the very capacity it needs to survive. By switching the primary success metric from the readiness of the new to the emptiness of the old, teams create a hard stop that prevents orphaned work from piling up during the most vulnerable moments of the software lifecycle.
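The difference between the two metrics can be made concrete with a minimal sketch. The `LegacyStats` fields and the threshold parameters below are hypothetical names chosen for illustration; a real system would populate them from whatever its load balancer, connection pool, and buffers actually expose.

```python
from dataclasses import dataclass


@dataclass
class LegacyStats:
    """Snapshot of what the OLD version still holds (hypothetical fields)."""
    in_flight_requests: int
    open_db_connections: int
    unflushed_buffer_bytes: int


def is_drained(stats: LegacyStats,
               max_in_flight: int = 0,
               max_db_connections: int = 0) -> bool:
    """True only when the old version has finished its work."""
    return (stats.in_flight_requests <= max_in_flight
            and stats.open_db_connections <= max_db_connections
            and stats.unflushed_buffer_bytes == 0)


def may_advance(new_version_ready: bool, legacy: LegacyStats) -> bool:
    """Readiness of the new version is necessary but not sufficient:
    the gate opens only when the old version is also drained."""
    return new_version_ready and is_drained(legacy)
```

With this gate, a healthy new pod is not enough on its own: `may_advance(True, LegacyStats(3, 0, 0))` stays closed because three requests are still in flight on the old version.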

Identifying Supercriticality Before the Crash

The research conducted by Tao also highlights a hidden danger known as supercriticality, a state where small-scale chaos begins to dominate the large-scale stability of a system. In IT infrastructure, this manifests when individual components, such as a specific pod or a single microservice node, fail at a rate significantly higher than the overall system average. When a dashboard shows a seemingly manageable two percent error rate across the entire cluster, but a deep dive reveals a fifty percent error rate on a specific set of nodes, the system has entered a supercritical state. The chaos is no longer being dampened by the surrounding infrastructure; instead, it is concentrating.

In these moments, the common instinct to add more capacity by spinning up additional servers is often a fatal mistake. Adding more capacity to a supercritical system is akin to adding fuel to a forest fire; the new resources simply inherit the unstable state and provide more room for the failure to spread. The Airlock Pattern dictates that the only safe response to such a divergence in metrics is to immediately halt the transition and investigate the localized failure. Scaling up during an airlock violation only ensures that the subsequent failure will be larger and more difficult to contain.
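One way to surface this divergence is to compare each node's error rate with the cluster-wide rate computed from the same counters. The sketch below is an illustration under assumptions: the function name, the `(requests, errors)` input shape, and the default ratio of 5 are all hypothetical and would need tuning against real traffic.

```python
from typing import Dict, List, Tuple


def supercritical_nodes(counts: Dict[str, Tuple[int, int]],
                        max_ratio: float = 5.0) -> List[str]:
    """Return nodes whose error rate exceeds max_ratio times the cluster rate.

    counts maps node name -> (requests, errors) over the same time window.
    """
    total_requests = sum(req for req, _ in counts.values())
    total_errors = sum(err for _, err in counts.values())
    if total_requests == 0 or total_errors == 0:
        return []  # nothing served or nothing failing: no divergence to report

    cluster_rate = total_errors / total_requests
    flagged = []
    for node, (requests, errors) in counts.items():
        if requests == 0:
            continue  # idle node, no rate to compare
        if errors / requests > max_ratio * cluster_rate:
            flagged.append(node)
    return sorted(flagged)


# The scenario from the text: 2% cluster-wide, 50% on one node.
window = {f"node-{i}": (1000, 0) for i in range(1, 25)}
window["node-0"] = (1000, 500)
print(supercritical_nodes(window))
```

In this example the cluster-wide rate is 500 / 25,000 = 2%, while `node-0` sits at 50%, a ratio of 25, so it is the only node flagged. A non-empty result is a signal to pause the rollout, not to add capacity.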

Implementing the Airlock Framework in Your Infrastructure

Adopting this structured approach requires a cultural shift that prioritizes state integrity over the raw speed of deployment. While business requirements often push for faster release cycles, Tao's result suggests, by analogy, that slowing down and enforcing stage-gate integrity avoids the dispersion that leads to systemic collapse. This methodology turns the deployment pipeline into a series of discrete, verifiable events where the transition between states is as carefully managed as the code itself.

Three Rules for Architectural Safety

Applying the Airlock Pattern effectively requires strict adherence to three core architectural constraints:

- One Active Stage at a Time: Only one gate should be in a state of transition at any given moment. This keeps the blast radius of any potential failure localized to a specific, manageable portion of the infrastructure.

- Drain Before Advance: Engineers must define clear, measurable thresholds for in-flight work. For example, a rollout should not move from 25% to 50% until the number of active connections on the legacy version drops below a predefined safe limit.

- Halt on Supercriticality: Automated monitoring must be implemented to compare per-node error rates against cluster-wide averages. If these numbers diverge beyond a specific ratio, the deployment should automatically pause to prevent small-scale failures from amplifying.
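The three rules above can be combined into a single control loop. This is a sketch under stated assumptions, not a real deployment tool: `set_traffic`, `is_drained`, and `is_supercritical` are hypothetical callables that the surrounding platform would have to supply, and the polling limits are placeholders.

```python
import time
from typing import Callable, Sequence


def run_rollout(stages: Sequence[int],
                set_traffic: Callable[[int], None],
                is_drained: Callable[[], bool],
                is_supercritical: Callable[[], bool],
                max_drain_checks: int = 10,
                poll_seconds: float = 5.0) -> str:
    """Advance through traffic percentages one gate at a time."""
    for pct in stages:
        # Rule 1: exactly one stage is in transition at any moment.
        set_traffic(pct)
        for _ in range(max_drain_checks):
            # Rule 3: halt as soon as per-node and cluster metrics diverge.
            if is_supercritical():
                return f"halted at {pct}%: supercritical"
            # Rule 2: the next gate opens only once legacy work has drained.
            if is_drained():
                break
            time.sleep(poll_seconds)
        else:
            # The drain never completed within the allowed number of checks.
            return f"halted at {pct}%: drain timed out"
    return "complete"
```

Because the loop returns instead of advancing whenever a gate fails, a stalled drain or a diverging node leaves the fleet at the last applied percentage, which keeps a rollback confined to that stage.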

Beyond Deployments: Universal Applications

The Airlock Pattern is a versatile framework that extends far beyond simple application rollouts and should be applied to any high-risk operational task. In database migrations, it is vital to ensure that a structural change has fully propagated to all replicas before initiating the next phase of the update to prevent read inconsistencies. During secret rotations, old credentials should never be revoked until every dependent service has explicitly confirmed the successful adoption of the new ones. Similarly, in cache invalidation and feature flag rollouts, the “gate” must remain closed until the previous state has been entirely cleared from the network.
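For secret rotation, the gate reduces to a set comparison: revocation stays blocked while any dependent service has not confirmed the new credential. The function and service names below are hypothetical, and how confirmations are collected is left to the surrounding system.

```python
from typing import Set, Tuple


def safe_to_revoke(dependents: Set[str],
                   confirmed: Set[str]) -> Tuple[bool, Set[str]]:
    """Return (gate_open, pending), where pending lists the services
    that have not yet confirmed adoption of the new credential."""
    pending = dependents - confirmed
    return (not pending, pending)


gate_open, pending = safe_to_revoke({"api", "worker", "billing"},
                                    {"api", "billing"})
# gate_open is False and pending is {"worker"}: the old secret must stay valid.
```

Returning the pending set alongside the boolean makes the blocked gate actionable, since it names exactly which services still depend on the old credential.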

As organizations moved toward more complex, distributed architectures, the necessity of the Airlock Pattern became undeniable. Engineering teams began integrating these “drain checks” directly into their continuous integration and delivery pipelines, replacing simple timers with state-aware checks. By 2026, the focus had shifted from how fast a feature could be shipped to how cleanly the previous version could be retired. This change in thinking turned the deployment process from a source of anxiety into a predictable, disciplined operation that prioritized the long-term health of the system over the short-term speed of the release. Teams that embraced these rigorous gate-keeping protocols found they could actually deploy more frequently because they no longer spent their nights recovering from the aftershocks of a rushed transition.