The traditional software-as-a-service market is currently facing a reckoning that mirrors the intensity of historical economic corrections, yet the underlying cause is fundamentally distinct from previous financial cycles. This phenomenon, widely categorized by industry analysts as a “value reset,” represents a significant departure from the era of exuberant growth and high valuation multiples that defined the early decade. Investors are no longer evaluating software companies solely on their current quarterly earnings or immediate revenue targets; instead, there is a profound skepticism regarding the long-term durability of legacy business models in an environment where artificial intelligence can generate code and execute workflows at near-zero marginal cost. This shift has led to a dramatic contraction in market capitalization for even the most profitable enterprises, as the perceived risk of obsolescence begins to outweigh the stability of recurring subscription fees.

The most visible indicator of this crisis is the erosion of valuation multiples among top-tier providers such as Salesforce, ServiceNow, and Adobe. For years, these companies enjoyed growth premiums based on the assumption that their platforms would remain the central nervous system of the enterprise for decades. However, when a company’s valuation multiple drops significantly—for instance, from 25x to 12x—the market is essentially signaling that its conviction in that company’s survival over a twenty-year horizon has been halved. This re-evaluation is driven by the realization that AI is compressing the time required for both software development and enterprise deployment. Traditionally, implementing a major software suite was a multi-year commitment, but current AI agents can be integrated into existing data streams almost instantly, introducing a level of future uncertainty that makes long-term investment in legacy architectures appear increasingly hazardous.
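The arithmetic behind the 25x-to-12x example can be sketched in a few lines. This is a minimal illustration, assuming earnings stay constant while the multiple compresses; the function name `multiple_compression` is hypothetical, and reading the result as "halved conviction" is the interpretation offered above, not something the calculation proves.

```python
def multiple_compression(old_multiple: float, new_multiple: float) -> float:
    """Fractional decline in valuation when earnings are held constant.

    Valuation = multiple * earnings, so with earnings fixed the decline
    depends only on the ratio of the two multiples.
    """
    if old_multiple <= 0:
        raise ValueError("old_multiple must be positive")
    return 1.0 - new_multiple / old_multiple


# The example from the text: a multiple falling from 25x to 12x.
decline = multiple_compression(25.0, 12.0)
print(f"Valuation decline at constant earnings: {decline:.0%}")
```

At constant earnings, a move from 25x to 12x removes a little more than half of the company's market value, which is why the example is described as a halving.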

The Evolution of AI and the Threat of Relegation

From Static Tools to Autonomous Operators

The current technological landscape is moving beyond the “reactive chatbot” paradigm, where users interacted with AI via simple queries, toward a sophisticated model of proactive “operators” or “agentic” architectures. A primary example of this transition is the deployment of Anthropic’s Claude Cowork, which functions not as a mere assistant but as a digital colleague capable of executing multi-step, complex workflows independently. Unlike earlier iterations of generative AI that required constant human prompting, these agents are designed to understand a high-level objective and independently determine the necessary steps to achieve it. This represents a fundamental shift in the value proposition of software; the focus has moved from providing a tool for human workers to providing a digital worker that utilizes various tools on behalf of the organization.

For instance, an autonomous agent does not simply assist a human in filling out a spreadsheet; it can independently navigate local file systems, extract relevant data from disparate receipt images or PDF invoices, and format a cohesive strategic report without human intervention. It can ingest conflicting market research, synthesize the information, and draft a comprehensive document that accounts for various internal data points across different platforms. This level of autonomy redefines the relationship between the user and the software. The agent is no longer a passive interface but an active participant in the production of work. As these capabilities are refined between 2026 and 2028, the premium once paid for user-friendly interfaces is likely to be redirected toward the underlying intelligence that actually completes the task, leaving traditional UI-heavy software in a precarious position.

The Shift Toward an Orchestration Layer

While it is unlikely that AI will completely eradicate the need for complex systems such as Customer Relationship Management or Enterprise Resource Planning platforms, there is a growing risk of these systems being “relegated” to the background. In the established software model, value is captured by the application itself; a business pays for a specific platform to manage its sales pipeline or marketing strategy, and each application operates as a siloed sandbox. However, the emergence of autonomous agents like Claude Cowork threatens to break these silos by operating across multiple platforms simultaneously. In a modern workflow, an agent can integrate with Slack, Microsoft Teams, and various cloud storage solutions, pulling data from one and executing actions in another without the user ever opening the individual application interfaces.

This shift suggests that individual SaaS applications are increasingly being viewed as low-margin back-end repositories—essentially “dumb pipes” for data storage—while the cognitive orchestration and ultimate value-add happen at the external agent layer. If an agent can synthesize competitor analysis from a document storage app and then draft a presentation via an API, the specific features of the underlying software become secondary to the agent’s ability to access the data. Consequently, the financial investment follows the intelligence. If the primary value resides in the orchestration layer, the applications beneath it lose their ability to command premium pricing. This transition forces software providers to decide whether they will remain as specialized databases or attempt to build their own orchestration layers to remain relevant in the eyes of the enterprise buyer.

Strategic Defenses and the Battle for Data Control

The Security of Proprietary Data Moats

In response to the threat of relegation, many SaaS providers are doubling down on their most valuable asset: proprietary data. The industry is currently witnessing a strategic divide between two philosophies of data management. Some large-scale suite providers are pursuing a “full-stack” or closed route, attempting to position themselves as the exclusive workspace for AI. By restricting data access and ensuring that AI-driven insights can only be generated within their native environments, these companies hope to create a “data moat” that prevents external agents from extracting value. This strategy is built on the premise that integrated, secure AI within a single, controlled ecosystem is more attractive to risk-averse enterprises than a fragmented approach involving third-party agents.

However, this closed-loop strategy faces significant resistance from sophisticated enterprises that demand the flexibility to use their own custom-built agents across all their data platforms. Organizations are increasingly looking to develop “super-employees”: internal AI models fed by the entirety of the company’s data, regardless of which software holds it. SaaS providers thus face a difficult dilemma: if they restrict API access to protect their data, they risk alienating customers who prioritize interoperability and flexibility. Conversely, if they remain fully open, they risk becoming a commodity service where an external agent performs all the high-value work. The tension between maintaining a proprietary ecosystem and supporting an open data landscape will likely define the competitive dynamics of the software industry from the current period through the end of the decade.

Economic Adjustments and Workforce Consolidation

The transition toward an agent-led software environment is also fundamentally altering the economic structure of the modern corporate workforce. There is a visible trend toward job function consolidation, where an AI-enabled employee can now perform duties that were previously distributed across multiple specialized roles. For example, a single manager, supported by a fleet of autonomous agents, can oversee product development, user experience design, and basic engineering tasks that once required a full team. This shift significantly increases the “revenue per employee” metric, which could lead to higher corporate valuations for the companies that successfully implement these efficiencies, even as the traditional software vendors they use experience a valuation squeeze.

Paradoxically, while the valuation of traditional SaaS companies is under pressure, actual spending on software may experience a short-term increase. Because AI agents often function as “digital headcount,” they frequently require their own licenses or seats to interact with tools like Slack, Notion, or various project management platforms. An organization might deploy thousands of autonomous agents, each needing authenticated access to the company’s software stack, thereby temporarily driving up seat-based licensing costs. However, this is largely considered a transitional phase. As the industry matures, it is expected that there will be a move away from traditional per-seat licensing toward value-based or outcome-based pricing models, where the software cost is tied directly to the tasks performed or the efficiency gains achieved by the AI.
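The contrast between the two pricing models can be made concrete with a small sketch. Every figure here (seat price, task price, headcounts, task volume) is a made-up assumption chosen only to show the mechanics, and the class names `SeatPlan` and `OutcomePlan` are hypothetical rather than any vendor's actual pricing structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeatPlan:
    """Per-seat licensing: every human or agent identity needs a paid seat."""
    price_per_seat: float  # hypothetical monthly price per seat

    def monthly_cost(self, human_seats: int, agent_seats: int) -> float:
        return self.price_per_seat * (human_seats + agent_seats)


@dataclass(frozen=True)
class OutcomePlan:
    """Outcome-based pricing: cost follows completed tasks, not identities."""
    price_per_task: float  # hypothetical price per completed task

    def monthly_cost(self, tasks_completed: int) -> float:
        return self.price_per_task * tasks_completed


seat = SeatPlan(price_per_seat=20.0)
outcome = OutcomePlan(price_per_task=0.05)

# Illustrative scenario: 500 employees, then 2,000 agents added as seats.
humans_only = seat.monthly_cost(human_seats=500, agent_seats=0)
with_agents = seat.monthly_cost(human_seats=500, agent_seats=2000)
by_outcome = outcome.monthly_cost(tasks_completed=400_000)

print(f"Seat-based, humans only: {humans_only:,.0f}")
print(f"Seat-based, with agents: {with_agents:,.0f}")
print(f"Outcome-based:           {by_outcome:,.0f}")
```

Under per-seat licensing the bill scales with the number of agent identities, which is the short-term spending increase described above. Under outcome-based pricing it scales with work completed, regardless of how many agents perform it.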

Identifying the New Winners in the Tech Ecosystem

Data Infrastructure as a Durable Foundation

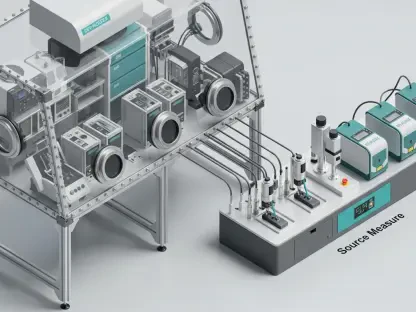

While the application-layer software market navigates its identity crisis, data infrastructure companies are emerging as the primary beneficiaries of the agentic revolution. Entities that provide the underlying storage, transformation, and accessibility services required for AI agents to function—such as Snowflake, Databricks, and ClickHouse—are increasingly viewed as the most durable investments in the tech sector. The effectiveness of any autonomous agent is entirely dependent on the quality and readiness of the data it processes. Without a clean, well-organized, and highly accessible data foundation, even the most advanced AI models cannot provide meaningful value to an enterprise.

These infrastructure providers are shielded from the “relegation” threat because they do not compete with AI agents; rather, they serve as the essential fuel that powers them. As organizations move toward building internal AI models and deploying sophisticated agents, the demand for robust data pipelines and real-time processing capabilities continues to grow. From 2026 to 2029, the strategic focus for many enterprises will be on consolidating their data into these high-performance environments to ensure that their digital workforces have the information they need to operate autonomously. This positioning allows infrastructure companies to maintain strong pricing power and high market confidence, as they represent the bedrock upon which the new era of intelligent software is being constructed.

The Strategic Value of Real-Time Communication

The communications sector, encompassing both Unified Communications as a Service and Contact Center as a Service, holds a unique and resilient position in the current market. Unlike static databases or specialized application silos, communication platforms generate high-value conversational data—including voice, video, and messaging—that provides a window into real-time customer sentiment and internal organizational dynamics. This type of data is notoriously difficult to replicate and serves as a critical input for training and directing AI agents. Furthermore, the infrastructure required to support real-time communication must meet extremely high standards of reliability, often referred to as “five-nines” uptime, which creates a significant barrier to entry for new competitors.
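To give a sense of how demanding “five-nines” is, the short sketch below converts an availability percentage into permitted downtime. It assumes a 365-day year and treats availability as a simple fraction of total time; the function name `allowed_downtime_minutes` is hypothetical, and real service-level agreements may measure availability differently.

```python
def allowed_downtime_minutes(availability: float, period_days: float = 365.0) -> float:
    """Minutes of downtime permitted over a period at a given availability.

    availability is a fraction between 0 and 1 (e.g. 0.99999 for five nines).
    """
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    minutes_in_period = period_days * 24 * 60
    return (1.0 - availability) * minutes_in_period


for label, availability in [
    ("three nines", 0.999),
    ("four nines", 0.9999),
    ("five nines", 0.99999),
]:
    minutes = allowed_downtime_minutes(availability)
    print(f"{label}: about {minutes:.1f} minutes of downtime per year")
```

At five nines the budget is roughly five minutes of downtime per year, which helps explain why this reliability standard acts as a barrier to entry.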

Because communication platforms serve as the “connective tissue” of an enterprise, they are ideally positioned to host the agents that coordinate work across the organization. An agent embedded within a communication platform can monitor discussions, identify action items, and trigger workflows in other applications based on the natural language used by employees. This integration of proprietary, real-time interaction data with complex, mission-critical infrastructure provides these companies with a more resilient moat than standard application code. As AI becomes more capable of generating code and simple software features, the value of the platform that facilitates human and machine interaction becomes increasingly apparent, securing the role of communication providers in the new enterprise stack.

Orchestrating the New Digital Workforce

The industry is moving from rigid, siloed systems toward a more fluid and intelligent architecture that prioritizes orchestration over simple record-keeping. The value of software increasingly lies not in its ability to store data but in its capacity to facilitate the movement and transformation of that data through autonomous intelligence. The organizations best positioned to thrive are those that stop viewing software as a collection of isolated tools and begin treating their technology stack as a unified environment for digital work. This shift requires a fundamental change in procurement strategies, moving away from long-term, seat-based contracts and toward flexible, API-first models that favor interoperability and data transparency.

Moving forward, businesses must prioritize the quality and accessibility of their internal data to ensure that they can leverage the full potential of autonomous agents. The most effective strategy involves investing in robust data infrastructure while demanding that application providers offer open, high-performance APIs. This approach prevents vendor lock-in and allows the enterprise to maintain control over its “digital headcount.” Success in this era will be defined by the ability to orchestrate a hybrid workforce of humans and agents, where the primary objective is the execution of complex tasks rather than the mere management of software interfaces. The era of the connector has arrived, and the winners will be those who facilitate the seamless integration of intelligence across the entire digital landscape.