The landscape of artificial intelligence is currently shifting from simple chat interfaces toward autonomous agents capable of reasoning over complex, interconnected datasets to provide high-fidelity answers. While traditional large language models often struggle with hallucinations or lack of domain-specific context, the emergence of graph-based architectures has provided a robust solution for ensuring data integrity and explainability. Neo4j Aura Agent serves as a comprehensive, end-to-end platform designed to bridge the gap between static data repositories and dynamic AI agents by linking them directly to knowledge graphs. This system simplifies the integration of GraphRAG (Graph Retrieval-Augmented Generation), allowing developers to deploy production-ready agents that do not just guess based on probability but actually navigate a structured web of facts. By centralizing the creation, connection, and management of these digital entities, the platform removes the traditional friction associated with manual orchestration of vector databases and relational stores.

Beyond merely providing a storage layer, this environment offers a low-code approach to building agents that can interpret ontologies and execute complex logic without requiring extensive boilerplate code. The fundamental benefit of this architecture lies in its ability to provide a “semantic layer” that sits between the raw data and the generative model, ensuring that every response is grounded in the specific relationships defined within the graph. As organizations transition toward more regulated and high-stakes AI applications in 2026, the demand for transparency and governance has made these graph-integrated systems essential. Aura Agent addresses these needs by offering autogeneration tools that can scan an existing database schema and immediately produce a functioning agent capable of sophisticated reasoning. This leap in accessibility means that even teams without deep expertise in graph theory can now leverage the power of nodes and relationships to fuel their next generation of intelligent applications.

1. Establishing a Foundation Through Graph-Based Knowledge

To understand how these agents operate, one must first grasp the concept of a knowledge graph, which organizes data as a network of nodes and relationships rather than flat rows and columns. In the Neo4j ecosystem, this is implemented as a labeled property graph, where entities like “Customer” or “Product” are nodes, and their interactions, such as “Purchased” or “Reviewed,” are the relationships connecting them. Both nodes and relationships can carry properties, so a purchase relationship can record its own date or quantity. This structure is often more flexible than a traditional SQL schema because connections are stored as first-class data instead of being reconstructed through joins, allowing the AI to follow the context and hierarchy of data points directly. When an agent queries a knowledge graph, it isn’t just looking for keyword matches; it is traversing a map of information to find how different concepts influence one another. This relational awareness is what gives the agent its “memory” and its ability to provide nuanced answers to complex questions.
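The node-and-relationship model described above can be sketched in a few lines of plain Python. This is only an in-memory illustration, not how Neo4j stores data; the `Node` and `Graph` classes, the sample customer, and the relationship names are all assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    id: str
    label: str                                    # e.g. "Customer" or "Product"
    properties: dict = field(default_factory=dict)


@dataclass
class Graph:
    nodes: dict = field(default_factory=dict)     # node id -> Node
    relationships: list = field(default_factory=list)  # (start_id, type, end_id)

    def add_node(self, node):
        self.nodes[node.id] = node

    def relate(self, start_id, rel_type, end_id):
        self.relationships.append((start_id, rel_type, end_id))

    def neighbors(self, node_id, rel_type):
        """Follow outgoing relationships of one type from a node."""
        return [
            self.nodes[end]
            for start, kind, end in self.relationships
            if start == node_id and kind == rel_type
        ]


# Hypothetical sample data: one customer, two products.
graph = Graph()
graph.add_node(Node("c1", "Customer", {"name": "Ada"}))
graph.add_node(Node("p1", "Product", {"name": "Laptop"}))
graph.add_node(Node("p2", "Product", {"name": "Monitor"}))
graph.relate("c1", "PURCHASED", "p1")
graph.relate("c1", "REVIEWED", "p2")

# Traversal answers "what did this customer buy?" without a join.
purchased = [n.properties["name"] for n in graph.neighbors("c1", "PURCHASED")]
print(purchased)
```

The point of the sketch is that the question is answered by following a typed relationship from a known node, rather than by matching keys across tables.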

Building upon the knowledge graph foundation, GraphRAG represents a significant advancement over standard retrieval methods by incorporating graph structures into the AI’s search path. Standard vector search might find documents that are mathematically similar to a query, but GraphRAG goes further by identifying the specific entities within those documents and exploring their connections. For example, a graph-augmented vector search can find a relevant node and then traverse its relationships to gather all related metadata, ensuring the context window of the LLM is filled with the most pertinent facts. Additionally, the system supports Text2Cypher, where the agent dynamically writes queries in Cypher, Neo4j’s declarative graph query language, to explore the data on the fly. For high-stakes scenarios requiring strict precision, developers can also implement query templates, which are pre-approved, expert-written queries that the agent can trigger when specific conditions are met, ensuring that the retrieval logic remains both accurate and governed.
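A rough sketch of the graph-augmented vector search idea follows: pick the most similar chunk by cosine similarity, then walk outward along relationships to collect connected facts. The three-dimensional embeddings, the chunk texts, and the relationship names are toy values invented for illustration; a real deployment would use a vector index and graph queries rather than Python lists.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical text chunks with toy embeddings.
chunks = {
    "chunk-1": {"text": "Acme renewed its supply contract.", "embedding": [0.9, 0.1, 0.0]},
    "chunk-2": {"text": "Quarterly weather summary.", "embedding": [0.0, 0.2, 0.9]},
}

# Hypothetical relationships as (start, type, end) triples.
edges = [
    ("chunk-1", "MENTIONS", "Acme Corp"),
    ("Acme Corp", "HAS_CONTRACT", "Contract 42"),
    ("chunk-2", "MENTIONS", "Weather Service"),
]


def graph_augmented_search(query_embedding, hops=2):
    # Step 1: vector search finds the entry point into the graph.
    best = max(chunks, key=lambda cid: cosine(query_embedding, chunks[cid]["embedding"]))
    # Step 2: traverse outward to gather connected facts for the LLM context.
    frontier, facts = {best}, []
    for _ in range(hops):
        next_frontier = set()
        for start, rel, end in edges:
            if start in frontier:
                facts.append((start, rel, end))
                next_frontier.add(end)
        frontier = next_frontier
    return chunks[best]["text"], facts


text, facts = graph_augmented_search([1.0, 0.0, 0.0])
print(text)
for fact in facts:
    print(fact)
```

Plain vector search would stop after the first step and return only the chunk text; the traversal is what surfaces the contract that the chunk never names explicitly.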

2. Managing Data Sources and Ontological Schemas

The effectiveness of an AI agent is fundamentally tied to the quality and variety of data it can access, which is why the platform supports a wide array of ingestion methods for both structured and unstructured information. For structured data residing in enterprise warehouses like Snowflake, Databricks, or Postgres, the Data Importer tool provides a streamlined pathway to map relational tables into a graph format. This process involves defining how columns translate to node properties and how foreign keys become the relationships that tie the graph together. By importing data this way, the agent gains immediate access to historical records and transactional data, allowing it to perform quantitative analysis alongside qualitative reasoning. This structural integration ensures that the agent’s knowledge base is consistent with the organization’s existing “source of truth,” preventing the data silos that often plague isolated AI projects.
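The column-to-property and foreign-key-to-relationship mapping can be illustrated with a small sketch. It does not use or imitate the Data Importer itself; the table contents, the `PLACED` relationship type, and both helper functions are hypothetical names chosen for the example.

```python
# Hypothetical relational rows, as they might come from a warehouse export.
customers = [
    {"customer_id": 1, "name": "Ada"},
    {"customer_id": 2, "name": "Grace"},
]
orders = [
    {"order_id": 100, "customer_id": 1, "total": 250.0},
    {"order_id": 101, "customer_id": 2, "total": 75.5},
    {"order_id": 102, "customer_id": 1, "total": 19.99},
]


def rows_to_nodes(rows, label, key, drop=()):
    """Each row becomes a node; remaining columns become node properties."""
    return {
        (label, row[key]): {k: v for k, v in row.items() if k not in drop}
        for row in rows
    }


def foreign_keys_to_relationships(child_rows, child_label, child_key,
                                  fk_column, parent_label, rel_type):
    """Each foreign-key value becomes a relationship from parent to child."""
    return [
        ((parent_label, row[fk_column]), rel_type, (child_label, row[child_key]))
        for row in child_rows
    ]


nodes = {
    **rows_to_nodes(customers, "Customer", "customer_id"),
    # The foreign key is dropped from Order properties: the relationship replaces it.
    **rows_to_nodes(orders, "Order", "order_id", drop=("customer_id",)),
}
rels = foreign_keys_to_relationships(
    orders, "Order", "order_id", "customer_id", "Customer", "PLACED"
)

ada_orders = sorted(end[1] for start, rel, end in rels if start == ("Customer", 1))
print(ada_orders)
```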

Handling unstructured data, such as PDF contracts, research papers, or internal wikis, requires a different but equally integrated approach through entity extraction and merging. Using specialized Python packages and ecosystem integrations like LangChain or LlamaIndex, developers can process large volumes of text to identify key concepts and automatically insert them into the graph as nodes. This is where the concept of an ontology becomes vital; it serves as a formal data model or schema that instructs the AI on how to categorize these newly discovered entities. An ontology defines the rules of the domain—for instance, specifying that an “Employee” can “Report To” a “Manager”—which allows the agent to make logical inferences even when the raw text is ambiguous. By merging structured warehouse data with extracted unstructured insights, the agent operates on a holistic view of the corporate landscape, making it far more capable than a system relying on a single data type.
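One way to picture the role of an ontology is as a set of allowed (source label, relationship, target label) patterns that extracted triples are checked against before they are merged into the graph. The sketch below assumes that framing; the `WORKS_ON` rule, the entity names, and the `validate` helper are illustrative and not part of any Neo4j package.

```python
# Allowed patterns: (source label, relationship type, target label).
# The first rule mirrors the Employee/Manager example; the second is made up.
ONTOLOGY = {
    ("Employee", "REPORTS_TO", "Manager"),
    ("Employee", "WORKS_ON", "Project"),
}


def validate(triples, entity_labels):
    """Split extracted triples into those that fit the ontology and those that do not."""
    accepted, rejected = [], []
    for subject, rel, obj in triples:
        pattern = (entity_labels.get(subject), rel, entity_labels.get(obj))
        (accepted if pattern in ONTOLOGY else rejected).append((subject, rel, obj))
    return accepted, rejected


# Hypothetical output of an entity-extraction step over raw text.
entity_labels = {"Dana": "Employee", "Lee": "Manager", "Apollo": "Project"}
extracted = [
    ("Dana", "REPORTS_TO", "Lee"),
    ("Apollo", "REPORTS_TO", "Dana"),   # a Project cannot report to an Employee
    ("Dana", "WORKS_ON", "Apollo"),
]

accepted, rejected = validate(extracted, entity_labels)
print("accepted:", accepted)
print("rejected:", rejected)
```

Rejected triples would typically be logged or sent for review rather than silently dropped, since they often point to an extraction error or a gap in the ontology.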

3. Configuring and Launching Your Digital Agent

The actual development process begins with setting up the agent’s identity and operational boundaries within the Aura interface. This involves more than just naming the agent; it requires the creation of a comprehensive system prompt that defines the agent’s persona, its goals, and its behavioral constraints. A well-crafted prompt ensures the agent knows it should act as a “Technical Support Specialist” or a “Financial Analyst,” tailored to the specific database it will be querying. Once the database is selected as the knowledge source, the platform can autogenerate the initial configuration by scanning the graph schema. This allows the agent to understand which nodes and relationships are available for exploration, effectively creating a custom map for the AI to follow before the first user interaction even occurs.

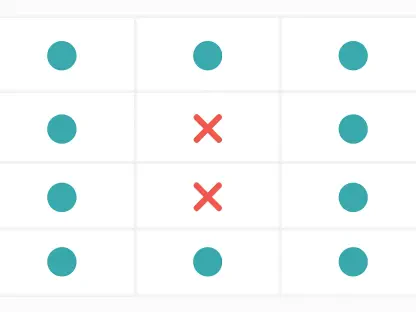

Once the identity is established, the developer must equip the agent with functional tools that dictate how it interacts with the data. These tools generally fall into three categories: vector similarity search for finding semantically related content, Text2Cypher for generating dynamic queries, and Cypher templates for executing rigid, high-precision logic. By providing a mix of these tools, the developer allows the agent to choose the most efficient path for any given user request. For instance, if a user asks for a summary of a document, the agent might use vector search; if they ask for the total number of sales in a specific region, it might opt for a Cypher template to ensure numerical accuracy. This multi-tool approach is what differentiates a basic chatbot from a sophisticated agent capable of handling diverse and complex workflows.
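To make the idea of a Cypher template concrete, here is a minimal sketch of a fixed, parameterized query whose inputs are checked before it is run. The `CypherTemplate` class, the `Sale` and `Region` labels, and the `IN_REGION` relationship are assumptions for illustration; the platform defines its own format for registering templates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CypherTemplate:
    name: str
    description: str      # what the agent reads when deciding whether to use the tool
    query: str            # fixed query text; only parameter values vary
    parameters: tuple

    def bind(self, **values):
        """Return (query, params) only if exactly the declared parameters are given."""
        missing = [p for p in self.parameters if p not in values]
        unexpected = [k for k in values if k not in self.parameters]
        if missing or unexpected:
            raise ValueError(f"missing={missing}, unexpected={unexpected}")
        return self.query, values


# Hypothetical template for the "total sales in a region" question.
SALES_BY_REGION = CypherTemplate(
    name="sales_by_region",
    description="Count sales recorded in a given region.",
    query=(
        "MATCH (s:Sale)-[:IN_REGION]->(r:Region {name: $region}) "
        "RETURN count(s) AS total_sales"
    ),
    parameters=("region",),
)

query, params = SALES_BY_REGION.bind(region="EMEA")
print(query)
print(params)
```

Because the user's value travels as the `$region` parameter instead of being spliced into the query text, the query logic stays exactly as its author wrote it. With the official Python driver, the resulting pair could be passed to something like `session.run(query, params)`.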

4. Validating Performance and Moving to Production

Before an agent is released to end-users, it must undergo rigorous validation in a controlled testing playground where its reasoning and logic can be scrutinized. One of the standout features of the Neo4j Aura Agent is its ability to reveal the “thinking” process behind every response, showing exactly which tools were called and what queries were executed. Because Cypher is a human-readable language that describes relationship patterns (e.g., (:Person)-[:WORKS_AT]->(:Company)), developers can easily verify if the agent is traversing the graph correctly. This transparency is crucial for debugging and for building trust with stakeholders who may be skeptical of “black box” AI. If the agent makes a mistake, the developer can see whether the error was in the retrieval logic, the underlying data, or the prompt instructions, allowing for rapid iterative refinement.

Once the agent’s performance meets the required standards, the transition to a production environment is designed to be as seamless as possible. Developers can select a publicly available endpoint, and the platform will automatically deploy the agent to a secure infrastructure authenticated via API key and secret pairs. This managed deployment handles the complexities of scaling and security, ensuring that the agent remains responsive even under heavy load. Furthermore, because the platform provides managed LLM inference and embeddings, teams do not have to worry about managing separate accounts or rotating API credentials for third-party model providers. This integrated approach significantly reduces the operational overhead, allowing developers to focus on the logic and utility of the agent rather than the underlying plumbing of the AI stack.

5. Integrating Agents Into the Modern Tech Stack

For organizations looking to extend the reach of their AI agents beyond a simple chat window, the platform supports advanced integration techniques like the Model Context Protocol (MCP). By wrapping an Aura agent in an MCP server, it can be easily discovered and invoked by other AI systems or professional tools like Claude Desktop. This allows for a “multi-agent” ecosystem where different specialized agents can collaborate; for example, a general-purpose assistant could call upon a Neo4j-powered “Contract Agent” to retrieve specific legal details from a knowledge graph. This interoperability is key to creating a unified AI experience across an enterprise, where the graph serves as a centralized intelligence hub that fuels various applications through a standardized communication protocol.

Finally, for custom application development, the agent can be called directly through standard web requests via its secure API endpoint. This enables developers to embed the agent’s capabilities into existing mobile apps, internal dashboards, or customer-facing websites with minimal effort. The API returns not just the final answer, but also the metadata regarding token usage, execution time, and the reasoning steps taken, providing full visibility into the agent’s operation at scale. As we move through 2026, the ability to rapidly deploy these explainable, graph-powered systems is becoming a competitive necessity. By moving from experimental prototypes to production-ready agents that leverage the structural power of knowledge graphs, companies can finally deliver on the promise of AI that is both highly intelligent and rigorously grounded in fact.
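The request-and-response flow for a custom integration might look roughly like the sketch below, which builds the HTTP request and parses a reply without contacting any server. Everything specific here is a placeholder: the URL, the bearer-token header (assumed to be derived from the API key and secret pair), the `input` field, and the response field names are invented for illustration and should be replaced with the values from the deployed agent's own documentation.

```python
import json
import urllib.request

# Placeholder URL; not a real Aura endpoint.
AGENT_URL = "https://example.invalid/agents/contract-agent/invoke"


def build_request(question, token):
    """Build (but do not send) a POST request carrying the user's question."""
    body = json.dumps({"input": question}).encode("utf-8")
    return urllib.request.Request(
        AGENT_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


def summarize(response_text):
    """Pull the answer and basic metadata out of a JSON reply (assumed shape)."""
    payload = json.loads(response_text)
    return {
        "answer": payload.get("answer"),
        "total_tokens": payload.get("usage", {}).get("total_tokens"),
        "steps": len(payload.get("reasoning", [])),
    }


request = build_request("Which contracts expire this quarter?", token="PLACEHOLDER_TOKEN")

# Made-up sample reply, used only to exercise the parser.
sample = (
    '{"answer": "Two contracts.", "usage": {"total_tokens": 412}, '
    '"reasoning": [{"tool": "cypher_template"}]}'
)
summary = summarize(sample)
print(request.get_method(), request.full_url)
print(summary)
```

Sending the request would be a call to `urllib.request.urlopen(request)`; it is left out so the sketch runs offline. Logging the token count and reasoning steps per call is one simple way to keep the visibility described above once the agent is embedded in an application.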

6. Evolving Toward Actionable Graph Intelligence

Building effective AI agents has shifted from a focus on sheer model size to a focus on data quality and structural integrity. Neo4j Aura Agent lowers the technical barriers that previously made GraphRAG and complex ontologies difficult to implement. By unifying data ingestion, tool configuration, and secure deployment into a single workflow, the platform helps teams move away from fragmented, fragile AI setups toward resilient, production-grade systems. The transparency provided by human-readable Cypher queries and visible reasoning chains directly addresses the “black box” problem, making AI a more accountable part of the enterprise infrastructure. As these systems become more prevalent, the focus will likely shift toward optimizing the ontologies themselves to capture even more nuanced business logic.

Moving forward, teams should prioritize the refinement of their graph schemas to ensure they are capturing the most impactful relationships for their specific use cases. The next logical step for many will be to experiment with multi-agent orchestration, using the Model Context Protocol to link disparate data silos into a single, navigable intelligence network. This approach encourages a modular strategy where agents are treated as specialized microservices, each master of its own domain but capable of communicating through a shared graph-based context. By continuing to iterate on these patterns, organizations will move beyond simple information retrieval into the realm of proactive agentic reasoning. The future of AI does not just involve better models, but better ways of connecting those models to the complex web of real-world information that defines modern business.