The rapid rise of Hancom’s Open Data Loader PDF v2.0 to the top of GitHub’s trending list points to a shift in how developers approach the persistent challenge of extracting structured data for machine learning. The project reached that spot just one week after its mid-June release, which shows strong global demand for tools that bridge static document formats and the need of large language models for high-quality training data. With more than seven thousand stars and hundreds of forks, it has grown beyond a niche utility into a widely adopted building block for AI development. The numbers matter less than what they validate: a technical approach that prioritizes precision over speed. That approach targets the notorious PDF bottleneck, which has long slowed the deployment of retrieval-augmented generation (RAG) systems in corporate environments.

Technical Innovations in Automated Document Parsing

The Shift Toward Hybrid Extraction Models

The move from basic text scraping to structural analysis is a major step in the evolution of document processing. Version 2.0 introduces a refined hybrid extraction engine that combines direct programmatic extraction with AI-based vision methods, so that complex layouts stay intact during conversion. This two-layer approach is particularly useful for documents with irregular formatting, such as overlapping text boxes or non-standard font encodings that often defeat traditional parsers. The tool decomposes PDF structures into machine-readable formats such as JSON or Markdown and preserves the hierarchical relationships between headings, body text, and footnotes, so downstream AI systems can use them. Keeping that structure is essential for preserving context during the chunking phase of data preparation. A chunk that retains its section heading produces a vector embedding that is far more likely to reflect the meaning and logical flow of the source material.
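To show why preserved hierarchy matters at the chunking stage, here is a minimal sketch. It assumes the extractor emits a flat list of elements, each with a `type` (`heading`, `paragraph`, or `footnote`), a `text`, and, for headings, a numeric `level`. These field names are hypothetical and do not describe the tool's actual JSON schema. The function groups body text under its nearest heading and prefixes each chunk with the full heading path, so that each chunk's embedding still carries its section context.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    heading_path: list[str]
    texts: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Prefix the body with a "Section > Subsection" breadcrumb so the
        # embedded text still carries its position in the document.
        header = " > ".join(self.heading_path)
        body = "\n".join(self.texts)
        return f"{header}\n{body}" if header else body


def chunk_by_headings(elements: list[dict], max_chars: int = 1000) -> list[str]:
    """Group parsed elements into heading-scoped chunks.

    Each element is assumed to be a dict with hypothetical keys 'type'
    ('heading', 'paragraph' or 'footnote'), 'text', and, for headings,
    an integer 'level' where 1 is the top level.
    """
    path: list[str] = []
    chunks: list[Chunk] = []
    current = Chunk(heading_path=[])

    for el in elements:
        if el["type"] == "heading":
            if current.texts:
                chunks.append(current)
            level = max(int(el.get("level", 1)), 1)
            # Drop headings at the same or a deeper level, then descend.
            del path[level - 1:]
            path.append(el["text"])
            current = Chunk(heading_path=list(path))
            continue

        text = el["text"]
        if el["type"] == "footnote":
            # Keep footnotes with their section, but mark them explicitly.
            text = f"[Footnote] {text}"
        size = sum(len(t) for t in current.texts)
        if current.texts and size + len(text) > max_chars:
            # Too long: start a new chunk under the same heading path.
            chunks.append(current)
            current = Chunk(heading_path=list(path))
        current.texts.append(text)

    if current.texts:
        chunks.append(current)
    return [c.render() for c in chunks]
```

Because every chunk repeats its heading path, a retrieved passage such as "Up 5%." still arrives with the context "Report > Revenue" instead of as an orphaned sentence.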

Local Execution for Data Security and Privacy

A critical feature of the updated tool is that it can run entirely within local environments, so sensitive data never has to leave an organization’s internal servers. Data sovereignty and privacy are now paramount concerns, and avoiding cloud-dependent extraction services lets enterprises process confidential documents without exposing them to third-party providers. The same architecture can also improve throughput, because it removes the latency of uploading large document sets to a remote service and downloading the results. Running the extraction engine on local hardware gives developers full control over their data pipeline, which matters most in regulated industries such as finance, healthcare, and legal services. It also simplifies compliance with strict international data protection regulations. Companies that face rigorous audits can therefore adopt current AI-driven document processing without routing their data through external systems.
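Teams that want to confirm a local pipeline really stays local can add a guard that makes accidental network calls fail loudly. The following is a minimal sketch built on the Python standard library. It is not part of the tool, and the `block_outbound_network` and `NetworkAccessBlocked` names are hypothetical. The guard only affects Python-level sockets in the current process. Native code, subprocesses, and references to `create_connection` captured before entry are not covered.

```python
import socket
from contextlib import ExitStack, contextmanager
from unittest import mock


class NetworkAccessBlocked(RuntimeError):
    """Raised when code inside the guarded block tries to reach the network."""


def _refuse(*args, **kwargs):
    raise NetworkAccessBlocked("network access is disabled during local extraction")


@contextmanager
def block_outbound_network():
    """Temporarily make Python-level socket connects and DNS lookups fail."""
    with ExitStack() as stack:
        # Patch connection entry points; mock restores the originals on exit.
        stack.enter_context(mock.patch.object(socket.socket, "connect", _refuse))
        stack.enter_context(mock.patch.object(socket.socket, "connect_ex", _refuse))
        stack.enter_context(mock.patch.object(socket, "create_connection", _refuse))
        stack.enter_context(mock.patch.object(socket, "getaddrinfo", _refuse))
        yield


# Usage (the extraction call is a placeholder for your own pipeline):
# with block_outbound_network():
#     run_local_extraction("contracts/")
```

This guard is mainly useful in tests that catch an unexpected remote call. For production, OS-level isolation, such as a container started without network access, gives a stronger guarantee.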

Ecosystem Integration and the Future of Open Data

Open-Source Accessibility via Apache 2.0 Licensing

The decision to adopt the Apache 2.0 license lowers the barrier to entry for enterprises looking to scale their AI operations. This permissive license lets companies use, modify, and extend the technology for commercial purposes without licensing fees or restrictive vendor lock-in. As businesses increasingly prioritize sovereign AI capabilities, high-performance open-source extraction tools become a critical asset for long-term independence. By making its core PDF processing logic public, Hancom signals a broader ambition: an open data platform that acts as a universal translator for legacy formats. Open-source access also encourages collaboration, because improvements in document parsing are shared across the industry. That sharing can accelerate innovation and help organizations break down the data silos that have traditionally hampered their digital transformation efforts.

Strategic Framework Compatibility and Implementation

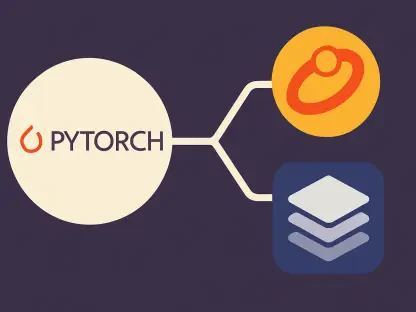

Building on these technical strengths, the tool has become an official component of the LangChain framework, and connections to LlamaIndex and Gemini CLI are planned by the end of 2026. This interoperability lets developers incorporate the loader into existing workflows and shortens the path from raw documents to an operational system. CEO Kim Yeon-su said the achievement validates the technical completeness of the platform and marks a strategic shift toward global market expansion. To capitalize on these advances, IT leaders are turning to localized extraction environments that minimize latency while keeping documents private. Deploying these tools on-premises protects sensitive intellectual property during the training phase and keeps data out of reach of external exfiltration. Developers, meanwhile, are adopting hybrid engines to fix reading-order errors, with the goal of building more reliable AI assistants that faithfully reflect the content of large corporate archives.
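Reading-order errors are easiest to see on a two-column page. There, sorting text blocks by vertical position alone interleaves lines from the left and right columns. The sketch below is a simplified heuristic loosely in the spirit of XY-cut layout analysis. It is not the tool's actual algorithm. The `Block` type, the top-left coordinate origin (PDF's native origin is bottom-left), and the fixed midline split are all assumptions made for illustration. Full-width blocks such as titles divide the page into bands, and within each band the left column is read before the right.

```python
from dataclasses import dataclass


@dataclass
class Block:
    text: str
    x0: float
    y0: float  # top edge; y is assumed to grow downward
    x1: float
    y1: float


def reading_order(blocks: list[Block], page_width: float) -> list[Block]:
    """Order blocks on a page with at most two text columns."""
    mid = page_width / 2
    ordered: list[Block] = []
    band: list[Block] = []

    def flush_band() -> None:
        # Within a band, read the whole left column, then the right column.
        left = sorted((b for b in band if b.x1 <= mid), key=lambda b: b.y0)
        right = sorted((b for b in band if b.x0 >= mid), key=lambda b: b.y0)
        ordered.extend(left + right)
        band.clear()

    for block in sorted(blocks, key=lambda b: b.y0):
        if block.x0 < mid < block.x1:
            # A block crossing the midline (title, wide table) ends the band.
            flush_band()
            ordered.append(block)
        else:
            band.append(block)
    flush_band()
    return ordered
```

Real documents add complications this sketch ignores, including more than two columns, sidebars, tables, and rotated text. Those cases are where a hybrid engine that combines programmatic extraction with vision-based layout analysis is meant to help.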