The rapid expansion of artificial intelligence has created a paradoxical landscape where the software powering global innovation often lacks the standardized ethical guardrails required for long-term stability. While private corporations race to deploy proprietary models, the underlying open-source infrastructure remains the quiet engine driving nearly every major breakthrough in machine learning and data processing today. Recognizing the critical need for a more secure and transparent foundation, the Apache Software Foundation has officially introduced a strategic program aimed at fortifying this ecosystem. With a fundraising goal of $10 million, the organization seeks to ensure that the tools developers rely on are not only technically robust but also aligned with rigorous safety standards. This investment marks a pivotal moment for the community, shifting the focus from pure performance to a more holistic view of technical accountability and public trust in high-stakes automated environments.

Securing the Open Source Backbone

Funding the Future of Ethical Development

The financial architecture of this initiative begins with a robust $1.75 million seed contribution, signaling a collaborative effort between major industry players and security-focused organizations. Leading the way is Anthropic, an AI safety and research company, which has committed $1.5 million to the cause, alongside a $250,000 contribution from Alpha-Omega, a group dedicated to the security of open-source software. This capital is designated to flow into the foundation over a three-year period, covering the span from 2026 to 2028. By establishing such a significant financial runway, the foundation can move beyond reactive bug-fixing and toward a proactive stance in defining how generative models and autonomous agents interact with distributed systems. The goal is to raise the full $10 million by the end of this cycle, providing the necessary resources to maintain the integrity of natural language processing and data pipeline tools that serve millions of users.

This move addresses a growing concern among engineers regarding the “black box” nature of modern AI. When critical infrastructure like Apache Spark or Flink is integrated with advanced machine learning models, the potential for unforeseen vulnerabilities grows sharply. The Responsible AI Initiative provides a structured framework to mitigate these risks by funding dedicated security audits and enhancing the transparency of code releases. By using the new funds to bolster the Apache Trusted Release platform, the foundation aims to ensure that software passing through its ecosystem meets stringent safety benchmarks. This systematic approach allows developers to integrate complex AI features without compromising the stability of their enterprise applications, bridging the gap between cutting-edge research and production environments where businesses cannot afford failure.

Prioritizing Human Oversight in Automation

Central to this new strategy is the “community over code” philosophy, which mandates that technological advancement must never outpace human accountability. The foundation has established clear guidelines that require high levels of human oversight for all projects involving agentic AI development. These standards are designed to prevent the accidental deployment of biased or harmful algorithms by ensuring that diverse groups of contributors remain at the helm of the decision-making process. Unlike vendor-locked platforms where proprietary interests might overshadow ethical considerations, the open-source model relies on a global network of peers to review and validate software. This initiative formalizes that process, creating a set of universal best practices for licensing integrity and security that apply to every project under the foundation’s vast umbrella, regardless of its specific technical niche.

Furthermore, the initiative recognizes that the governance of AI is not merely a technical challenge but a social one that requires a broad range of perspectives. To support this, the foundation is introducing a specialized “Responsible AI” track at its flagship “Community Over Code” conference. This platform will serve as a hub for experts to discuss the ethical implications of automated data processing while offering scholarships for hackathons and local meetups. By lowering the barrier to entry for developers from diverse backgrounds, the organization ensures that the development of AI tools remains a democratic process. Such engagement prevents the consolidation of power within a few massive tech firms, instead fostering an environment where innovation is driven by a global community dedicated to the public good and the long-term safety of the digital infrastructure we use every day.

Expanding the Global Innovation Ecosystem

Empowering Developers With Advanced Modeling

A significant portion of the new funding is dedicated to providing Apache project contributors with direct access to sophisticated AI language and code models. This democratization of high-level compute resources allows individual developers and smaller teams to experiment with state-of-the-art technology that was previously reserved for well-funded corporate laboratories. By integrating these models into the development workflow, the foundation hopes to accelerate the improvement of core services across its entire stack, from real-time data pipelines to observability tools. This hands-on access enables contributors to identify potential failure points in AI-driven software early in the development cycle, leading to more resilient codebases. Consequently, the entire open-source community benefits from a feedback loop where advanced tools are used to build even more secure and efficient foundational technologies.

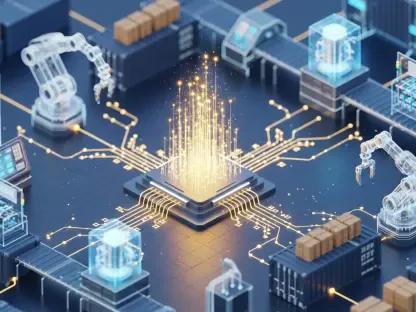

The focus on the entire AI/ML stack ensures that no part of the ecosystem is left vulnerable to the unique challenges posed by modern workloads. From the secure infrastructure layer to the sophisticated data processing frameworks, the initiative aims to create a unified front against security threats and ethical lapses. This comprehensive support model is essential because AI does not exist in a vacuum; it relies on a complex web of interconnected systems to function. By strengthening the links between these systems, the foundation is creating a more stable environment for innovation to flourish. Developers can now focus on building creative solutions to complex problems, knowing that the underlying libraries and frameworks they are using are backed by a well-funded, community-led initiative that prioritizes long-term security and transparency over short-term commercial gains.

Implementing Actionable Standards for Industry Growth

As the industry moves forward through 2027 and 2028, the success of this initiative will be measured by its ability to translate high-level ethical goals into practical, actionable technical requirements. Organizations adopting these open-source tools should begin by conducting comprehensive audits of their current AI implementations to ensure alignment with the foundation’s new transparency standards. It is no longer sufficient to deploy a model and hope for the best; instead, teams must implement rigorous monitoring systems that track both the performance and the decision-making logic of their automated agents. Integrating the Apache Trusted Release protocols into internal CI/CD pipelines will provide an extra layer of verification, ensuring that any software utilized in production environments has been vetted for common vulnerabilities and adheres to the latest responsible usage guidelines established by the global community.
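To make the pipeline verification step concrete, the sketch below shows one generic check a CI/CD job could run before using a downloaded release artifact: comparing its SHA-512 digest against a published checksum. This is an illustration only. The article does not describe what the Apache Trusted Release protocols actually require, so the function names, the checksum file layout, and the idea of gating on a digest match are all assumptions, not the platform's API.

```python
import hashlib
import hmac
from pathlib import Path


def sha512_hex(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file through SHA-512 so large artifacts are not loaded into memory."""
    digest = hashlib.sha512()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def parse_checksum_text(text: str) -> str:
    """Return the first 128-character hex token found in the checksum text.

    Assumption: the checksum file holds either a bare digest or a
    '<hex digest>  <filename>' line. Other layouts are not handled here.
    """
    for token in text.split():
        candidate = token.lower()
        if len(candidate) == 128 and all(c in "0123456789abcdef" for c in candidate):
            return candidate
    raise ValueError("no SHA-512 digest found in checksum text")


def artifact_matches(artifact: Path, checksum_text: str) -> bool:
    """True only if the artifact's digest equals the published one."""
    expected = parse_checksum_text(checksum_text)
    return hmac.compare_digest(sha512_hex(artifact), expected)
```

A pipeline step would call `artifact_matches` and fail the build when it returns `False`. A digest match only shows that the file was not corrupted or swapped relative to the checksum, so a real gate would likely pair it with a signature check against the project's published keys.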

Looking ahead, the shift toward public governance in AI suggests that the most successful technologies will be those that prioritize trust and verifiability. Stakeholders should actively participate in the newly established “Responsible AI” tracks and community forums to help shape the evolving standards of the industry. By contributing back to the open-source ecosystem, companies can ensure that the tools they depend on remain robust and secure. The actionable next step for any developer or business leader is to move away from opaque, proprietary solutions in favor of transparent, community-vetted frameworks that offer a clear path toward ethical automation. Embracing this model not only mitigates regulatory and security risks but also positions organizations at the forefront of a more responsible and sustainable digital future where technology serves the interests of the many rather than the few.